If your Microsoft Teams trigger is not firing, the fix is almost always to identify where the chain breaks: the Teams event never happens, the connector never receives it, or the workflow runner never executes it. This guide walks you through that isolation and the remediations that typically restore reliability.

In practical Microsoft Teams troubleshooting, you will get the fastest answer by running the same trigger in a “minimal workflow” and comparing it against your production scenario. That single comparison quickly reveals whether you are facing configuration drift, permission scope gaps, or payload filters that silently exclude your events.

Beyond configuration, triggers can appear “dead” due to platform behaviors: polling windows that miss events, throttling, queue backlogs, conditional access re-authentication, or policy boundaries (private channels, guest chats, sensitivity labels). The goal is to confirm that the event occurred, then confirm delivery, then confirm execution.

Once you can pinpoint the failing layer, you can apply a targeted fix instead of “reconnecting everything” and hoping it works. Below, you will follow a structured diagnostic flow that keeps your automation stable even as Teams policies and tenants evolve.

Why is your Microsoft Teams trigger not firing?

Most “not firing” cases are caused by event scope mismatch, authentication/consent problems, or policy boundaries that prevent the connector from seeing the event in the first place. Next, you will confirm which of these categories applies to your trigger.

Did the Teams event actually occur in the same place your trigger watches?

Check this first, because many triggers watch a specific team, channel, chat type, or message surface, and the same “message” can exist in multiple locations with different IDs. Next, you will validate the exact team/channel/chat and message type your trigger is scoped to.

To illustrate, a message posted in a private channel is not the same event stream as a message posted in a standard channel, and a reply in a thread can be represented differently from a top-level post. If you are triggering off “new message,” confirm whether your platform treats replies as new messages, updates, or separate entities.

- Confirm channel type: standard vs private vs shared.

- Confirm message surface: channel post vs thread reply vs meeting chat vs group chat vs 1:1 chat.

- Confirm actor: user vs guest vs bot/app posting on behalf of someone.

- Confirm timing: did it happen within the polling window (if polling is used)?

Is your trigger webhook-based or polling-based?

Triggers fire differently depending on whether they rely on push callbacks (webhooks/subscriptions) or polling intervals (periodic checks), and the troubleshooting path is not the same. Next, you will determine the trigger mechanism so you can test the correct failure points.

Webhook-based triggers fail when callback URLs are blocked, validation handshakes fail, or payload parsing errors occur. Polling-based triggers fail when the polling window is too narrow, time filters drift, pagination is mishandled, or rate limits reduce data returned per check.

Are filters silently excluding the events you expect?

Yes, filters can prevent firing without showing “errors,” especially when the connector treats “no matching items” as a normal state. Next, you will remove filters temporarily to confirm the trigger can fire at all.

Common silent filters include channel ID mismatches, keyword conditions, message type restrictions (attachments only, mentions only), and “only from me” style options. A reliable approach is to run a minimal workflow that triggers on any new message in a known standard channel, then reintroduce filters one by one.

How do you isolate whether the failure is Teams, your connector, or your workflow?

Use a three-layer test: Teams event → connector delivery → workflow execution, and stop at the first layer that fails. Next, you will run simple checks that conclusively identify the broken link.

Layer 1: Prove the Teams event exists with a controlled test message

Post a controlled test message in the exact team/channel/chat your trigger watches, then capture its context (channel type, thread vs post, author, timestamp). Next, you will use that same message to validate delivery in your automation platform.

To make the test deterministic, use a unique marker string (for example, a GUID-like token) and post it as a top-level message in a standard channel. Then repeat as a thread reply. If only one of these fires, you have immediately identified a scope limitation rather than a global connector outage.
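The marker from the test above can be generated rather than typed by hand. This is a minimal sketch: the `TRIGGER-TEST-` prefix, the `make_test_marker` helper, and the surface labels are hypothetical names chosen for illustration, not part of any Teams or connector API.

```python
import uuid
from datetime import datetime, timezone


def make_test_marker(surface: str) -> dict:
    """Build a unique, searchable marker for one controlled test post."""
    token = uuid.uuid4().hex[:12]  # short GUID-like token
    return {
        "surface": surface,  # e.g. "top-level" or "thread-reply"
        "marker": f"TRIGGER-TEST-{token}",  # paste this string into the message
        "posted_at_utc": datetime.now(timezone.utc).isoformat(),
    }


if __name__ == "__main__":
    # One marker per surface, so each run history entry maps to exactly one post.
    for surface in ("top-level", "thread-reply"):
        print(make_test_marker(surface))
```

Keep the printed records next to your run history: searching logs for the marker string tells you which of the two posts, if any, reached the connector.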

Layer 2: Prove the connector can “see” the event

Most platforms provide a connection test, sample data pull, or “test trigger” action that reveals whether the connector can retrieve the newest items. Next, you will use this capability to confirm that your integration identity has access to the event stream.

If the connector test returns no new messages despite your controlled post, treat it as an access/scoping issue. Re-check that the connected user is a member of the team, has permission to read the channel, and is not blocked by information barriers, sensitivity labels, or conditional access requirements.

Layer 3: Prove the workflow runner can execute and is not paused/throttled

Even if the trigger is delivered, workflows may not execute due to disabled scenarios, paused runs, exceeded quotas, or backlogged workers. Next, you will confirm that runs are enabled and that execution logs show attempts.

Look for indicators such as “scenario inactive,” “flow turned off,” “quota exceeded,” “retry scheduled,” or “delayed due to capacity.” If you see triggers arriving but no downstream actions run, you have a runner-level issue rather than a Teams issue.
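The “stop at the first layer that fails” rule can be written down as a small decision function. This is only a sketch: the three boolean inputs are observations you collect manually from the three checks above, and the function and label names are hypothetical.

```python
def failing_layer(event_posted: bool, connector_sees_event: bool, run_executed: bool) -> str:
    """Return the first broken link in the chain: event -> connector -> runner."""
    if not event_posted:
        return "teams-event"  # Layer 1: the message is not where the trigger watches
    if not connector_sees_event:
        return "connector"  # Layer 2: access, scope, or policy boundary
    if not run_executed:
        return "workflow-runner"  # Layer 3: disabled, paused, throttled, or over quota
    return "none"


if __name__ == "__main__":
    # Controlled post exists and the test trigger returns it, but no run appears.
    print(failing_layer(True, True, False))
```

Because the checks are ordered, a later observation is ignored whenever an earlier layer has already failed, which is what keeps you from debugging the runner when the connector never saw the event.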

This table helps you map symptoms to the failing layer so you can fix the correct component on the first attempt.

| Symptom | Most likely failing layer | Fastest confirmation | Typical fix |
|---|---|---|---|
| No trigger events at all, even with a controlled post | Teams event scope or connector access | Run “test trigger” / sample retrieval | Correct channel/chat scope; ensure membership; adjust policies |
| Trigger works in one channel but not another | Policy boundary / channel type | Compare standard vs private/shared channel | Move to supported surface; adjust app/permissions |
| Trigger events arrive but actions do not run | Workflow runner | Check run history and status | Enable flow; resolve quota/backlog; add retries |
| Intermittent firing (some messages missed) | Polling window / throttling / pagination | Review schedule + item counts | Widen windows; use delta; handle pagination; respect rate limits |

What Microsoft Teams settings commonly block triggers?

Teams triggers often fail because the connector is not authorized to read the relevant surface, especially for private/shared channels, guest/externals, and policy-restricted content. Next, you will verify the specific settings that most frequently cause “invisible events.”

Do private and shared channels change what your connector can read?

Yes, private and shared channels can restrict visibility to only certain principals, and some connectors/triggers do not support them fully. Next, you will confirm whether your trigger explicitly supports private/shared channels and whether the connected identity is a member of that channel.

Even when the connected user is a tenant member, private channel membership is separate from team membership. A user can be in the team but not in the private channel, which makes the connector “see nothing” for that channel. For shared channels (including cross-tenant), the access model and identifiers can differ again, which can break triggers that assume standard channel semantics.

Can app installation and consent policies stop event visibility?

Yes, tenant-level app permissions and consent controls can prevent a connector from accessing Teams data, even if the user can see it in the client. Next, you will check whether your organization requires admin consent and whether the connector has been granted the scopes it requests.

In locked-down tenants, users may be able to authenticate but not authorize the needed scopes, leading to “successful connection” with insufficient privileges. This is especially common when the automation tool relies on delegated permissions that are constrained by conditional access or limited consent policies.

Do sensitivity labels, DLP, and information barriers reduce what a trigger can observe?

Yes, compliance controls can affect visibility and may cause triggers to miss messages, attachments, or metadata. Next, you will test a controlled message in a non-restricted channel to confirm whether compliance constraints are the differentiator.

If your test message fires in an unrestricted channel but not in a labeled or restricted channel, treat it as a compliance boundary. In that case, the remedy is usually to move the automation to a supported, compliant surface or have your compliance/admin team confirm the connector’s allowed access for that workload.

Can conditional access force re-authentication that “breaks” long-lived triggers?

Yes, sign-in frequency, device compliance rules, and MFA requirements can invalidate long-running connections and prevent refresh tokens from being used. Next, you will review whether your connected identity is subject to stricter policies than your test account.

To keep triggers stable, use a dedicated automation account (where permitted) with well-defined conditional access rules, and monitor sign-in logs for repeated prompts or blocked token refresh events. If a policy forces re-authentication every few hours or days, your connector may appear connected but will stop receiving events until manually reconnected.

How do permissions and OAuth consent stop triggers from firing?

Permissions issues stop triggers by making the connector authenticate successfully but authorize insufficiently, so the event stream returns empty results. Next, you will validate consent, scopes, and the identity model your integration uses.

Is the connected identity the right one for the team and channel?

No, not always; many failures occur because the workflow is connected to a user who is not in the correct team/channel, or whose membership changed. Next, you will confirm the connected account’s membership and role within the team and channel.

Membership drift is common: someone leaves a team, is removed from a private channel, or loses a role. The Teams client may still show cached content, but the connector’s API calls return nothing. Ensure the connected identity is still an active member, not suspended, and not blocked from the specific channel.

Do delegated vs application permissions matter for your trigger?

Yes, the permission model affects what can be accessed and how reliably tokens can be refreshed. Next, you will identify whether your platform uses delegated permissions (user context) or application permissions (app context) and align that with your governance.

Delegated permissions depend on the user’s policies and sign-in constraints. Application permissions may require higher admin governance and are not always available in low-code connectors. If your scenario needs cross-user visibility (for example, monitoring a channel regardless of who posts), ensure the connector model supports that, otherwise your trigger may appear inconsistent.

How do “successful connections” still lead to missing events?

A connection can be “successful” while still missing required scopes, resulting in empty reads or partial data. Next, you will re-authorize the connector and confirm admin consent has been granted where required.

When troubleshooting Microsoft Teams connections, one of the most effective checks is to disconnect and reconnect using the intended automation account, then immediately run a “test trigger” to verify that the latest messages are visible. If a reconnect changes nothing, the issue is more likely scope/support limitations or policy boundaries rather than stale tokens.

How do you validate webhook-based triggers and subscription callbacks?

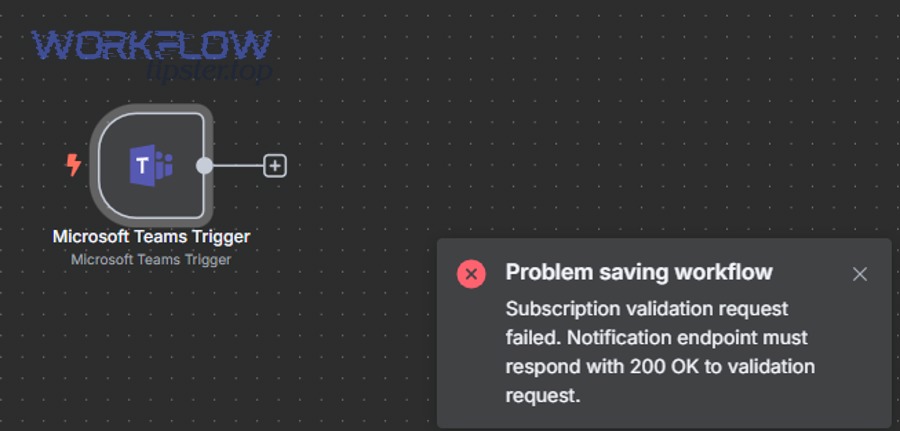

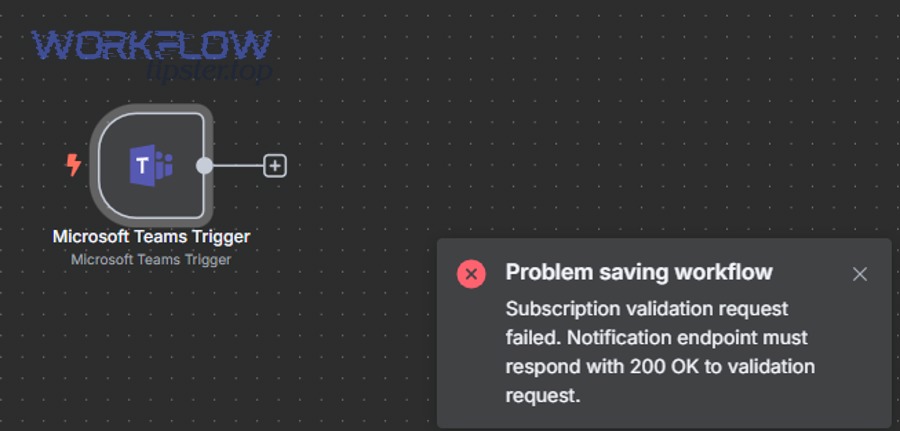

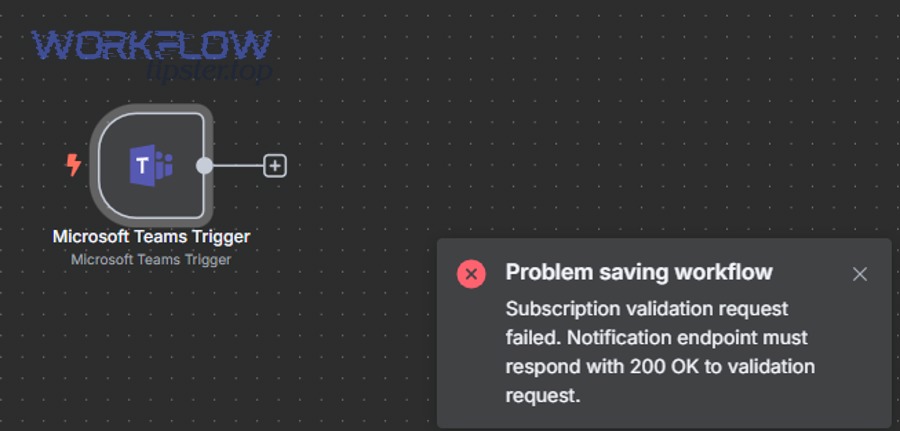

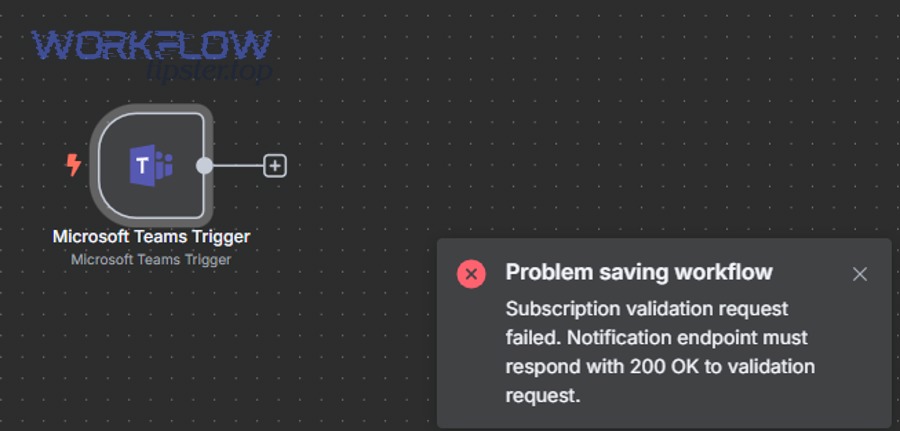

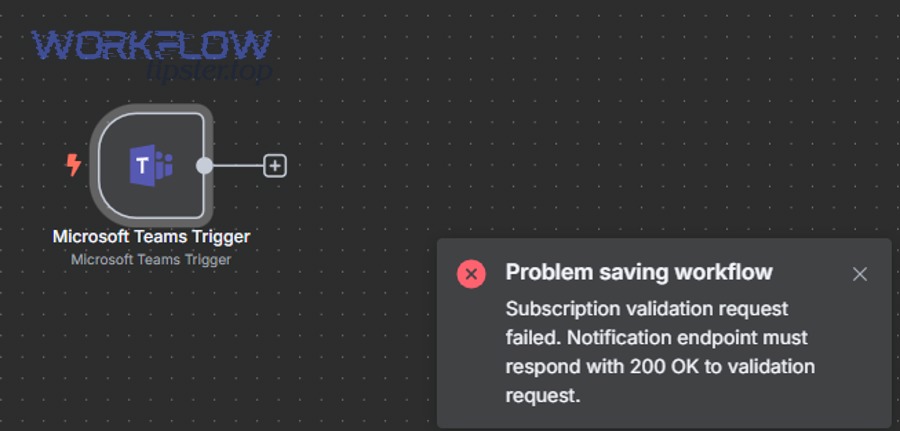

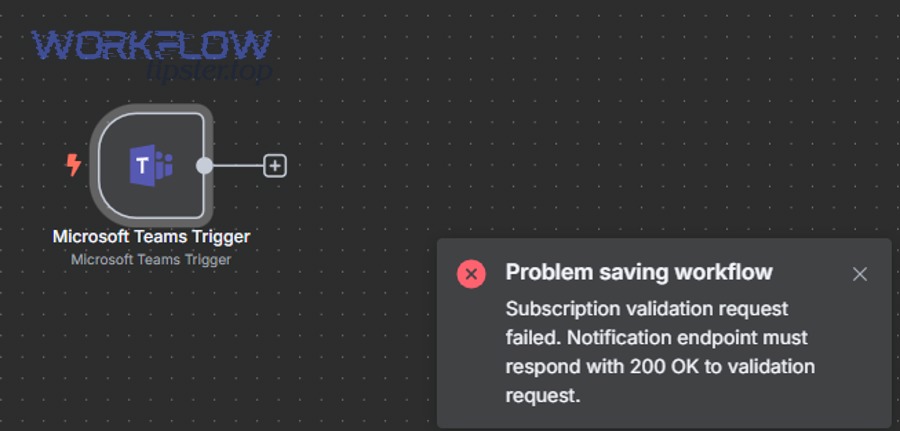

Webhook-based triggers fail when the callback endpoint cannot complete the subscription handshake or when it responds incorrectly under load. Next, you will validate connectivity, validation responses, and payload parsing so events can be accepted consistently.

Is your callback URL reachable from Microsoft’s service endpoints?

Yes, it must be reachable over HTTPS and respond within expected timeouts, or delivery will be retried and may be dropped. Next, you will verify DNS, TLS certificate validity, firewall/WAF rules, and whether your endpoint is publicly accessible.

Common blockers include IP allowlists that do not include Microsoft ranges, strict WAF rules that block unknown user agents, and TLS configurations that cannot negotiate a modern protocol version or cipher suite. If your endpoint is behind a reverse proxy, confirm that it forwards the request body intact and does not buffer in a way that delays responses.

Are you handling validation and content-type correctly?

Yes, validation steps and strict content-type parsing are frequent causes of silent drop-offs. Next, you will confirm that your endpoint responds exactly as required during subscription validation and that it returns a 2xx quickly for event deliveries.

To be explicit, a mis-implemented handler often shows up as a `400 Bad Request` response in the webhook logs, but the root cause may be your service rejecting the validation token, expecting a different JSON schema, or enforcing overly strict request size limits.
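As an illustration, here is a framework-agnostic sketch of a callback handler. It assumes your trigger is backed by Microsoft Graph change notifications, where the validation request carries a `validationToken` query parameter that must be echoed back as plain text with a 200 status; if your platform uses a different handshake, the first branch will not apply. The `handle_callback` name, the tuple-shaped response, and the in-memory `queue` list are hypothetical stand-ins for your own web framework and message queue.

```python
import json
from typing import Mapping, Optional, Tuple

Response = Tuple[int, dict, str]  # (status, headers, body)


def handle_callback(query: Mapping[str, str], raw_body: bytes, queue: list) -> Response:
    """Answer the validation handshake, or acknowledge a delivery quickly."""
    token: Optional[str] = query.get("validationToken")
    if token is not None:
        # Handshake: echo the (already URL-decoded) token back as plain text.
        return 200, {"Content-Type": "text/plain"}, token

    try:
        payload = json.loads(raw_body.decode("utf-8") or "{}")
    except (UnicodeDecodeError, json.JSONDecodeError):
        # A body that is not JSON is a genuine 400; log the raw bytes upstream.
        return 400, {"Content-Type": "text/plain"}, "invalid JSON"

    # Acknowledge first and defer the real work, so slow processing never delays the 2xx.
    value = payload.get("value") if isinstance(payload, dict) else None
    queue.extend(value if isinstance(value, list) else [])
    return 202, {}, ""


if __name__ == "__main__":
    pending: list = []
    print(handle_callback({"validationToken": "abc"}, b"", pending))
    print(handle_callback({}, b'{"value": [{"resource": "example"}]}', pending), pending)
```

The design choice worth copying is the ordering: validation is checked before any body parsing, and an unexpected but well-formed payload is accepted rather than rejected, so schema drift shows up in your logs instead of as dropped deliveries.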

Is your workflow parsing payload fields defensively?

Yes, defensive parsing prevents “bad payload” edge cases from killing the trigger pipeline. Next, you will ensure your handler tolerates missing optional fields, unexpected attachment structures, and null values.

Teams message events can vary by surface: a channel message, a reply, a message with an adaptive card, or a message with file references can all produce different shapes. Use schema validation with fallbacks: treat absent fields as optional, log raw payloads for inspection, and version your parsing logic so updates do not break production.
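A minimal sketch of that normalization step follows. The input field names (`id`, `replyToId`, `body.content`, `from.user.displayName`, `attachments[].contentType`) are modeled on the Microsoft Graph chat message shape and are an assumption here; your connector may rename or flatten them, so check a logged raw payload before relying on any of them.

```python
from typing import Any, Dict, List


def _as_dict(value: Any) -> Dict[str, Any]:
    """Treat anything that is not a dict (None, string, list) as empty."""
    return value if isinstance(value, dict) else {}


def normalize_message(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Map a raw message payload onto a stable internal schema with safe defaults."""
    body = _as_dict(payload.get("body"))
    sender = _as_dict(_as_dict(payload.get("from")).get("user"))
    raw_attachments = payload.get("attachments")
    attachments: List[Any] = raw_attachments if isinstance(raw_attachments, list) else []
    return {
        "schema_version": 1,  # bump when the parsing rules change
        "message_id": payload.get("id"),
        "is_reply": bool(payload.get("replyToId")),
        "text": body.get("content") or "",  # text is optional for card-only posts
        "content_type": body.get("contentType") or "unknown",
        "author": sender.get("displayName") or "unknown",
        "attachment_types": [
            a.get("contentType", "unknown") for a in attachments if isinstance(a, dict)
        ],
    }


if __name__ == "__main__":
    print(normalize_message({"id": "1", "body": None, "attachments": [{"contentType": "reference"}]}))
```

Downstream steps then read only the normalized dictionary, so a new payload shape requires a change in one function rather than in every action that touches message text.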

Why do polling triggers miss messages, and how do you prevent gaps?

Polling triggers miss events when the polling window is too small, time filters drift, or pagination/delta handling is incomplete. Next, you will tune schedules and retrieval logic so you capture every eligible event without duplicating processing.

Is your polling interval aligned with your “lookback” window?

No, not always; if you poll every 5 minutes but only look back 2 minutes, every cycle leaves a 3-minute gap, and delays or retries widen it further. Next, you will set a lookback window that exceeds the polling interval plus the worst-case delay and then de-duplicate downstream.

A robust pattern is “widen then dedupe”: widen lookback to 10–30 minutes (depending on volume) and rely on idempotency keys (message ID + channel ID) to prevent duplicates. This shifts reliability from the trigger to your processing, where you have more control.
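Here is one way the “widen then dedupe” pattern might look in code. It is a sketch under assumptions: messages arrive as dictionaries with hypothetical `channel_id`, `message_id`, and `created_utc` (ISO 8601 with an explicit offset) fields, and the set of seen keys lives in memory, whereas a real workflow would persist it.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Iterable, List, Set, Tuple

Key = Tuple[str, str]  # (channel_id, message_id)


def poll_once(
    messages: Iterable[Dict[str, str]],
    now: datetime,
    lookback: timedelta,
    seen: Set[Key],
) -> List[Dict[str, str]]:
    """Return messages inside the lookback window that were not processed before."""
    cutoff = now - lookback
    fresh = []
    for msg in messages:
        created = datetime.fromisoformat(msg["created_utc"])
        if created < cutoff:
            continue  # older than the window
        key = (msg["channel_id"], msg["message_id"])
        if key in seen:
            continue  # already handled by an earlier, overlapping poll
        seen.add(key)
        fresh.append(msg)
    return fresh


if __name__ == "__main__":
    seen: Set[Key] = set()
    now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    batch = [{"channel_id": "c1", "message_id": "m1", "created_utc": "2024-01-01T11:50:00+00:00"}]
    print(poll_once(batch, now, timedelta(minutes=15), seen))
    print(poll_once(batch, now + timedelta(minutes=5), timedelta(minutes=15), seen))
```

The second poll re-reads the same message because the windows overlap, but the key check turns it into a no-op. That overlap is intentional: it is what absorbs delayed runs and retries.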

Are you retrieving all pages of results consistently?

Yes, pagination must be handled end-to-end or you will only process the first page and miss the rest. Next, you will confirm your connector or custom logic follows next links/cursors until completion.

This is where failures often appear as records missing because of pagination: the trigger runs, returns some items, but quietly stops before reaching the events you care about. If your platform abstracts pagination, confirm whether it enforces a maximum page count or item cap per run.
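If you write the retrieval logic yourself, following next links until they run out can be sketched as below. The `value` and `@odata.nextLink` keys follow the paging convention used by Microsoft Graph; `fetch_page` is a hypothetical callable you supply (for example, a thin wrapper around your HTTP client), and the page cap is an assumed safety limit rather than a platform value.

```python
from typing import Any, Callable, Dict, Iterator


def iter_all_items(
    fetch_page: Callable[[str], Dict[str, Any]],
    first_url: str,
    max_pages: int = 1000,
) -> Iterator[Any]:
    """Yield every item across all pages, failing loudly if the cap is hit."""
    url = first_url
    pages = 0
    while url:
        if pages >= max_pages:
            # Stopping silently here is exactly how records go missing.
            raise RuntimeError("page cap reached before results were exhausted")
        page = fetch_page(url)
        pages += 1
        yield from page.get("value", [])
        url = page.get("@odata.nextLink")  # absent on the last page


if __name__ == "__main__":
    fake_pages = {
        "p1": {"value": ["a", "b"], "@odata.nextLink": "p2"},
        "p2": {"value": ["c"]},
    }
    print(list(iter_all_items(fake_pages.__getitem__, "p1")))
```

Raising on the cap, instead of returning a partial list, makes a truncated read visible in run history, which is the difference between a loud failure and a quiet data gap.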

Do time zones and clock skew affect “since last run” logic?

Yes, time zone conversion and clock skew can produce gaps or duplicates, especially when filtering by timestamps. Next, you will store and compare timestamps in UTC and avoid relying on local machine time for critical boundaries.

Prefer server-side UTC timestamps, and when possible use durable cursor-based delta mechanisms rather than “createdAfter” filters. If you must filter by time, add a small overlap (for example, subtract 1–2 minutes from last processed time) and dedupe downstream.
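When a time filter is unavoidable, the overlap can be computed like this. The sketch assumes you store the last processed time as a timezone-aware `datetime`; the `next_window_start` helper and the 2-minute default are illustrative choices taken from the range suggested above.

```python
from datetime import datetime, timedelta, timezone


def next_window_start(
    last_processed: datetime,
    overlap: timedelta = timedelta(minutes=2),
) -> str:
    """Return the UTC lower bound for the next poll, shifted back by the overlap."""
    if last_processed.tzinfo is None:
        # A naive timestamp silently means "local time", which is how gaps appear.
        raise ValueError("naive timestamp: store boundaries with an explicit UTC offset")
    start = last_processed.astimezone(timezone.utc) - overlap
    return start.strftime("%Y-%m-%dT%H:%M:%SZ")


if __name__ == "__main__":
    # 09:30 at UTC+7 is 02:30 UTC; with a 2-minute overlap the window starts at 02:28 UTC.
    local = datetime(2024, 3, 10, 9, 30, tzinfo=timezone(timedelta(hours=7)))
    print(next_window_start(local))
```

Rejecting naive timestamps outright is stricter than converting them, but it surfaces the bug at the boundary instead of letting a local-time value slip into a UTC comparison. The overlap will re-read some messages, so pair this with de-duplication downstream.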

This table summarizes polling hardening options so you can choose the safest approach for your workload and volume.

| Problem | Recommended change | Why it works | Trade-off |
|---|---|---|---|
| Missed events during delays | Increase lookback window + dedupe | Covers delayed delivery and retries | More duplicate candidates to filter |
| Only first page processed | Ensure full pagination/delta traversal | Captures all items consistently | Longer run time per poll |
| Timestamp drift | Store cursors and use UTC everywhere | Reduces time-boundary errors | Requires state storage |
| Rate limits/throttling | Backoff + smaller batch sizes | Avoids dropped calls and partial reads | Slower catch-up during spikes |

What run-time and platform issues make a trigger look dead?

Even with correct configuration, triggers can appear not firing due to throttling, queued execution, quota caps, or service incidents that delay event delivery. Next, you will distinguish “no events” from “events delayed.”

Is your automation platform delaying execution due to backlog or quotas?

Yes, platforms can queue runs when capacity is constrained or quotas are exceeded, making triggers seem silent. Next, you will check run history timestamps and platform status dashboards for delayed processing signals.

Indicators include runs that start significantly later than the event time, repeated automatic retries, or warnings about exceeded daily/monthly limits. If you see these, treat the fix as operational: reduce frequency, batch events, add throttling controls, or upgrade capacity where appropriate.

Are you being throttled by APIs or connector limits?

Yes, throttling can reduce the number of items returned or cause intermittent failures that the platform retries later. Next, you will implement backoff and reduce concurrency to stay within limits.

For high-volume channels, avoid designs where every message triggers heavy downstream work. Instead, buffer events to a queue and process them in controlled batches, which both reduces API pressure and improves deterministic processing.

Could a Microsoft service incident or regional issue be impacting events?

Yes, service health issues can delay or interrupt event pipelines. Next, you will compare your incident window against Microsoft 365 service health communications and your own tenant’s admin center advisories.

When incidents occur, the best practice is to keep your system idempotent and able to catch up. If the platform later redelivers events or your polling window re-reads them, your dedupe logic should prevent double-processing.

How do you build a reliable remediation playbook for Teams triggers?

Build reliability with a repeatable playbook: instrumentation, idempotency, retries with backoff, and health monitoring so a single missed run does not become a permanent data gap. Next, you will implement the highest-leverage controls first.

Step 1: Add instrumentation that shows where the chain broke

Log the trigger receipt, the normalized event identity, and the downstream action result so you can locate failures quickly. Next, you will standardize a minimal set of fields that every run captures.

- Event identity: team ID, channel ID/chat ID, message ID, thread/reply indicator.

- Timing: event created time (UTC), trigger received time (UTC), run start/end times.

- Connector context: connected account, tenant ID, permission model (if known), correlation IDs.

- Outcome: success/failure, error message, retry count, dedupe decision.
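The field list above can be captured as one structured log record per run. This is a sketch of one possible shape: the `RunRecord` class and its field names are hypothetical, chosen to mirror the four bullet groups, and you would adapt them to whatever your platform exposes.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class RunRecord:
    # Event identity
    team_id: str
    channel_id: str
    message_id: str
    is_reply: bool
    # Timing (all UTC, ISO 8601)
    event_created_utc: str
    trigger_received_utc: str
    # Connector context
    connected_account: str
    tenant_id: str
    correlation_id: str
    # Outcome
    outcome: str  # "success" or "failure"
    error: Optional[str] = None
    retry_count: int = 0
    dedupe_decision: str = "processed"  # or "skipped-duplicate"


def to_log_line(record: RunRecord) -> str:
    """Serialize one run as a single JSON line with a stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    print(to_log_line(RunRecord(
        team_id="team-1", channel_id="chan-1", message_id="msg-1", is_reply=False,
        event_created_utc="2024-01-01T00:00:00Z", trigger_received_utc="2024-01-01T00:00:05Z",
        connected_account="automation@example.com", tenant_id="tenant-1",
        correlation_id="corr-1", outcome="success",
    )))
```

One JSON line per run keeps the records easy to filter by `message_id` or `correlation_id`, and comparing `event_created_utc` with `trigger_received_utc` shows delivery delay directly.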

Step 2: Make processing idempotent so you can widen windows safely

Idempotency means processing the same event twice produces the same final state, which lets you widen polling windows and tolerate retries. Next, you will choose a stable idempotency key and enforce it consistently.

A typical key is a concatenation of tenant ID + channel/chat ID + message ID. Store it in a durable store (database, key-value store, or platform-provided state). If you see it again, skip or update safely rather than re-creating downstream artifacts.
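A durable version of that check might look like the following sketch, which uses SQLite from the Python standard library as the store. The table name, the `|` separator, and the helper names are assumptions; the separator in particular presumes that none of your IDs contain a `|` character.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the key store; pass a file path for durability."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS processed (key TEXT PRIMARY KEY)")
    return conn


def idempotency_key(tenant_id: str, container_id: str, message_id: str) -> str:
    """Concatenate tenant ID + channel/chat ID + message ID into one key."""
    return "|".join((tenant_id, container_id, message_id))


def claim(conn: sqlite3.Connection, key: str) -> bool:
    """Return True the first time a key is seen, False on every repeat."""
    with conn:  # commits on success, rolls back on error
        cur = conn.execute("INSERT OR IGNORE INTO processed (key) VALUES (?)", (key,))
    return cur.rowcount == 1  # 0 means the primary key already existed


if __name__ == "__main__":
    store = open_store()
    key = idempotency_key("tenant-1", "chan-1", "msg-1")
    print(claim(store, key), claim(store, key))
```

Letting the primary key constraint decide, rather than doing a separate read followed by a write, avoids the race where two overlapping runs both conclude that the event is new.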

Step 3: Use retries with exponential backoff and circuit breakers

Retries should be deliberate: back off when you hit transient faults, but stop when you hit persistent policy or permission errors. Next, you will distinguish retryable failures (timeouts, 429s) from non-retryable failures (access denied, unsupported channel type).

When you detect repeated permission errors, alert and stop retry storms. When you detect throttling or temporary outages, slow down and allow recovery. This approach protects both your quotas and the upstream service.
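The retryable versus non-retryable split can be sketched as below. The `HttpError` class, the status set, and the delay values are illustrative assumptions, not limits published by any platform. The sketch covers backoff with jitter and an immediate stop on permanent errors; it does not implement a full circuit breaker, which would also track failures across calls.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")

# Assumed set of transient statuses: timeouts, throttling, and server-side faults.
RETRYABLE_STATUS = {408, 429, 500, 502, 503, 504}


class HttpError(Exception):
    """Hypothetical error type carrying the HTTP status of a failed call."""

    def __init__(self, status: int):
        super().__init__(f"HTTP {status}")
        self.status = status


def call_with_backoff(
    action: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry transient failures with exponential backoff; re-raise everything else."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return action()
        except HttpError as err:
            # Permission and policy errors (401, 403, ...) will not heal by waiting.
            if err.status not in RETRYABLE_STATUS or attempt == max_attempts:
                raise
            delay = base_delay * 2 ** (attempt - 1)  # 1s, 2s, 4s, ...
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries
    raise AssertionError("unreachable")
```

A caller would wrap each downstream action, for example `call_with_backoff(lambda: post_to_ticketing(event))`, where `post_to_ticketing` is your own function that raises `HttpError`. An exception that escapes with a 401 or 403 status is the signal to alert and stop, not to retry.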

Step 4: Create a “minimal test flow” you can run anytime

A minimal test flow is a simplified trigger + log action that confirms whether events are arriving today, independent of your business logic. Next, you will keep this flow enabled (or ready to enable) as an always-available diagnostic tool.

This minimal flow is the fastest way to separate platform/connectivity problems from your own workflow logic. It also provides a baseline you can compare across tenants, channels, and connected accounts.

If you have validated the core pipeline (event → connector → runner), the remaining failures usually come from rare edge cases such as cross-tenant boundaries, guest identities, compliance retention behaviors, or message mutation patterns. The next section addresses those advanced scenarios.

Advanced edge cases: tenant boundaries, guests, and compliance

Advanced cases are less common but highly disruptive, typically involving cross-tenant interactions, guest identities, or compliance behaviors that mutate or restrict messages after posting. Next, you will apply targeted checks for these rare-but-real conditions.

Do guest users and cross-tenant chats change the event model?

Yes, guest and cross-tenant scenarios can introduce different identifiers, permissions, and access constraints that connectors may not fully support. Next, you will test the same trigger using an internal user in a standard internal channel to determine whether cross-tenant participation is the differentiator.

If internal-only works but guest/cross-tenant does not, document the limitation and choose a supported surface. In many organizations, the most stable automation path is to trigger on internal channels and then mirror relevant information outward via approved methods rather than trying to monitor cross-tenant streams directly.

Can retention/eDiscovery or policy-driven edits affect “new message” semantics?

Yes, some compliance behaviors can cause messages to be updated, rehydrated, or reclassified after creation, which can confuse triggers that rely on a “new item” definition. Next, you will check whether your platform treats message edits as new events, updates, or ignored changes.

To keep logic consistent, treat “create” and “update” separately when possible. If your platform only triggers on create, make sure your business requirement truly depends on create-time content rather than post-processed content that may appear later.

Do bots, adaptive cards, and rich content create inconsistent payloads?

Yes, rich content often changes payload shape, and strict parsing or schema assumptions can break processing even when the trigger technically fires. Next, you will log raw payloads for rich messages and normalize them into a stable internal schema.

For example, a card-based post may include nested structures, references, or attachments that differ from plain text. Your workflow should gracefully handle null or missing fields and treat “text” as optional when the message is primarily structured content.

When should you escalate, and what evidence should you bring?

Escalate when you have proven the event occurs but cannot be retrieved or delivered despite correct membership and consent, especially if the behavior changes across tenants or regions. Next, you will gather the minimum evidence that shortens resolution time.

- Exact repro steps: where the message was posted (team/channel), who posted, and the timestamps in UTC.

- Connector evidence: test trigger outputs (or lack thereof), connection identity, tenant ID, and any error codes.

- Workflow evidence: run history screenshots/exports, failure logs, and correlation IDs.

- Policy context: channel type, guest/cross-tenant status, sensitivity labels, conditional access notes.

In summary, the fastest path to fixing a Microsoft Teams trigger that is not firing is to isolate the failing layer, then apply a precise remediation: scope correction, consent/permission alignment, webhook/polling hardening, or operational capacity adjustments. Once your playbook is in place, triggers become predictable rather than fragile.