If you’re hitting a Notion webhook 429 rate limit, you can fix it by doing three things in the right order: respect Retry-After, add exponential backoff with jitter, and throttle and queue Notion API calls so bursts don’t overwhelm your integration.

Next, it helps to understand what the 429 response is actually telling you—what “rate_limited” means, why it shows up during webhook spikes, and which signals (status code, error payload, headers, timing) confirm you’re diagnosing the right issue.

Then, you’ll want a durable implementation pattern that prevents repeat 429s: a rate limiter (throttle), a queue that smooths bursty events, and batching/caching so your workflow does less work per event.

Once your 429s are under control, the same stability playbook improves your broader Notion troubleshooting posture, so adjacent failures such as a Notion webhook 500 server error don’t turn into cascading retries and duplicate updates.

Is a Notion Webhook 429 Rate Limit Error Fixable Without Turning Off the Workflow?

Yes. A Notion webhook 429 rate limit issue is fixable without turning off the workflow because you can honor Retry-After, throttle request speed, and decouple event intake from Notion API processing with a queue.

To begin, the core problem is not the webhook itself—it’s the burst of downstream Notion API calls that follows, so you fix the burst rather than disabling the whole system.

Should you stop retries when Notion returns 429, or retry after a delay?

No, you should not stop permanently; you should retry after a delay because 429 is a pacing signal, Retry-After tells you the minimum wait time, and backoff with jitter prevents synchronized retry storms.

The failure pattern behind this is retry amplification: when you retry immediately, you don’t just fail again. You often multiply requests (especially in automation tools that retry whole steps), which keeps you rate-limited longer.

Here’s the durable retry behavior that fixes the 429 loop:

- Read Retry-After first and wait at least that many seconds before retrying.

- Add exponential backoff so each consecutive 429 increases delay (e.g., 1s → 2s → 4s → 8s), capped at a reasonable maximum.

- Add jitter (randomness) so multiple workers don’t retry at the same instant.

- Cap retries and surface an alert after a threshold so transient problems don’t become infinite loops.

The practical benefit is simple: your system “breathes” instead of “panicking,” and Notion sees fewer bursts, which makes the 429 window shorter.

Can you keep your webhook endpoint live while you rate-limit Notion API calls downstream?

Yes, you can keep the webhook endpoint live because you can accept events quickly, store them in a queue, and process them at a controlled rate that stays under Notion’s limits.

Then, that queue becomes your safety buffer: the webhook stays responsive (no timeouts), while your Notion calls become steady and predictable.

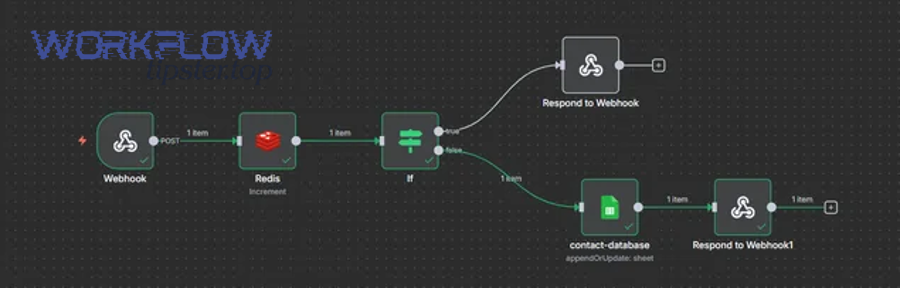

A strong workflow architecture looks like this:

- Webhook receives event → returns 200 quickly.

- Event is queued (database table, message queue, or platform queue).

- Worker processes events at a controlled rate (throttle enforced here).

- Retries happen inside the worker using Retry-After + backoff + jitter.

This design is especially useful when you have multiple automations triggering at once—bulk edits, imports, database syncs—because it turns “spiky” load into “smooth” load.
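The intake and worker split described above can be sketched in a few lines. This is a minimal illustration, not a production design: the in-memory `queue.Queue`, the `handle_webhook` and `drain` names, and the `min_interval` parameter are all assumptions made for the example.

```python
import queue
import time
from typing import Callable

# In-memory queue for illustration only. A real deployment would likely use a
# durable store (database table or message broker) so events survive restarts.
events: "queue.Queue[dict]" = queue.Queue()


def handle_webhook(payload: dict) -> int:
    """Accept the event, enqueue it, and return HTTP 200 right away."""
    events.put(payload)
    return 200


def drain(process: Callable[[dict], None], min_interval: float = 0.5) -> int:
    """Process queued events one at a time, pausing `min_interval` seconds between them."""
    handled = 0
    while True:
        try:
            event = events.get_nowait()
        except queue.Empty:
            return handled
        process(event)            # the Notion API calls would happen here
        handled += 1
        time.sleep(min_interval)  # the throttle lives in the worker, not the webhook
```

The webhook handler never talks to Notion, so its response time does not depend on how busy the API is; only `drain` decides how fast work is released.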

What Does “Notion Webhook 429 Rate Limit” Mean (and What Signals Prove It)?

A Notion webhook 429 rate limit is an HTTP “Too Many Requests” response from the Notion API, returned when your integration sends requests faster than allowed, typically during bursty webhook-driven processing. (developers.notion.com)

To better understand the issue, focus on the “proof signals” that distinguish a true rate limit from look-alike failures (timeouts, auth errors, or internal server errors).

What is HTTP 429 “Too Many Requests” in Notion integrations?

HTTP 429 in Notion integrations is a rate-limiting response that tells your client to slow down because it exceeded the allowed request volume for that integration. (developers.notion.com)

Specifically, this often happens when a single webhook event fans out into multiple Notion API calls (searching, reading pages, then updating properties across many items) without pacing.

Common “you’re truly rate-limited” indicators:

- The response status is 429 and the error is rate_limited. (developers.notion.com)

- 429s appear in clusters right after bursts (e.g., after a bulk edit or backfill).

- Your retries “work” if you wait, but fail if you immediately retry.

A key nuance: your webhook is not “rate limited.” Your downstream Notion API usage is rate limited. So if you only tweak webhook configuration but leave Notion call volume unchanged, you’ll keep seeing 429.

What is the Retry-After header and how should you interpret it?

Retry-After is a response header that specifies the minimum number of seconds you should wait before sending the next request after a 429, and Notion explicitly expects clients to respect it. (developers.notion.com)

More specifically, treat Retry-After as the “floor” (minimum delay), not your entire strategy.

How to interpret it correctly:

- If Retry-After is 2, wait at least 2 seconds before retrying.

- If you’re still getting 429 after waiting, increase delay with exponential backoff.

- If multiple workers exist, add jitter so they don’t align.

This interpretation matters because it prevents the most common failure mode: a system that “politely” waits exactly Retry-After, but does so in perfect sync across many workers, causing another burst the moment the delay ends.
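As a sketch of reading the header defensively, the helper below returns the wait in seconds or `None` when the header is missing or unparseable. The function name and the `now` parameter are hypothetical. The HTTP-date branch is included because the HTTP specification allows that form; the text above only describes a value in seconds.

```python
import time
from email.utils import parsedate_to_datetime
from typing import Mapping, Optional


def retry_after_seconds(headers: Mapping[str, str],
                        now: Optional[float] = None) -> Optional[float]:
    """Return the Retry-After wait in seconds, or None if absent or unparseable."""
    value = next((v for k, v in headers.items() if k.lower() == "retry-after"), None)
    if value is None:
        return None
    value = value.strip()
    try:
        return max(0.0, float(value))        # seconds form, e.g. "2"
    except ValueError:
        pass
    try:
        when = parsedate_to_datetime(value)  # HTTP-date form
    except (TypeError, ValueError):
        return None
    current = time.time() if now is None else now
    return max(0.0, when.timestamp() - current)
```

Treat the returned value as the floor for your delay; backoff and jitter are applied on top of it.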

Which Root Causes Trigger Notion 429 in Webhook-Driven Automations?

There are 4 main root-cause groups behind Notion webhook 429 rate limit errors: bursty event arrivals, request fan-out per event, excessive parallelism, and inefficiencies that multiply calls. Each one inflates requests per second in a different way.

Let’s explore the “why” in a way that leads directly to the right fix, because guessing at causes often leads to the wrong lever (like adding more retries without reducing load).

What “request burst” patterns are most likely to hit Notion rate limits?

The most likely burst patterns are bulk edits, backfills, high-frequency triggers, and multi-step syncs because they generate short spikes of concentrated Notion API calls that exceed the average allowed pace.

In real workflows, even a “small” automation can create a burst if it loops over items and calls Notion repeatedly inside the loop.

Typical burst scenarios:

- Bulk edits/imports: one user action changes many pages, generating many webhook events.

- Backfills: you replay historical data and attempt to update hundreds/thousands of pages quickly.

- High-frequency triggers: a system emits events every few seconds, and each event produces multiple Notion calls.

- Cold start bursts: a workflow restarts and tries to “catch up,” sending many requests quickly.

A simple diagnostic question: “Do 429s appear right after a sudden jump in processed events?” If yes, you’re dealing with burst pressure, and queue + throttle is usually the correct fix.

What workflow design patterns amplify requests (fan-out, loops, per-item updates)?

The biggest amplifiers are per-item loops, fan-out branches, repeated searches, and property-by-property writes because they turn one webhook event into N×M Notion API calls.

Then, once you see the multiplier, you can reduce it—often without changing the outcome of the automation.

Common multipliers to look for:

- Search inside a loop (worst-case: one search per item).

- Update inside a loop with many small patch calls instead of fewer consolidated updates.

- Fan-out branches that each call Notion independently for the same context (duplicate reads).

- No caching (you re-fetch the same database schema/properties repeatedly).

- Parallel “map” steps with high concurrency.

If your workflow tool supports it, temporarily log:

- number of webhook events received per minute

- number of Notion API calls per event

- concurrency level

That’s enough to compute the real “request pressure” and identify which multiplier is doing the damage.
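A rough way to turn those logged numbers into request pressure is simple arithmetic. The readings below are hypothetical, and the limit of three requests per second is an assumption to confirm against Notion's current documentation.

```python
def requests_per_second(events_per_minute: float, calls_per_event: float) -> float:
    """Average Notion API calls per second implied by the logged numbers."""
    return events_per_minute * calls_per_event / 60.0


# Hypothetical readings: 90 events per minute, 4 Notion calls per event.
pressure = requests_per_second(90, 4)   # 90 * 4 / 60 = 6.0 requests per second

ASSUMED_LIMIT_RPS = 3.0                 # assumption: verify the documented limit
over_budget = pressure > ASSUMED_LIMIT_RPS
```

This is only the average. High concurrency can still produce short bursts above the limit even when the average looks safe, which is why the concurrency level is logged alongside the other two numbers.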

How Do You Fix Notion 429: Backoff, Throttling, Batching, and Queues?

Use a “Retry-After + backoff + throttle” method in 5 steps to fix Notion webhook 429 rate limit errors: obey Retry-After, add exponential backoff with jitter, cap concurrency, reduce calls with batching/caching, and buffer events in a queue to smooth spikes. (developers.notion.com)

Next, apply the steps in the right order—because adding a queue without fixing retry behavior can still create synchronized bursts, and adding retries without throttling can increase load.

Here’s a quick map of symptoms to fixes (so you can choose the right lever fast):

| Symptom you see | What it usually means | Fastest stabilizing fix |
|---|---|---|
| 429 appears only during occasional bursts | short-lived overload | honor Retry-After + backoff + cap concurrency |
| 429 persists for long stretches | sustained overload | throttle + batching + queue |
| 429 occurs after retries | retry amplification | jitter + retry caps + circuit-breaker behavior |
| 429 occurs with multi-user traffic | shared integration pressure | global limiter across all tenants |

How do you implement exponential backoff + jitter for Notion 429 retries?

Exponential backoff + jitter means you retry the same Notion request after progressively longer delays with randomization, because that reduces contention, prevents synchronized retries, and gives the API time to recover.

The goal is not to retry fast; it is to retry successfully without triggering another 429 wave.

A solid default policy:

- Start with Retry-After if present; otherwise a small delay (e.g., 1–2 seconds).

- Multiply delay by 2 after each consecutive 429 (exponential backoff).

- Add jitter (random ±20–40%) to spread retries.

- Cap maximum delay (e.g., 60 seconds) and cap retries (e.g., 6–8 attempts).

- Reset delay after a successful request (don’t stay slow forever).

Why jitter matters: if 20 workers all got rate-limited at the same moment and all wait exactly 4 seconds, they all retry exactly 4 seconds later—creating a new burst.
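The default policy above can be sketched as a single delay function. The name `backoff_delay` and its parameters are illustrative. One deliberate deviation: the jitter here is added only upward (0 to +30%) rather than ±, so the result never drops below Retry-After.

```python
import random
from typing import Optional


def backoff_delay(attempt: int,
                  retry_after: Optional[float] = None,
                  base: float = 1.0,
                  cap: float = 60.0,
                  jitter: float = 0.3,
                  rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before retry number `attempt` (0 for the first retry)."""
    rng = rng or random.Random()
    # Exponential growth: base, 2*base, 4*base, ... capped at `cap`.
    exponential = min(cap, base * (2 ** attempt))
    # Retry-After is the floor: never wait less than the server asked for.
    wait = max(exponential, retry_after or 0.0)
    # Upward-only jitter spreads workers out without undercutting the floor.
    return wait + rng.uniform(0.0, wait * jitter)
```

A caller would stop after its retry cap (for example 6 to 8 attempts) and start again from `attempt=0` after the next successful request.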

According to a study by Stony Brook University from the Department of Computer Science, in 2016, researchers presented a randomized backoff approach that targets expected constant throughput under dynamic arrivals—illustrating why backoff with randomization is a reliable way to coordinate access to shared resources. (www3.cs.stonybrook.edu)

How do you throttle Notion API calls to prevent repeated 429s?

Throttling prevents repeated 429s by enforcing a maximum request rate and concurrency so your workflow never sends more Notion calls per second than your integration can sustain.

More importantly, throttling is the “always-on seatbelt”: backoff reacts after failure, but throttling prevents failure in the first place.

Two throttling layers to implement:

- Rate limit (requests per second)

  - Allow a steady pace for Notion calls.

  - If your system is busy, queue requests rather than firing them immediately.

- Concurrency limit (in-flight requests)

  - Cap parallel Notion calls (e.g., 1–3 at a time) to prevent bursty spikes.

  - This matters even if your average rate is low, because concurrency creates micro-bursts.

A strong mental model: throttling turns “infinite speed when there’s backlog” into “steady speed regardless of backlog.”
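Both throttling layers can be combined in one small helper. This is a sketch under stated assumptions: `SteadyLimiter` is a hypothetical class, it only coordinates threads inside one process, and the `clock` and `sleep` parameters exist so the pacing can be tested without real waiting.

```python
import threading
import time
from typing import Callable


class SteadyLimiter:
    """Allow at most `rate` calls per second and `max_in_flight` concurrent calls."""

    def __init__(self, rate: float, max_in_flight: int = 2,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self._interval = 1.0 / rate
        self._clock = clock
        self._sleep = sleep
        self._next_slot = clock()
        self._lock = threading.Lock()
        self._slots = threading.BoundedSemaphore(max_in_flight)

    def _reserve(self) -> float:
        """Reserve the next send time and return how long to wait for it."""
        with self._lock:
            now = self._clock()
            start = max(now, self._next_slot)
            self._next_slot = start + self._interval
            return start - now

    def call(self, fn: Callable[[], object]) -> object:
        with self._slots:            # concurrency limit (in-flight requests)
            wait = self._reserve()   # rate limit (requests per second)
            if wait > 0:
                self._sleep(wait)
            return fn()
```

Wrapping every Notion request as `limiter.call(lambda: ...)` keeps the pace steady regardless of backlog. Workers in separate processes would need a shared limiter (for example, one backed by a common datastore) instead.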

According to a study by Carnegie Mellon University from the Parallel Data Laboratory, in March 2005, an empirical analysis found that DNS-based rate limiting showed substantially lower error rates than schemes based on other traffic statistics, underscoring how the choice and tuning of limiting mechanisms can materially affect outcomes. (pdl.cmu.edu)

How do you batch and reduce Notion calls so you stay under limits?

You stay under limits by batching updates, caching repeated reads, and eliminating “per-item searches,” because these changes reduce total Notion requests per event without changing your automation’s business result.

Then, once request volume drops, your throttle and queue don’t have to work as hard—which means lower latency and fewer retries.

High-impact call-reduction tactics:

- Cache database schema / property mappings per run instead of re-fetching repeatedly.

- Replace “search per item” with one upfront search, then map results locally.

- Combine small updates into fewer page update calls where possible.

- Skip no-op writes (don’t update a property if the value is already correct).

- Use a “changed fields only” approach: only write what actually changed.
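The "skip no-op writes" and "changed fields only" tactics reduce to a small diff before each update. The sketch below uses flat dictionaries as a simplification; real Notion property values are nested objects, so the comparison would need to run on whatever normalized form your integration uses. The function name is hypothetical.

```python
from typing import Any, Dict

_MISSING = object()  # sentinel so a missing key never equals a real value


def changed_properties(current: Dict[str, Any], desired: Dict[str, Any]) -> Dict[str, Any]:
    """Return only the entries of `desired` whose value differs from `current`."""
    return {
        name: value
        for name, value in desired.items()
        if current.get(name, _MISSING) != value
    }


current = {"Status": "In progress", "Owner": "Ana", "Points": 3}
desired = {"Status": "Done", "Owner": "Ana", "Points": 3}
patch = changed_properties(current, desired)
# Send one consolidated update only when `patch` is non-empty.
```

When `patch` comes back empty, the write is skipped entirely, which removes a request from the rate budget at no cost to the outcome.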

If you use an automation platform, look for:

- “Continue on fail” behaviors that silently retry steps

- “Split into items” modules that create per-item Notion calls

- branching paths that re-fetch the same Notion page in multiple branches

How do you use a queue to smooth webhook spikes and protect Notion?

A queue protects Notion by absorbing webhook spikes and releasing work at a controlled rate, so bursts don’t translate into bursts of Notion API calls.

In addition, a queue is how you keep the webhook endpoint fast: accept events immediately, process later.

A queue-based design typically includes:

- Enqueue quickly (store event ID, timestamp, payload, and a processing status).

- Worker pulls jobs at a fixed rate (throttle enforced here).

- Retry policy lives in the worker (Retry-After + backoff + jitter).

- Dead-letter handling for events that repeatedly fail beyond retry caps.
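A minimal sketch of the worker side of that design follows, showing re-enqueueing and dead-letter handling. `RateLimited`, `Job`, and `run_worker` are hypothetical names, and the waiting between attempts is left out to keep the example short.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, List


class RateLimited(Exception):
    """Hypothetical exception your Notion client wrapper raises on HTTP 429."""


@dataclass
class Job:
    event_id: str
    payload: dict
    attempts: int = 0
    status: str = "queued"


def run_worker(jobs: Deque[Job], process: Callable[[Job], None],
               max_attempts: int = 3) -> List[Job]:
    """Drain `jobs`; re-enqueue rate-limited jobs and dead-letter repeat failures."""
    dead_letter: List[Job] = []
    while jobs:
        job = jobs.popleft()
        job.attempts += 1
        try:
            process(job)
            job.status = "done"
        except RateLimited:
            if job.attempts >= max_attempts:
                job.status = "dead"       # beyond the retry cap: park it for review
                dead_letter.append(job)
            else:
                job.status = "queued"
                jobs.append(job)          # a real worker would wait before retrying
    return dead_letter
```

Jobs that land in the dead-letter list are the ones to alert on, since they failed even after the retry cap.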

If you’re dealing with “spiky” traffic, smoothing matters because burstiness increases contention and failure probability in shared systems.

According to a study by the University of Washington from the Department of Computer Science and Engineering, in 2000, researchers noted that bursty traffic is associated with higher queueing delays, more packet loss, and lower throughput, which is exactly why smoothing bursts with pacing/queues is a practical reliability strategy. (cseweb.ucsd.edu)

What’s the Best Fix Strategy: “Retry-After Only” vs “Full Rate Limiter + Queue”?

Retry-After only wins on simplicity, a full rate limiter + queue is best for reliability at scale, and batching/caching is optimal for efficiency—so the “best” strategy depends on whether your priority is speed to patch, long-term stability, or reduced request cost.

However, the real trap is choosing a strategy that matches a small test workload but fails under real burst conditions.

To make the decision concrete, evaluate three criteria:

- Burst frequency: How often do spikes happen?

- Blast radius: Does one failure impact one workflow or many customers?

- Duplicate risk: Will retries cause double writes or inconsistent state?

When is honoring Retry-After alone enough?

Honoring Retry-After alone is enough when volume is low, bursts are rare, and the workflow can tolerate short delays because the system only needs a polite pacing signal rather than a full traffic-shaping layer. (developers.notion.com)

Then, your “patch” is mainly about correctness: don’t hammer Notion after 429.

This approach fits:

- single-user or small-team automations

- workflows that run a few times per hour/day

- minimal fan-out per event (one event → one or two Notion calls)

- low consequence if the workflow runs a little slower

Even here, you still want basic backoff + retry caps, because Retry-After alone doesn’t prevent synchronized retries if multiple events fail simultaneously.

When do you need throttling + queueing (and possibly batching) to eliminate 429s?

You need throttling + queueing when bursts are common, concurrency is high, or multiple users share the same integration because these conditions create sustained request pressure that Retry-After alone cannot control.

Meanwhile, batching/caching becomes the difference between “barely stable” and “comfortably stable,” because it lowers baseline volume.

Choose the full strategy if you have:

- fan-out workflows (one webhook event triggers many reads/writes)

- high-frequency triggers (every few seconds or minutes)

- multi-tenant traffic (many workspaces/customers)

- backfills or migrations (hundreds/thousands of items)

- adjacent instability where 429 coincides with other failures

This is also where you should broaden your Notion troubleshooting beyond 429: if you’re also seeing Notion webhook 500 server errors, a queue prevents a transient Notion-side issue from causing your system to retry explosively and create additional 429 pressure. (developers.notion.com)

How Can Developers and Automation Builders Validate the Fix and Prevent Regression?

Developers and automation builders can validate the fix by tracking 429 frequency, retry counts, queue depth, and throughput, then load-testing with controlled bursts and setting alerts—because stable request pacing is measurable and regressions show up as rising 429 and backlog trends.

In particular, you want proof that your “steady-state” processing rate remains healthy during bursts, not just when traffic is quiet.

What metrics should you track to confirm your Notion 429 fix works?

Track 429 count, retry attempts, average Retry-After wait, request rate, and queue depth because these metrics reveal whether you’re still bursting, whether retries are controlled, and whether backlog is growing or shrinking.

In addition, these metrics connect directly to user impact: a growing queue means slower updates; rising 429 means a broken pacing guarantee.

A practical metrics checklist:

- 429 responses per hour/day (should trend down after fixes)

- Retries per successful request (should be low and stable)

- Average and max retry delay (should respect Retry-After and cap)

- Queue depth over time (should spike during bursts, then drain)

- Time-to-process per event (latency from webhook receipt → Notion update)

- Concurrency level (ensure caps are actually enforced)

Add two alerts:

- Alert if 429 rate exceeds a threshold for N minutes.

- Alert if queue depth increases continuously for N minutes.
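The two alerts can be expressed as small checks over per-minute samples. This is a sketch that assumes you already collect one sample per minute; the function names and the threshold value are illustrative.

```python
from typing import Sequence


def queue_depth_alert(samples: Sequence[int], window: int) -> bool:
    """True if queue depth rose at every step across the last `window` intervals."""
    if window < 1 or len(samples) < window + 1:
        return False
    recent = samples[-(window + 1):]
    return all(later > earlier for earlier, later in zip(recent, recent[1:]))


def rate_429_alert(counts_429: Sequence[int], totals: Sequence[int],
                   threshold: float) -> bool:
    """True if the share of 429 responses exceeded `threshold` in every sampled minute."""
    if not totals:
        return False
    return all(t > 0 and c / t > threshold for c, t in zip(counts_429, totals))
```

For example, `queue_depth_alert(depths, window=10)` fires only when the backlog has grown for ten consecutive minutes, which separates a burst that drains from a backlog that never recovers.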

How do you load-test webhook bursts without breaking production workflows?

Load-test safely by replaying recorded events into a staging environment, increasing burst size gradually, and confirming your throttling/queue drains predictably—so you learn your safe throughput without risking duplicate writes in production.

To illustrate the most common pitfall: many teams test with one event at a time, but production fails because 50 events arrive in a minute.

A safe load-testing approach:

- Use a staging Notion workspace (or a sandbox database).

- Replay real event shapes (same payload structure, smaller dataset).

- Start with small bursts (e.g., 10 events), then increase (25, 50, 100).

- Confirm:

  - 429 stays near zero or remains controlled

  - queue grows during the burst and drains afterward

  - total processing completes within an acceptable window

- Verify idempotency: the same event replay should not create duplicates.

This is also the right place to test adjacent failure handling. For example, if attachment uploads fail or attachments go missing under heavy load, treat those as separate, retryable failures with their own backoff, so attachment retries don’t compete with normal update traffic and accidentally re-trigger 429. (developers.notion.com)

How Do You Prevent Notion 429 in Advanced Setups: Multi-User, Distributed Workers, and Retry Storms?

At this point, you’ve implemented the core fixes that stop 429 errors and stabilize throughput. Next, we’ll move from the main troubleshooting path into deeper, workflow-specific optimizations and edge-case engineering patterns that further reduce risk and improve scalability.

There are 4 advanced prevention layers for Notion webhook 429 rate limits in complex systems: coordinated backoff across workers, adaptive throttling, webhook deduplication for idempotency, and priority queues that separate critical writes from non-critical reads.

More importantly, these layers solve the “hard” problems: not just one workflow, but many workflows; not just one worker, but many workers; not just one spike, but constant spikes.

How do you avoid a retry storm when multiple workers hit 429 at the same time?

You avoid a retry storm by coordinating retries globally using jitter, shared rate limits, and circuit-breaker behavior, because independent workers retrying in sync can create a self-inflicted burst that keeps Notion rate-limiting you.

The failure chain looks like this: one outage → many failures → synchronized retries → bigger outage.

A reliable storm-prevention checklist:

- Global limiter shared by all workers (one “traffic cop” for Notion calls)

- Random jitter so retries spread across time

- Circuit breaker: if 429 persists, pause a whole class of requests briefly

- Backoff caps + fail-fast for non-critical operations

- Queue-based retries (retry becomes “re-enqueue with delay,” not “hammer now”)
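The circuit-breaker item in the checklist can be sketched as a small state holder. `RateLimitBreaker` and its defaults are assumptions for illustration; in a multi-worker setup the state would need to live somewhere shared rather than in one process.

```python
import time
from typing import Callable


class RateLimitBreaker:
    """Pause a class of requests after `threshold` consecutive 429 responses."""

    def __init__(self, threshold: int = 3, pause_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._threshold = threshold
        self._pause = pause_seconds
        self._clock = clock
        self._consecutive = 0
        self._open_until = float("-inf")

    def allow(self) -> bool:
        """False while the breaker is open and requests should be deferred."""
        return self._clock() >= self._open_until

    def record(self, status_code: int) -> None:
        """Feed every response status in; persistent 429s open the breaker."""
        if status_code == 429:
            self._consecutive += 1
            if self._consecutive >= self._threshold:
                self._open_until = self._clock() + self._pause
                self._consecutive = 0
        else:
            self._consecutive = 0
```

A worker checks `allow()` before each Notion call and re-enqueues the job with a delay when it returns `False`, so a persistent 429 pauses the whole request class instead of each worker discovering the limit on its own.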

This is the burst versus steady-state distinction in action: you want a steady request stream even when failures happen.

How does adaptive throttling differ from a fixed rate limit?

Fixed throttling uses a constant request pace, while adaptive throttling adjusts pace based on feedback like recent 429s and Retry-After, making it better for variable traffic and variable limit enforcement.

Specifically, adaptive throttling can speed up when the API is healthy and slow down when it’s not—without waiting to fail repeatedly.

A simple adaptive approach:

- Maintain a target rate.

- If you see 429, reduce the rate (e.g., multiply by 0.7).

- If you see sustained success, slowly increase rate (e.g., add small increments).

- Always honor Retry-After as a minimum wait.
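The simple adaptive approach above maps onto a multiplicative-decrease, additive-increase controller. The class name, the starting rate, the floor and ceiling, and the success streak length are all illustrative assumptions to tune against your own traffic.

```python
class AdaptiveRate:
    """Cut the target rate on 429; raise it slowly after sustained success."""

    def __init__(self, start: float = 2.0, floor: float = 0.2, ceiling: float = 3.0,
                 decrease: float = 0.7, increase: float = 0.1,
                 successes_needed: int = 20) -> None:
        self.rate = start            # target requests per second
        self._floor = floor
        self._ceiling = ceiling
        self._decrease = decrease
        self._increase = increase
        self._successes_needed = successes_needed
        self._streak = 0

    def on_response(self, status_code: int) -> float:
        """Update the target rate from one response and return the new rate."""
        if status_code == 429:
            self.rate = max(self._floor, self.rate * self._decrease)
            self._streak = 0
        else:
            # Simplification: any non-429 response counts as a success here.
            self._streak += 1
            if self._streak >= self._successes_needed:
                self.rate = min(self._ceiling, self.rate + self._increase)
                self._streak = 0
        return self.rate
```

The returned rate would feed whatever limiter paces your requests; Retry-After still applies as a minimum wait on top of it.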

Adaptive throttling is especially useful when you run multiple workflows across many users, because real-world traffic is not constant and a one-size fixed rate can be either too slow or too risky.

How do you deduplicate webhook events so retries don’t create duplicates?

You deduplicate webhook events by treating each event as an idempotent unit—store an event ID (or derived fingerprint), record processing state, and refuse to apply the same change twice even if the event is retried.

In addition, deduplication prevents the most expensive kind of regression: repeated writes that both consume rate limit and corrupt data.

Practical dedup patterns:

- Event store table keyed by event ID with status (received, processing, done, failed).

- Idempotency key per target Notion object + operation type (e.g., “update page X property Y from A→B”).

- Upsert logic: if already done, skip; if in progress, don’t parallel-process it.

When you combine deduplication with backoff, you get safer retries: retries become “event processing resumes,” not “duplicate updates happen.”
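An event store keyed by event ID can be sketched with SQLite from the standard library. The table layout and function names are assumptions for illustration, and the sketch tracks only the `processing` and `done` states.

```python
import sqlite3


def open_event_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open the store and create the events table if it does not exist yet."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS events ("
        "event_id TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    return conn


def claim_event(conn: sqlite3.Connection, event_id: str) -> bool:
    """Return True only for the first caller to claim this event ID."""
    with conn:  # commits on success, rolls back on error
        cursor = conn.execute(
            "INSERT OR IGNORE INTO events (event_id, status) VALUES (?, 'processing')",
            (event_id,),
        )
    return cursor.rowcount == 1   # 0 means the ID was already recorded


def mark_done(conn: sqlite3.Connection, event_id: str) -> None:
    """Record that the event's changes were applied."""
    with conn:
        conn.execute("UPDATE events SET status = 'done' WHERE event_id = ?", (event_id,))
```

A worker calls `claim_event` first and skips the event when it returns `False`. A fuller version would also let a worker reclaim events left in a failed or stale `processing` state, which this sketch does not handle.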

How can priority queues protect critical Notion writes while deferring non-critical reads?

Priority queues protect critical writes by ensuring user-visible updates process first, while non-critical reads and enrichment tasks wait, which reduces perceived latency and prevents optional traffic from consuming the same rate budget during spikes.

In short, priority is how you keep the system useful under load.

A practical priority split:

- High priority: user-facing status updates, important database writes

- Medium priority: sync operations that users expect soon

- Low priority: enrichment, analytics, periodic backfills

This is also where reliability improves across error types: when Notion returns a 500 or intermittent failures, you can pause low-priority classes first, preserving capacity for the work that matters.
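A priority split like this can be sketched with a heap. The three priority constants and the `PriorityJobs` class are illustrative; the `max_priority` argument is one simple way to hold back lower classes while the API is struggling.

```python
import heapq
import itertools
from typing import Any, List, Optional, Tuple

HIGH, MEDIUM, LOW = 0, 1, 2   # smaller number means more urgent


class PriorityJobs:
    """Pop the most urgent job first; FIFO order within the same priority."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, Any]] = []
        self._counter = itertools.count()   # tie-breaker preserves arrival order

    def put(self, priority: int, job: Any) -> None:
        heapq.heappush(self._heap, (priority, next(self._counter), job))

    def get(self, max_priority: int = LOW) -> Optional[Any]:
        """Return the next job, or None if only less urgent classes remain."""
        if self._heap and self._heap[0][0] <= max_priority:
            return heapq.heappop(self._heap)[2]
        return None
```

During a spike, a worker can call `get(max_priority=MEDIUM)` so enrichment and backfill jobs stay queued until pressure drops.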

Evidence (key facts used): Notion documents that rate-limited requests return HTTP 429 with a rate_limited error and instructs integrations to respect the Retry-After header; it also notes an average request limit per integration and suggests backing off or using queues. (developers.notion.com)