If you’re seeing “limit exceeded” in a Smartsheet integration, you can fix it by treating it as a rate-limit event: pause the burst, read the rate-limit reset signal, and resume with controlled pacing instead of repeated retries.

You’ll also want to understand what the error is actually telling you—Smartsheet uses HTTP 429 Too Many Requests for throttling, and you’ll often see an associated error payload that includes errorCode 4003 (“Rate limit exceeded”).

From there, the most reliable path is to implement a retry approach that reduces pressure on the API (backoff + jitter), and then redesign request patterns to prevent the issue from returning during peak jobs or bursts.

Once you can recover safely, you can go deeper: logging the right headers, isolating bursty workloads, and applying proactive throttling so your integration stays stable even under growth.

What does “Smartsheet API limit exceeded” mean (HTTP 429 / errorCode 4003)?

“Smartsheet API limit exceeded” means the Smartsheet API is throttling your application for sending requests too quickly, returning HTTP 429 Too Many Requests and often an error payload indicating rate limit exceeded (commonly errorCode 4003).

To better understand the issue you’re facing, it helps to interpret the response as a traffic-control signal, not a mysterious failure. When Smartsheet throttles you, it expects you to slow down and use the returned rate-limit headers to decide when to try again.

What is HTTP 429 “Too Many Requests,” and how is it different from other API errors?

HTTP 429 is a temporary, client-side pacing error: the server is reachable and healthy, but it is refusing to serve you because you’ve exceeded an allowed request rate during a time window.

Specifically, 429 differs from other common API errors in ways that matter for troubleshooting:

- 429 vs 401/403 (auth/permission): Authentication errors remain until you fix credentials or access rights. Rate limits often resolve automatically if you wait and send fewer requests.

- 429 vs 404 (not found): 404 indicates the resource path/ID is wrong or missing. 429 says the request might be fine, but your timing isn’t.

- 429 vs 500/503 (server instability): 5xx errors often require resilience too, but the remedy is not always “slow down.” With 429, slowing down is the main point.

- 429 vs 400 (bad request): 400 means your request structure is wrong; retrying the same request will keep failing. With 429, retrying can succeed—if you delay correctly.

The practical takeaway is simple: treat 429 as a capacity boundary and switch from “keep trying” to “retry with control.”

What is errorCode 4003, and why might you see it alongside 429?

errorCode 4003 is a Smartsheet error code that commonly appears in the response body as “Rate limit exceeded,” and you may see it alongside the 429 response when the API communicates throttling via both HTTP status and a structured payload.

In practice, log both values because they serve different purposes:

- HTTP status (429): quick classification for generic handlers (rate limit, retryable)

- errorCode/message (4003): vendor-specific context (Smartsheet throttling vs other 429-style scenarios)

- refId (if present): helps support teams trace a particular request

When you’re troubleshooting, having both 429 and 4003 in your logs makes it easier to confirm you’re solving rate limiting, not authentication, payload format, or endpoint errors.
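As a minimal sketch of that logging habit, the Python helper below classifies a failed response using both the HTTP status and the structured payload. The function name `classify_smartsheet_error` and the shape of the returned dict are illustrative assumptions; the field names `errorCode`, `message`, and `refId` are the ones described above.

```python
import json


def classify_smartsheet_error(status_code: int, body_text: str) -> dict:
    """Classify a failed response from its HTTP status and error payload."""
    try:
        payload = json.loads(body_text) if body_text else {}
    except json.JSONDecodeError:
        payload = {}  # body was not JSON; fall back to the status code alone
    if not isinstance(payload, dict):
        payload = {}

    error_code = payload.get("errorCode")
    rate_limited = status_code == 429 or error_code == 4003
    return {
        "status": status_code,
        "errorCode": error_code,
        "message": payload.get("message"),
        "refId": payload.get("refId"),
        "rate_limited": rate_limited,
        # Only throttling is treated as retryable in this sketch.
        "retryable": rate_limited,
    }
```

Keeping the classification in one place means generic handlers can branch on `rate_limited` while the log line still carries the vendor-specific fields.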

Is the Smartsheet “limit exceeded” error temporary and safe to retry?

Yes—the Smartsheet “limit exceeded” error is temporary and usually safe to retry because (1) it represents throttling rather than permanent failure, (2) it includes signals telling you when the limit resets, and (3) controlled retries restore service without changing business logic.

However, the safety comes from how you retry. If you retry immediately and aggressively, you can lock your system into a loop that keeps producing 429s.

Yes or no: Should you immediately retry the same request after a 429?

No—you should not immediately retry after a 429 because (1) the rate window hasn’t reset yet, (2) repeated immediate retries can create a retry storm across workers, and (3) you’ll waste compute while increasing overall time-to-recovery.

This matches what Smartsheet recommends: read the returned rate-limit headers (especially the reset time) and wait until the window resets before trying again.

A practical default that many teams adopt is a short, safe “cooldown” when rate limiting appears—Smartsheet’s own best-practices guidance gives an example of sleeping and retrying after encountering the rate-limit error.

When can retries make things worse (retry storms) and how do you avoid them?

Retries become dangerous when multiple workers react the same way at the same time—especially during nightly sync jobs, bulk updates, or event bursts—because every worker retries in sync and hammers the API again.

More specifically, retry storms happen when these conditions align:

- High concurrency: many threads/queues/processes attempt the same endpoint simultaneously.

- Uniform retry delay: everyone waits the same fixed time (or retries instantly), so collisions repeat.

- No global coordination: each worker believes it’s acting alone, but your integration behaves like a single “noisy” client.

To avoid this, implement three guardrails:

- Exponential backoff: increase delay after each 429 so pressure falls quickly.

- Jitter (randomness): add randomness so workers spread out in time rather than stampeding together.

- Retry caps + circuit breakers: stop retrying forever; fail gracefully, queue work, and resume later.

This “slow down + spread out + cap retries” approach keeps your integration from turning a temporary rate limit into an extended outage.

Which signals should you check to diagnose the rate limit (headers, logs, and timing)?

There are three main types of signals you should check to diagnose Smartsheet API rate limiting: (1) X-RateLimit-* headers (limit, remaining, reset), (2) request timing and burst patterns in logs, and (3) concurrency and endpoint hotspots across workers.

Then, to better understand why you hit the limit, you need to treat the investigation like a pacing problem: what volume did you send, how quickly, and from how many parallel paths?

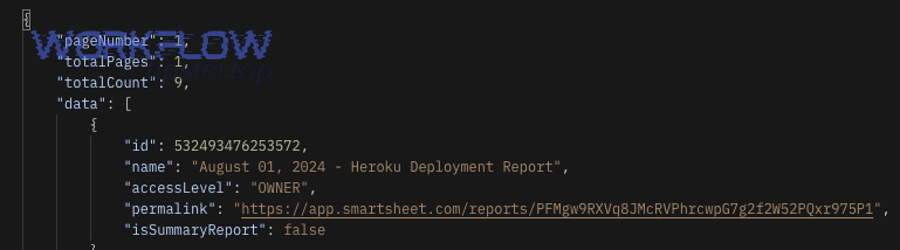

What rate-limit headers and response fields should you log for every 429?

You should log a compact “429 diagnostic record” so you can answer two questions later: when can I retry safely and what caused the spike. Smartsheet documents that you can inspect the X-RateLimit-* headers when you receive a 429, including X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset.

Log these fields for every 429 response:

- HTTP status: 429

- Endpoint + method: e.g., GET /sheets/{id}

- Timestamp (UTC): when your client received it

- X-RateLimit-Limit: the allowed volume for the window

- X-RateLimit-Remaining: how many calls were left (often 0 at throttle time)

- X-RateLimit-Reset: epoch seconds for when the window resets

- Smartsheet error payload fields: errorCode (e.g., 4003), message, refId (if present)

- Caller context: tenant/customer ID, job ID, worker ID, correlation ID

- Request size hints: pagination size, batch size, row counts (where relevant)

With this record, you can compute “time until reset” and stop guessing.
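The record above could be assembled along these lines. This is a sketch under assumptions: the helper names, the `context` dict, and the output keys are hypothetical, and `X-RateLimit-Reset` is treated as epoch seconds as described in the list.

```python
import time
from typing import Any, Mapping, Optional


def seconds_until_reset(headers: Mapping[str, str], now: Optional[float] = None) -> Optional[float]:
    """Seconds until X-RateLimit-Reset, or None if the header is missing or unparseable."""
    lowered = {k.lower(): v for k, v in headers.items()}
    raw = lowered.get("x-ratelimit-reset")
    if raw is None:
        return None
    try:
        reset_epoch = float(raw)  # assumed to be epoch seconds
    except (TypeError, ValueError):
        return None
    current = time.time() if now is None else now
    return max(0.0, reset_epoch - current)


def build_429_record(method: str, endpoint: str, headers: Mapping[str, str],
                     payload: Mapping[str, Any], context: Mapping[str, Any],
                     now: Optional[float] = None) -> dict:
    """Build one compact diagnostic record for a 429 response."""
    lowered = {k.lower(): v for k, v in headers.items()}
    current = time.time() if now is None else now
    return {
        "status": 429,
        "method": method,
        "endpoint": endpoint,
        "received_at_epoch": current,
        "limit": lowered.get("x-ratelimit-limit"),
        "remaining": lowered.get("x-ratelimit-remaining"),
        "reset": lowered.get("x-ratelimit-reset"),
        "seconds_until_reset": seconds_until_reset(headers, now=current),
        "errorCode": payload.get("errorCode"),
        "message": payload.get("message"),
        "refId": payload.get("refId"),
        **context,  # caller context such as tenant, job, worker, correlation IDs
    }
```

Header names are lowercased before lookup because HTTP clients differ in how they expose header casing.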

How do you tell whether the issue is burst traffic, high concurrency, or inefficient polling?

Burst traffic, high concurrency, and inefficient polling all create rate-limit events—but they leave different fingerprints.

Burst traffic (spikes) looks like:

- A short window where requests jump sharply (e.g., 10× baseline)

- Many 429s clustered closely in time

- Often triggered by one event (bulk update, import, automation storm)

High concurrency looks like:

- Many workers firing continuously, not just a short spike

- 429s appear repeatedly across the entire job duration

- Endpoint distribution is broad (lots of different calls competing)

Inefficient polling looks like:

- A steady high volume of repetitive reads

- The same endpoints repeated on a timer (status checks, “get everything” sync)

- 429s appear predictably (every few minutes) as windows fill

To diagnose quickly, compare:

- Requests per second vs baseline

- Unique endpoints hit during the window

- Number of active workers during the incident

- Which job/tenant dominated call volume

Once you know which pattern you’re in, the fix becomes clearer: spikes need smoothing, concurrency needs coordination, polling needs redesign.
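To make that comparison concrete, here is a small sketch that summarizes request logs for an incident window. The event shape (`ts`, `endpoint`, `worker_id`, `tenant_id`) and the function name are assumptions for illustration; adapt them to whatever your logs actually contain.

```python
from collections import Counter
from typing import Any, Dict, Iterable


def summarize_incident(events: Iterable[Dict[str, Any]],
                       window_start: float, window_end: float) -> dict:
    """Summarize request volume, endpoint spread, and worker count in a time window."""
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")

    in_window = [e for e in events if window_start <= e["ts"] < window_end]
    tenants = Counter(e["tenant_id"] for e in in_window)
    return {
        "requests_per_second": len(in_window) / (window_end - window_start),
        "unique_endpoints": len({e["endpoint"] for e in in_window}),
        "active_workers": len({e["worker_id"] for e in in_window}),
        "top_tenant": tenants.most_common(1)[0][0] if tenants else None,
    }
```

Running the same summary over a quiet baseline window gives you the comparison point for "requests per second vs baseline."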

What is the fastest troubleshooting workflow to resolve “limit exceeded” in production?

There are five fast steps to resolve “limit exceeded” in production: (1) pause the burst, (2) reduce concurrency, (3) wait until the rate window resets, (4) resume with backoff + jitter, and (5) ramp up gradually while monitoring 429 rate.

Besides being fast, this workflow protects your integration from making the incident worse—especially when multiple workers are involved.

What should you do in the first 15 minutes after you see repeated 429s?

In the first 15 minutes, your goal is to stop the bleeding and restore predictability. Here’s a practical emergency playbook you can execute immediately:

- Freeze non-essential workloads
  - Pause optional syncs, backfills, and analytics pulls
  - Stop “refresh everything” jobs

- Reduce concurrency hard
  - Drop worker count
  - Lower parallel threads per tenant
  - Serialize the noisiest endpoints temporarily

- Switch retries to “reset-aware waiting”
  - Read X-RateLimit-Reset and wait until reset
  - If reset isn’t available in your wrapper, apply a conservative delay and re-check

- Introduce jitter
  - Randomize retry delay per worker so they stop colliding
  - Add per-tenant staggering (tenant A starts at :00, tenant B at :10, etc.)

- Record incident context
  - Which job started it?
  - Which tenant(s) spiked?
  - Which endpoints dominated volume?
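The reset-aware waiting and jitter steps in the playbook above can be sketched as a single delay calculation. The function name, the 60-second fallback, and the 5-second jitter range are assumptions chosen for illustration, not values taken from Smartsheet's documentation.

```python
import random
from typing import Callable, Mapping


def reset_aware_delay(headers: Mapping[str, str], now: float,
                      fallback_seconds: float = 60.0,
                      max_jitter_seconds: float = 5.0,
                      rng: Callable[[], float] = random.random) -> float:
    """Seconds to wait before retrying: time until reset (or a fallback) plus jitter."""
    raw = next((v for k, v in headers.items() if k.lower() == "x-ratelimit-reset"), None)
    try:
        base = max(0.0, float(raw) - now)  # header assumed to be epoch seconds
    except (TypeError, ValueError):
        base = fallback_seconds  # header missing or unreadable: wait conservatively
    # Per-worker random offset so workers do not all resume at the same instant.
    return base + rng() * max_jitter_seconds
```

A worker would then call something like `time.sleep(reset_aware_delay(response_headers, time.time()))` before its next attempt.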

This is also the right place to apply broader Smartsheet troubleshooting habits: always preserve the failing request’s correlation ID, job ID, and response metadata so you can reproduce the scenario safely later.

How do you safely resume traffic after throttling (ramp-up strategy)?

To safely resume, you should ramp up traffic in controlled stages rather than returning to full speed immediately.

A strong ramp-up strategy looks like this:

- Stage 1: Recovery baseline
  - Run 1 worker (or minimal concurrency)
  - Use strict backoff + jitter
  - Confirm 429 rate falls near zero

- Stage 2: Stepwise increases
  - Increase concurrency by small increments (e.g., +1 worker per 2–5 minutes)
  - Watch 3 signals: 429 rate, average latency, queue depth

- Stage 3: Cap at stable throughput
  - Stop increasing when 429 begins to reappear consistently
  - Use that level as your “safe max” until you redesign long-term
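One way to encode the three stages above is a small controller that is fed the observed 429 rate once per interval. The class name `RampUp`, the 1% threshold, and the choice to step back one worker when throttling reappears are assumptions for this sketch.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RampUp:
    max_workers: int
    threshold: float = 0.01          # assumed tolerable fraction of 429 responses
    workers: int = 1                 # Stage 1: start from minimal concurrency
    safe_max: Optional[int] = None   # set once throttling reappears

    def observe(self, rate_429: float) -> int:
        """Feed the 429 rate for the last interval; return the worker count to use next."""
        if self.safe_max is not None:
            return self.workers  # Stage 3: hold at the discovered safe level
        if rate_429 > self.threshold:
            # Throttling reappeared: step back one level and freeze there.
            self.workers = max(1, self.workers - 1)
            self.safe_max = self.workers
        elif self.workers < self.max_workers:
            self.workers += 1  # Stage 2: one small step per interval
        return self.workers
```

Calling `observe` every few minutes matches the "+1 worker per 2–5 minutes" pacing; latency and queue depth would need their own checks alongside it.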

If several failure modes overlap, this ramp-up also helps you distinguish rate limiting from other issues you might see at the same time, such as Smartsheet timeouts and slow runs (where latency climbs even without 429s) or payload problems (where 400 errors appear due to malformed requests).

Which retry strategy works best: fixed delay vs exponential backoff vs token bucket throttling?

Fixed delay is simplest for small scripts, exponential backoff with jitter wins for preventing retry storms, and token bucket throttling is optimal for predictable high-volume integrations that need steady throughput without bursts.

However, the “best” strategy depends on whether you’re reacting to an outage or designing for long-term stability. Let’s compare them clearly.

Before the details, here’s a decision table that summarizes what each strategy is best for.

| Strategy | Best for | Main strength | Main risk |
|---|---|---|---|
| Fixed delay | Low volume, single worker | Easy to implement | Synchronizes retries → collisions |
| Exponential backoff + jitter | Most integrations | Avoids retry storms, adapts to load | Needs careful caps and idempotency |
| Token bucket (proactive throttling) | High volume, predictable traffic | Prevents bursts, stable throughput | Misconfigured parameters can add queue delay |

How does exponential backoff with jitter reduce repeated 429 errors?

Exponential backoff with jitter reduces 429s by rapidly decreasing request pressure after throttling and desynchronizing clients so they don’t retry in lockstep.

Specifically, you implement it like this:

- After each 429, wait baseDelay × 2^attempt, where attempt counts the retries made so far (starting at 0)

- Add random jitter (e.g., ±20–50%) so workers spread out

- Cap the delay (e.g., max 30–120 seconds) so retries don’t drag on forever

- Stop after a maximum attempt count, then queue the work for later

This approach works because rate limiting is often a “window” problem: once you get out of the crowded window, requests succeed again.
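The steps above can be sketched as follows. The exception class `RateLimited`, the `send` callable, and the default values (1-second base, 60-second cap, ±50% jitter, 5 attempts) are assumptions for illustration; `send` stands in for whatever function performs your request and raises on a 429.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Raised by the caller-supplied send() when the API answers 429."""


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 60.0,
                  jitter: float = 0.5, rng: Callable[[], float] = random.random) -> float:
    """Exponential delay for a retry attempt (0-based), with +/- jitter and a hard cap."""
    raw = min(cap, base * (2 ** attempt))
    factor = 1.0 + jitter * (2.0 * rng() - 1.0)  # in [1 - jitter, 1 + jitter)
    return min(cap, raw * factor)


def call_with_backoff(send: Callable[[], T], max_attempts: int = 5,
                      sleep: Callable[[float], None] = time.sleep,
                      **delay_kwargs) -> T:
    """Call send(); on RateLimited, wait with backoff + jitter, up to max_attempts."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller queue the work for later
            sleep(backoff_delay(attempt, **delay_kwargs))
    raise ValueError("max_attempts must be at least 1")
```

Injecting `sleep` and `rng` keeps the logic testable without real waiting; in production the defaults apply.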

If you want a practical Smartsheet-aligned version, Smartsheet explicitly advises implementing detection and handling of throttling via the X-RateLimit-* headers and waiting until the rate limit resets when you get a 429.

When should you use token bucket/leaky bucket throttling instead of “retry later”?

You should use token bucket (or similar proactive rate limiting) when your integration has steady, high-volume demand and you want to prevent bursts from ever hitting the API hard enough to trigger 429.

Here’s the comparison that matters:

- Reactive retry (“retry later”) is best when rate limits are occasional and unpredictable. It’s a safety net.

- Proactive throttling (token bucket) is best when you know your integration generates lots of traffic and you want a stable throughput guarantee.

Token bucket is especially powerful for multi-worker systems because it creates a single shared truth: “only this many requests can go out right now.”

According to a study by Imperial College London from the Department of Electrical and Electronic Engineering, in 2012, simulations showed token bucket shaping reduced packet loss to zero for bursty MPEG4 traffic but increased delay due to queueing.
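For reference, a minimal in-process token bucket might look like the sketch below. The class and parameter names are assumptions, and the lock only coordinates threads inside one process; workers on separate machines would need a shared store, which is outside the scope of this example.

```python
import threading
import time
from typing import Callable


class TokenBucket:
    def __init__(self, rate_per_sec: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate_per_sec      # tokens added per second
        self.capacity = capacity      # maximum burst size
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()
        self._lock = threading.Lock()

    def _refill(self) -> None:
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now

    def try_acquire(self, n: float = 1) -> bool:
        """Take n tokens if available; return False instead of blocking."""
        with self._lock:
            self._refill()
            if self.tokens >= n:
                self.tokens -= n
                return True
            return False

    def wait_time(self, n: float = 1) -> float:
        """Seconds until n tokens would be available at the current fill rate."""
        with self._lock:
            self._refill()
            return max(0.0, (n - self.tokens) / self.rate)
```

A sender checks `try_acquire()` before each request and sleeps for `wait_time()` when it returns False, which turns bursts into queue delay rather than 429s.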

How can you prevent Smartsheet API rate-limit issues long-term?

There are six main ways to prevent Smartsheet API rate-limit issues long-term: (1) reduce unnecessary reads, (2) batch writes, (3) cap concurrency per tenant, (4) cache aggressively, (5) smooth bursts with queues, and (6) use proactive throttling to keep throughput steady.

More importantly, prevention is not a single trick—it’s an architectural shift from “burst and pray” to “budget and pace.”

What are the most effective ways to reduce API calls without losing data freshness?

You can reduce calls without losing freshness by switching your integration to change-aware patterns:

- Cache read-heavy objects
  - Cache sheet metadata that rarely changes
  - Cache stable reference data used for mapping and validation

- Use delta logic
  - Store last sync timestamp / version marker
  - Fetch only changes since the last known good state (when supported by your design)

- Avoid “full scans”
  - Don’t fetch the entire sheet for each small update
  - Don’t re-check everything on every run “just to be safe”

- Batch operations
  - Group updates into fewer API calls when possible
  - Combine small changes per job window rather than immediate per-event writes

- Limit pagination size responsibly
  - Larger pages reduce calls but can increase payload size and processing time
  - Tune page size based on your runtime and payload constraints
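As one illustration of the caching item above, a time-to-live cache in front of read-heavy lookups could look like this. The class name `TTLCache`, the key format, and the `fetch` callable are hypothetical; `fetch` stands in for whatever function performs the real API read.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_fetch(self, key: Hashable, fetch: Callable[[], Any]) -> Any:
        """Return the cached value if still fresh; otherwise call fetch() and cache it."""
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]
        value = fetch()  # the only place an API call is spent
        self._store[key] = (now, value)
        return value
```

The TTL is the freshness budget: a longer TTL saves more calls but serves older data, so it is best reserved for metadata that rarely changes.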

This is also where you prevent adjacent pain points that often travel with rate limiting. For example, when you optimize request volume, you often reduce the likelihood of Smartsheet timeouts and slow runs because you’re sending fewer expensive calls and doing less repeated work.

Which is better for your use case: polling frequently or processing changes in batches?

Batch processing is usually better for stability, while frequent polling is only best when you truly require near-real-time updates and can afford the API budget.

- Batching wins when you care about reliability
  - Smoother traffic
  - Fewer spikes
  - Easier throttling and budgeting

- Polling wins when you need low latency
  - Faster detection of changes
  - Higher API cost
  - Greater risk of rate limiting if not engineered carefully

If you currently poll and hit 429s, a practical transition is: keep polling, but poll less frequently, cache responses, and process changes in scheduled batches. Then you can eventually replace polling with event-driven signals where available in your ecosystem.

And while you’re refactoring, validate payload construction carefully. Many teams accidentally create extra retries because the request fails for a different reason: for example, a malformed JSON body triggers 400 errors that workers keep trying to “fix” by retrying. Logging the error type correctly prevents wasted calls.

How do you optimize Smartsheet API rate-limit handling for edge cases and specific stacks?

You optimize Smartsheet API rate-limit handling by combining proactive throttling, tenant-aware fairness, burst smoothing, and resilience patterns (circuit breakers + dead-letter queues) so 429 events degrade gracefully instead of breaking your entire workflow.

Next, move from “one-size-fits-all backoff” to stack-specific tactics that protect you under growth and complexity.

What is the difference between “throttling proactively” and “retrying reactively” after a 429?

Proactive throttling prevents 429s by limiting outbound traffic before it hits the API, while reactive retrying responds after 429s occur; proactive wins for steady high-volume systems, and reactive is best as a safety net for occasional spikes.

Specifically, proactive throttling:

- Sets a global/tenant budget (token bucket)

- Smooths bursts automatically

- Keeps latency predictable during normal operation

Reactive retrying:

- Requires fewer moving parts

- Works well when traffic is low or sporadic

- Can still cause retry storms without jitter and coordination

A robust integration usually uses both: token bucket for baseline pacing, plus backoff+jitter for unexpected throttles.

How should multi-tenant integrators prevent one customer from consuming the entire request budget?

Multi-tenant integrators should use fairness controls so one noisy tenant can’t starve others:

- Per-tenant quotas: allocate a request budget per tenant per window

- Weighted fair queues: prioritize paid tiers or critical workflows

- Separate queues per tenant: isolate spikes so they don’t spill over

- Per-tenant concurrency caps: prevent “fan-out” jobs from monopolizing workers

- Adaptive shedding: pause noncritical syncs for a noisy tenant first

Field mapping failures are operationally linked to this: when a mapping job fails and retries repeatedly for a single tenant, that tenant can create a concentrated request flood. If you cap tenant retries and route mapping failures to a dead-letter queue, you protect your global budget and keep other tenants healthy.
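A per-tenant quota can be sketched as a fixed-window counter, shown below. The class name `TenantBudget` and its parameters are assumptions; a fixed window is the simplest variant and is used here only to illustrate the fairness idea, not as the recommended algorithm.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class TenantBudget:
    def __init__(self, per_tenant_limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = per_tenant_limit
        self.window = window_seconds
        self.clock = clock
        # tenant_id -> (window index, requests used in that window)
        self._usage: Dict[Hashable, Tuple[int, int]] = {}

    def allow(self, tenant_id: Hashable) -> bool:
        """Return True and count the request if the tenant still has budget this window."""
        window_index = int(self.clock() // self.window)
        seen_index, count = self._usage.get(tenant_id, (window_index, 0))
        if seen_index != window_index:
            count = 0  # a new window started: reset this tenant's usage
        if count >= self.limit:
            self._usage[tenant_id] = (window_index, count)
            return False
        self._usage[tenant_id] = (window_index, count + 1)
        return True
```

Requests that are refused can go back onto that tenant's own queue, so a noisy tenant delays only itself.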

Why do bursts from automations/webhooks cause limit spikes, and how do you smooth them?

Automation and webhook bursts cause spikes because many events arrive at once, and naive integrations respond by firing a full set of API calls per event—creating synchronized traffic that fills the rate window quickly.

To smooth bursts, apply these techniques:

- Debounce: wait briefly (e.g., 5–30 seconds) to combine events

- Batch windowing: group updates into time slices

- Queue buffering: let events accumulate and drain at a controlled rate

- Worker caps: limit how many workers can drain the queue at once

- Coalescing: if 10 events target the same sheet/row, merge them into one action

This approach turns “burst traffic” into “steady throughput,” which is exactly what rate limits reward.
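Coalescing is the easiest of these to show in code. In the sketch below, the event shape (`sheet_id`, `row_id`, `changes`) is an assumption for illustration, and later events overwrite earlier values for the same field.

```python
from typing import Any, Dict, Iterable, List, Tuple


def coalesce(events: Iterable[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Merge events that target the same (sheet_id, row_id) into one pending update."""
    merged: Dict[Tuple[Any, Any], Dict[str, Any]] = {}
    for event in events:
        key = (event["sheet_id"], event["row_id"])
        # Later events win when they touch the same field.
        merged.setdefault(key, {}).update(event["changes"])
    return [
        {"sheet_id": sheet_id, "row_id": row_id, "changes": changes}
        for (sheet_id, row_id), changes in merged.items()
    ]
```

Run this over the events collected during a debounce window, then send one update per merged entry instead of one per event.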

Which resilience patterns help when 429 errors persist (circuit breakers, DLQs, and safe replays)?

When 429s persist, resilience patterns keep your system stable by preventing endless retries and preserving work safely:

- Circuit breaker: stop sending traffic temporarily after sustained 429 rate; switch to queued mode

- Dead-letter queue (DLQ): store failed jobs/events for controlled replay later

- Idempotency strategy: ensure replays don’t double-apply updates

- Safe replay scheduler: replay when reset windows look healthy again

- Progress checkpoints: store “last successful step” so retries resume from the correct point

According to a study by the University of Hawaii from its ALOHA System research program, in 1970, the authors noted retransmissions must use different delays to avoid repeated interference, and showed performance becomes unstable as retransmissions grow without control.
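To illustrate the circuit breaker item in the list above, here is a minimal sketch. The class name, the threshold of 5 consecutive 429s, and the 60-second cooldown are assumptions; real deployments often track a rate over time rather than a consecutive count.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, cooldown_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown = cooldown_seconds
        self.clock = clock
        self.consecutive_429 = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def allow_request(self) -> bool:
        """False while the circuit is open; True again once the cooldown has elapsed."""
        if self.opened_at is None:
            return True
        # After the cooldown, let a probe request through (half-open state).
        return self.clock() - self.opened_at >= self.cooldown

    def record_success(self) -> None:
        self.consecutive_429 = 0
        self.opened_at = None

    def record_429(self) -> None:
        self.consecutive_429 += 1
        if self.consecutive_429 >= self.failure_threshold:
            self.opened_at = self.clock()  # open (or re-open) the circuit
```

While `allow_request()` returns False, work should be parked in a queue or DLQ rather than dropped, so the safe replay scheduler can pick it up later.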