A GitLab → Asana → Slack DevOps alerts workflow is the most reliable way to turn “something broke” signals (pipelines, merge requests, deployments) into owned work (Asana tasks) and actionable communication (Slack alerts) so incidents don’t die in chat.

The best setup depends on how much control you need: some teams want the simplest path (native notifications), while others need routing by environment, severity, and service, so the workflow stays fast without becoming noise. (sre.google)

You’ll also need a durable mapping layer, so that every Slack alert links to the right GitLab object and the right Asana task, and updates happen in one place instead of scattering across channels and boards.

Once the core workflow works end-to-end, you can harden it with deduplication, state-change rules, and escalation patterns so every alert stays trustworthy even when systems retry, events replay, or multiple services fail at once.

What is a GitLab → Asana → Slack DevOps alerts workflow?

A GitLab → Asana → Slack DevOps alerts workflow is an automation workflow that captures GitLab events (like pipeline failures or merge request changes), turns them into structured tasks in Asana, and posts concise, actionable Slack notifications so a DevOps team can respond quickly and consistently.

Specifically, this definition matters because DevOps alerts only help when they create ownership and next actions, not just visibility—so the workflow must connect signal → task → communication in a single chain. (sre.google)

What GitLab events should trigger DevOps alerts and task updates?

GitLab events should trigger DevOps alerts and task updates when they represent impact, risk, or blocked delivery, because those are the moments when teams need shared context and a clear owner.

In practice, you can group triggers into four “signal families,” each with a different operational meaning:

- CI/CD health signals: pipeline failed, job failed, tests flaky, deployment failed

- Change risk signals: merge request opened, merge request approved, merge request merged

- Operational work signals: issue created/assigned/blocked, incident issue labeled “sev”

- Security/compliance signals (if you track them): vulnerability finding, license policy violation

The key rule is to avoid “everything that changes” and instead focus on “what demands action,” because non-actionable alerts train the team to ignore the channel. (sre.google)

What does “issue-to-task notifications” mean for DevOps teams?

“Issue-to-task notifications” means you don’t just announce the GitLab event—you create a work container (an Asana task) that captures the event’s context, assigns responsibility, and tracks progress, while Slack delivers the right level of urgency.

In effect, GitLab is the system of record for code and CI/CD, Slack is the team’s attention surface, and Asana becomes the execution log that answers: Who owns this? What’s the next step? When is it resolved?

This is why teams often prefer issue-to-task patterns for incident response and release reliability: Slack shows the alert instantly, but Asana ensures the alert becomes a trackable outcome.

Should DevOps teams automate GitLab alerts into Slack and Asana?

Yes—DevOps teams should automate GitLab alerts into Slack and Asana because it (1) reduces time-to-ownership, (2) improves follow-through by making work visible and assigned, and (3) reduces alert fatigue by enabling structured routing and consistent message design.

However, you only get these benefits if your alerts are actionable, because noisy alerts are operational debt that grows every week. (sre.google)

When does automation reduce incidents vs create alert fatigue?

Automation reduces incidents when it creates signal quality and closure loops, and it creates alert fatigue when it creates volume without action.

To make this real, use a simple comparison:

- Automation reduces incidents when:

  - Alerts represent user impact or delivery risk (pipeline/deploy failures, prod regressions)

  - Each alert points to a runbook or next action

  - Each alert has an owner (explicit or default on-call)

- Automation creates alert fatigue when:

  - Alerts fire for every “normal change” (every pipeline start, every push)

  - Alerts lack context (no link, no owner, no severity)

  - Alerts duplicate (retries, repeated failures, multiple channels)

A 2025 research discussion of over-alerting and warning fatigue shows how repeated, non-actionable alerts lead stakeholders to disengage—people “opt out” when alerts don’t reliably indicate action-worthy events. (scholarsarchive.library.albany.edu)

What are the minimum requirements before you automate?

There are 5 minimum requirements before you automate GitLab → Asana → Slack alerts, based on operational sanity rather than tooling:

- Clear ownership model: Who responds by default (on-call rotation, team, or service owner)?

- Stable naming conventions: Service names, environments, severity labels, channel naming.

- A single “work home” in Asana: A project and sections that match your incident lifecycle.

- Message standards in Slack: What fields every alert must include (severity, env, link, owner).

- A testing path: A safe way to trigger test events without spamming production channels.

If you skip these, you don’t “automate,” you just accelerate confusion.

How do you design the GitLab → Asana → Slack workflow architecture?

You design the GitLab → Asana → Slack workflow architecture by choosing one trigger path, one mapping model, and one routing strategy, then validating the chain end-to-end so each GitLab event produces exactly one task outcome and one Slack notification outcome.

To better understand the design, treat this as a system with three layers: trigger (GitLab) → orchestration (rules) → execution surfaces (Asana + Slack).

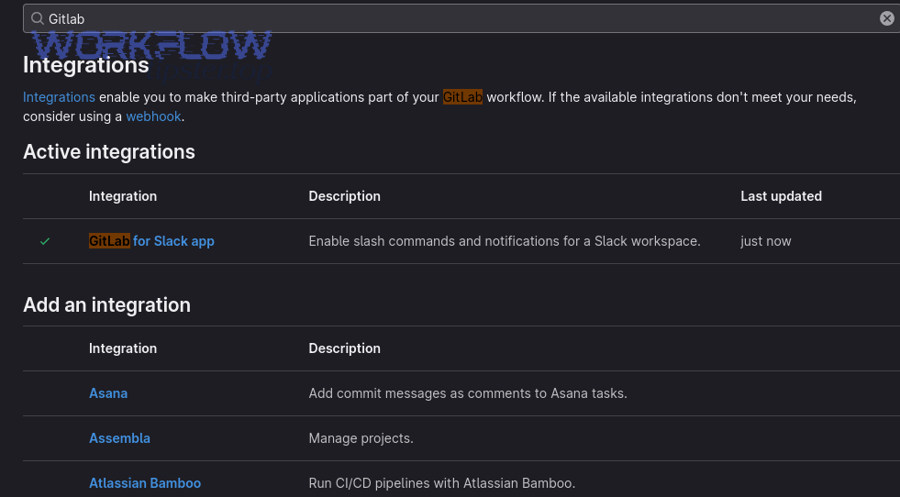

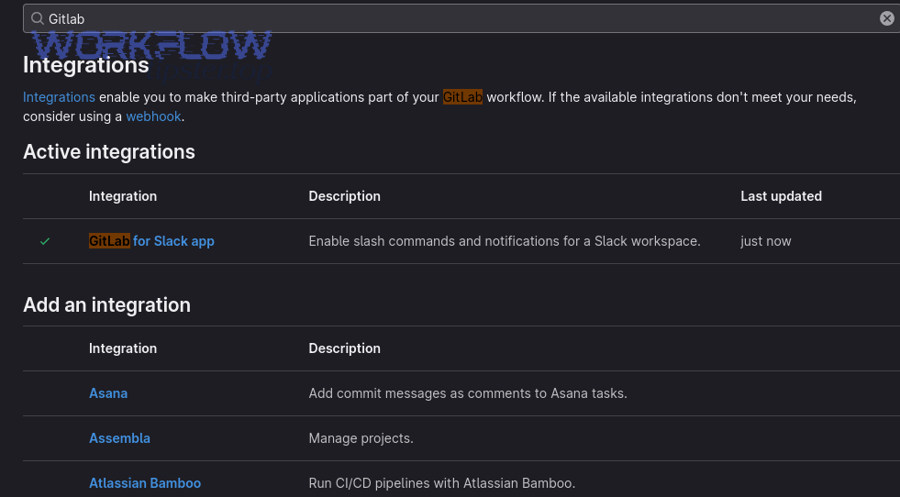

Which integration method fits best: native app, webhook, or automation platform?

Native app wins in speed and simplicity, webhooks are best for control and custom routing, and automation platforms are optimal for cross-tool workflows that need mapping, dedup, retries, and multi-step actions.

Here’s the practical decision logic:

- Choose native notifications when you only need “post GitLab events to Slack” and you can accept limited filtering.

- Choose webhooks when you need to route alerts by environment, severity, or repository conventions (and you want full payload control).

- Choose an automation platform when you need “GitLab → create/update Asana task → post Slack message → update Slack thread on changes,” plus logging and retries.

This architecture choice is the foundation for maintaining signal quality over time.

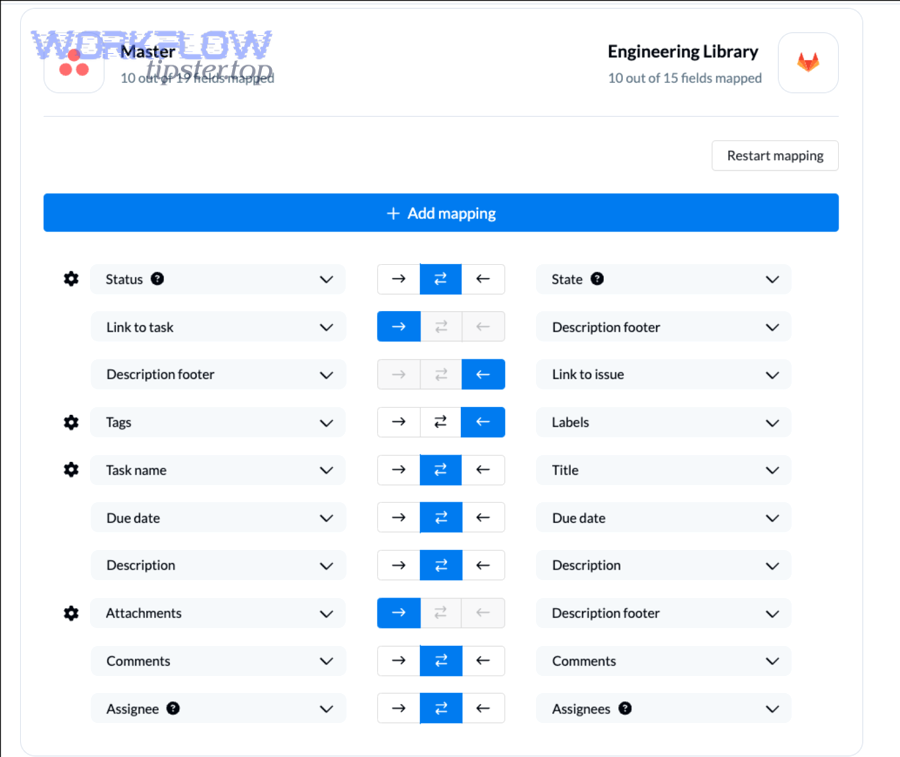

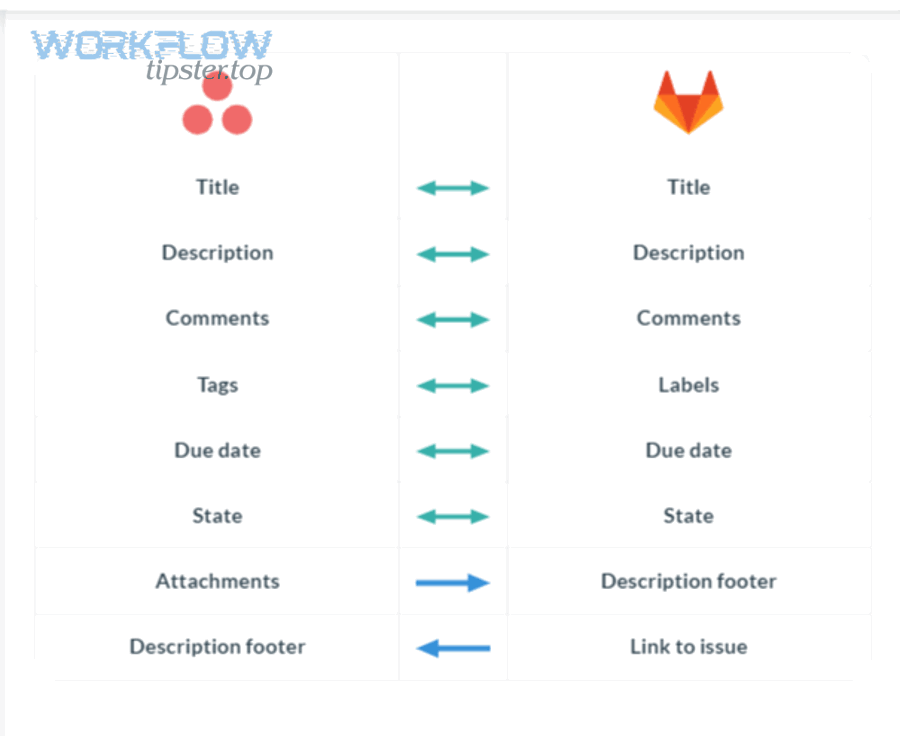

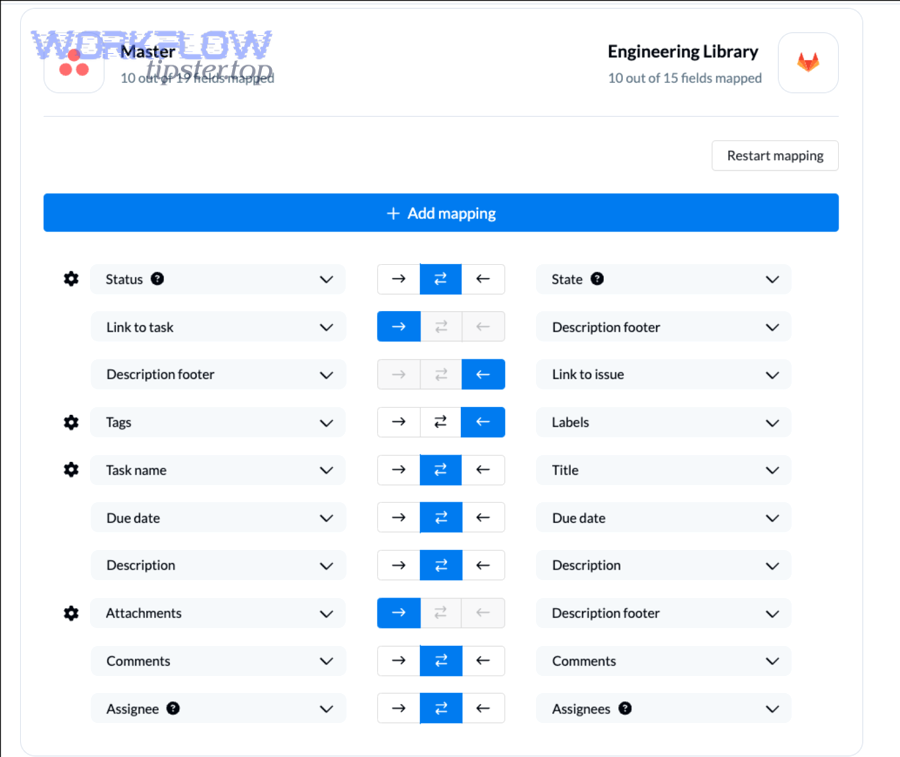

How should you map GitLab objects to Asana tasks and Slack messages?

You should map GitLab objects to Asana tasks and Slack messages using a stable identifier, a consistent field schema, and a single source of truth for status.

A strong mapping model looks like this:

- Stable key (dedup anchor): GitLab issue ID, MR ID, or pipeline ID (stored in Asana task custom field or description)

- Asana task title: [SEV-x] [env] Service – short problem summary

- Asana task body: links to GitLab + last known status + owner + runbook link

- Slack message: one-line summary + severity + environment + link to Asana + link to GitLab

This mapping is what keeps the hook-chain intact: Slack alerts stay brief, Asana tasks stay actionable, and GitLab stays authoritative for the underlying artifact.
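The mapping model above can be sketched in a few lines. This is only an illustration under stated assumptions: `GitLabEvent` is a hypothetical, already-normalized record (not GitLab's raw webhook payload), and the key format, field names, and example URLs are invented for the sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GitLabEvent:
    """Hypothetical normalized event; field names are assumptions."""
    object_type: str  # "issue", "merge_request", or "pipeline"
    object_id: int
    service: str
    environment: str
    severity: int
    summary: str
    status: str
    gitlab_url: str


def dedup_key(event: GitLabEvent) -> str:
    # Stable key: the same GitLab object always maps to the same string.
    return f"gitlab:{event.object_type}:{event.object_id}"


def asana_task_title(event: GitLabEvent) -> str:
    # Follows the "[SEV-x] [env] Service – short problem summary" pattern.
    return f"[SEV-{event.severity}] [{event.environment}] {event.service} – {event.summary}"


def asana_task_body(event: GitLabEvent, owner: str, runbook_url: str) -> str:
    # Links, last known status, owner, runbook, plus the key for later lookups.
    return "\n".join([
        f"GitLab: {event.gitlab_url}",
        f"Last known status: {event.status}",
        f"Owner: {owner}",
        f"Runbook: {runbook_url}",
        f"Dedup key: {dedup_key(event)}",
    ])
```

Keeping the dedup key in the task body (or in a custom field) is what lets later events find the existing task instead of creating a new one.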

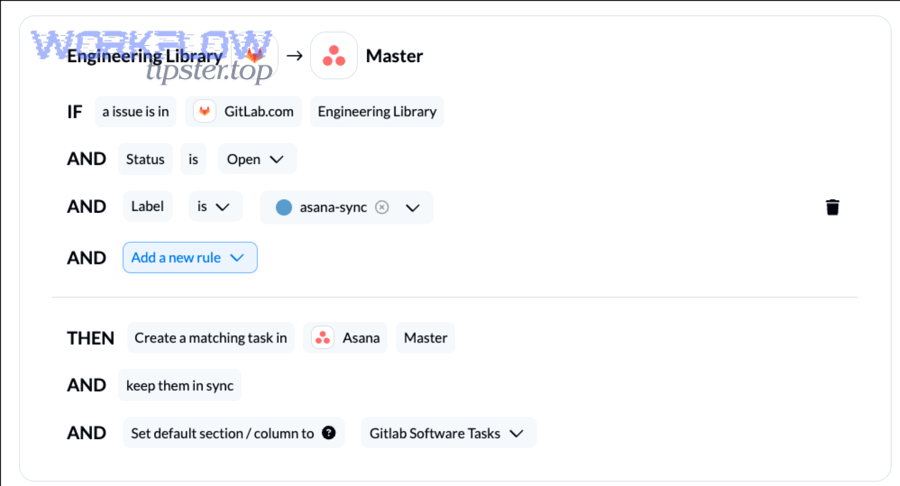

How do you set up GitLab triggers for DevOps alerts?

You set up GitLab triggers for DevOps alerts by selecting high-signal events, scoping them to the right projects or groups, and enforcing secure delivery (tokens/secrets) so only trusted sources can generate alerts.

Next, treat trigger setup as “signal design,” not “checkbox selection,” because every enabled event competes for the team’s attention. (sre.google)

How do you choose the right GitLab events for incident-grade alerts?

Pipeline failure wins for fast detection, deployment failure is best for user impact risk, and merge-request events are optimal for change governance—so the right set depends on whether you’re optimizing for reliability, speed, or safety.

A practical set for incident-grade alerting is:

- Alert immediately: deployment failed, pipeline failed in default branch, production hotfix pipeline failed

- Alert with lower urgency or in a thread: pipeline recovered, deployment succeeded after failure, flaky tests detected

- Don’t alert by default: pipeline started, every push, minor MR edits

Google’s incident response guidance emphasizes that alerts should be actionable and symptom-focused to minimize noise and maximize response effectiveness. (sre.google)
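The three tiers above can be expressed as a small classification function. The sketch below assumes a simplified event shape (`kind`, `status`, `ref`, `previous_status`) and a `hotfix/` branch naming convention; both are assumptions for illustration, not GitLab field names.

```python
from enum import Enum
from typing import Optional


class Urgency(Enum):
    IMMEDIATE = "alert immediately"
    LOW = "lower urgency or thread"
    SUPPRESS = "do not alert"


def classify_event(kind: str, status: str, ref: str,
                   previous_status: Optional[str] = None,
                   default_branch: str = "main",
                   hotfix_prefix: str = "hotfix/") -> Urgency:
    """Map a simplified event to one of the three alert tiers."""
    protected = ref == default_branch or ref.startswith(hotfix_prefix)
    if status == "failed":
        if kind == "deployment":
            return Urgency.IMMEDIATE
        if kind == "pipeline" and protected:
            return Urgency.IMMEDIATE
        return Urgency.SUPPRESS  # e.g. feature-branch pipeline failures
    if (status == "success" and previous_status == "failed"
            and kind in ("pipeline", "deployment")):
        return Urgency.LOW  # recovery notice, better suited to a thread
    return Urgency.SUPPRESS  # pipeline started, pushes, minor MR edits
```

Defaulting to `SUPPRESS` means a new event type stays silent until someone decides it demands action.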

How do you secure the integration so alerts can’t be spoofed?

You secure the integration by using least-privilege credentials, validating webhook origins (where applicable), and restricting where messages can be posted so attackers cannot use your alert channel as a social engineering surface.

A secure baseline includes:

- Dedicated integration accounts (not personal tokens)

- Minimal scopes (only what is required to read events / create tasks / post messages)

- Secret management (store tokens in a secret vault or CI secret store, rotate regularly)

- Channel permissions (limit who can change integrations in Slack and who can rename/repurpose incident channels)

This matters because an alert channel is an authority surface: if it’s easy to spoof, the team’s trust collapses.
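For the webhook path, one common origin check is comparing the secret token that GitLab sends in the `X-Gitlab-Token` header against the value configured on the receiver. The sketch below shows only that comparison; the function name and the plain-dict header shape are assumptions, and a real receiver would load the secret from a secret store.

```python
import hmac


def is_trusted_webhook(headers: dict, expected_secret: str) -> bool:
    """Return True only if the request carries the configured secret token."""
    if not expected_secret:
        return False  # refuse to run with an empty secret
    # Header names are case-insensitive, so normalize before looking up.
    normalized = {name.lower(): value for name, value in headers.items()}
    received = normalized.get("x-gitlab-token", "")
    # Constant-time comparison avoids leaking the secret through timing.
    return hmac.compare_digest(received.encode("utf-8"),
                               expected_secret.encode("utf-8"))
```

Requests that fail this check should be rejected before any task or message is created.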

How do you create and update Asana tasks from GitLab events?

You create and update Asana tasks from GitLab events by defining task templates, mapping fields consistently, and implementing “create-or-update” logic so one incident corresponds to one task that stays current throughout the lifecycle.

Then, use Asana as the operational memory: Slack gets attention, Asana holds the plan.

What Asana task structure works best for DevOps incidents?

There are 3 main task structures for DevOps incidents—single-task, parent-with-subtasks, and project-by-service—based on how complex your incidents get and how many parallel responders you expect.

- Single-task structure (best for small teams):

  - One task per incident with fields: severity, env, owner, service, links

  - Comments track updates; attachments hold screenshots/logs

- Parent-with-subtasks (best for cross-functional incidents):

  - Parent task is the incident container

  - Subtasks track parallel workstreams (rollback, root cause, comms, fix verification)

- Project-by-service (best for larger orgs):

  - Central incident project for intake

  - Service projects for execution; links connect the chain

If you also run other automation workflows like “freshdesk ticket to basecamp task to google chat support triage,” this same structure pattern applies: one system captures demand, one system assigns work, and one system delivers communication.

How do you prevent duplicate Asana tasks for the same GitLab incident?

You prevent duplicate Asana tasks by using a dedup key and an idempotent create-or-update rule: if the task already exists for a given GitLab ID, update it; otherwise create it.

Practical dedup strategies:

- Store `gitlab_issue_id` / `mr_id` / `pipeline_id` in an Asana custom field

- Search by that field before creating a new task

- If your system cannot search reliably, store a unique string in the task description and match on it

- Update the existing task when status changes (failed → fixed), rather than creating a new task

This one change usually cuts alert noise dramatically because retries and repeated failures stop multiplying tasks.
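The create-or-update rule can be sketched as follows. `InMemoryTaskStore` is a hypothetical stand-in for Asana so the logic runs on its own; in a real workflow the lookup and write would go through whatever search and update mechanism your integration provides.

```python
class InMemoryTaskStore:
    """Stand-in for Asana: maps a dedup key to exactly one task record."""

    def __init__(self):
        self.tasks = {}

    def find_by_key(self, key):
        return self.tasks.get(key)

    def create(self, key, fields):
        self.tasks[key] = dict(fields, dedup_key=key)
        return self.tasks[key]


def create_or_update(store, key, fields):
    """Update the task for this GitLab ID if it exists, otherwise create it."""
    task = store.find_by_key(key)
    if task is None:
        return "created", store.create(key, fields)
    task.update(fields)  # e.g. status moves from "failed" to "fixed"
    return "updated", task
```

Running the same event through `create_or_update` twice leaves one task in the store, which is the property that stops retries from multiplying tasks.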

How do you send Slack alerts that stay actionable, not noisy?

You send Slack alerts that stay actionable by standardizing the message format, routing alerts to the correct channels, and using threads/updates so ongoing incidents don’t flood the main channel.

Moreover, “actionable” is not a vibe—it’s a design requirement: if an on-caller can’t act, the alert should be a dashboard metric, not a notification. (sre.google)

What should a good Slack DevOps alert message include?

There are 7 must-have fields in a good Slack DevOps alert message, based on what responders need in the first 30 seconds:

- What happened: pipeline failed, deployment failed, MR blocked, incident opened

- Where: service + environment (prod/staging/dev)

- Severity: SEV label or priority mapping

- Owner/mention: who should respond now (team or on-call)

- Links: GitLab artifact link + Asana task link

- Next action hint: rollback? check logs? runbook?

- Update strategy: “Updates in thread” or “This message will update”

If your organization also runs “github to monday to discord devops alerts,” you’ll notice the same pattern: the best alert is short, structured, and link-rich—only the tools change.
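One way to enforce the seven fields is to refuse to render an alert that is missing any of them. The field keys and the plain-text layout below are assumptions chosen for the sketch, not a Slack message format.

```python
REQUIRED_FIELDS = (
    "what", "where", "severity", "owner",
    "links", "next_action", "update_strategy",
)


def missing_fields(alert: dict) -> list:
    """List required fields that are absent or empty."""
    return [name for name in REQUIRED_FIELDS if not alert.get(name)]


def render_alert(alert: dict) -> str:
    """Render a short, structured alert; raise if a required field is missing."""
    missing = missing_fields(alert)
    if missing:
        raise ValueError("alert is missing: " + ", ".join(missing))
    return "\n".join([
        f"[{alert['severity']}] {alert['what']} ({alert['where']})",
        f"Owner: {alert['owner']}",
        "Links: " + " | ".join(alert["links"]),  # GitLab link + Asana link
        f"Next: {alert['next_action']}",
        alert["update_strategy"],
    ])
```

Failing loudly at render time keeps incomplete alerts out of the channel instead of relying on reviewers to notice them later.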

How do you route alerts to the right channel by environment or severity?

You route alerts correctly by applying environment-first routing and severity-second routing so the most urgent alerts land where responders already live.

A simple routing table looks like this:

- Prod + high severity: #oncall / #incidents

- Prod + medium severity: #devops-alerts (with on-call mention only when needed)

- Staging/dev failures: #ci-cd / #engineering-infra (no paging behavior)

- Security or compliance: #security-alerts (separate audience, separate handling)

When routing works, your Slack channels become predictable. When routing fails, people mute channels—and your entire workflow loses value.
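The routing table above translates into a short function. The string values for `environment`, `severity`, and `category` are assumptions, and sending low-severity prod alerts to `#devops-alerts` is a choice made for the sketch, since the table does not cover that case.

```python
def route_channel(environment: str, severity: str, category: str = "ops") -> str:
    """Pick a Slack channel: audience first, then environment, then severity."""
    if category == "security":
        return "#security-alerts"  # separate audience, separate handling
    if environment == "prod":
        if severity == "high":
            return "#incidents"
        return "#devops-alerts"  # medium (and, by assumption, low) severity
    return "#ci-cd"  # staging/dev failures, no paging behavior
```

For example, `route_channel("staging", "high")` still lands in `#ci-cd`, because environment is evaluated before severity.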

How do you test, monitor, and troubleshoot the workflow end-to-end?

You test, monitor, and troubleshoot the workflow by validating each link in the chain (GitLab trigger → orchestration → Asana task → Slack alert), instrumenting logs, and defining what “failure” means so you can alert on broken alerting.

In addition, prioritize and triage alerts the same way you triage incidents: invest effort where it removes the most waste and reduces missed critical events. (sei.cmu.edu)

What are the most common failure points and how do you fix them?

The most common failure points fall into four buckets—auth, mapping, delivery, and duplication—because those are the places where cross-tool systems break first.

- Authentication failures (tokens revoked/expired, wrong scopes)

  - Fix: rotate credentials, use dedicated integration accounts, validate scopes

- Mapping failures (wrong project/channel IDs, missing custom fields)

  - Fix: keep a configuration registry; validate required fields at deploy time

- Delivery failures (webhook timeouts, platform rate limits, network blocks)

  - Fix: retries with backoff, dead-letter queue, fallback posting

- Duplication (replayed events, multiple triggers, retry storms)

  - Fix: dedup keys, create-or-update logic, update Slack message instead of posting new

According to a 2017 case study from Carnegie Mellon University’s Software Engineering Institute, prioritizing alerts with automation and classification aims to focus analyst effort where it matters most—reducing wasted effort on low-value signals. (sei.cmu.edu)
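For delivery failures, the "retries with backoff, dead-letter queue" fix might look like the sketch below. The `send` callable, the delay values, and the list used as a dead-letter queue are all placeholders; a production version would catch only the transient errors its client library raises.

```python
import time


def deliver_with_retry(send, payload, max_attempts=4, base_delay=0.5,
                       sleep=time.sleep, dead_letters=None):
    """Call send(payload), retrying with exponential backoff on failure."""
    for attempt in range(max_attempts):
        try:
            return send(payload)
        except Exception:  # broad on purpose in this sketch
            if attempt == max_attempts - 1:
                break  # out of attempts, fall through to the dead-letter step
            sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    if dead_letters is not None:
        dead_letters.append(payload)  # keep the payload for later inspection
    return None
```

Passing `sleep` as a parameter makes the backoff schedule easy to verify in tests without actually waiting.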

How do you measure success (signal quality) after rollout?

You measure success by tracking signal quality and operational outcomes, not just “messages sent.”

Use a small scorecard:

- Time-to-ownership: minutes from alert → assigned Asana owner

- Time-to-ack in Slack: minutes until first responder acknowledgment

- Duplicate rate: duplicate alerts/tasks per incident

- Actionability rate: % of alerts that result in a task update or remediation step

- SLA adherence: % incidents resolved within target window

If you see a rising duplicate rate, you likely need idempotency. If you see a falling actionability rate, your filters are too broad.
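Three of the scorecard numbers can be computed from a simple per-incident log. The record keys below (`minutes_to_owner`, `alerts_sent`, `actionable_alerts`) are hypothetical, and the function assumes at least one incident with at least one alert.

```python
from statistics import median


def scorecard(incidents: list) -> dict:
    """Summarize signal quality from per-incident records."""
    total_alerts = sum(i["alerts_sent"] for i in incidents)
    return {
        # Time-to-ownership: median minutes from alert to assigned owner.
        "median_minutes_to_owner": median([i["minutes_to_owner"] for i in incidents]),
        # Duplicate rate: alerts beyond the first, averaged per incident.
        "duplicates_per_incident": sum(i["alerts_sent"] - 1 for i in incidents) / len(incidents),
        # Actionability rate: share of alerts that led to a task update or fix.
        "actionability_rate": sum(i["actionable_alerts"] for i in incidents) / total_alerts,
    }
```

Tracking these per week makes the two trends mentioned above (rising duplicates, falling actionability) visible before the team starts muting channels.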

What advanced patterns improve GitLab → Asana → Slack alerting quality?

You improve alerting quality by adding state-change rules, escalation logic, least-privilege governance, and idempotent delivery so the workflow stays reliable as your systems scale.

These patterns are not required for a basic setup, but they dramatically improve outcomes when you have multiple services, multiple teams, and multiple sources of alerts.

How do you reduce alert noise with state-change rules?

You reduce alert noise by alerting on transitions instead of continuous states, because responders need “it broke” and “it recovered,” not every intermediate update.

Effective rules include:

- Alert when pipeline changes from success → failed on default branch

- Post an update when it changes failed → success (recovery notification)

- Suppress repeats for X minutes unless severity increases

- Convert low-severity events into a periodic digest

Google’s guidance that alerts should be actionable aligns with this pattern: state-change alerts preserve actionability, while repeated alerts destroy it. (sre.google)
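A minimal sketch of transition-based alerting with a suppression window follows. It assumes a numeric `severity` where a higher number means more urgent, treats a first-seen failure as a transition, and keeps state in memory; all three are simplifications for illustration.

```python
class TransitionAlerter:
    """Alert on status transitions, not on every repeated status."""

    def __init__(self, suppress_minutes=15):
        self.suppress_minutes = suppress_minutes
        self._last_status = {}  # key -> last observed status
        self._last_alert = {}   # key -> (minute, severity) of last alert

    def observe(self, key, status, now_min, severity=1):
        previous = self._last_status.get(key)
        self._last_status[key] = status
        if status == "success":
            return "recovered" if previous == "failed" else None
        if status != "failed":
            return None  # running, pending, etc. never alert
        broke = previous != "failed"
        last = self._last_alert.get(key)
        if last is not None:
            last_time, last_severity = last
            escalated = severity > last_severity
            recent = now_min - last_time < self.suppress_minutes
            if not escalated and (recent or not broke):
                return None  # repeat or flapping inside the window
        self._last_alert[key] = (now_min, severity)
        return "alert"
```

Repeated failures return `None`, a severity increase breaks through the suppression window, and a failed to success change yields a single `"recovered"` update.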

How do you implement escalation paths for unresolved incidents?

You implement escalation by adding a time-based rule: if no one acknowledges or updates the Asana task within a defined window, escalate to the next audience.

A practical escalation ladder:

- T+0: post in primary channel with owner mention

- T+10 minutes (no ack): notify #oncall or team lead

- T+30 minutes (no progress): notify incident commander channel and assign backup owner

- T+60 minutes: open a formal incident process and require status updates in a thread

This keeps urgency aligned with impact and prevents “silent failures” where alerts exist but nobody responds.
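The ladder can be encoded as a pure function that a scheduler calls periodically for each open task. The step names and the `acknowledged` / `has_progress` flags are assumptions for this sketch; how you detect an acknowledgment or progress depends on your tooling.

```python
from typing import Optional


def current_escalation(minutes_open: int, acknowledged: bool,
                       has_progress: bool, resolved: bool = False) -> Optional[str]:
    """Return the highest escalation step that applies right now."""
    if resolved:
        return None
    step = "primary_channel"                 # T+0: post with owner mention
    if minutes_open >= 10 and not acknowledged:
        step = "oncall_or_team_lead"         # T+10: nobody acknowledged
    if minutes_open >= 30 and not has_progress:
        step = "incident_commander"          # T+30: no progress, backup owner
    if minutes_open >= 60:
        step = "formal_incident"             # T+60: formal process, thread updates
    return step
```

Because the function has no side effects, the same inputs always give the same step, which makes the escalation rules easy to review and test.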

How do you design audit-friendly, least-privilege access for integrations?

You design audit-friendly access by separating identity, permissions, and change control:

- Use dedicated service accounts for GitLab/Asana/Slack integrations

- Restrict scopes to only what the workflow needs

- Log every workflow config change (who changed routing, templates, tokens)

- Rotate secrets on a schedule and after staff changes

If your company also runs “airtable to google slides to google drive to docusign document signing,” you already know the governance lesson: automation saves time, but governance saves organizations.

What idempotency and dedup strategies prevent repeated alerts across retries?

Idempotency prevents repeats by ensuring the same event produces the same outcome—even if it’s delivered twice.

Use these strategies:

- Event fingerprinting: `source + object_id + event_type + state` becomes your dedup key

- Create-or-update tasks: same GitLab ID updates the same Asana task

- Message updates: update the Slack message (or thread) instead of posting a new one

- Replay handling: treat webhook replays as updates, not new incidents

In short, idempotency is the technical backbone of trustworthy alerting, because it ensures every notification remains reliable even under retries and replays.
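These strategies can be combined in one small sketch: hash the four fingerprint parts into a key, skip exact redeliveries, and update the existing message when the same object changes state. The class name, the `|` separator, and the in-memory state are assumptions; treating an identical replay as a no-op is one reasonable reading of "updates, not new incidents".

```python
import hashlib


def fingerprint(source: str, object_id, event_type: str, state: str) -> str:
    """Dedup key built from source + object_id + event_type + state."""
    raw = "|".join([source, str(object_id), event_type, state])
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


class IdempotentNotifier:
    def __init__(self):
        self._seen = set()    # fingerprints already handled
        self._messages = {}   # (source, object_id) -> state currently shown

    def process(self, source, object_id, event_type, state) -> str:
        key = fingerprint(source, object_id, event_type, state)
        if key in self._seen:
            return "skipped"  # exact replay or retry: nothing new to say
        self._seen.add(key)
        thread = (source, object_id)
        action = "updated" if thread in self._messages else "posted"
        self._messages[thread] = state  # one message per object, kept current
        return action
```

A durable store would replace the in-memory set and dict in practice, so that a restart does not reset what has already been delivered.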

Evidence (summary sentences embedded above):

– Actionable, symptom-focused alerting is emphasized in Google’s incident management guidance. (sre.google)

– Alert prioritization and automation to reduce wasted effort is discussed in Carnegie Mellon University SEI’s case study. (sei.cmu.edu)

– Warning fatigue and over-alerting behaviors (opting out when alerts are not useful) are explored in a 2025 academic paper hosted by the University at Albany repository / related journal listing. (scholarsarchive.library.albany.edu)