You can automate GitHub → Jira → Discord DevOps alerts by turning GitHub events (issues, pull requests, CI failures, releases) into Jira work item creation/updates and posting real-time Discord notifications that route the right signal to the right team at the right time. The goal is simple: fewer missed incidents, faster triage, and traceable work that actually closes.

Most teams also need a clear definition of what “DevOps alerts” means in this chain—because not every GitHub event should become a Jira ticket, and not every Jira update should ping the whole Discord server. Below, you’ll learn how to model “signal vs noise,” choose triggers, and design routing rules so the workflow stays actionable.

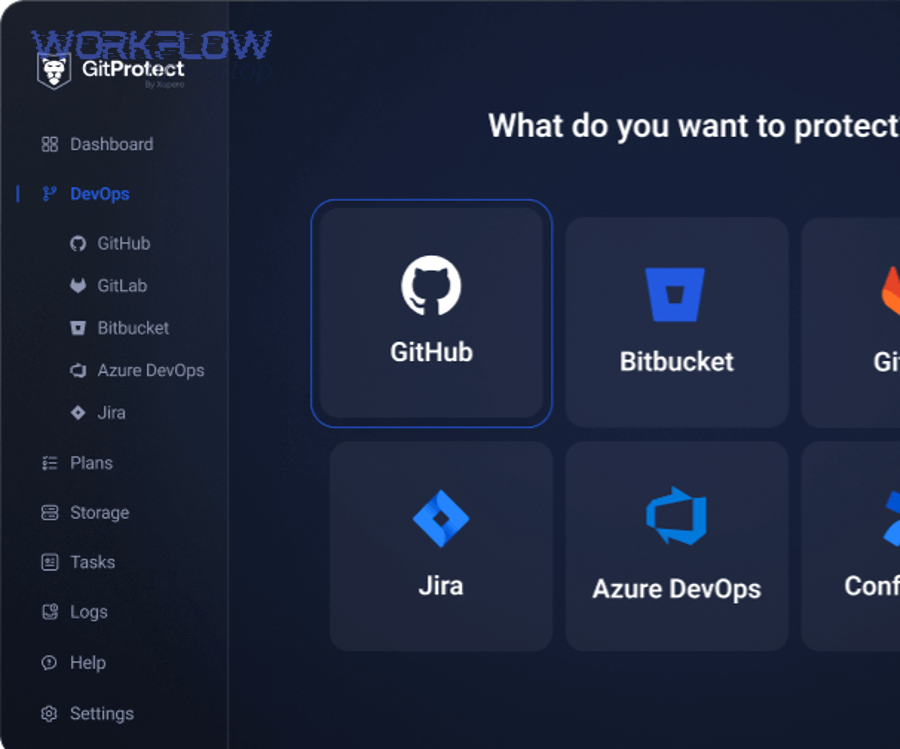

A second decision follows immediately: which implementation approach should you use—GitHub Actions, Jira automation, or an external workflow tool? Each option can work, but they differ in maintainability, reliability at scale, and how well they handle deduplication, retries, and multi-repo routing.

Once you can build the pipeline, the real advantage comes from operating it like a product (securely, reliably, and without alert fatigue) so your automation workflows keep improving as your DevOps surface area grows.

What is a GitHub → Jira → Discord DevOps alerts workflow (and what does it automate)?

A GitHub → Jira → Discord DevOps alerts workflow is an integration pipeline that captures GitHub activity, converts the action into Jira work item updates, and broadcasts a structured notification to Discord so DevOps teams can respond fast without losing traceability.

Next, to better understand why this chain works, you need to separate events (what happened) from work (what must be done) and visibility (who needs to know).

In practice, this workflow automates four outcomes that DevOps teams repeatedly need:

- Capture the right trigger from GitHub. Examples: a CI job fails on main, a pull request is merged into a release branch, a security alert fires, or an incident label appears on an issue.

- Create or update the correct Jira work item. This is where you ensure the “alert” becomes work you can assign, prioritize, and close.

- Route the notification into Discord with context. Discord provides the real-time surface: on-call sees it immediately; the rest of the team sees it in the right channel.

- Preserve traceability. A good workflow always links back to the source: GitHub artifact → Jira issue → Discord message (and ideally back-links too).

A useful mental model is: GitHub generates signals, Jira manages commitments, and Discord accelerates coordination.

What counts as a “DevOps alert” in this workflow (PRs, builds, incidents, or tickets)?

A “DevOps alert” in this workflow is any time-sensitive signal that requires a decision, an action, or escalation—typically triggered by CI/CD failures, risky merges, security findings, deployment anomalies, or incident-labeled work items.

Then, to reconnect this to your day-to-day process, treat “alert” as the start of a response loop, not just a message.

Most teams get confused because they mix three categories:

- Activity notifications (high volume): PR opened, comment added, branch created

- Quality signals (medium volume): tests failed, coverage dropped, lint errors, build unstable

- Operational incidents (low volume, high urgency): deployment rollback, outage indicator, high severity security alert

A GitHub → Jira → Discord chain becomes powerful when you enforce these rules:

- Activity notifications should usually be Discord-only (awareness), sometimes filtered.

- Quality signals may update a Jira issue if they block shipping or violate policy.

- Operational incidents should create/update a Jira incident task (or your equivalent) and notify Discord with strong routing (mentions, on-call channel, escalation path).

This is how you stop “alerts” from becoming noise: you intentionally define what deserves a ticket.

Which GitHub events should you choose for Jira creation vs Discord-only notifications?

There are 3 main types of GitHub events you should classify for automation—ticket-worthy, action-supporting, and awareness-only—based on urgency, ownership, and frequency.

Below, to connect the workflow to real outcomes, you’ll use this classification to decide what becomes Jira work vs what stays as a Discord notification.

Type A: Ticket-worthy events (create or update Jira)

Use these when something must be tracked, assigned, and resolved:

- CI failure on protected branches (main, release/*)

- Security scanning alert above a severity threshold

- Deployment failure/rollback signal (from GitHub Actions or release tooling)

- Bug/incident issues labeled with “sev1/sev2”, “incident”, “hotfix”

Type B: Action-supporting events (update Jira + targeted Discord)

Use these to keep Jira accurate without spamming everyone:

- PR merged that resolves a Jira issue

- Build passes that unblocks a release ticket

- A required check flips from failing to passing

Type C: Awareness-only events (Discord-only, filtered)

Use these when the team just needs visibility:

- PR opened (only in a dev channel, or only for certain repos)

- New release published (announce channel)

- Changes requested in PR review (only to the author or reviewers)

A practical heuristic is volume-based: if an event can happen dozens of times a day, do not let it create Jira issues by default.
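The Type A/B/C classification above can be sketched as a small lookup. The event names here are hypothetical labels, not GitHub's own event identifiers, and the sets are assumptions you would adapt to the events you actually subscribe to.

```python
from dataclasses import dataclass

# Hypothetical event labels; map your real GitHub events onto names like these.
TICKET_WORTHY = {"ci_failed_protected", "security_alert_high", "deploy_failed", "incident_labeled"}
ACTION_SUPPORTING = {"pr_merged_with_issue_key", "build_passed", "check_recovered"}


@dataclass(frozen=True)
class Decision:
    jira_action: str    # "create_or_update", "update", or "none"
    discord_scope: str  # "targeted" or "filtered"


def classify(event_type: str) -> Decision:
    """Map an event label to Type A, B, or C handling."""
    if event_type in TICKET_WORTHY:        # Type A
        return Decision("create_or_update", "targeted")
    if event_type in ACTION_SUPPORTING:    # Type B
        return Decision("update", "targeted")
    # Type C and anything unknown: Discord-only, so new events never create tickets by default.
    return Decision("none", "filtered")
```

Defaulting unknown events to awareness-only follows the volume heuristic: a new, possibly frequent event cannot start creating Jira issues until someone classifies it on purpose.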

What are the core building blocks you need to implement GitHub → Jira → Discord alerts?

There are 6 core building blocks for GitHub → Jira → Discord alerts: triggers, authentication, transformation/mapping, routing, message formatting, and reliability controls.

To better understand how these pieces fit, think of your workflow as a small “notification service” that must be predictable, secure, and debuggable.

What permissions, tokens, and webhook settings are required across GitHub, Jira, and Discord?

You need authenticated access on all three systems, plus correctly scoped event delivery, to avoid silent failures and permission-based partial updates.

Next, because most broken alert pipelines fail at authentication, you should treat credentials as a first-class design concern.

GitHub requirements (typical):

- Ability to read events from repos (webhooks or Actions events)

- A secret for webhook signature validation (if using webhooks)

- Permission to read metadata about PRs, checks, commits (for context)

Jira requirements (typical):

- API token or OAuth credentials

- Permission to create issues, add comments, and transition issues (if you automate status)

- Permission to view development tools if you’re showing linked dev info panels

Atlassian explicitly documents that linking GitHub development data to Jira work items requires adding the Jira work item key into branch names, commit messages, or PR titles, which then lets Jira show branches/PRs/builds/deployments in context. (support.atlassian.com)

Discord requirements (typical):

- Either a Discord webhook URL for the channel, or a bot token for advanced behavior

- Permission to post messages in specific channels

- Permission to mention roles (if you use @oncall or @devops)

Security baseline you should enforce:

- Least privilege tokens (only needed scopes)

- Secret rotation and documented ownership

- Separate environments (dev/staging/prod webhooks and channels)
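If you receive GitHub webhooks directly, signature validation is the piece most worth getting right. The sketch below checks the `X-Hub-Signature-256` header, which GitHub computes as an HMAC-SHA256 of the raw request body using the webhook secret. The function name and the way the secret reaches it are assumptions for illustration.

```python
import hashlib
import hmac


def is_valid_signature(secret: str, body: bytes, signature_header: str) -> bool:
    """Check a raw webhook body against its X-Hub-Signature-256 header value."""
    if not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret.encode(), body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected.encode(), signature_header.encode())
```

Validate against the raw bytes of the request, before any JSON parsing, because re-serialized JSON may not match the bytes GitHub signed.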

Do you need a Discord bot, or is a Discord webhook enough?

No, you do not need a Discord bot for most GitHub → Jira → Discord DevOps alerts because a webhook is simpler, safer to operate, and sufficient for posting structured notifications with links, mentions, and formatting.

However, you should choose a bot when your requirements include interactivity, richer routing, or message lifecycle control.

Use a Discord webhook when:

- You only need to post messages into a channel

- Routing is handled upstream (your workflow decides the channel)

- You want minimum maintenance and minimal permissions

Use a Discord bot when:

- You need commands (“/ack”, “/snooze”, “/create-ticket”)

- You need to update or delete previous messages (message lifecycle)

- You need advanced routing inside Discord (threading rules, per-user DMs)

Three reasons webhooks win for most teams:

- Lower operational overhead (no bot hosting or state)

- Smaller permission footprint (reduced blast radius)

- Fewer moving parts (fewer failure modes under pressure)

Which implementation approach is best: GitHub Actions, Jira automation, or an external workflow tool?

GitHub Actions wins in repo-local control, Jira automation is best for Jira-native transitions, and an external workflow tool is optimal for cross-repo routing and complex transformations.

Meanwhile, the best choice depends on where you want your “source of truth” for automation logic and how much you need to standardize across many repositories.

Here’s what each approach tends to optimize:

- GitHub Actions: tight coupling to code, PRs, CI status, secrets per repo/org

- Jira automation: status transitions and Jira-centric logic

- External workflow tools: centralized rules, multi-system enrichment, retries/queues, multi-repo scale

To make this decision concrete, the table below compares them using criteria DevOps teams actually feel during incidents.

What this table contains: a practical comparison of the three common implementation approaches for GitHub → Jira → Discord alerts, based on speed, control, and scaling behavior.

| Approach | Best for | Weak spot | When it becomes painful |
|---|---|---|---|
| GitHub Actions | CI/CD-triggered alerts, repo-specific rules | Harder multi-repo standardization | Many repos with “almost the same” workflow |
| Jira automation | Updating Jira status based on linked dev activity | Limited GitHub event shaping | Complex transformations or dedupe |
| External workflow tool | Central routing, enrichment, queues, multi-destination | Another platform to operate | If you lack ownership/observability |

A key Jira-side capability is that Jira can link GitHub branches, commits, PRs, builds, and deployments to work items when work item keys are present, giving teams visibility directly inside Jira. (support.atlassian.com)

How does a no-code/low-code workflow compare to custom code for DevOps alerting?

No-code/low-code wins in speed and maintainability for standard integrations, while custom code is best for deep control, specialized routing, and advanced idempotency.

However, to better understand the trade-off, you should compare them across three criteria that matter under alert load: change velocity, reliability, and ownership.

No-code/low-code is best when:

- You need to ship fast and iterate on routing rules weekly

- Your mapping needs are “fields + templates + conditions”

- You want built-in retries, dashboards, and connectors

Custom code is best when:

- You need event fingerprinting across multiple sources (dedupe at scale)

- You need strict compliance handling (PII redaction, audit logging)

- You want to avoid vendor constraints or per-task billing

In DevOps reality, most teams start with low-code and selectively introduce code only where complexity proves persistent (routing, dedupe, compliance, and cost).

When is GitHub Actions better than a webhook-driven relay service?

GitHub Actions is better when you want alerts tightly bound to CI results and repository context, while a webhook-driven relay service is better when you need centralized control across many repos and consistent routing policies.

Next, to choose confidently, consider how “close to the code” your alert logic must be.

Choose GitHub Actions when:

- Your triggers are mostly CI/CD outcomes (build, test, deploy)

- You want versioned workflow logic alongside code

- You need repo-specific secrets and environments

Choose a webhook relay when:

- You need a single policy for dozens of repos

- You need queueing/retries beyond “workflow rerun”

- You need to enrich payloads (ownership from a service catalog, severity mapping, on-call schedule)

If you routinely struggle with “workflow sprawl” across repos, centralized routing becomes the long-term win.

How do you map GitHub activity into Jira issues without creating noise?

You map GitHub activity into Jira issues without noise by using a minimum viable ticket model, strict creation rules, and deduplication based on a stable event fingerprint (service + type + environment + severity).

Then, to reconnect this to what your team experiences, remember that noise doesn’t come from “too many alerts”—it comes from “alerts that don’t produce decisions.”

A high-quality mapping strategy has three layers:

- Ticket creation rules (when to create)

- Ticket update rules (when to append/update instead)

- Ticket closure/transition rules

If you skip any layer, you’ll create tickets your team can’t close, which guarantees alert fatigue.

Which Jira fields should you map from GitHub to keep tickets actionable?

There are 2 main mapping tiers you should use—minimum viable fields and rich context fields—based on how fast the responder must understand impact and ownership.

Specifically, actionable Jira tickets share one property: they answer “what, where, who, and what next” without hunting.

Minimum viable Jira ticket fields (recommended for every alert ticket):

- Summary: concise event + service + environment (“CI failed on main for payments API”)

- Description: what happened + immediate impact + link to GitHub run/PR

- Priority/Severity: derived from rules, not opinion in the moment

- Component/Service: mapped from repo or label

- Assignee/Team: mapped from code owners or service ownership

- Links: GitHub PR/commit, CI run, release/deploy reference

Rich context fields (use when incidents are complex):

- Deployment version/build number

- Failing test suite/module name

- Error signature (top stack trace line or failure reason)

- Runbook link (internal or public)

- Customer impact estimate (if available)
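As a rough illustration of the minimum viable mapping, the sketch below builds a request body in the shape accepted by Jira's REST API v2 create-issue endpoint (`POST /rest/api/2/issue`), where `description` is a plain string. The `alert` dictionary keys, the `DEVOPS` project key, the `Task` issue type, and the use of a component per service are all assumptions; your project's issue types, priorities, and components must already exist for the request to succeed.

```python
def build_jira_issue(alert: dict, project_key: str = "DEVOPS") -> dict:
    """Turn a normalized alert (hypothetical shape) into a Jira create-issue body."""
    summary = f"{alert['event']} on {alert['environment']} for {alert['service']}"
    description = "\n".join([
        alert["what_happened"],
        f"Impact: {alert.get('impact', 'unknown')}",
        f"GitHub: {alert['github_url']}",
    ])
    return {
        "fields": {
            "project": {"key": project_key},
            "issuetype": {"name": "Task"},            # assumed issue type
            "summary": summary[:255],                 # truncate defensively; summaries are short
            "description": description,
            "priority": {"name": alert["priority"]},  # derived from rules upstream
            "components": [{"name": alert["service"]}],
        }
    }
```

Keeping the mapping in one pure function makes it easy to test field changes without calling Jira at all.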

A practical technique: embed the Jira issue key back into the dev workflow so GitHub activity stays linked to Jira’s development panel. Atlassian documents that adding the work item key to branch names/commit messages/PR titles links the data to Jira automatically. (support.atlassian.com)
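On the automation side, the same keys can be pulled out of branch names, commit messages, and PR titles to decide which ticket to update. This is a minimal sketch that assumes the common key shape of an uppercase project key, a hyphen, and a number; projects with customized key patterns would need a different expression.

```python
import re

# Assumed key shape: uppercase project key, hyphen, issue number (e.g. DEVOPS-1234).
ISSUE_KEY = re.compile(r"\b[A-Z][A-Z0-9]+-\d+\b")


def find_issue_keys(*texts: str) -> list[str]:
    """Return unique issue keys in first-seen order across the given strings."""
    seen: list[str] = []
    for text in texts:
        for key in ISSUE_KEY.findall(text):
            if key not in seen:
                seen.append(key)
    return seen
```

For example, `find_issue_keys(branch_name, pr_title)` gives the workflow a candidate ticket to comment on before it considers creating a new one.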

Should every alert create a Jira issue (or only update an existing one)?

No, not every alert should create a Jira issue because (1) duplicates destroy trust, (2) frequent signals belong in Discord awareness, and (3) many alerts represent the same underlying incident that should update one tracked ticket.

However, to make that “No” actionable, you need a simple create-vs-update policy your automation enforces consistently.

Create a new Jira issue when:

- The alert is a new incident class (new fingerprint)

- The last occurrence is outside a suppression window (e.g., 24 hours)

- The alert severity is high enough that work tracking is mandatory

Update an existing Jira issue when:

- The fingerprint matches (same service + environment + event type)

- The alert is a repeat/flare-up of the same incident

- The alert adds new evidence (new logs, new failing step, new affected deployment)

Three reasons update-first reduces noise:

- One owner can coordinate resolution

- History stays in one place

- Discord can reference one stable ticket link
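The create-vs-update policy above can be expressed as one deterministic function. This sketch assumes you can look up when a fingerprint was last seen (`None` for a new fingerprint) and that an upstream rule has already decided whether the severity makes tracking mandatory; the 24-hour window mirrors the example above and is not a recommendation.

```python
from datetime import datetime, timedelta
from typing import Optional

SUPPRESSION_WINDOW = timedelta(hours=24)  # example value from the policy above


def decide(last_seen: Optional[datetime], now: datetime, ticket_worthy: bool,
           window: timedelta = SUPPRESSION_WINDOW) -> str:
    """Return "discord_only", "create", or "update" for one incoming alert."""
    if not ticket_worthy:
        return "discord_only"   # severity too low for mandatory tracking
    if last_seen is None:
        return "create"         # new fingerprint
    if now - last_seen > window:
        return "create"         # last occurrence is outside the suppression window
    return "update"             # repeat or flare-up of the same incident
```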

If you want measurable evidence that responsiveness matters, research on pull request response times shows that a large portion of PRs receive a first response within a day, and that bots contribute heavily to the fastest responses. This suggests automated signals can accelerate reaction loops when implemented carefully. According to a 2023 arXiv study by Hasan et al., 80.16% of pull requests received a first response in less than a day, and 70% of sampled “≤10 minute” first responses were generated by bots. (arxiv.org)

How do you format Discord notifications so DevOps teams act fast?

You format Discord notifications for fast action by using a predictable template that includes severity, a one-line summary, ownership, the next action, and direct links to the Jira issue and GitHub artifact.

Next, remember this: the Discord message is the “front door,” but Jira is the “record of work,” so your message must always point to the ticket.

A strong Discord alert message usually answers five questions in under 10 seconds:

- What happened? (event + scope)

- How bad is it? (severity)

- Who owns it? (team/assignee)

- What should happen next? (action)

- Where is the evidence? (links)

You can also keep optional sections for details (error signature, affected deploy, rollback status) so the message stays readable.

What should a good Discord DevOps alert message include (include links, mentions, context)?

A good Discord DevOps alert message includes 8 core elements: severity, summary, service/environment, owner, action, Jira link, GitHub link, and timestamp—plus optional evidence such as error signature and runbook link.

Then, to illustrate how this prevents confusion, treat every message as a micro runbook entry.

Recommended message structure (copyable pattern):

- [SEV-1] Payments API: CI failed on main

- Owner: @devops-oncall (or team role)

- Action: Investigate failing step “integration-tests”; rerun only after fixing

- Jira: DEVOPS-1234

- GitHub: Actions run link / PR link

- Context: Last green build 2h ago; failing since commit abc123

- Timestamp: 2026-02-04 09:18 ET

If you use Discord embeds, keep the embed title as the summary and embed fields as owner/action/links so the message is scannable.
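A minimal sketch of that embed layout as a Discord webhook payload follows. The `alert` keys and function names are assumptions; the payload fields (`content`, `embeds`, `allowed_mentions`) and the `<@&ROLE_ID>` role mention syntax are standard Discord webhook features. The explicit `User-Agent` header is a precaution, since some setups report the default Python one being rejected.

```python
import json
import urllib.request
from typing import Optional


def build_discord_payload(alert: dict, role_id: Optional[str] = None) -> dict:
    """Build a webhook body with the summary as embed title and details as fields."""
    payload = {
        "embeds": [{
            "title": f"[{alert['severity']}] {alert['service']}: {alert['summary']}"[:256],
            "fields": [
                {"name": "Owner", "value": alert["owner"], "inline": True},
                {"name": "Action", "value": alert["action"], "inline": False},
                {"name": "Jira", "value": alert["jira_url"], "inline": False},
                {"name": "GitHub", "value": alert["github_url"], "inline": False},
            ],
            "timestamp": alert["timestamp"],  # ISO 8601 string
        }],
        "allowed_mentions": {"parse": []},    # no pings unless a role is given
    }
    if role_id:
        payload["content"] = f"<@&{role_id}>"
        payload["allowed_mentions"] = {"roles": [role_id]}
    return payload


def post_to_discord(webhook_url: str, payload: dict) -> int:
    """Send the payload to a channel webhook and return the HTTP status."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json", "User-Agent": "devops-alerts-example/0.1"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Restricting `allowed_mentions` means text copied from a commit message or PR title cannot ping `@everyone` by accident.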

Here’s a short example of a related workflow pattern that many teams also automate for go-to-market pipelines: Google Forms to HubSpot to Airtable to Discord lead capture. That chain works because it uses the same principle: structured data becomes tracked work, then gets routed to a real-time team surface.

What’s better for alert routing: one shared channel or multiple channels by service/team?

One shared channel wins for global visibility, multiple channels win for focus, and a hybrid channel strategy is optimal for fast response without fatigue.

However, to connect this to your team’s reality, think in terms of “who must act” vs “who should know.”

One shared channel is best when:

- You’re a small team

- Ownership is fluid

- Everyone rotates on-call

- You need shared situational awareness

Multiple channels are best when:

- You have multiple services with clear ownership

- Alerts are frequent and specialized

- You need separate escalation paths (on-call, incidents, release)

Hybrid strategy (recommended for most teams):

- A #devops-incidents channel for high severity

- A #build-failures channel per service group (or per platform)

- A #release-announcements channel for deployment info

- A #automation-health channel for the workflow itself

This hybrid approach works because the channels are parts of one system that collectively reduces mean time to acknowledge.
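The hybrid strategy can be sketched as an ordered rule table where the first match wins. The `kind` values and the alert shape are hypothetical; in a real setup each channel name would map to its own webhook URL.

```python
# Ordered rules: the first predicate that matches decides the channel.
ROUTES = [
    (lambda a: a.get("severity") == "SEV-1", "#devops-incidents"),
    (lambda a: a.get("kind") == "pipeline_failure", "#automation-health"),
    (lambda a: a.get("kind") == "build_failure", "#build-failures"),
    (lambda a: a.get("kind") == "release", "#release-announcements"),
]


def route(alert: dict, default: str = "#build-failures") -> str:
    """Pick the Discord channel for an alert; fall back to a default channel."""
    for matches, channel in ROUTES:
        if matches(alert):
            return channel
    return default
```

Putting the SEV-1 rule first means a high-severity build failure lands in the incident channel rather than the build channel.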

Can you make GitHub → Jira → Discord alerts reliable at scale (without duplicates and missed events)?

Yes, you can make GitHub → Jira → Discord alerts reliable at scale because you can enforce idempotency, validate delivery, implement retries with backoff, and monitor the automation itself like production software.

More importantly, reliability is not optional: duplicates create mistrust, and missed alerts create outages.

A reliable alert pipeline typically implements:

- Webhook signature validation (trust)

- Idempotency keys (no duplicates)

- Retry policy with exponential backoff (no missed messages)

- Rate limit handling (stability under load)

- Dead-letter/fallback paths (recoverability)

- Observability (you can see what happened)
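The retry item in the list above might look like the sketch below: exponential backoff with a little jitter, re-raising after the last attempt so the caller can hand the event to a dead-letter path. The parameter values are placeholders, and the `sleep` argument exists only so the function can be tested without waiting.

```python
import random
import time


def retry_with_backoff(send, max_attempts: int = 5, base_delay: float = 1.0,
                       max_delay: float = 30.0, sleep=time.sleep):
    """Call send() until it succeeds, waiting 1s, 2s, 4s, ... (plus jitter) between tries."""
    for attempt in range(1, max_attempts + 1):
        try:
            return send()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: let the caller dead-letter the event
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Jitter spreads out retries when many events fail at the same time.
            sleep(delay + random.uniform(0, delay / 2))
```

In practice you would catch only errors that are worth retrying (timeouts, throttling responses) and fail fast on validation errors, since retrying a malformed payload never helps.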

If you use Jira’s official integration surfaces, it also helps to understand what data is and isn’t stored. Atlassian’s GitHub integration FAQ explains that commit messages and branch names are read to link development information to Jira work items and that code is not stored during this process. (support.atlassian.com)

What are the most common failure points (auth, rate limits, payload mismatch, permissions)?

There are 4 main failure categories in this workflow: authentication/permissions, event delivery issues, payload mapping errors, and downstream limits (rate limits/timeouts).

Next, to reduce debugging time during incidents, you should treat each category as a checklist with quick tests.

1) Authentication and permissions

- Expired tokens (Jira API token rotated, GitHub secret revoked)

- Missing scope (can read but cannot create/transition Jira issues)

- Discord role mention permission missing

2) Event delivery

- Webhook not firing due to incorrect event subscription

- Signature validation mismatch (secret mismatch)

- GitHub Actions workflow not triggered due to branch filters

3) Payload mapping

- Jira field IDs mismatch (custom fields changed)

- Status transition invalid (workflow constraints)

- JSON formatting errors or missing required fields

4) Rate limits and timeouts

- Burst of events after outage

- API throttling from Jira or Discord

- Long enrichment calls (ownership lookup) causing timeouts

A key operational improvement is to log one “correlation ID” across the whole chain so you can trace one alert from GitHub → Jira → Discord in seconds.
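One way to do this, sketched under the assumption that you log structured JSON lines: reuse the delivery identifier GitHub sends in the `X-GitHub-Delivery` header when it is available, otherwise generate one, and attach it to every log entry for that alert. The stage names and field names are hypothetical.

```python
import json
import uuid
from typing import Optional


def new_correlation_id(delivery_id: Optional[str] = None) -> str:
    """Reuse the webhook delivery ID if present, else create a fresh UUID."""
    return delivery_id or str(uuid.uuid4())


def log_line(correlation_id: str, stage: str, **details) -> str:
    """Format one structured log entry that can be searched by correlation ID."""
    return json.dumps({"correlation_id": correlation_id, "stage": stage, **details}, sort_keys=True)
```

A single search for the ID then returns the received, Jira, and Discord entries for one alert in order.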

What monitoring and fallback alerts should you set up for the automation itself?

You should set up monitoring and fallback alerts by adding 3 control loops: health checks for delivery, failure notifications for the pipeline, and backlog/latency alerts that detect slowdowns before you miss critical incidents.

Then, to keep the workflow resilient, you should route pipeline failures to a dedicated channel and optionally create a Jira ops ticket automatically.

Monitoring layer 1: Delivery success

- Count: events received vs events processed vs messages sent

- Alert on gaps (received but not sent)

Monitoring layer 2: Failure notifications

- Any failed workflow run posts to #automation-health

- Include error reason, correlation ID, and retry state

Monitoring layer 3: Latency/backlog

- Alert when processing latency exceeds threshold (e.g., >2 minutes for SEV-1)

- Alert on growing retry queue
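The three monitoring layers reduce to a handful of comparisons. This sketch assumes you already collect the counters and latency yourself, that every processed event is expected to produce a message, and that "growing" means three strictly increasing queue samples; all of those are illustrative choices.

```python
def health_alerts(received: int, processed: int, sent: int, sev1_latency_seconds: float,
                  retry_queue_sizes: list, latency_threshold: float = 120.0) -> list:
    """Return human-readable problems for the #automation-health channel."""
    alerts = []
    if processed < received:
        alerts.append(f"{received - processed} events received but not processed")
    if sent < processed:
        alerts.append(f"{processed - sent} events processed but not sent")
    if sev1_latency_seconds > latency_threshold:
        alerts.append(f"SEV-1 latency {sev1_latency_seconds:.0f}s exceeds {latency_threshold:.0f}s")
    recent = retry_queue_sizes[-3:]
    if len(recent) == 3 and recent[0] < recent[1] < recent[2]:
        alerts.append("retry queue is growing")
    return alerts
```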

If you need a proven motivation for controlling interruptions and notification overload, software engineering research has investigated how interruptions affect developer performance and stress in controlled settings. According to a study published as an ICSE 2024 paper, researchers conducted a controlled human study with 20 participants completing software engineering tasks under interruptions while recording physiological measures. (kjl.name)

You now have the full, end-to-end blueprint to implement and operate the core GitHub → Jira → Discord DevOps alerts workflow: triggers, permissions, approach selection, mapping, message formatting, and reliability. Next, the content shifts from “how to build it” to “how to optimize it” for advanced, real-world conditions.

How can you optimize GitHub → Jira → Discord alerts for advanced DevOps use cases (without alert fatigue)?

You optimize GitHub → Jira → Discord alerts by engineering for “signal over noise,” adding escalation tiers, preventing duplicates with idempotency, and enforcing security controls so your workflow remains trustworthy under load.

Next, you’ll move from the basic mechanics to advanced patterns that distinguish a mature alerting system from a noisy chat feed.

How do “signal” vs “noise” alert strategies differ for DevOps teams (and which reduces fatigue)?

Signal wins when alerts produce decisions, noise dominates when alerts produce scanning, and the best strategy reduces fatigue by gating, bundling, and routing alerts based on severity and ownership.

However, to connect this to your pipeline, “signal vs noise” is not a philosophical debate—it is a set of rules your automation applies.

Signal strategy (what you want):

- Low volume, high clarity

- Each message implies an action or escalation path

- Clear owner and next step

- Strong context and links

Noise strategy (what to avoid):

- High volume “FYI” spam

- Mixed severities in the same channel

- No owner, no next action

- Duplicate messages that erode trust

Three practical ways to reduce noise:

- Severity gating: only SEV-1/SEV-2 create Jira tickets; others are Discord-only

- Suppression windows: prevent repeated messages from the same fingerprint in a short time

- Digesting: bundle low-severity alerts into a periodic summary

Signal and noise are opposites, and your workflow should explicitly choose signal.
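Digesting is the least obvious of the three techniques, so here is a small sketch. It assumes alerts are dictionaries with `service`, `event_type`, and `severity` keys and that `SEV-3` marks the low-severity tier; a scheduler you already run would call it once per digest period.

```python
from collections import Counter


def build_digest(alerts: list) -> str:
    """Bundle low-severity alerts into one summary, one line per service and event type."""
    counts = Counter(
        (a["service"], a["event_type"]) for a in alerts if a["severity"] == "SEV-3"
    )
    lines = [f"{service}: {event_type} x{n}" for (service, event_type), n in sorted(counts.items())]
    return "\n".join(lines)
```

An empty string means there is nothing to post, so the digest channel stays quiet on a clean day.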

What escalation patterns can you add (mentions, on-call rotations, severity tiers, incident channels)?

There are 4 main escalation patterns you can add—role mentions, tiered channels, incident threads, and escalation timers—based on how quickly you need a human to respond.

Then, to make the escalation reliable, your Jira ticket and Discord message must reflect the same severity logic.

Pattern 1: Role mentions

- @devops-oncall for SEV-1

- @service-owners for SEV-2

- No mentions for SEV-3 (digest only)

Pattern 2: Tiered channels

- #devops-incidents (SEV-1 only)

- #service-alerts-payments (service-specific)

- #build-failures (engineering productivity)

Pattern 3: Incident threads or dedicated incident channels

- One Discord thread per incident (keeps discussion attached)

- Auto-create channel for SEV-1 with naming convention: incident-YYYYMMDD-payments

Pattern 4: Escalation timers

- If not acknowledged in 10 minutes, mention next tier

- If not mitigated in 30 minutes, post to leadership channel (use carefully)
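Escalation timers come down to comparing elapsed time against thresholds. The sketch below uses the 10 and 30 minute values from Pattern 4; the target names are hypothetical, and acknowledgement state would come from wherever you track it (a Jira status or a bot command, for example).

```python
from datetime import timedelta

ACK_DEADLINE = timedelta(minutes=10)
MITIGATION_DEADLINE = timedelta(minutes=30)


def escalation_targets(elapsed: timedelta, acknowledged: bool, mitigated: bool) -> list:
    """Return who should be notified next, given time since the alert fired."""
    targets = []
    if not acknowledged and elapsed >= ACK_DEADLINE:
        targets.append("next-tier")
    if not mitigated and elapsed >= MITIGATION_DEADLINE:
        targets.append("leadership-channel")
    return targets
```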

This is the same “support triage” logic teams use in other ecosystems, such as a Freshdesk ticket to Linear task to Google Chat support triage flow, where the system escalates the right work to the right collaboration surface based on urgency.

What does idempotency mean for webhook-based alerts, and how do you prevent duplicate Jira tickets?

Idempotency means the same event can be processed multiple times without creating multiple Jira tickets, and you prevent duplicates by generating a stable fingerprint and making “create vs update” deterministic.

Next, remember that deliveries can be repeated (manual or scripted redelivery, plus retries inside your relay or workflow tool), so duplicates are something your handling must absorb rather than a platform bug.

How to implement idempotency (practical pattern):

- Create an event fingerprint. Example: service + environment + event_type + primary_identifier

  - service: repo or component

  - environment: prod/staging

  - event_type: ci_failed / deploy_failed / security_high

  - primary_identifier: workflow run id or deployment id

- Store fingerprint → Jira issue mapping

  - If fingerprint exists, update the existing issue (comment, status, fields)

  - If fingerprint does not exist, create a new issue and store the mapping

- Add a suppression window

  - If the same fingerprint fires repeatedly, update once and aggregate counts

How you make this visible in Discord:

- Post a message like “Updated existing incident ticket DEVOPS-1234 (repeat #5)”

- Include the count and last-seen timestamp

This single pattern often eliminates the majority of alert fatigue caused by duplicate tickets.
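The pattern can be sketched in a few lines. The in-memory dictionary stands in for whatever durable store you use (a database table or key-value store), since a process restart would otherwise forget every mapping; `TicketStore` and the `create_issue` callback are hypothetical names.

```python
import hashlib


def fingerprint(service: str, environment: str, event_type: str, primary_identifier: str) -> str:
    """Build a stable key from the four fingerprint parts."""
    raw = "|".join([service, environment, event_type, primary_identifier])
    return hashlib.sha256(raw.encode()).hexdigest()


class TicketStore:
    """Maps fingerprints to Jira issue keys; in-memory for illustration only."""

    def __init__(self):
        self._by_fingerprint = {}

    def process(self, fp: str, create_issue) -> str:
        entry = self._by_fingerprint.get(fp)
        if entry is None:
            # First time this fingerprint is seen: create once and remember the key.
            entry = {"issue_key": create_issue(), "repeats": 0}
            self._by_fingerprint[fp] = entry
            return f"Created {entry['issue_key']}"
        entry["repeats"] += 1
        return f"Updated existing incident ticket {entry['issue_key']} (repeat #{entry['repeats']})"
```

Processing the same event twice calls `create_issue` only once, which is the property that keeps redelivered webhooks from producing duplicate tickets.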

What security and compliance controls matter (least privilege, secret rotation, audit logs, PII redaction)?

The controls that matter most are least privilege, secret rotation, auditability, and data minimization because alert payloads can leak sensitive identifiers and automation tokens can become high-impact credentials.

Besides, security is also a trust mechanism: if responders don’t trust what they see, they won’t act quickly.

Least privilege checklist

- Separate tokens per environment (dev vs prod)

- Separate Discord webhooks per channel purpose

- Jira permissions limited to specific projects/work item types

Secret rotation and ownership

- Rotation schedule (e.g., quarterly) or triggered rotation after staff changes

- Clear owner for each integration credential

- Emergency revoke plan (documented)

Audit logs

- Log every ticket create/update with correlation ID

- Log every Discord post (timestamp, channel, fingerprint)

- Preserve enough to debug, not enough to expose secrets

PII and sensitive data redaction

- Strip user emails unless required

- Avoid dumping raw logs into Discord; link to secure log storage instead

- Use “error signatures” rather than full stack traces in chat
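A minimal redaction sketch for chat-bound text follows. The email pattern is deliberately simple and will not catch every address format, and taking the first non-empty line as the "error signature" is an assumption about how your traces are laid out (Python tracebacks, for instance, put the exception on the last line).

```python
import re

# Simple pattern for common addresses; not a full RFC 5322 matcher.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL.sub("[redacted-email]", text)


def error_signature(stack_trace: str, max_len: int = 200) -> str:
    """Return a short, redacted signature: the first non-empty line of the trace."""
    lines = [line.strip() for line in stack_trace.splitlines() if line.strip()]
    if not lines:
        return ""
    return redact_emails(lines[0])[:max_len]
```

Only the signature goes to Discord; the full trace stays in log storage and is reached through a link.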

If your organization operates regulated systems, treat this workflow as production software—because it is.