If you want to connect Google Docs to PagerDuty, the fastest path is a no-code automation that turns a clear Docs signal (like a status change, approval comment, or structured update) into a PagerDuty event or incident with the right context attached, so nobody has to copy links, send messages, or page responders by hand. (zapier.com)

Next, decide what “incident alerts” should mean for your on-call team: which document activities count as valid triggers, which PagerDuty actions are safe to automate, and how to keep constant edits from creating noise. (support.pagerduty.com)

Teams also need to compare options, from quick no-code setups to more configurable tools or custom code, so they can match the workflow to their governance needs, reliability expectations, and budget. (zapier.com)

Once the integration works, the real win comes from making it dependable through secure permissions, deduplication rules, and monitoring, so the workflow helps responders instead of paging them for document churn.

What does it mean to connect Google Docs to PagerDuty for incident alerts?

Connecting Google Docs to PagerDuty means linking a document-based signal to an on-call response action through an automation workflow, so the right responder gets the right alert with supporting context attached—without relying on manual copying, messaging, or paging.

To better understand this connection, start by separating “where the signal happens” from “where the response happens,” because that distinction determines how you design reliable triggers.

In practice, the connection is a workflow with three layers:

- Signal layer (Google Docs): You define what counts as an operationally meaningful update. A meaningful update is not “someone typed a sentence.” It is a discrete change that matters to incident response: an approval comment, a template field changing from “Draft” to “Ready,” a checklist completion, or a postmortem section marked “Final.”

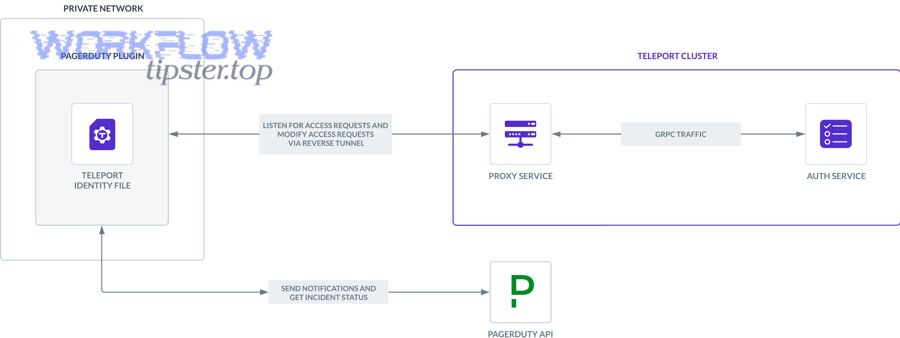

- Workflow layer (automation): A no-code platform watches for the signal, applies conditions, and formats a payload. This is where you add guardrails like “only run when the document is in this folder,” “only run when the editor is in the incident commander group,” or “only run if the text contains SEV-1.”

- Response layer (PagerDuty): The workflow creates an event/alert or creates an incident (depending on the action you choose) and routes it to the correct service and escalation policy. PagerDuty then turns that signal into on-call notifications and incident workflow steps. (support.pagerduty.com)

The “No-Code, Not Manual” principle matters because manual escalation is slow and inconsistent. People paste incomplete links, forget to include severity, and lose the trail of what changed. A well-designed automation does the opposite: it creates a consistent incident record and always includes the context that responders need in the first minute.

Here is the mental model that keeps terminology consistent throughout your setup:

- “Docs signal” = the specific event you treat as meaningful (status, approval, checklist, comment keyword).

- “Incident alert” = a routed PagerDuty event/alert or incident that can notify responders.

- “Context injection” = the information your workflow attaches (doc link, summary, owner, severity hint, timestamps).

If you build around those three terms, your workflow reads like a runbook: “When the docs signal happens, create an incident alert with context injection.”
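The three terms map onto a small data model. Below is a minimal Python sketch; the class and function names (`DocsSignal`, `ContextInjection`, `to_incident_alert`) are hypothetical, and the returned dict only loosely mirrors the summary/source/custom-details shape of a PagerDuty event rather than reproducing any platform's exact schema.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class DocsSignal:
    """The specific Docs event you treat as meaningful (hypothetical model)."""
    doc_id: str
    kind: str           # e.g. "moved_to_ready" or "approval_comment"
    severity_hint: str  # e.g. "SEV1"


@dataclass(frozen=True)
class ContextInjection:
    """What the workflow attaches so responders need no extra searching."""
    doc_link: str
    summary: str
    owner: str
    timestamp: str  # ISO 8601 string


def to_incident_alert(signal: DocsSignal, ctx: ContextInjection) -> dict:
    """When the docs signal happens, create an incident alert with context injection."""
    return {
        "summary": f"[{signal.severity_hint}] {ctx.summary}",
        "source": f"google-docs:{signal.doc_id}",
        "custom_details": {"signal": signal.kind, **asdict(ctx)},
    }
```

Keeping the three concepts as separate types makes it obvious during review which part of a change affects whether someone gets paged and which part only affects what they see.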

Can Google Docs actually trigger PagerDuty incidents or alerts?

Yes—Google Docs can trigger PagerDuty incidents or alerts, because (1) automation platforms can treat Google Docs/Drive activity as a trigger, (2) PagerDuty accepts incoming events/incidents via integrations and APIs, and (3) those actions can be routed to on-call schedules without manual steps. (zapier.com)

Next, the important question is not “can it be done,” but “should every docs change page someone,” because your answer determines whether this workflow improves response or creates alert fatigue.

Here’s the practical reality: Google Docs was built for collaboration, not alerting. That means the raw activity stream is often too noisy for paging. Your job is to create a deliberate signal inside the document.

Which Google Docs events can act as reliable triggers?

A reliable trigger is a discrete event that represents a stable state change, not continuous editing. In many implementations, you get more reliability by triggering off Drive-level events around the document rather than the granular content edits.

Common reliable trigger patterns include:

- New document created in a controlled folder: Useful for “new incident doc created” or “new postmortem doc created.”

- File moved into an “Approved/Ready” folder: Folder movement is a strong signal because it reflects intent, not typing.

- A comment keyword or approval phrase: For example: “APPROVED,” “SEV2 READY,” “PAGE ONCALL.”

- Template field change (status field): If your org uses structured headings like “Status: Draft/Ready/Final,” you can treat “Ready” as a trigger.

Unreliable trigger patterns include:

- Any edit in the doc: Too frequent, too ambiguous.

- Every comment: Unless you filter by keyword, this becomes notification spam.

- Real-time collaborator activity: Great for collaboration, poor for on-call.

The best “docs signal” is one that a responder can explain in one sentence: “We only page when the incident doc is moved to Ready-for-Escalation.”
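A fail-closed allowlist is one way to encode the reliable/unreliable split. This Python sketch assumes your automation platform reports a string event type; the type names below are placeholders, not real Zapier, n8n, or Google Drive event identifiers.

```python
# Placeholder event-type names; map them to whatever your platform actually emits.
RELIABLE_TRIGGERS = {
    "doc_created_in_folder",
    "doc_moved_to_folder",
    "comment_with_keyword",
    "status_field_changed",
}
UNRELIABLE_TRIGGERS = {"any_edit", "any_comment", "collaborator_activity"}


def classify_trigger(event_type: str) -> str:
    """Label an event type as reliable, unreliable, or unknown."""
    if event_type in RELIABLE_TRIGGERS:
        return "reliable"
    if event_type in UNRELIABLE_TRIGGERS:
        return "unreliable"
    return "unknown"


def may_page(event_type: str) -> bool:
    """Fail closed: only known-reliable triggers may reach the paging path."""
    return classify_trigger(event_type) == "reliable"
```

Treating unknown event types as non-pageable means a platform update that introduces a new event type cannot silently start paging people.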

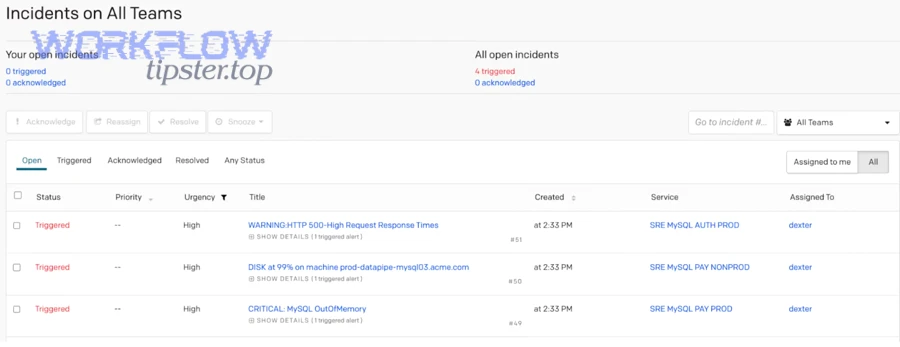

Which PagerDuty actions map best to documentation signals?

Documentation signals are strongest when they enrich incident response, not when they attempt to replace incident judgment. That means the safest mappings are actions that add clarity and coordination.

High-safety mappings:

- Create an event/alert that can deduplicate: Best when you want PagerDuty to group noise and still keep history.

- Create an incident with a clear title and link: Best when the doc is the authoritative “incident record.”

- Add a note to an existing incident: Best when the doc is evolving (status updates, mitigation steps).

- Add responders or notify a team: Best when the doc indicates a verified escalation.

Higher-risk mappings (use carefully):

- Auto-acknowledge: Can hide real problems if the wrong trigger fires.

- Auto-resolve: Usually inappropriate unless the upstream system is authoritative.

PagerDuty’s own guidance distinguishes alerts from incidents and explains how they relate in incident response. That distinction is why notes, enrichment, and routing typically serve real teams better than auto-resolve: they add information to an incident without deciding its outcome. (support.pagerduty.com)
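If the workflow talks to PagerDuty through the Events API v2, the `event_action` field takes one of `trigger`, `acknowledge`, or `resolve`. A minimal Python guard, assuming a hypothetical `human_approved` flag that your workflow sets only after an explicit human step, lets Docs-driven automation trigger freely but refuses state changes without that approval.

```python
# Events API v2 event_action values.
VALID_EVENT_ACTIONS = {"trigger", "acknowledge", "resolve"}
# These change incident state, so a Docs signal alone must not send them.
STATE_CHANGING_ACTIONS = {"acknowledge", "resolve"}


class UnsafeActionError(Exception):
    """Raised when automation attempts a state change without human approval."""


def check_event_action(event_action: str, human_approved: bool = False) -> str:
    """Return the action if it is safe to send; raise otherwise."""
    if event_action not in VALID_EVENT_ACTIONS:
        raise ValueError(f"unknown event_action: {event_action!r}")
    if event_action in STATE_CHANGING_ACTIONS and not human_approved:
        raise UnsafeActionError(f"{event_action!r} from a Docs signal needs human approval")
    return event_action
```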

How do you map triggers to actions to reduce alert fatigue?

You reduce alert fatigue by forcing your workflow to answer three questions before it sends anything: (1) Is this signal intentional? (2) Is this signal important enough to page? (3) Is this signal already represented?

A practical mapping approach looks like this:

- Trigger: “Doc moved to ‘Ready for On-Call’ folder”

- Condition: “Doc contains ‘SEV1’ or ‘SEV2’ in the header”

- Action: “Create incident (or event) with title + doc link + owner + last-change summary”

- Guardrail: “Do not create a new incident if a dedup key exists for this doc ID in the last 60 minutes”
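The four-part rule translates almost line for line into code. This Python sketch assumes the automation platform hands you an event dict and the doc's header text; the folder name, the event shape, and the `evaluate_rule` helper are hypothetical, and the in-memory `last_sent` dict stands in for whatever persistent store your platform offers.

```python
import re
from datetime import datetime, timedelta, timezone
from typing import Optional

READY_FOLDER = "Ready for On-Call"           # hypothetical folder name
SEVERITY_PATTERN = re.compile(r"\bSEV[12]\b")
COOLDOWN = timedelta(minutes=60)


def evaluate_rule(event: dict, header: str, last_sent: dict, now: datetime) -> Optional[dict]:
    """Return an incident request if the rule matches, else None.

    event: assumed keys "type", "doc_id", "folder", "title", "link", "owner", "change_summary".
    last_sent: doc_id -> datetime of the last incident created for that doc.
    """
    # Trigger: the doc was moved into the ready folder.
    if event["type"] != "moved_to_folder" or event["folder"] != READY_FOLDER:
        return None
    # Condition: the header carries SEV1 or SEV2.
    match = SEVERITY_PATTERN.search(header)
    if not match:
        return None
    # Guardrail: skip if this doc already produced an incident inside the window.
    previous = last_sent.get(event["doc_id"])
    if previous is not None and now - previous < COOLDOWN:
        return None
    last_sent[event["doc_id"]] = now
    # Action: title + doc link + owner + last-change summary.
    return {
        "title": f"[{match.group(0)}] {event['title']}",
        "doc_link": event["link"],
        "owner": event["owner"],
        "summary": event["change_summary"],
    }
```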

To make this concrete, the table below shows common Docs signals and the safest PagerDuty response actions they should map to.

| Docs signal (trigger) | Best PagerDuty action | Why it works for on-call |
|---|---|---|
| New incident doc created from template | Create event/alert or incident | Establishes a consistent incident record early |
| Doc moved to “Ready for Escalation” | Create incident | Intentional state change reduces noise |
| “APPROVED” comment added by IC | Add responders / notify team | Human verification before escalation |
| Mitigation section updated during active incident | Add note to incident | Keeps timeline and context synchronized |
| Postmortem marked “Final” | Create follow-up task/alert (non-paging) | Drives closure without waking someone up |

What Google Docs triggers and PagerDuty actions should on-call teams use?

On-call teams should rely on four types of Google Docs triggers and PagerDuty actions: creation, state change, human-verified signals, and enrichment updates. All four meet one criterion: the signal represents a discrete operational decision rather than ongoing collaboration.

To illustrate what “discrete operational decision” means, compare “editing a paragraph” with “moving the doc to Ready for Escalation.” The first is activity; the second is intent.

Which trigger types should on-call teams standardize on?

Use reliable triggers that are naturally “chunky” and intentional:

- Creation triggers: New doc created from a template in a controlled folder.

- Lifecycle triggers: Doc moved into a specific folder (“Ready,” “Approved,” “Final”).

- Approval triggers: Specific keywords in a comment from a defined role (IC, SRE lead).

- Checklist triggers: A section or checkbox-like marker that indicates completion.

If your team already standardizes incident documentation, the most reliable triggers come from those standards: template names, folder structures, and role-based approvals.
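Approval triggers are easy to get subtly wrong, because both the keyword and the author matter. A minimal Python sketch, assuming your platform can resolve a commenter to a role (for example through group membership); the role names are hypothetical, and the keywords are the examples used earlier in this article.

```python
import re

# Exact, uppercase approval phrases; case-sensitive on purpose.
APPROVAL_PATTERN = re.compile(r"\b(APPROVED|SEV2 READY|PAGE ONCALL)\b")
APPROVER_ROLES = {"incident_commander", "sre_lead"}  # hypothetical role names


def is_approval_trigger(comment_text: str, author_role: str) -> bool:
    """True only when an approved role posts an exact approval keyword."""
    if author_role not in APPROVER_ROLES:
        return False
    return APPROVAL_PATTERN.search(comment_text) is not None
```

Because matching is case-sensitive, a casual “looks approved to me” does not page anyone.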

How should you rank PagerDuty actions by sensitivity?

Use PagerDuty actions that route, enrich, or coordinate, rather than actions that attempt to finalize incident status automatically.

Recommended action hierarchy (from safest to most sensitive):

- Add context (notes, links, tags)

- Notify the right team (route to a service/escalation policy)

- Create an alert/event (deduplicate-friendly)

- Create an incident (paging impact)

- Change incident state (ack/resolve)—only with strict human gating

This hierarchy keeps your automations aligned with the operational goal: help responders decide faster, not decide for them.
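The hierarchy can be enforced as a per-signal ceiling. In this Python sketch, `ActionLevel`, `SIGNAL_CEILING`, and `allowed_action` are hypothetical names: each docs signal may request any action up to its ceiling, unknown signals are clamped to the safest level, and state changes pass only with a human gate.

```python
from enum import IntEnum


class ActionLevel(IntEnum):
    """Ranked from safest (lowest) to most sensitive (highest)."""
    ADD_CONTEXT = 1
    NOTIFY_TEAM = 2
    CREATE_ALERT = 3
    CREATE_INCIDENT = 4
    CHANGE_STATE = 5  # acknowledge / resolve


# Hypothetical ceilings: automation may never exceed these for a given signal.
SIGNAL_CEILING = {
    "doc_edit_during_incident": ActionLevel.ADD_CONTEXT,
    "doc_created_from_template": ActionLevel.CREATE_ALERT,
    "moved_to_ready": ActionLevel.CREATE_INCIDENT,
}


def allowed_action(signal: str, requested: ActionLevel, human_gate: bool = False) -> ActionLevel:
    """Clamp the requested action to what the signal is allowed to do."""
    if requested == ActionLevel.CHANGE_STATE and human_gate:
        return requested
    # Unknown signals fall back to the safest level.
    ceiling = SIGNAL_CEILING.get(signal, ActionLevel.ADD_CONTEXT)
    return min(requested, ceiling)
```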

Which guardrails keep alert fatigue under control?

Treat alert fatigue as an engineering constraint. Every workflow should include a filter, a dedup key, a cooldown window, and a fallback path so the alert stays useful even when enrichment fails.

Alert fatigue is not just annoying: every needless page is an interruption, and interruptions measurably disrupt focus. According to a 2008 study from the Department of Informatics at the University of California, Irvine, people took about 23 minutes on average to resume an interrupted task. (ics.uci.edu)

That finding is exactly why your Docs-to-on-call design must bias toward fewer, better alerts.

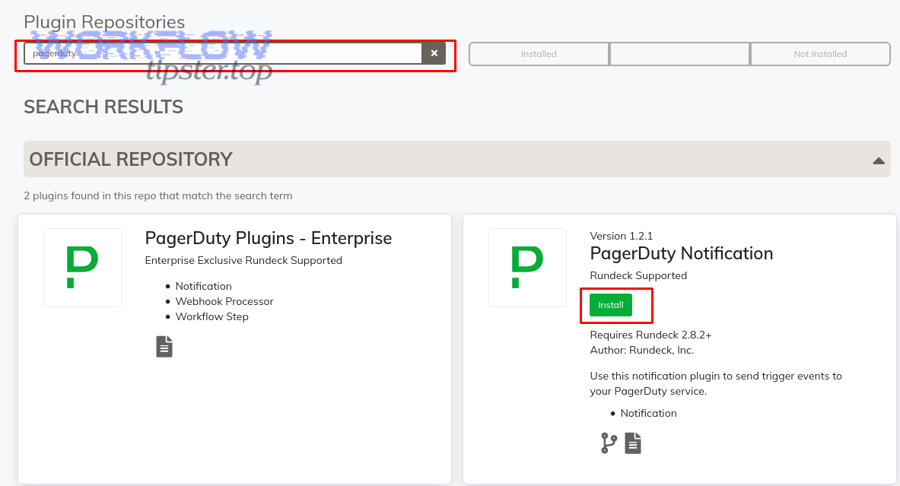

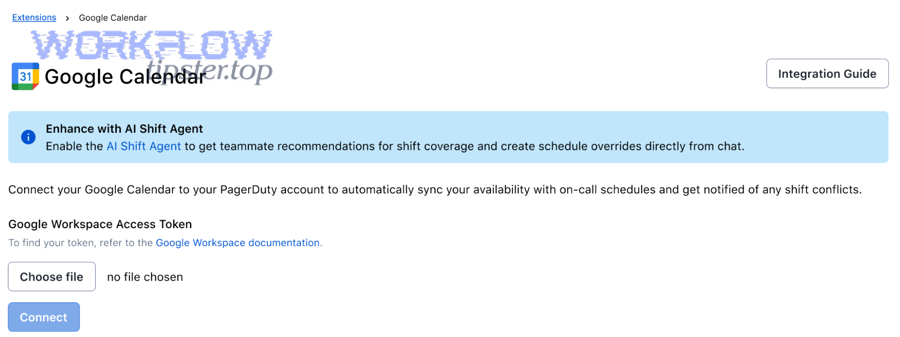

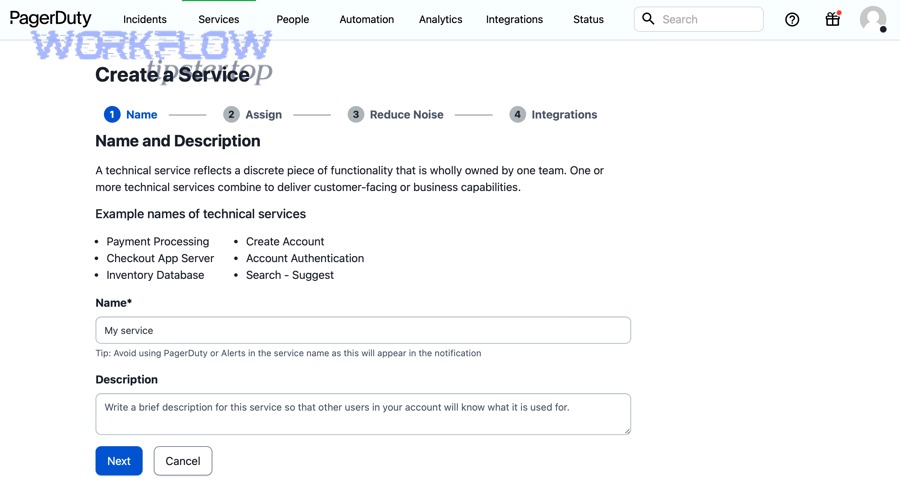

How do you set up a no-code Google Docs → PagerDuty workflow step by step?

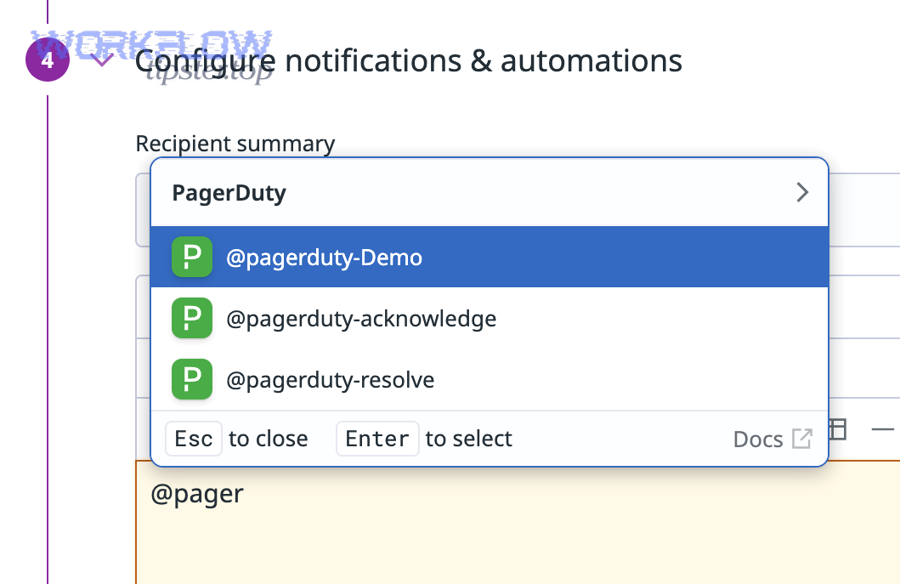

A no-code Google Docs → PagerDuty workflow follows six steps (choose a platform, connect accounts, select a trigger, select an action, add rules, and test) so you can generate consistent incident alerts with the right context in minutes instead of escalating manually. (zapier.com)

Below, the goal is not to describe every tool feature; the goal is to show a setup method that produces a stable workflow you can trust.

What do you need before you start (accounts, permissions, access scope)?

Before you build the workflow, define these four prerequisites:

- A controlled location for incident docs
  - Use a specific folder or shared drive location for incident-related documents.
  - Keep templates in one place and generated incident docs in another, so triggers stay clean.

- A workflow owner (not a single person’s personal account)
  - If a workflow runs under a personal account, it can break when the user changes roles or leaves.
  - Prefer shared ownership or a managed automation account where possible.

- PagerDuty routing targets
  - Know which PagerDuty service (or team) should receive the alert.
  - Decide escalation policy expectations: immediate page vs. notify-only.

- A “docs signal” definition
  - Choose exactly what triggers: created doc, moved folder, approval keyword, or status marker.
  - Write it down as a one-line rule.

If you skip these prerequisites, you typically end up with a workflow that “works” but pages the wrong team, breaks on permission changes, or spams responders.
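Writing the prerequisites down as a checked config is a cheap way to catch problems before the first run. The Python sketch below is illustrative only: the field names, the `group:` owner prefix, and keeping the PagerDuty integration key in an environment variable are assumptions, not requirements of any platform.

```python
import os
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WorkflowConfig:
    """The four prerequisites, recorded before building anything (hypothetical fields)."""
    incident_folder_id: str  # controlled location for generated incident docs
    template_folder_id: str  # templates live elsewhere so they never trigger
    owner: str               # expected to be a shared group, e.g. "group:sre-oncall"
    routing_key_env: str     # name of the env var holding the PagerDuty integration key
    paging_mode: str         # "page" or "notify_only"
    signal_rule: str         # the one-line docs signal definition

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the config looks sane."""
        problems = []
        if self.incident_folder_id == self.template_folder_id:
            problems.append("templates and incident docs share a folder")
        if not self.owner.startswith("group:"):
            problems.append("workflow owner should be a shared group, not a person")
        if self.paging_mode not in {"page", "notify_only"}:
            problems.append(f"unknown paging_mode {self.paging_mode!r}")
        if not os.environ.get(self.routing_key_env):
            problems.append(f"environment variable {self.routing_key_env} is not set")
        if not self.signal_rule.strip() or "\n" in self.signal_rule:
            problems.append("signal_rule must be a single non-empty line")
        return problems
```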

How do you build the trigger → action flow and add conditions?

Build the workflow in this order, because each step reduces ambiguity:

- Select the trigger event
  - Example: “New document created in folder” or “File moved to folder.”
  - Avoid “any update” triggers unless you can filter strongly.

- Select the PagerDuty action
  - Start with “create event/alert” or “create incident,” depending on impact.
  - If you are unsure, start with event/alert to prove your signal quality first.

- Add conditions (filters)
  - Only run when the doc template name matches an incident template.
  - Only run when the doc includes a severity label (SEV1/SEV2).
  - Only run when the doc is in the production folder (not staging).

- Add context injection
  - Include the doc link.
  - Include the doc owner/editor.
  - Include a short summary (“Status changed to Ready for Escalation”).

- Add noise controls
  - Add a cooldown window.
  - Add a dedup key based on doc ID and severity.

- Choose routing
  - Map severity to a PagerDuty service or escalation policy if needed.
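For the routing step, the Events API v2 routes each event by its routing (integration) key, which belongs to a specific service, and accepts a `payload.severity` of `critical`, `error`, `warning`, or `info`. The mapping below is a hypothetical Python example: the SEV labels follow this article's template, and the environment-variable names are placeholders for wherever you store integration keys.

```python
# Hypothetical mapping; the env var names are placeholders, not real keys.
SEVERITY_ROUTES = {
    "SEV1": {"routing_key_env": "PD_KEY_CORE_SERVICE", "pd_severity": "critical"},
    "SEV2": {"routing_key_env": "PD_KEY_CORE_SERVICE", "pd_severity": "error"},
    "SEV3": {"routing_key_env": "PD_KEY_NOTIFY_ONLY", "pd_severity": "warning"},
}
# Anything unrecognized goes to a notify-only service at the lowest severity.
DEFAULT_ROUTE = {"routing_key_env": "PD_KEY_NOTIFY_ONLY", "pd_severity": "info"}


def route_for(severity_label: str) -> dict:
    """Map a doc severity label to a target service and an Events API v2 severity."""
    return SEVERITY_ROUTES.get(severity_label.strip().upper(), DEFAULT_ROUTE)
```

Routing unknown labels to a notify-only service, rather than rejecting them, keeps a typo in the doc from either paging loudly or disappearing silently.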

The same “signal → rules → action” pattern carries over to other automation integrations, such as Airtable to Zendesk or Airtable to Jira: the apps differ, but the logic is the same.

How do you test, launch, and monitor the workflow?

A proper test is not one successful run. A proper test proves that the workflow behaves correctly under normal and abnormal conditions.

Test checklist (minimum):

- Happy path: trigger fires, PagerDuty receives it, incident alert includes doc link and summary.

- Noise test: minor edits do not fire.

- Role test: only approved roles can trigger escalation.

- Duplicate test: repeating the same signal does not create multiple incidents.

- Failure test: remove access to the doc folder and confirm the workflow fails loudly (with logs/alerts).
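Four of the five checks can be automated against a stub before anything reaches PagerDuty. In this Python sketch, `make_workflow` is a hypothetical, simplified workflow (noise filter, role gate, duplicate guard) whose send function is injected so tests can capture its output; the failure test is left out because it needs real folder permissions to revoke.

```python
from typing import Callable


def make_workflow(send: Callable[[dict], None]) -> Callable[[dict], bool]:
    """Build a hypothetical workflow; returns a handler that reports whether it sent."""
    sent_keys = set()

    def handle(event: dict) -> bool:
        if event.get("type") != "moved_to_ready":            # noise filter
            return False
        if event.get("actor_role") != "incident_commander":  # role gate
            return False
        key = f"gdoc-{event['doc_id']}"
        if key in sent_keys:                                  # duplicate guard
            return False
        send({"dedup_key": key, "summary": event["title"], "link": event["link"]})
        sent_keys.add(key)
        return True

    return handle


def run_checklist() -> dict:
    """Run the happy-path, noise, role, and duplicate checks against the stub."""
    outbox = []
    handle = make_workflow(outbox.append)
    base = {"type": "moved_to_ready", "actor_role": "incident_commander",
            "doc_id": "d1", "title": "SEV1 checkout outage", "link": "https://example.com/d1"}
    return {
        "happy_path": handle(base) and outbox[-1]["link"] == base["link"],
        "noise_ignored": not handle({**base, "type": "any_edit", "doc_id": "d2"}),
        "role_enforced": not handle({**base, "actor_role": "viewer", "doc_id": "d3"}),
        "no_duplicates": not handle(base) and len(outbox) == 1,
    }
```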

Launch checklist:

- Start with notify-only (if your team can tolerate it) for 1–2 weeks.

- Measure how many alerts were “useful.”

- Tighten filters before enabling paging.

Monitoring checklist:

- Enable workflow run logs and error notifications.

- Review failures weekly.

- Re-verify permissions monthly, especially in organizations with frequent shared drive changes.

If you’re connecting to PagerDuty via an integration endpoint, PagerDuty’s developer documentation explains how event-based integrations work and what information is typically required to send events successfully. (developer.pagerduty.com)
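For event-based integrations, a trigger event is a JSON POST to the Events API v2 endpoint (`https://events.pagerduty.com/v2/enqueue`) with a `routing_key`, an `event_action`, and a `payload` containing at least `summary`, `source`, and `severity`. Here is a minimal standard-library Python sketch; the helper names are hypothetical, and field limits should be confirmed against the developer documentation before you rely on it.

```python
import json
import urllib.request

EVENTS_V2_URL = "https://events.pagerduty.com/v2/enqueue"


def build_trigger_request(routing_key: str, summary: str, source: str, severity: str,
                          dedup_key: str, doc_link: str) -> urllib.request.Request:
    """Build (but do not send) an Events API v2 trigger event."""
    body = {
        "routing_key": routing_key,
        "event_action": "trigger",
        "dedup_key": dedup_key,
        "payload": {
            "summary": summary[:1024],  # summary is capped at 1024 characters
            "source": source,
            "severity": severity,       # critical, error, warning, or info
        },
        "links": [{"href": doc_link, "text": "Incident doc"}],
    }
    return urllib.request.Request(
        EVENTS_V2_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 10.0) -> dict:
    """Send the request and parse the JSON reply.

    urlopen raises urllib.error.HTTPError for 4xx/5xx responses.
    """
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Building the request separately from sending it keeps the payload testable without network access.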

Which integration method is best: Zapier vs n8n vs Make vs Workato vs custom code?

Zapier wins in setup speed, n8n is best for flexibility and self-host control, Make is optimal for visual multi-step scenarios, Workato is strongest for enterprise governance, and custom code is best when you need full control over security, idempotency, and complex logic. (zapier.com)

Next, choose based on your team’s reality, not the tool’s marketing. The “best” method is the one that stays reliable at 2 a.m. and remains maintainable after org changes.

Here are the decision criteria that actually matter for on-call integrations:

- Signal quality tools (filters, conditions, dedup): how easily you can prevent noise.

- Reliability controls (retries, logging, alerting): how quickly you can diagnose and recover.

- Governance (access, audit logs, change control): how safely you can manage it at scale.

- Total cost (licenses + maintenance): including the cost of broken workflows.

How do these options compare on setup speed, flexibility, and total cost?

Zapier: fastest to launch and easiest for non-technical teams; great for proving value quickly. It is ideal when you need a clean, minimal workflow: one trigger, one action, strong filtering.

n8n: stronger when you need multi-step logic, custom data shaping, or a controlled environment. It shines when your workflow logic needs to grow (enrichment, branching, conditional routing).

Make: strong for multi-step visual orchestration and scenario-style automation; good when you want richer transformations without writing code.

Workato: a fit for organizations that need stronger enterprise-grade governance and centralized management.

Custom code: the best option for deep control and unique constraints, but you pay with engineering time, ongoing maintenance, and operational ownership.

A pragmatic strategy is to start with no-code to validate your “docs signal,” then move to more configurable tooling or code only when the workflow proves valuable and needs tighter controls.

Which option is best for on-call governance and compliance?

Governance is about preventing silent failure and preventing unauthorized paging.

In higher-governance environments, prioritize:

- Role-based access to edit workflows

- Audit logs for workflow changes and execution history

- Separation of environments (dev/test/prod)

- Least-privilege credentials and periodic access reviews

That is why enterprise-grade platforms often win when compliance is strict. But even if you stay no-code, you can still implement governance by using shared ownership, carefully scoped permissions, and a documented change process.

If your on-call process also depends on meetings or coordination, you can extend the same signal-based design to adjacent workflows, such as Google Docs to Google Meet scheduling actions. Keep the same guardrails so collaboration events do not become paging events.

How do you make Google Docs → PagerDuty automation secure and reliable?

Making Google Docs → PagerDuty automation secure and reliable requires three pillars—least-privilege access, noise controls (dedup + cooldown), and observable operations (logs + failure alerts)—so your workflow pages the right people for the right reasons and never fails silently.

Treat the workflow like production infrastructure: if it pages people at night, it is operationally critical.

What permissions and authentication practices reduce risk?

Use these practices to prevent security and continuity failures:

- Avoid personal-account ownership: If a workflow is owned by one person, it can break with offboarding.

- Scope access to the minimum: The automation only needs access to the specific folders or documents it monitors.

- Limit PagerDuty credentials: Use the minimum permissions required for the action you are taking.

- Document credential rotation: If keys rotate, your workflow must be updated in a controlled way.

If you integrate via APIs, PagerDuty documents multiple integration approaches (including event-based integrations and incident creation), which helps you choose the right level of access and the right method for your workflow. (developer.pagerduty.com)

How do you prevent duplicate incidents and alert spam?

Duplicates usually come from two places: noisy triggers and retry behavior. You stop them with design rules:

- Use a deduplication key
  - Base it on doc ID + severity + environment.
  - Use the same key for repeated updates that should “group” rather than “re-page.”

- Add a cooldown window
  - Example: do not create new incidents from the same doc for 30–60 minutes.

- Require intentional signals
  - Folder moves or approval keywords beat raw edits.

- Separate paging from documentation updates
  - Use “notes” for frequent updates.
  - Use “incident creation” only for verified escalation points.
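A dedup key only works if it is deterministic. This Python sketch (the `dedup_key` helper and `gdoc-` prefix are hypothetical) normalizes the three inputs and hashes them, so the same doc, severity, and environment always produce the same key. With the Events API v2, another `trigger` carrying the same `dedup_key` while the alert is still open is grouped into that alert instead of opening a new one.

```python
import hashlib


def dedup_key(doc_id: str, severity: str, environment: str) -> str:
    """Stable key: the same doc/severity/environment always maps to the same value."""
    # Normalize case so "sev1"/"SEV1" and "Prod"/"prod" do not create separate alerts.
    raw = f"{doc_id}|{severity.upper()}|{environment.lower()}"
    return "gdoc-" + hashlib.sha256(raw.encode("utf-8")).hexdigest()[:32]
```

Hashing is optional; it simply keeps the key short and free of whatever characters appear in doc IDs or labels.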

If you build these rules early, you avoid the worst failure mode: a document with multiple collaborators unintentionally triggering repeated pages.

What should you log and review to keep workflows healthy over time?

Reliability improves when you treat workflow logs like application logs. At minimum, track:

- Workflow runs: timestamp, trigger event, and outcome.

- Failure reasons: permission errors, timeouts, payload failures.

- PagerDuty response: accepted vs rejected requests, incident/event IDs.

- Change history: who changed the workflow, when, and why.
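Structured, one-line-per-run logs make the weekly review much faster. A minimal Python sketch using the standard `logging` and `json` modules; the field names are hypothetical, and change history is left to your platform’s audit log or version control rather than the run log.

```python
import json
import logging
from datetime import datetime, timezone
from typing import Optional

logger = logging.getLogger("gdocs_pagerduty")


def log_run(trigger_event: str, outcome: str, failure_reason: Optional[str] = None,
            pd_status: Optional[int] = None, pd_dedup_key: Optional[str] = None) -> str:
    """Emit one structured JSON line per workflow run and return it."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "trigger_event": trigger_event,
        "outcome": outcome,                # "sent", "skipped", or "failed"
        "failure_reason": failure_reason,  # e.g. "permission_denied", "timeout"
        "pagerduty_status": pd_status,     # HTTP status returned by PagerDuty
        "pagerduty_dedup_key": pd_dedup_key,
    }
    line = json.dumps(record, sort_keys=True)
    # Failures go out at ERROR level so they can drive alerting on the workflow itself.
    if outcome == "failed":
        logger.error(line)
    else:
        logger.info(line)
    return line
```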

Operationally, the best cadence is:

- Weekly: review failures and false positives.

- Monthly: review permissions and ownership.

- Quarterly: revisit triggers/actions as your on-call process evolves.

If your organization also runs customer support automations, you can reuse the same reliability discipline in workflows like Airtable to Zendesk, because the underlying problem is identical: you are building trust in an automated handoff.

What advanced workflows can you build (and when should you avoid automation) for Google Docs → PagerDuty?

Zapier-style workflows win for quick, standardized patterns, richer builders win for multi-step enrichment, and manual gates are optimal when the decision must remain human—so the best advanced workflow is the one that adds context and coordination without removing judgment from on-call responders.

Advanced workflows are also where the details matter: dedup strategies tied to doc structure, bidirectional status patterns, and explicit “do not automate” boundaries.

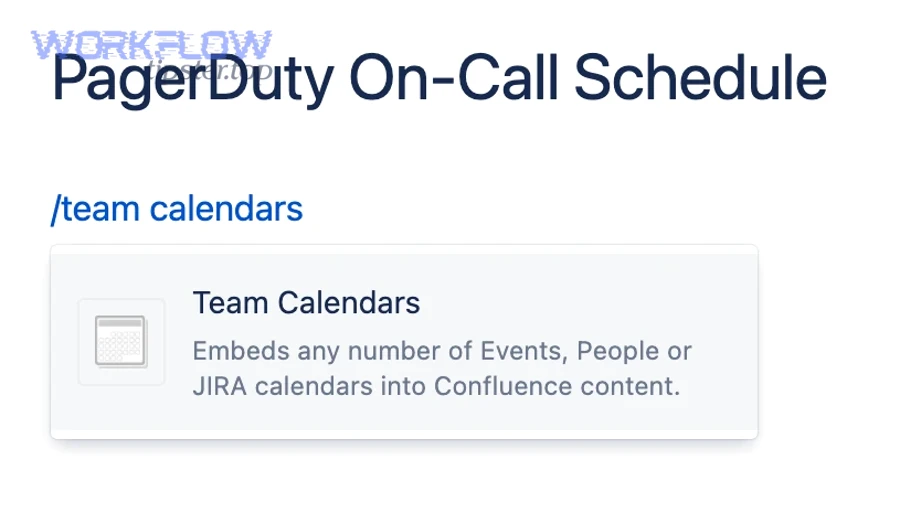

How can you turn a Google Doc into an incident runbook that stays synced with incidents?

You can turn a Google Doc into a synced incident runbook by using a strict template (fixed sections + status fields) and automating two actions: (1) create or update the PagerDuty incident with the doc link and key fields, and (2) append major incident milestones back into the document as a timeline.

A runbook-style doc works when it has consistent structure:

- Header fields: Severity, Service, Environment, Incident Commander, Start time

- Impact summary: One paragraph, updated as facts change

- Mitigation steps: Bullet list with owner + timestamp

- Links: dashboards, logs, deploys, tickets

- Decision log: what you tried, what worked, what you rolled back

When the doc structure is stable, automation becomes safer because it can reliably “read” and “write” expected fields.
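Because the header fields are fixed, reading them is a simple parse. This Python sketch assumes your platform has already exported the doc body as plain text with one `Name: value` line per header field; `read_header` and `timeline_entry` are hypothetical helpers, and writing the timeline line back into the doc is left to your platform’s Docs action.

```python
import re

HEADER_FIELDS = ("Severity", "Service", "Environment", "Incident Commander", "Start time")
# Field name is letters and spaces only, so the first colon ends it.
FIELD_LINE = re.compile(r"^(?P<name>[A-Za-z ]+):\s*(?P<value>.+)$")


def read_header(doc_text: str) -> dict:
    """Extract the fixed header fields; missing fields come back as None."""
    found = {}
    for line in doc_text.splitlines():
        m = FIELD_LINE.match(line.strip())
        if m and m.group("name") in HEADER_FIELDS:
            # Keep the first occurrence so later body text cannot override the header.
            found.setdefault(m.group("name"), m.group("value").strip())
    return {name: found.get(name) for name in HEADER_FIELDS}


def timeline_entry(timestamp: str, milestone: str) -> str:
    """Format one line to append to the doc's timeline or decision log."""
    return f"- {timestamp} {milestone}"
```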

How do you enrich incidents with the right doc context (links, sections, owners, severity hints)?

You enrich incidents by injecting context that reduces the responder’s first 5 minutes of searching. The best enrichment package includes a direct link, the most relevant section, owner and IC, a severity hint, a one-sentence change summary, and a last-updated timestamp.

A simple enrichment checklist you can use before enabling paging:

- Does the incident alert show the doc link immediately?

- Can the responder identify severity within 10 seconds?

- Can the responder identify the owning team and IC quickly?

- Does the alert avoid dumping the entire doc and instead provide a clean summary?

When enrichment is strong, automation feels helpful. When enrichment is weak, automation feels like spam.
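The enrichment package can be assembled as a compact event body. In this Python sketch, `build_enriched_event` is hypothetical; it follows the Events API v2 shape (`payload.summary`, `payload.source`, `payload.severity`, `payload.custom_details`, and a top-level `links` list) but omits `routing_key` and `dedup_key`, which you would add before sending. The optional section anchor assumes you link to a heading inside the doc.

```python
from typing import Optional


def build_enriched_event(doc_link: str, owner: str, incident_commander: str,
                         severity_hint: str, pd_severity: str, change_summary: str,
                         last_updated: str, section_anchor: Optional[str] = None) -> dict:
    """Summary first, details in custom_details, and never the whole doc body."""
    href = f"{doc_link}#{section_anchor}" if section_anchor else doc_link
    return {
        "event_action": "trigger",
        "payload": {
            # Severity hint leads the summary so responders see it within seconds.
            "summary": f"[{severity_hint}] {change_summary}"[:1024],
            "source": "google-docs",
            "severity": pd_severity,  # critical, error, warning, or info
            "custom_details": {
                "owner": owner,
                "incident_commander": incident_commander,
                "last_updated": last_updated,
            },
        },
        "links": [{"href": href, "text": "Incident doc (relevant section)"}],
    }
```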

What are the most common failure modes (rate limits, permission drift, missing triggers), and how do you debug them?

The most common failure modes are predictable, which means you can design a clear debug playbook around permission drift, missing triggers, duplicates, and payload failures.

- Permission drift
  - Symptom: workflow suddenly fails after months of success
  - Cause: doc moved, shared drive policy changed, owner removed
  - Fix: re-scope access to the new folder, use shared ownership, revalidate permissions monthly

- Missing triggers
  - Symptom: doc updates happen, but no alert is sent
  - Cause: trigger was based on an event that does not fire for certain edits
  - Fix: switch to a more “intent-based” trigger (folder move, approval keyword)

- Duplicate alerts
  - Symptom: multiple incidents for one doc
  - Cause: retries + no dedup key; multiple collaborators triggering the same condition
  - Fix: dedup key + cooldown + stronger gating signal

- Payload failures
  - Symptom: workflow runs but PagerDuty rejects the request or doesn’t create the expected output
  - Cause: missing required fields or wrong integration method
  - Fix: validate required fields and align with PagerDuty’s integration method documentation (developer.pagerduty.com)
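Part of the playbook can run automatically by classifying the HTTP status of each failed call. In this Python sketch, the mapping is an assumption based on common behavior: Google Drive answers 401/403 (and often 404) when access has drifted, while the PagerDuty Events API v2 answers 202 on success, 400 for an invalid event, 429 when rate-limited, and 5xx for server errors. Missing triggers never produce an HTTP error, so they still need the noise and happy-path tests.

```python
from typing import Tuple


def classify_failure(system: str, http_status: int) -> Tuple[str, str]:
    """Map (system, HTTP status) to a (failure mode, next step) pair."""
    if system == "google" and http_status in (401, 403, 404):
        return ("permission_drift", "re-scope access to the current folder and owner")
    if system == "pagerduty":
        if http_status == 202:
            return ("ok", "none")
        if http_status == 400:
            return ("payload_failure",
                    "check routing_key, event_action, and payload summary/source/severity")
        if http_status == 429:
            return ("rate_limited", "retry later with backoff")
        if 500 <= http_status < 600:
            # Reusing the same dedup_key on retry keeps retries from creating duplicates.
            return ("pagerduty_unavailable", "retry with backoff, reusing the same dedup_key")
    return ("unknown", "inspect the workflow run log")
```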

A debugging best practice is to preserve a “safe fallback” action: if enrichment fails, still send a minimal alert containing a working doc link and a clear title.
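A minimal Python sketch of that fallback, assuming enrichment is a callable that may raise; `build_event_with_fallback`, the `gdoc-` dedup prefix, and the default `error` severity are hypothetical choices.

```python
from typing import Callable


def build_event_with_fallback(doc_id: str, doc_link: str, title: str,
                              enrich: Callable[[str], dict]) -> dict:
    """Try full enrichment; if it fails, still return a minimal, valid alert body."""
    event = {
        "event_action": "trigger",
        "dedup_key": f"gdoc-{doc_id}",
        "payload": {"summary": title[:1024], "source": "google-docs", "severity": "error"},
        "links": [{"href": doc_link, "text": "Incident doc"}],
    }
    try:
        details = enrich(doc_id)
    except Exception as exc:  # enrichment is best-effort; the alert must still go out
        event["payload"]["custom_details"] = {"enrichment_error": type(exc).__name__}
        return event
    event["payload"]["custom_details"] = details
    return event
```

Recording the enrichment error type in the alert itself tells responders why context is missing, and gives the weekly failure review something to count.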

When is a manual escalation process better than no-code automation?

Manual escalation is better than no-code automation when (1) compliance requires human verification, (2) the signal is too ambiguous to encode reliably, or (3) the cost of a false page is higher than the cost of a slower escalation.

In short, “No-Code, Not Manual” is not a religion; it is a default. If your incident workflow includes sensitive decisions, a hybrid pattern often wins:

- Automation prepares the package (context, summary, routing suggestion)

- A human approves the page (keyword comment, checkbox, or explicit “PAGE” action)

That hybrid gives you the best of both worlds: responders get high-quality context, and the on-call schedule stays protected from accidental triggers—especially in teams that already manage multiple workflows across systems such as Airtable to Jira, where strong gating is the difference between a helpful automation and a noisy one.