An Airtable webhook 400 Bad Request almost always means your request is malformed or fails validation, so the fastest fix is to verify the JSON body, headers, endpoint, and field schema in a strict order until one mismatch is exposed.

Next, you should interpret the error correctly: 400 is usually a parsing/encoding or payload-shape issue, and the solution is to narrow the failure to one of four buckets (body, headers, URL/method, schema) before you change anything else.

Then, you’ll want practical debugging tactics that work in real tools—Postman, Zapier, Make—or custom code, so you can reproduce the failing request, log what was actually sent, and fix the exact field or formatting mistake.

Finally, once you can reliably fix 400 errors, you can prevent them with lightweight validation, “minimal payload” isolation, and resilient field-mapping habits that reduce future breakage when your Airtable base evolves.

What does “Airtable Webhook 400 Bad Request” mean?

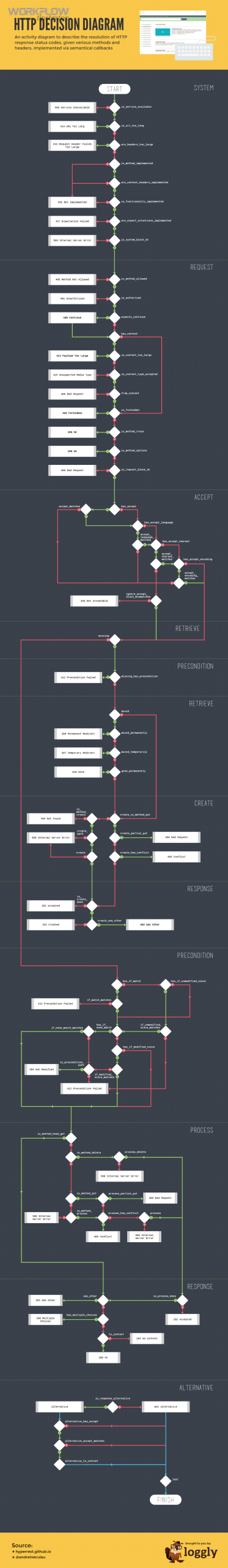

An Airtable Webhook 400 Bad Request is a client-side HTTP error that occurs when Airtable cannot parse or validate your request—most commonly because the encoding or JSON body is not valid or not shaped as the API expects.

To better understand why this matters, you need to treat “400” as a signal that your request is broken before Airtable can even apply business logic, which means debugging starts with what you sent, not what you wanted to send.

Which part is usually “bad”: JSON body, headers, endpoint, or field schema?

There are 4 main “bad request” buckets—JSON body, headers, endpoint/method, and field schema—based on what layer rejects your call first, and you can usually identify the bucket by reproducing the request and reducing it to a minimal payload.

Specifically, each bucket leaves a distinct footprint in how the error appears:

- JSON body bucket: Airtable rejects parsing, often when your body is not valid JSON, has invalid encoding, or is not sent as JSON at all.

- Headers bucket: Airtable receives bytes but cannot interpret them as JSON because Content-Type is wrong, or authorization headers are missing/malformed.

- Endpoint/method bucket: You call the wrong URL path, table identifier, or HTTP method, causing Airtable to interpret the request incorrectly or route it unexpectedly.

- Field schema bucket: The request parses, but one or more fields fail Airtable’s schema expectations (wrong data type, invalid select value structure, malformed linked record array, attachment object shape, etc.).

The rule of thumb is simple: the earlier the layer, the more “global” the failure feels. If Airtable cannot parse the body, no individual field can be blamed yet.

Is 400 Bad Request the same as 401/403/404/422 in Airtable integrations?

No. A 400 indicates malformed request content, 401 indicates missing or invalid authentication, 403 means the caller is authenticated but not allowed, 404 typically means the wrong resource or route, and 422 often points to a well-formed request that fails validation rules.

However, many automation tools wrap errors, so you should compare what Airtable actually returned versus what your tool summarized:

- 400: You usually need to fix JSON formatting, encoding, headers, or payload shape first.

- 401/403: You need to fix token, scopes/permissions, or access to the base/table—this is where “airtable permission denied” commonly appears in user-facing UIs, even if the underlying HTTP code is different.

- 404: You need to fix base/table identifiers, endpoint path, or environment (wrong base, wrong table name, wrong versioned URL).

- 422: You need to fix a specific field’s format or value, because the request is readable but unacceptable.

If you treat every error as a 400 problem, you will chase formatting ghosts while the real issue is permissions, routing, or validation.

Is your JSON payload valid and encoded correctly?

Yes—an Airtable webhook 400 bad request is very often solved by fixing JSON validity and encoding because (1) Airtable can’t parse malformed JSON, (2) invisible encoding issues corrupt the payload, and (3) unescaped characters break otherwise-correct structures.

Then, once you accept that “valid JSON” is a non-negotiable gate, you can debug with a repeatable checklist instead of trial-and-error changes inside your automation tool.

What is the fastest checklist to confirm “valid JSON” before sending?

There are 7 quick checks you can run to confirm valid JSON, covering syntax, encoding, and transport expectations: braces/quotes, commas, data types, escaping, encoding, payload wrapper, and tool mode.

- Check 1: JSON parser validation — paste the payload into a JSON validator so you see the exact character offset of failure.

- Check 2: Double quotes only — keys and string values must use double quotes, not single quotes.

- Check 3: Trailing commas — remove trailing commas in objects/arrays.

- Check 4: Correct primitives — use true/false/null without quotes; keep numbers as numbers unless Airtable expects strings.

- Check 5: Escaping — escape embedded quotes and ensure line breaks are handled correctly in strings.

- Check 6: UTF-8 clean payload — ensure UTF-8 encoding and watch for BOM/invisible characters if payload is generated from files or copied from spreadsheets.

- Check 7: Send as JSON — confirm the request body is sent as raw JSON, not form-encoded or “text” mode.

This checklist works because it matches how JSON parsers fail: first by syntax, then by type/shape, and finally by tool transport.
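Checks 1 and 6 can be automated before anything is sent. The following is a minimal sketch using only Python's standard library; the function name `check_json` and the sample field `Name` are illustrative, not part of any Airtable tooling.

```python
import json

def check_json(raw: bytes) -> list[str]:
    """Return a list of problems found in a raw request body (empty list = OK)."""
    problems = []
    # Check 6: a UTF-8 byte order mark often sneaks in from files or spreadsheets.
    if raw.startswith(b"\xef\xbb\xbf"):
        problems.append("body starts with a UTF-8 byte order mark (BOM)")
        raw = raw[3:]
    try:
        text = raw.decode("utf-8")
    except UnicodeDecodeError as exc:
        return problems + [f"body is not valid UTF-8 at byte {exc.start}"]
    # Check 1: let a real parser report the exact position of a syntax error.
    try:
        json.loads(text)
    except json.JSONDecodeError as exc:
        problems.append(
            f"invalid JSON at line {exc.lineno}, column {exc.colno}: {exc.msg}"
        )
    return problems

print(check_json(b'{"fields": {"Name": "Ada"}}'))   # valid body
print(check_json(b"{'fields': {'Name': 'Ada'}}"))    # single quotes (Check 2)
print(check_json(b'{"fields": {"Name": "Ada",}}'))   # trailing comma (Check 3)
```

An empty list means the body passed the syntax and encoding gates; it says nothing yet about whether the shape matches what the endpoint expects.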

How do you fix the most common invalid JSON payload patterns?

There are 6 common invalid JSON payload patterns—missing commas, mismatched braces, unescaped quotes, wrong array/object shape, incorrect null/boolean usage, and accidental string concatenation—based on what breaks parsing versus what breaks schema expectations.

More specifically, you can fix them with targeted edits that preserve intent while restoring valid syntax:

- Missing comma between fields: add a comma between adjacent key/value pairs to restore object structure.

- Unescaped quotes inside a string: replace a raw " inside a string with \" (or let your serializer escape it) so the string stays one string.

- Line breaks pasted from rich text: ensure your JSON serializer encodes newlines properly instead of embedding raw control characters.

- Wrong wrapper shape: when Airtable expects { "records": [ … ] }, do not send a bare array or a single record object if the endpoint requires a records array.

- String vs array mismatch: linked records and multi-select fields often require arrays, not single strings.

- Null handling: send null intentionally (without quotes) only when the API accepts it; otherwise omit the field entirely to avoid validator rejection.

If you consistently serialize from native objects (instead of manual string building), you eliminate most syntax errors before they reach Airtable.
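To make the difference concrete, here is a small sketch comparing manual string building with serialization from a native object. The field name `Notes` is a hypothetical example.

```python
import json

note = 'She said "ship it"\nthen left.'  # embedded quotes and a raw line break

# Manual string building: the quotes and newline end up unescaped in the body.
manual = '{"records": [{"fields": {"Notes": "' + note + '"}}]}'

# Serializing a native object escapes quotes and newlines automatically.
payload = {"records": [{"fields": {"Notes": note}}]}
serialized = json.dumps(payload)

try:
    json.loads(manual)
    manual_ok = True
except json.JSONDecodeError:
    manual_ok = False

print("manual string parses:", manual_ok)
print("serialized body:", serialized)
```

The serialized version round-trips back to the original text, which is the property you want before the body leaves your code.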

Are you calling the correct Airtable endpoint and HTTP method?

Yes—calling the correct Airtable endpoint and HTTP method prevents 400 bad request because (1) the expected payload shape differs by endpoint, (2) method mismatches change how the server interprets the request, and (3) wrong resource identifiers push valid JSON into an invalid context.

Next, you should treat URL and method as a pair: a correct body sent to the wrong URL is still wrong, and a correct URL called with the wrong method can look like a “bad request” in many automation logs.

Which endpoint should you use for create vs update vs upsert?

Create uses POST to the records endpoint, update uses PATCH for partial changes, and replace uses PUT when you intentionally overwrite a record’s fields according to the API’s rules for the chosen method.

To illustrate how this affects 400 debugging, consider what each action implies for your payload:

- Create: you must send the fields needed for a new record in the expected wrapper; missing required structure often fails immediately.

- Update (PATCH): you send only fields you want to change; sending incompatible fields is the common failure mode.

- Replace (PUT): you risk clearing fields you omit depending on endpoint rules; payload completeness matters more.

Upsert is often implemented by your integration logic (find-or-create) rather than a single “upsert endpoint,” so “400” can show up when your second step sends fields that don’t match the record you found.
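Treating the method and URL as a pair can be sketched as a single helper. This is an illustration under stated assumptions: the URL layout (`https://api.airtable.com/v0/<base>/<table>` with an optional record ID) should be confirmed against the API reference, and the IDs shown are placeholders.

```python
from typing import Optional
from urllib.parse import quote

# Assumed API root; confirm against the current Airtable API reference.
API_ROOT = "https://api.airtable.com/v0"

METHODS = {"create": "POST", "update": "PATCH", "replace": "PUT"}

def plan_request(action: str, base_id: str, table: str,
                 record_id: Optional[str] = None) -> tuple:
    """Return (method, url) for a create, update, or replace call."""
    if action not in METHODS:
        raise ValueError(f"unknown action: {action!r}")
    # Percent-encode each path segment so spaces in table names are safe.
    url = f"{API_ROOT}/{quote(base_id, safe='')}/{quote(table, safe='')}"
    if action != "create":
        if not record_id:
            raise ValueError(f"{action} needs a record ID")
        url += f"/{quote(record_id, safe='')}"
    return METHODS[action], url

print(plan_request("create", "appXXXXXXXX", "Project Tasks"))
print(plan_request("update", "appXXXXXXXX", "Project Tasks", "recXXXXXXXX"))
```

Deriving both values from one place makes it harder for a correct body to be paired with the wrong method or path.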

How can you confirm the request URL is correct in Postman/Zapier/Make?

There are 5 reliable ways to confirm the request URL is correct—copying the raw request, comparing base/table identifiers, validating URL encoding, logging the final resolved URL, and testing with a minimal GET—based on whether the tool uses templating and hidden defaults.

- Method 1: copy the request as cURL (or equivalent) so you see the final URL after variables resolve.

- Method 2: verify the base ID and table name/ID match the base you’re actually editing.

- Method 3: check URL encoding for spaces and special characters in table names or parameters.

- Method 4: log the final URL string in your automation step (especially if you build it dynamically).

- Method 5: run a simple GET against the same base/table to confirm routing before you POST complex bodies.

This methodical approach is the core of practical Airtable troubleshooting because it isolates “wrong destination” from “wrong payload” in minutes.
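Methods 3 and 4 can be combined into a quick check on the final resolved URL. The sketch below is a heuristic, not a complete validator; it assumes your tool marks unresolved variables with double braces and that the path has at least a version, base, and table segment.

```python
from urllib.parse import urlsplit

def url_problems(url: str) -> list[str]:
    """Return a list of likely problems in a fully resolved request URL."""
    problems = []
    parts = urlsplit(url)
    if parts.scheme != "https":
        problems.append("scheme is not https")
    if " " in url:
        problems.append("URL contains an unencoded space")
    # Assumption: unresolved template variables look like {{name}}.
    if "{{" in url or "}}" in url:
        problems.append("URL contains an unresolved template variable")
    segments = [s for s in parts.path.split("/") if s]
    if len(segments) < 3:
        problems.append("path has fewer segments than version/base/table")
    return problems

print(url_problems("https://api.airtable.com/v0/appXXXXXXXX/Project%20Tasks"))
print(url_problems("https://api.airtable.com/v0/{{base_id}}/Project Tasks"))
```

Run it on the URL string you log right before sending, not on the template you typed into the tool.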

Are your headers and authentication set correctly?

Yes—headers and authentication frequently cause Airtable webhook 400 bad request because (1) a wrong Content-Type can make Airtable treat JSON as plain text, (2) malformed Authorization headers can break request processing in tools, and (3) proxy/tool wrappers may transform your body unless headers are explicit.

Besides that, headers are the hidden layer most automation builders skip, so fixing them often feels like “magic” when it’s actually basic HTTP correctness.

Which headers are required and which ones commonly cause 400?

There are 3 headers you should treat as critical—Authorization, Content-Type, and Accept—based on whether Airtable can authenticate you and parse your payload as JSON.

- Authorization: use the expected Bearer token format; missing or malformed tokens lead to auth failures that tools may mislabel.

- Content-Type: set application/json when sending JSON; if it’s application/x-www-form-urlencoded or omitted, the server may not parse JSON correctly.

- Accept: request JSON responses so error details remain consistent across environments.

The most common “header-caused 400” happens when a tool UI shows JSON, but actually sends form fields, or when it injects extra quoting around a JSON block.
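Setting all three headers explicitly looks like the sketch below, which builds a request object with Python's standard library without sending it. The URL, token, and field name are placeholders.

```python
import json
import urllib.request

def build_request(url: str, token: str, payload: dict) -> urllib.request.Request:
    """Build (but do not send) a JSON POST with explicit headers."""
    body = json.dumps(payload).encode("utf-8")  # raw JSON bytes, not form fields
    headers = {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
        "Accept": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")

req = build_request(
    "https://api.airtable.com/v0/appXXXXXXXX/Tasks",  # placeholder URL
    "YOUR_TOKEN",                                     # placeholder token
    {"fields": {"Name": "Ada"}},
)
print(req.get_method(), req.full_url)
# urllib stores header names capitalized as "Content-type".
print(req.get_header("Content-type"))
print(req.data)
```

Inspecting `req.data` shows the exact bytes that would be sent, which is what you compare against what the tool UI displays.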

Is “400” sometimes a tool-wrapped error for auth/config issues?

Sometimes. A tool may report a generic 400 in its error summary even when Airtable returned something else: a true 401/403 is best identified from the raw HTTP status code and response body, and a schema-driven 422 is worth suspecting when the payload is valid JSON but one field is rejected.

You can confirm which case applies by capturing the raw response:

- In Postman: review the exact request/response in the console and compare what was sent with the API specification.

- In Zapier/Make: expand the step details to view the raw request body after mapping and before sending (or replicate with cURL).

- In custom code: log the serialized JSON string, headers, and final URL immediately before the HTTP call.

This is also the point where users often jump to “airtable permission denied,” but the real cause can be a missing header or a token that was never applied to the request step.

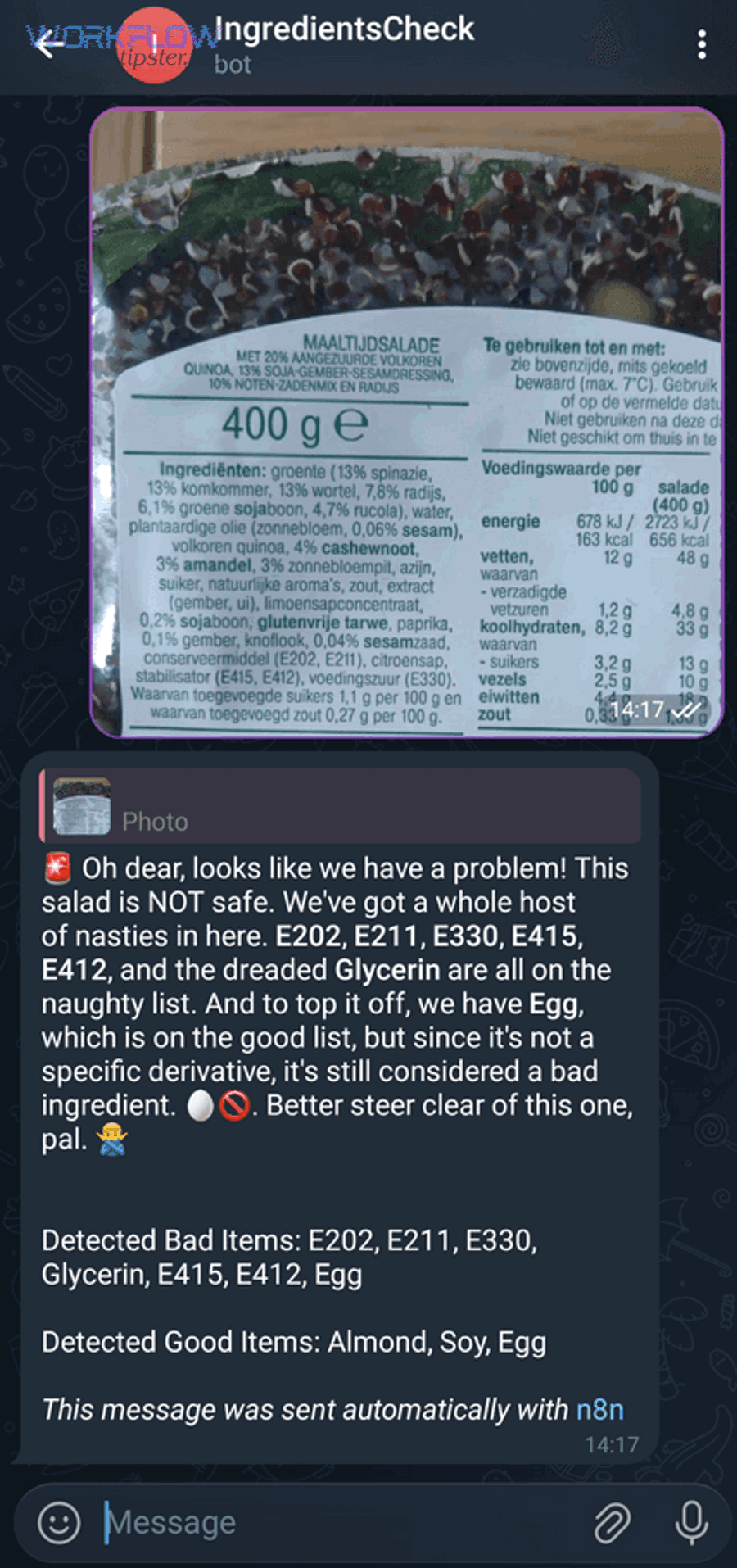

Is your Airtable field mapping compatible with the table schema?

Yes—field mapping compatibility is a top driver of Airtable webhook 400 bad request because (1) Airtable validates each field’s data type, (2) special field types require arrays/objects rather than strings, and (3) renamed fields or changed select options silently break old mappings.

More importantly, schema issues are the hardest to spot by eye, so you need a controlled process that isolates the exact field that fails.

Which Airtable field types most often trigger 400 due to wrong format?

There are 6 Airtable field types that most often trigger 400 errors—linked records, single/multiple select, attachments, dates/times, collaborator/user fields, and formula-driven fields—based on whether the API expects arrays/objects and strict formats.

- Linked records: commonly require an array of record IDs rather than a single ID string.

- Multiple select: often requires an array of option names (or structured objects depending on API expectations), not a comma-separated string.

- Attachments: usually require an array of objects (for example, with a URL) rather than a raw URL string.

- Date/time: typically expects ISO 8601 strings; locale formats like “01/02/2026” can cause validation failures.

- Collaborator fields: may require user IDs/emails in specific shapes depending on configuration and permissions.

- Computed fields (formula/rollup): are not writable; attempts to set them can cause failures that feel like “bad requests.”

When you see a 400 after adding “just one more field,” you should assume this list contains your culprit until proven otherwise.
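The shapes described in the list above can be produced by small coercion helpers. This is a sketch with illustrative function names; the attachment object uses only a `url` key, and the date helper assumes the input is month/day/year, which you should confirm for your data source.

```python
from datetime import datetime

def as_linked_records(value):
    """Wrap a single record ID in a list; pass lists through as lists."""
    return [value] if isinstance(value, str) else list(value)

def as_multi_select(value):
    """Turn a comma-separated string into a list of option names."""
    if isinstance(value, str):
        return [part.strip() for part in value.split(",") if part.strip()]
    return list(value)

def as_attachments(urls):
    """Turn one URL or a list of URLs into a list of attachment objects."""
    if isinstance(urls, str):
        urls = [urls]
    return [{"url": u} for u in urls]

def as_iso_date(value: str, fmt: str = "%m/%d/%Y") -> str:
    """Convert a locale-formatted date string to an ISO 8601 date."""
    return datetime.strptime(value, fmt).date().isoformat()

print(as_linked_records("recXXXXXXXX"))
print(as_multi_select("Red, Blue"))
print(as_attachments("https://example.com/a.png"))
print(as_iso_date("01/02/2026"))
```

Applying these at the mapping step keeps the type conversion in one place instead of scattered across the payload template.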

How do you validate field names and types to avoid “unknown field” or type mismatch?

There are 5 validation moves that prevent unknown-field and type-mismatch errors—checking exact field names, confirming writable fields, verifying select options, confirming linked record IDs, and aligning date/time formats—based on how Airtable enforces schema constraints.

- Validate exact field name: copy the field name from the base (watch for trailing spaces and punctuation).

- Validate writability: ensure the field is not computed and is allowed to be set via API.

- Validate select values: ensure the option exists and matches spelling/case if the API treats options strictly.

- Validate linked IDs: confirm each linked record ID exists in the target table, and send it in an array when required.

- Validate date shape: standardize on ISO 8601 for predictable parsing.

If you adopt these checks, you stop debugging “Airtable is broken” and start debugging “my mapping drifted from the schema.”
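One way to run these checks before sending is to validate the outgoing fields against a local description of the table. The sketch below assumes a hand-maintained `SCHEMA` dictionary with hypothetical field names and type labels; it is not derived from any Airtable API response.

```python
from datetime import date

# Hypothetical local description of the target table.
SCHEMA = {
    "Name": {"type": "text"},
    "Status": {"type": "select", "options": ["Todo", "Done"]},
    "Project": {"type": "linked"},
    "Due": {"type": "date"},
    "Total": {"type": "formula"},
}
COMPUTED = {"formula", "rollup"}

def validate_fields(fields: dict, schema: dict = SCHEMA) -> list[str]:
    """Return human-readable mapping errors (empty list = OK)."""
    errors = []
    for name, value in fields.items():
        spec = schema.get(name)
        if spec is None:
            errors.append(f"unknown field: {name!r}")
            continue
        kind = spec["type"]
        if kind in COMPUTED:
            errors.append(f"{name}: computed field is not writable")
        elif kind == "select" and value not in spec["options"]:
            errors.append(f"{name}: {value!r} is not an existing option")
        elif kind == "linked" and not isinstance(value, list):
            errors.append(f"{name}: linked records must be a list of IDs")
        elif kind == "date":
            try:
                date.fromisoformat(value)
            except (TypeError, ValueError):
                errors.append(f"{name}: {value!r} is not an ISO 8601 date")
    return errors

bad = {"Name ": "x", "Status": "todo", "Project": "recA",
       "Due": "01/02/2026", "Total": 5}
for problem in validate_fields(bad):
    print(problem)
```

Note that the first error comes from a trailing space in the field name, which is easy to miss by eye.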

What’s the safest “minimal payload” strategy to isolate the failing field?

There are 3 safe minimal-payload strategies (single-field baseline, incremental add-on, and binary split), chosen by how quickly you need to isolate the failing field without losing track of what changed.

- Strategy A: Single-field baseline — send one simple text field that you know exists and is writable; confirm success; then add fields one-by-one.

- Strategy B: Incremental add-on — add one field per test run and keep a change log so you can revert instantly.

- Strategy C: Binary split — if you have many fields, test half the fields at once; if it fails, halve again until one field remains.

This approach is powerful because each step builds on the last: success establishes a known-good request shape, and each new field becomes a controlled experiment.
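Strategy C can be sketched as a short search loop. The `send` callable here is a stand-in that returns True when a payload is accepted; in practice it would wrap your real request against a test base. The sketch assumes each bad field fails on its own, independent of the others, and it returns one culprit per run.

```python
def find_failing_field(fields: dict, baseline: dict, send):
    """Binary-split search for one field that makes send() reject the payload."""
    if not send(baseline):
        raise ValueError("the baseline payload itself is rejected")
    if send({**baseline, **fields}):
        return None  # nothing fails
    candidates = list(fields)
    while len(candidates) > 1:
        mid = len(candidates) // 2
        half = candidates[:mid]
        trial = {**baseline, **{k: fields[k] for k in half}}
        # If the first half passes, a culprit must be in the second half.
        candidates = candidates[mid:] if send(trial) else half
    return candidates[0]

# Stand-in sender: rejects any payload with a non-ISO "Due" value.
def fake_send(payload: dict) -> bool:
    return payload.get("Due") != "01/02/2026"

baseline = {"Name": "Test"}
extra = {"Status": "Todo", "Project": ["recA"], "Due": "01/02/2026", "Notes": "hi"}
print(find_failing_field(extra, baseline, fake_send))
```

With four extra fields this takes three sends after the baseline; the saving grows with the number of fields.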

What are the top causes of Airtable Webhook 400 Bad Request and their fixes?

There are 8 top causes of Airtable webhook 400 bad request—invalid JSON, wrong Content-Type, wrong endpoint/method, wrong payload wrapper, field type mismatch, invalid linked record arrays, invalid attachment objects, and non-writable/computed fields—based on where Airtable rejects your request first.

In addition, the quickest way to apply fixes is to map each cause to a concrete symptom and a single corrective action, so you stop changing multiple things at once.

This table contains the most common 400 causes and the exact “first fix” that resolves each one in the shortest time.

| Cause | What it looks like | First fix to try |
|---|---|---|
| Invalid JSON syntax | Parsing fails; error points to malformed JSON | Validate JSON; serialize from objects; remove trailing commas |
| Wrong Content-Type | Body looks right in tool UI but fails on send | Force Content-Type: application/json and send raw JSON |
| Wrong endpoint or method | Works in one place, fails in another environment | Confirm URL and method match create/update endpoint expectations |
| Wrong wrapper shape | Sending array/object that endpoint does not accept | Match API wrapper (for example, records array when required) |
| Field type mismatch | Fails after adding specific field | Fix format for that field type (array/object/ISO string) |
| Linked records sent as string | Linked field won’t set; request fails | Send array of record IDs (even if only one) |
| Attachments sent as URL string | Attachment field errors or fails validation | Send array of attachment objects in the expected format |
| Computed field is being written | “Should be read-only” type behavior | Remove computed fields from payload; write to input fields only |

Which fixes work best for Zapier & Make scenarios?

There are 6 fixes that work best in Zapier and Make—force JSON mode, inspect mapped output, convert strings to arrays, standardize date/time, remove empty fields, and isolate to one field—based on how no-code tools transform payloads before sending.

- Force JSON sending: ensure the step is sending raw JSON, not key/value form fields disguised as JSON.

- Inspect mapped output: view the final payload after mapping; many 400 errors are introduced by templating.

- Convert linked fields properly: if your input is one ID, wrap it in an array so Airtable receives the correct shape.

- Normalize dates: generate ISO strings instead of locale-formatted dates from spreadsheet/CRM inputs.

- Remove empty strings for non-text fields: an empty string is not the same as null and can break validation for selects, numbers, or dates.

- Reduce the payload: use the minimal payload strategy to find the exact field that breaks the request.

If your automation fails intermittently, also check upstream steps for partial outputs, because the “bad request” may be caused by missing mapped values rather than wrong formatting.
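When a tool lets you run a code step before the request, removing empty values can be done in a few lines. This is a sketch with hypothetical field names; it drops None and blank strings but keeps legitimate falsy values such as 0 and False.

```python
def drop_empty(fields: dict) -> dict:
    """Remove None and blank-string values; keep 0, False, and non-empty data."""
    cleaned = {}
    for name, value in fields.items():
        if value is None:
            continue
        if isinstance(value, str) and not value.strip():
            continue
        cleaned[name] = value
    return cleaned

mapped = {"Name": "Ada", "Due": "", "Score": 0, "Status": None, "Done": False}
print(drop_empty(mapped))
```

If you need to clear a field on purpose, handle that case separately rather than relying on an empty mapped value.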

Which fixes work best for custom code (Node/Python/C#) requests?

There are 7 fixes that work best in custom code—use a real JSON serializer, log the exact outgoing string, set headers explicitly, validate schema before send, retry safely, handle encoding, and test with cURL—based on how developers accidentally introduce malformed payloads during string manipulation.

- Use serializers: build a native object and serialize it; do not concatenate JSON strings manually.

- Log the outgoing payload: print the exact JSON string (and headers) right before the request.

- Set Content-Type explicitly: do not trust defaults from HTTP clients or middleware layers.

- Validate schema in-app: validate required fields and types before calling Airtable to fail fast with clear messages.

- Handle encoding intentionally: enforce UTF-8 and avoid copying payload fragments from rich-text sources without sanitizing.

- Use minimal retries: do not retry a 400 blindly, because the same request will fail the same way; retry only after you change the request or after the upstream data that produced the bad payload has been corrected.

- Reproduce with cURL: a clean cURL reproduction proves whether the bug is in your HTTP client, middleware, or payload generation logic.
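A cURL reproduction can be generated from the same values your code is about to send. The sketch below uses only standard curl flags (`-X`, `-H`, `--data`) and shell-quotes every argument; the URL and token are placeholders, and you should avoid writing a real token to shared logs.

```python
import json
import shlex

def to_curl(method: str, url: str, headers: dict, payload: dict) -> str:
    """Render a request as a copy-pasteable curl command."""
    parts = ["curl", "-X", method, shlex.quote(url)]
    for name, value in headers.items():
        parts += ["-H", shlex.quote(f"{name}: {value}")]
    parts += ["--data", shlex.quote(json.dumps(payload))]
    return " ".join(parts)

payload = {"fields": {"Name": "Ada's task"}}
cmd = to_curl(
    "POST",
    "https://api.airtable.com/v0/appXXXXXXXX/Tasks",  # placeholder URL
    {"Authorization": "Bearer YOUR_TOKEN", "Content-Type": "application/json"},
    payload,
)
print(cmd)
```

If the generated command succeeds where your application fails, the difference lies in your HTTP client or middleware rather than in the payload.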

According to a capstone report by Rochester Institute of Technology from the Department of Computer Science, in 2021, researchers collected and analyzed 47,610 JSON Schemas, highlighting how widely schema-based validation is used to prevent malformed JSON structures in real systems.


How can you prevent Airtable 400 errors from recurring in production automations?

You can prevent Airtable 400 errors by adding lightweight validation and observability before the request is sent—specifically (1) validate payload shape, (2) log and replay failures safely, and (3) make field mapping resilient to schema changes so your automation does not degrade over time.

Next, when you shift from “fix” to “prevent,” you stop reacting to incidents and start controlling the inputs that create malformed requests in the first place.

How do you log and replay failed webhook requests safely?

There are 4 safe logging and replay patterns—sanitized payload snapshots, correlation IDs, controlled replays, and environment separation—based on keeping debugging detail while protecting secrets and personal data.

- Sanitized payload snapshots: store the outgoing request body after mapping, but redact tokens, emails, and any sensitive fields.

- Correlation IDs: attach an internal ID to every request so you can trace one failure across steps.

- Controlled replays: replay only the failing payload in a test base or staging environment to avoid duplicating production data.

- Environment separation: keep distinct tokens and base IDs for staging versus production to prevent accidental writes during debugging.

This practice is also how you diagnose intermittent “airtable trigger not firing” complaints: logging reveals whether the trigger never emitted data, or it emitted malformed data that later produced a 400.
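The first two patterns can be sketched together: a snapshot function that redacts sensitive fields and attaches a correlation ID. The set of sensitive field names and the record layout are assumptions for illustration.

```python
import json
import uuid

SENSITIVE_FIELDS = {"Email", "Phone"}  # hypothetical field names to redact

def snapshot(url: str, payload: dict, sensitive=SENSITIVE_FIELDS) -> dict:
    """Build a log-safe copy of an outgoing request with a correlation ID."""
    def redact(fields: dict) -> dict:
        return {k: ("***" if k in sensitive else v) for k, v in fields.items()}

    records = [
        {**record, "fields": redact(record.get("fields", {}))}
        for record in payload.get("records", [])
    ]
    return {
        "correlation_id": str(uuid.uuid4()),
        "url": url,
        "body": {"records": records},
    }

outgoing = {"records": [{"fields": {"Name": "Ada", "Email": "ada@example.com"}}]}
snap = snapshot("https://api.airtable.com/v0/appXXXXXXXX/Contacts", outgoing)
print(json.dumps(snap, indent=2))
```

The snapshot is a copy, so the original payload is sent unchanged while only the redacted version reaches your logs. Tokens never enter the snapshot because headers are not included.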

What edge cases can break “valid” JSON payloads in real workflows?

There are 5 edge cases that can break “valid-looking” JSON—hidden characters, large-text copy/paste artifacts, mixed locale formats, null/empty confusion, and partial payloads from upstream steps—based on where automation data is sourced and transformed.

- Hidden characters: BOM and non-printing characters can cause parsers to fail even when the JSON looks correct.

- Rich-text artifacts: pasted text may include smart quotes or control characters that break serialization if not normalized.

- Locale/timezone drift: dates emitted as “MM/DD/YYYY” or localized strings can fail strict parsing; standardize to ISO 8601.

- Null vs empty string: empty string often fails numeric/select/date fields; omit the field or send null only if accepted.

- Partial payloads: when upstream steps time out or return empty objects, your JSON stays valid but becomes invalid for schema requirements.

These edge cases explain why an integration may work for 90% of records and fail for 10%: the input data, not the automation logic, is inconsistent.
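For the hidden-character case, a small cleaning step on text values before serialization is often enough. This sketch removes Unicode control and format characters (which include the BOM and zero-width spaces) while keeping newlines and tabs. One caveat: format characters also include joiners used in some emoji and scripts, so apply it only where that loss is acceptable.

```python
import unicodedata

def clean_text(value: str) -> str:
    """Strip control (Cc) and format (Cf) characters, keeping newline and tab."""
    return "".join(
        ch for ch in value
        if ch in "\n\t" or unicodedata.category(ch) not in ("Cc", "Cf")
    )

raw = "\ufeffAda\u200b Lovelace\x00"
print(repr(raw), "->", repr(clean_text(raw)))
```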

When is it not a 400 problem—what “opposite” errors should you check instead?

A 400 points to a malformed request, 401/403 point to authentication and access issues, and 404/422 point to routing or validation rules, not parsing, as the real reason the request fails.

For prevention, learn to recognize these patterns quickly:

- If tokens/scopes changed: suspect 401/403 before you rewrite payload code.

- If table/base changed: suspect 404 (wrong route/resource) before you blame JSON.

- If one field value is unacceptable: suspect 422-style validation issues before you chase encoding problems.

This comparison keeps your troubleshooting efficient: you fix the correct layer instead of “formatting everything” when the real blocker is permission or routing.

How do you design resilient field mapping to survive schema changes?

There are 4 resilient mapping strategies—versioned contracts, schema checks, defensive defaults, and controlled renames—based on how Airtable bases evolve while automations remain static.

- Versioned contracts: define a stable payload contract in your integration layer and update it intentionally when fields change.

- Schema checks: periodically verify required fields exist and are writable before running high-volume automations.

- Defensive defaults: omit optional fields when values are missing instead of sending empty strings to strict field types.

- Controlled renames: when you rename fields in Airtable, update automation mappings immediately and document the change.
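A versioned contract with a schema check and defensive defaults might look like the sketch below. The contract keys, field names, and the `existing_fields` set are hypothetical; how you obtain the current field list is left to your integration.

```python
# Hypothetical contract: stable internal keys mapped to current field names.
CONTRACT_V2 = {"title": "Name", "status": "Status", "due_date": "Due"}

def to_airtable_fields(data: dict, contract: dict, existing_fields: set) -> dict:
    """Map internal keys to field names, failing fast if the schema drifted."""
    missing = sorted(f for f in contract.values() if f not in existing_fields)
    if missing:
        raise KeyError(f"mapped fields missing from table: {missing}")
    # Defensive defaults: skip unknown keys and empty values.
    return {
        contract[key]: value
        for key, value in data.items()
        if key in contract and value not in ("", None)
    }

current = {"Name", "Status", "Due"}
print(to_airtable_fields({"title": "Ship", "status": "", "extra": 1},
                         CONTRACT_V2, current))
```

After a rename in the base, only the contract dictionary changes; the code that produces `data` stays the same, and a forgotten update fails loudly before any request is sent.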

In short, prevention is the last step in the process: if you validate payloads and stabilize mapping, Airtable webhook 400 bad request stops being a recurring incident and becomes a rare, quickly diagnosable event.