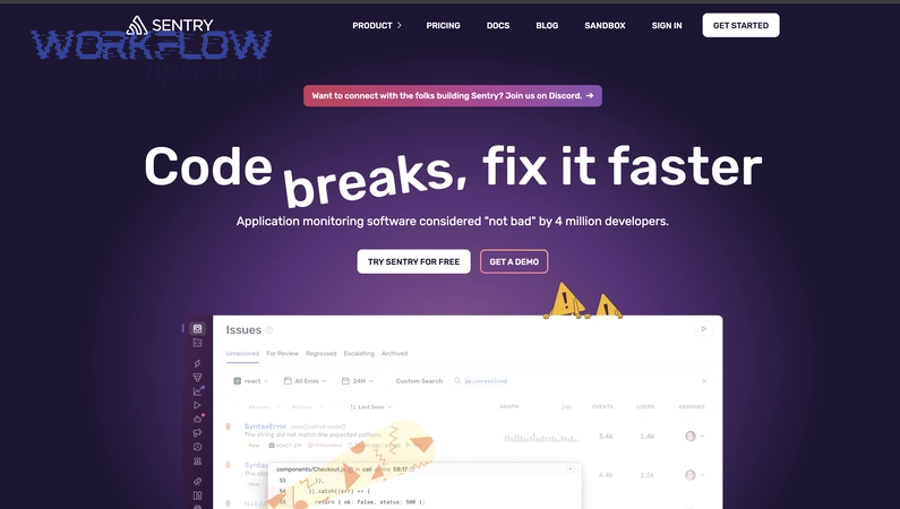

If your team uses Sentry to detect errors and performance regressions, then you should automate incident documentation by connecting Google Docs to Sentry so every incident starts with accurate context, links, and timestamps—without waiting for someone to write it from scratch.

Next, you’ll see what “Google Docs → Sentry” automation actually means in practice, including what belongs in an incident document and which Sentry fields are worth capturing first so the doc stays useful (not noisy).

Then, you’ll get a set of proven workflow patterns and a step-by-step setup approach that works whether you use a no-code automation builder or a custom webhook + Docs API flow.

Once the core workflow is working, the real value comes from choosing the right trigger, mapping fields safely, and making the automation reliable enough that DevOps teams can trust it during real incidents.

What does it mean to connect Google Docs to Sentry for automated incident documentation?

Connecting Google Docs to Sentry for automated incident documentation is a workflow that uses Sentry events (alerts, issues, or webhooks) to create or update a Google Doc incident record automatically, so the team captures facts fast and adds analysis later.

To better understand the value, it helps to separate what gets automated (facts and links) from what stays human (root-cause reasoning and corrective decisions).

In a well-designed integration, Sentry provides the “signal” (an alert fired, an issue spiked, a regression appeared), and Google Docs provides the “shared workspace” where responders write the narrative: impact, timeline, mitigation, root cause hypotheses, and action items. The automation’s job is not to “write the postmortem for you.” Its job is to remove the blank-page penalty and keep the incident document aligned with what Sentry already knows.

When you implement this flow, you’ll typically choose one of two document behaviors:

- Create: Generate a new doc when an incident-worthy trigger happens (best for postmortems and major incidents).

- Update: Append to a rolling incident log or update a shared incident doc (best for lightweight operations and daily triage).

The Sentry side of the equation is designed for integrations like this: Sentry’s integration platform exposes webhooks so external services can receive notifications about events and resources. docs.sentry.io

What is an “incident document” and why is Google Docs a common format for it?

An incident document is a written record of an incident that captures impact, timeline, contributing causes, mitigation steps, and follow-up actions, and Google Docs is commonly used because it supports fast collaboration, permissions, and version history in one place.

More specifically, incident documentation tends to evolve in two phases: live incident notes during response, and a post-incident postmortem after stability returns.

In the live phase, responders need a single shared artifact that can be updated quickly: what we know, what we tried, and what changed. Google Docs fits because it is:

- Collaborative by default: multiple editors, comments, and suggestions in real time.

- Easy to share across roles: engineering, support, product, security, and leadership can all access the same source of truth with controlled permissions.

- Template-friendly: you can standardize sections (Impact, Timeline, Detection, Root Cause, Action Items) so people don’t reinvent structure during stress.

In the post-incident phase, the document becomes a learning tool. A widely cited example of postmortem practice is the Site Reliability Engineering approach that emphasizes documenting timelines, triggers, and action items as part of learning from failure. sre.google

What Sentry data is most useful to insert into an incident doc automatically?

There are two main groups of Sentry data to insert into an incident doc—“signal essentials” and “investigation helpers”—based on whether they accelerate triage immediately or enrich analysis later.

More importantly, this grouping prevents your doc from becoming an unreadable paste of raw telemetry.

1) Signal essentials (must-have for every incident doc)

These fields help responders quickly answer “What’s happening and where do we look?”

- Issue/alert title and direct link back to Sentry (the anchor for the entire incident).

- Project + environment (prod/staging), plus release/version when available.

- First seen / last seen timestamps and event frequency (so you can gauge severity).

- User impact proxy if available (e.g., affected users, transactions, or performance threshold).

- Owner/on-call info if your workflow includes routing.

2) Investigation helpers (useful once you’re debugging)

These fields support deeper root cause analysis without forcing the doc to carry everything inline.

- Stack trace summary (often best as a link + top frames summary, not full dump).

- Tags and fingerprints (service name, endpoint, browser, region, tenant).

- Breadcrumbs and contextual metadata (again: link or summarized excerpt).

- Related issues/regressions and recent deploy context (release link).

A practical rule: if a field doesn’t help someone decide the next action in under 10 seconds, it probably belongs behind a link or in an appendix.
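To make the "signal essentials" group concrete, here is a minimal sketch that pulls only those fields out of an incoming payload. The payload shape and key names (`issue`, `event`, `web_url`, and so on) are illustrative assumptions, not Sentry's documented schema; adjust the paths to whatever your trigger actually delivers.

```python
from typing import Any

# Hypothetical payload paths: these keys are assumptions for illustration,
# not Sentry's documented webhook schema.
ESSENTIAL_FIELDS = {
    "title": ("issue", "title"),
    "url": ("issue", "web_url"),
    "project": ("issue", "project"),
    "environment": ("event", "environment"),
    "release": ("event", "release"),
    "first_seen": ("issue", "first_seen"),
    "last_seen": ("issue", "last_seen"),
    "event_count": ("issue", "count"),
}


def dig(payload: Any, path: tuple) -> Any:
    """Walk a nested dict along `path`; return None if any key is missing."""
    current = payload
    for key in path:
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


def extract_signal_essentials(payload: dict) -> dict:
    """Return only the must-have fields, marking gaps as 'unknown'."""
    essentials = {}
    for name, path in ESSENTIAL_FIELDS.items():
        value = dig(payload, path)
        essentials[name] = "unknown" if value is None else str(value)
    return essentials
```

Everything outside this short list stays in Sentry and is reached through the issue link, which keeps the doc header scannable.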

Do DevOps teams actually need automation to keep incident docs up to date?

Yes—DevOps teams often need to connect Google Docs to Sentry for automated incident documentation because (1) incidents move faster than manual note-taking, (2) early facts decay quickly, and (3) consistent documentation reduces handoff friction across responders and stakeholders.

Besides, the “not manual” part matters most when your team is under pressure and context-switching constantly.

Reason 1: Incidents punish delay.

During a production incident, the first 10–20 minutes are chaotic: alerts fire, hypotheses change, and responders rotate in. If nobody starts the doc, the timeline becomes guesswork later. Automation ensures the incident record exists as soon as signal exists.

Reason 2: The cost of missing facts is high.

If you forget which release was running, which environment was affected, or when the spike began, your later analysis becomes slower and more argumentative. Automating the basics builds a factual baseline that people can trust.

Reason 3: Documentation is a handoff tool, not paperwork.

Modern incident response is distributed. People join late, teams work in different time zones, and leadership wants updates. A living incident doc reduces repeated explanation and prevents two teams from running the same investigation in parallel.

The evidence is not just theoretical: in a 2024 study from the University of Minho’s Department of Informatics, case studies of an improved incident-management process reported increased efficiency through reduced incident response time. di.uminho.pt

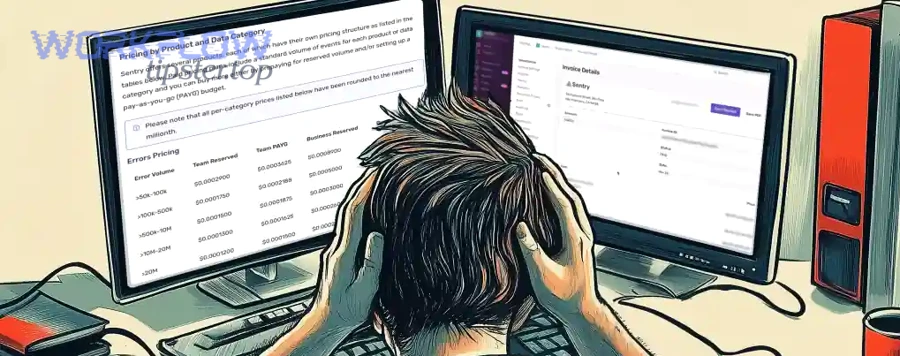

Does “not manual” documentation reduce MTTR or just reduce busywork?

Automation wins at capturing time-sensitive facts and speeding up coordination, while manual writing remains best for interpretation and decisions—so the real MTTR impact comes from better handoffs, not from replacing human thinking.

However, you only see MTTR improvement if your automation changes the right part of the workflow.

- Automation reduces busywork immediately by creating the doc, adding links, and stamping time and context. This prevents “Where’s the incident doc?” and “What’s the Sentry link?” delays.

- Automation can reduce MTTR indirectly when it accelerates alignment: people spend less time reconstructing what happened and more time executing mitigation steps.

- Manual work still drives resolution because someone must choose a mitigation, verify the fix, and decide when to roll forward or roll back.

So the correct expectation is: automate facts + structure; keep humans responsible for analysis + decisions. That combination is what makes “not manual” meaningful.

Is it possible to automate incident docs without risking incorrect or noisy information?

Yes—it’s possible to automate incident docs safely if you apply (1) filtering, (2) deduplication, and (3) a draft-first workflow so the doc stays accurate and readable even during alert storms.

Specifically, the risk is not “automation.” The risk is uncontrolled triggers.

A safe pattern looks like this:

- Filter by signal quality: only create incident docs from high-severity alerts, specific projects, production environments, or sustained error rates.

- Deduplicate by incident key: treat repeated alerts as updates to the same doc, not new docs.

- Write drafts first: label new docs as “Draft – Incident in progress,” then promote when confirmed.

- Prefer links over dumps: include Sentry links and summaries rather than full stack traces.

When these guardrails exist, automation becomes more reliable than ad-hoc manual notes because it follows the same rules every time.
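The guardrails above (filter, deduplicate, draft first) can be sketched as a single decision function. The `Signal` fields, the allowed severity and environment values, and the shape of the incident key are all hypothetical choices for illustration; your own incident definition may differ.

```python
from dataclasses import dataclass
from typing import Optional

DRAFT_PREFIX = "Draft – Incident in progress: "
ALLOWED_SEVERITIES = {"critical"}   # assumption: only critical alerts open docs
ALLOWED_ENVIRONMENTS = {"prod"}     # assumption: production only


@dataclass(frozen=True)
class Signal:
    """Hypothetical normalized alert; field names are assumptions."""
    project: str
    environment: str
    severity: str
    rule_id: str


def is_incident_worthy(signal: Signal) -> bool:
    """Filter by signal quality before anything is written."""
    return (signal.severity in ALLOWED_SEVERITIES
            and signal.environment in ALLOWED_ENVIRONMENTS)


def incident_key(signal: Signal) -> str:
    """Repeated alerts from the same rule map to the same key."""
    return f"{signal.project}:{signal.environment}:{signal.rule_id}"


def decide(signal: Signal, open_docs: dict) -> tuple:
    """Return (action, doc_title): 'ignore', 'create', or 'update'."""
    if not is_incident_worthy(signal):
        return ("ignore", None)
    key = incident_key(signal)
    if key in open_docs:
        return ("update", open_docs[key])
    title: Optional[str] = DRAFT_PREFIX + key
    open_docs[key] = title
    return ("create", title)
```

In this sketch `open_docs` is an in-memory dict; a real workflow would need a persistent store so that deduplication survives restarts.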

What are the best Google Docs → Sentry automation workflows for incident documentation?

There are four main Google Docs → Sentry automation workflow types—Alert-triggered Docs, Issue-triggered Docs, Regression/Release-triggered Docs, and Escalation-triggered Updates—based on what Sentry signal starts the documentation and how the doc evolves.

Next, you can choose the workflow that matches your incident maturity and alert volume.

1) Alert-triggered incident doc (high signal)

- Trigger: an alert rule fires (error rate, latency, throughput anomaly).

- Action: create a new incident doc from a template, add alert link, environment, release, severity, and initial timeline entry.

2) Issue-triggered triage doc (higher volume, needs filtering)

- Trigger: a new issue appears or changes state (e.g., a resolved issue regresses, or an unresolved issue’s event count spikes).

- Action: update a triage log doc, or create a doc only if thresholds are met.

3) Regression/release-triggered doc (deployment-aware)

- Trigger: a new release causes a regression, or an issue correlates with a release.

- Action: create/update a doc section “Recent changes” linking to release and suspect commits.

4) Escalation-triggered update (coordination-focused)

- Trigger: escalation to on-call, incident declared, severity upgraded.

- Action: append to the doc with “Escalation” + who joined + what changed.

This is where “Automation Integrations” becomes a mindset: you’re not just connecting tools, you’re designing how operational truth flows through your system, the same way teams design workflows like Airtable to Evernote knowledge capture or ConvertKit to Google Sheets reporting. The difference here is that the stakes are incident response.

Which workflows should be triggered by Sentry alerts versus new issues?

Alerts win for incident-worthy documentation, while issues win for triage and pattern tracking, because alerts are curated signals and issues can be noisy at scale.

Meanwhile, the “best” choice depends on your alert discipline.

Use alert triggers when:

- You have well-tuned alert rules (few false positives).

- You want one doc per incident with clear severity.

- You need fast incident creation for on-call coordination.

Use issue triggers when:

- You’re building a triage knowledge base.

- You want to track recurring problems, not just declared incidents.

- You’re willing to filter aggressively (environment=prod, frequency threshold, new+high priority tags).

In practice, many DevOps teams start with alert-triggered docs (simple, high signal), then add issue-triggered updates later once their filters are mature.

What are the most common “create vs update” document patterns?

There are three common create-vs-update patterns: (1) Create-a-doc-per-incident, (2) Update-a-rolling-incident-log, and (3) Create-a-doc-and-update-an-index, based on how your organization wants to browse incident history.

To illustrate, each pattern solves a different navigation problem.

Pattern 1: Create a new doc per incident

Best for: formal postmortems and learning reviews

- Pros: clean ownership, clear scope, easy sharing

- Cons: many docs to organize without an index

Pattern 2: Update a rolling incident log doc

Best for: fast-moving teams and lightweight incidents

- Pros: one place to scan recent incidents

- Cons: long docs get messy; permissions and sections become hard to manage

Pattern 3: Create doc + update an incident index

Best for: mature teams

- Pros: each incident has its own doc, and the index offers discoverability

- Cons: slightly more automation complexity

If you want a default, Pattern 3 is the most scalable: it keeps incidents atomic while still being easy to browse.

How should you structure a Google Docs template for reliable automation inserts?

A reliable Google Docs incident template is a structured document with stable headings and placeholders that automation can target repeatedly, typically organized into Impact, Timeline, Detection, Root Cause, Mitigation, and Action Items.

More specifically, templates must be designed for machines as well as humans.

- Stable anchors: headings like “Timeline” or “Action Items” that never change name, so the automation can always insert in the right place.

- Placeholder tokens: e.g., {{SENTRY_ISSUE_URL}}, {{ENVIRONMENT}}, {{RELEASE}}, {{FIRST_SEEN}}.

- A “facts first” top section: so anyone opening the doc sees the essential data immediately.

- A “human analysis” section: clearly marked, so automation doesn’t overwrite it.

If you’re using the Google Docs API directly, designing stable insertion points is even more important because document updates often operate by index or targeted replace operations. For example, Google’s documentation describes inserting text via documents.batchUpdate using an InsertTextRequest. developers.google.com
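As a sketch of the "targeted replace" approach, the function below turns a dict of placeholder values into `replaceAllText` requests for `documents.batchUpdate`. The token names follow the examples in the template list above; the helper name `build_placeholder_requests` is hypothetical, and the code only builds the request body without calling the API.

```python
def build_placeholder_requests(values: dict) -> list:
    """Build one replaceAllText request per {{TOKEN}} placeholder.

    `values` maps token names (without braces) to replacement text.
    Tokens are sorted so the request order is deterministic.
    """
    requests = []
    for token, value in sorted(values.items()):
        requests.append({
            "replaceAllText": {
                "containsText": {"text": "{{" + token + "}}", "matchCase": True},
                "replaceText": value,
            }
        })
    return requests
```

The resulting list would be sent as `{"requests": [...]}` in the body of a `documents.batchUpdate` call against a copy of the template. Because replacement targets text rather than positions, it does not depend on document indexes, which tends to make it more forgiving than index-based inserts when people edit the template.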

How do you set up a Google Docs to Sentry automation step by step?

To set up a Google Docs to Sentry automation, use a six-step method (choose a trigger, define doc behavior, prepare a template, connect the Sentry signal, map fields, and test with monitoring) so every incident creates or updates a doc with consistent, trusted context.

Then, you can scale from a minimal workflow to more advanced routing once the basics are stable.

Step-by-step setup (platform-agnostic)

Step 1: Decide the trigger boundary (what counts as an “incident”)

Pick a trigger that reflects your operational definition: alert severity threshold, sustained error rate, latency breach, or escalation status.

Step 2: Decide doc behavior (create vs update)

Create per incident if you do postmortems. Update a log if you track many small incidents.

Step 3: Prepare your template

Add stable headings + placeholders. Make a copy to test, so your real template stays clean.

Step 4: Connect Sentry signal to the workflow

If you’re using Sentry’s integration platform, webhooks allow external services to receive event notifications so your automation can react in real time. docs.sentry.io

Step 5: Write the mapping rules

Which fields go to the doc header, and which become links? How do you format timestamps, severity, and environment?

Step 6: Test, then monitor

Simulate triggers, verify doc formatting, and ensure duplicates don’t create doc spam.

What are the minimum permissions and credentials you need in Google Docs and Sentry?

There are three minimum access requirements—Google Docs edit access, a safe authentication method, and scoped Sentry access—based on the principle of least privilege.

More importantly, permission mistakes are a common reason automations quietly break later.

Google Docs side (minimums):

- A Google account (or service identity) that can create docs in the target Drive location or edit an existing doc/log.

- Permission to use the template (read) and write to the destination (edit).

- If using API: the necessary OAuth scopes for Docs/Drive operations (keep them minimal).

Sentry side (minimums):

- Access to the relevant organization and project(s) that will produce alerts/issues.

- A token/credential method appropriate to your environment.

- If using webhooks: ensure your endpoint is reachable and secured; treat webhook secrets as sensitive.

Sentry also documents internal integrations as an option for organization-specific integrations, which can simplify credential management for custom workflows. docs.sentry.io
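If you receive webhooks yourself, "secured" usually includes verifying that each request really came from Sentry. The sketch below assumes the signature is an HMAC-SHA256 hex digest of the raw request body keyed with the integration's client secret and delivered in a `Sentry-Hook-Signature` header; confirm the exact scheme against Sentry's current integration platform documentation before relying on it.

```python
import hashlib
import hmac


def expected_signature(body: bytes, client_secret: str) -> str:
    """Compute the hex HMAC-SHA256 of the raw body (assumed scheme)."""
    return hmac.new(client_secret.encode("utf-8"), body, hashlib.sha256).hexdigest()


def is_valid_signature(body: bytes, header_value: str, client_secret: str) -> bool:
    """Compare in constant time so timing does not leak the digest."""
    expected = expected_signature(body, client_secret)
    return hmac.compare_digest(expected.encode("utf-8"), header_value.encode("utf-8"))
```

Verify against the raw bytes as received, before any JSON parsing or re-serialization, since re-encoding can change whitespace or key order and break the comparison.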

How do you map Sentry fields into Google Docs content without breaking formatting?

Mapping Sentry fields into Google Docs without breaking formatting means inserting only stable, human-readable summaries in the doc while pushing long or volatile data (like full stack traces) into links, so the document remains scannable during incident response.

Specifically, you should design the doc to read like an executive brief at the top and a technical appendix below.

- Header block (top of doc): incident name, severity, environment, release, Sentry URL, start time, owner, status.

- Timeline section: append one line per meaningful change: alert fired, mitigation applied, rollback started, resolved.

- Evidence/Links section: include links to Sentry issue, dashboards, runbooks, deploy logs.

- Appendix (optional): short stack trace summary or top frames (not the full payload).

If you use the Docs API directly, batch updates are validated and applied atomically; if any request is invalid, the entire batch fails—so keeping updates simple and predictable improves reliability. developers.google.com
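A minimal sketch of the header block follows, assuming the field names from the list above and a fallback of "unknown" for missing values. The helper names are hypothetical, and index 1 is used on the assumption that the block goes at the very start of the document body.

```python
HEADER_FIELDS = [
    "incident", "severity", "environment", "release",
    "sentry_url", "start_time", "owner", "status",
]


def render_header_block(fields: dict) -> str:
    """Render one 'Label: value' line per header field, in a fixed order."""
    lines = []
    for name in HEADER_FIELDS:
        # Missing or blank values become a visible marker, not an empty line.
        value = (fields.get(name) or "").strip() or "unknown"
        label = name.replace("_", " ").title()
        lines.append(f"{label}: {value}")
    return "\n".join(lines) + "\n"


def build_insert_request(text: str, index: int = 1) -> dict:
    """Wrap text in a single insertText request for documents.batchUpdate."""
    return {"insertText": {"location": {"index": index}, "text": text}}
```

Sending the whole header as one request keeps the batch small, which matters because one invalid request rejects the entire batch.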

What testing checklist proves your automation works in real incidents?

There are six essential tests for production-ready incident-doc automation: trigger correctness, permissions, field completeness, deduplication, failure recovery, and doc usability.

In addition, each test should confirm what happens when reality is messy.

- Trigger correctness: is the doc created only when it should be (severity, environment, threshold)?

- Permission resilience: what happens if template permissions change or a folder is restricted?

- Missing/unknown fields: does the doc still read well if release or user-impact data is missing?

- Deduplication: do repeated alerts update the same doc instead of creating multiple docs?

- Failure recovery: are retries safe (idempotent) and do they avoid duplicate inserts?

- Usability: can a new responder open the doc and understand what to do next in under 60 seconds?

A simple rule: if your automation is hard to troubleshoot when it fails, it will fail at the worst time.

Which method is best for connecting Google Docs to Sentry for DevOps teams?

No-code automation tools win in speed to launch, custom API/webhook builds win in control and governance, and hybrid approaches are optimal for scaling across teams, because each option optimizes a different constraint that DevOps teams care about.

However, the best method is the one you can operate reliably during incidents—not the one that looks simplest in a demo.

Before the comparison, here’s a quick table showing what each method is best for and why.

| Method | Best when you need | Strength | Tradeoff |
|---|---|---|---|
| No-code workflow builder | Fast setup, minimal engineering | Quick to implement, easy iteration | Less control over edge cases |
| Custom webhooks + Docs API | Advanced routing, strict governance | Full control, precise logic | Engineering + maintenance cost |
| Hybrid (no-code + small services) | Scale + flexibility | Balance of speed and control | More moving parts |

How do no-code automation tools compare to webhooks/APIs for Sentry-to-Docs workflows?

No-code wins for implementation speed, while webhooks/APIs win for precision and robustness, because no-code platforms abstract complexity but custom code lets you enforce your exact incident rules.

On the other hand, many teams underestimate the operational cost of custom integrations.

No-code advantages:

- Faster time-to-value (often hours or days).

- Easier iteration when you adjust triggers, fields, and templates.

- Accessible to non-engineering ops stakeholders.

No-code limitations:

- Complex deduplication and multi-project routing can be difficult.

- Governance controls vary by platform.

- You may hit platform-specific constraints during high-volume alert storms.

Webhooks/API advantages:

- Full control over dedupe, rate limiting, redaction, and routing.

- Better fit for compliance and strict data-handling requirements.

- Easier to standardize across multiple teams with shared libraries.

Webhooks/API tradeoffs:

- You own uptime, monitoring, and maintenance.

- You must handle failures gracefully (retries, idempotency, partial writes).

A strong technical foundation for the API approach is documented by Google: inserting text and updating Docs through batch operations is done using the Google Docs API batchUpdate request model. developers.google.com

What should you choose if you need advanced routing, deduplication, or compliance controls?

Custom webhooks/APIs are best for advanced routing and compliance, while no-code is best for simplicity, because compliance-grade workflows usually require explicit rules for redaction, data retention, and auditability that are easier to guarantee in code.

Meanwhile, the “hybrid” option often wins when you want both speed and control.

Choose custom webhooks/APIs if you need:

- Severity-based routing across many projects/services.

- Strict deduplication rules (one doc per incident key).

- Redaction/allowlists to prevent sensitive data in docs.

- Audit trails and controlled access patterns.

Choose no-code if you mainly need:

- A doc created on high-signal alerts.

- Basic mapping and a simple template.

- Fast iteration and low operational overhead.

Choose hybrid if:

- You want no-code for orchestration, but a small service for dedupe/redaction/routing.

Is a Google Docs ↔ Sentry incident-doc automation secure and reliable enough for production use?

Yes—Google Docs ↔ Sentry incident-doc automation can be secure and production-reliable if you implement (1) least-privilege access, (2) safe data handling, and (3) operational resilience (monitoring, retries, deduplication), because these controls prevent leaks and reduce failure during incident spikes.

More importantly, security and reliability are not features you add later—they are part of the workflow design.

Reason 1: Least privilege reduces blast radius.

Your automation should only access the specific docs/folders it needs and only the Sentry projects relevant to incident documentation.

Reason 2: Safe data handling keeps docs shareable.

Incident docs often get shared broadly. That means you should insert only what is safe to disseminate, and link to restricted systems for sensitive detail.

Reason 3: Operational resilience prevents “doc outages.”

If the automation fails silently, responders lose trust quickly. Reliable workflows treat every write as potentially failing and recover with controlled retries and idempotent logic.

A key building block is that Sentry’s integration platform is designed explicitly for external services via webhooks and APIs, making it feasible to build controlled, production-grade integrations. docs.sentry.io

What are the most common failure modes in this integration, and how do you prevent them?

There are five common failure modes—credential drift, permission changes, rate limits, duplicate triggers, and schema surprises—based on how integrations break in real production environments.

To begin, you prevent most failures by designing for change rather than assuming stability.

- Credential drift (tokens expire or rotate): use managed secrets, rotation procedures, and health checks.

- Permission changes (folders/templates restricted): keep templates in controlled locations, alert on permission errors, assign an owner.

- Rate limits and spikes: backoff retries, queue events, and collapse duplicates into a single doc update.

- Duplicate triggers: idempotency keys (incident ID), dedupe windows, “update if changed” logic.

- Payload/schema surprises: validate input, default missing fields, and log versions of payload structure.

If you treat automation as part of incident tooling, you should also treat its failure as an incident-worthy condition—at least for teams that rely on it heavily.
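For the rate-limit item, a backoff retry wrapper might look like the sketch below. `TransientError` is a hypothetical marker exception; in a real build you would raise it (or catch the client library's own error type) only for failures that are safe to retry, such as rate-limit responses.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures (e.g. rate limiting)."""


def with_backoff(
    op: Callable[[], T],
    attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `op`, retrying TransientError with exponential backoff and jitter."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return op()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            delay = base_delay * (2 ** attempt)
            # Jitter spreads retries out so workers do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
    raise AssertionError("loop always returns or raises")
```

Retries are only safe when the wrapped write is idempotent; otherwise a retry after a partially successful call can duplicate content in the doc.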

How do you monitor and audit an incident-doc automation over time?

Monitoring and auditing an incident-doc automation means tracking every trigger, write operation, and failure with logs and alerts, and reviewing access and rule changes periodically, so the workflow remains trustworthy as teams, projects, and permissions evolve.

In short, if nobody owns the automation, it will decay.

- Run logs: record when a doc is created/updated, what incident key was used, and which fields were written.

- Failure alerts: notify an owner when writes fail, when permissions break, or when rate limits spike.

- Change control: record template changes, mapping changes, and trigger rule changes.

- Access reviews: quarterly checks of who can read/write the incident docs and who controls Sentry credentials.

- Periodic drills: simulate an incident to ensure the doc is still created correctly.

If you want one cultural guardrail: adopt blameless postmortem practices so monitoring becomes learning rather than blame, which helps teams surface automation failures early. sre.google
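The run-log item above can be as simple as one structured line per write attempt. This is a sketch under assumptions: the record keys and the logger name are hypothetical, and the function returns the JSON line so it can be shipped to whatever log system you already use.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("incident_doc_automation")  # hypothetical name


def run_log_record(action, incident_key, doc_id, fields_written, error=None) -> str:
    """Log and return one JSON line describing a doc write attempt."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,                  # e.g. "create" or "update"
        "incident_key": incident_key,
        "doc_id": doc_id,
        "fields_written": sorted(fields_written),  # names only, never values
        "ok": error is None,
        "error": error,
    }
    line = json.dumps(record, sort_keys=True)
    if error is None:
        logger.info(line)
    else:
        logger.error(line)
    return line
```

Logging field names rather than field values keeps the audit trail useful without copying potentially sensitive incident data into a second system.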

How can you optimize automated (not manual) incident documentation once Google Docs and Sentry are connected?

Once Google Docs and Sentry are connected, you optimize “not manual” incident documentation by improving mapping quality, reducing noise, enforcing safe-field rules, and designing for rate limits and deduplication, so the doc stays useful during incident pressure instead of becoming clutter.

Next, these optimization steps turn a working integration into a trustworthy operational system.

What are advanced mapping patterns to turn Sentry issues into high-quality Google Docs sections?

Advanced mapping patterns are structured transformations that convert Sentry issue metadata into readable incident narrative blocks, such as translating severity into an “Impact” summary, tags into “Scope,” and release data into “Change context,” so the doc reads like an incident brief.

Specifically, you want the automation to produce a doc that a new responder can scan quickly.

- Severity → Impact statement (e.g., “SEV-1: customer-facing errors in production; elevated 5xx rate since 14:32 UTC.”)

- Environment + service tags → Scope header (e.g., “Service: checkout-api | Env: prod | Region: us-east”)

- Release/regression metadata → Change context (e.g., “Regression suspected after release 2026.01.31.2; compare to previous stable release.”)

- Links-first Evidence block (Sentry issue URL + dashboards + relevant runbook + deploy logs)

- Timeline auto-append (each new alert state change adds a timestamped line)

This is the difference between “we created a doc” and “we created a doc people actually use.”
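The mapping patterns above amount to small formatting functions. The sketch below assumes tag names such as `service`, `environment`, and `region`, which are illustrative; use whatever tags your projects actually set.

```python
def impact_statement(severity: str, summary: str, environment: str, since_utc: str) -> str:
    """Turn severity plus a short summary into a one-line Impact statement."""
    return f"{severity}: {summary} in {environment}; elevated since {since_utc} UTC."


def scope_header(tags: dict) -> str:
    """Build a Scope line from tags, skipping any that are missing."""
    parts = [
        ("Service", tags.get("service")),
        ("Env", tags.get("environment")),
        ("Region", tags.get("region")),
    ]
    return " | ".join(f"{label}: {value}" for label, value in parts if value)


def timeline_line(timestamp_utc: str, event: str) -> str:
    """Format one timestamped entry for the Timeline section."""
    return f"- {timestamp_utc} UTC: {event}"
```

Keeping each transformation as a pure function makes the wording easy to review and unit-test before it ever reaches a live incident doc.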

Which information should be excluded or redacted to prevent sensitive data from entering incident docs?

There are three main categories of information to exclude or redact—PII/customer identifiers, secrets/credentials, and sensitive internal links—based on what turns an incident doc into a security risk.

More specifically, you should design the doc for the widest expected audience and keep sensitive detail behind controlled systems.

- PII and customer identifiers: emails, phone numbers, addresses, user IDs tied to real people, payment identifiers.

- Secrets and credentials: API keys, tokens, passwords, session cookies, signed URLs.

- Sensitive internal links and data: admin console URLs, private dashboards, internal-only endpoints, debug dumps.

A practical micro-rule is an allowlist: only insert fields you explicitly approve. Everything else becomes a link to Sentry (where access is already controlled) rather than raw text in the doc.
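A minimal sketch of the allowlist rule, with one extra redaction pass for email addresses, is shown below. The allowed field names are assumptions, and the email pattern is deliberately simple; it illustrates the idea and is not a complete PII detector.

```python
import re

# Assumption: only these fields are approved for insertion into the doc.
ALLOWED_FIELDS = {"title", "environment", "release", "sentry_url", "first_seen"}

# Simple illustrative pattern; real PII detection needs more than this.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def sanitize(fields: dict) -> dict:
    """Drop non-allowlisted fields, then mask emails in what remains."""
    safe = {}
    for name, value in fields.items():
        if name not in ALLOWED_FIELDS:
            continue  # anything not explicitly approved never reaches the doc
        safe[name] = EMAIL_PATTERN.sub("[redacted email]", value)
    return safe
```

The allowlist does most of the work here: a new, unexpected field in the payload is dropped by default instead of leaking into a widely shared document.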

How do rate limits, retries, and deduplication change your automation design?

Rate limits, retries, and deduplication change your automation design by requiring idempotent updates, queued writes, and “collapse repeated alerts” logic, so your workflow stays stable when incidents cause sudden spikes in events.

The best time to design for spikes is before you experience one.

- Idempotency key per incident: one incident = one doc ID.

- Backoff retries: avoid hammering APIs when failures occur.

- Event collapsing: treat repeated alerts as “update doc status” instead of “append new block” every time.

- Update-only-if-changed: prevent thrash updates that clutter the doc and waste quota.

- Queue + worker model (for custom builds): decouple inbound webhooks from doc writes so you can absorb bursts.

These choices protect readability and reliability at the same time.
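Event collapsing and update-only-if-changed can be combined in a small buffer between the webhook receiver and the doc writer. This sketch is in-memory and single-process, and the class name `PendingWrites` is hypothetical; a production build would more likely use a durable queue.

```python
import hashlib
import json


class PendingWrites:
    """Collapse bursts of events into at most one write per incident key."""

    def __init__(self) -> None:
        self._pending = {}       # incident key -> latest state
        self._last_written = {}  # incident key -> digest of last state handed out

    @staticmethod
    def _digest(state: dict) -> str:
        # sort_keys makes the digest independent of dict key order.
        payload = json.dumps(state, sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def enqueue(self, incident_key: str, state: dict) -> None:
        """Latest state wins: repeated alerts overwrite the pending entry."""
        self._pending[incident_key] = state

    def drain(self) -> list:
        """Return (key, state) pairs whose state changed since the last drain."""
        writes = []
        for key, state in self._pending.items():
            digest = self._digest(state)
            if self._last_written.get(key) != digest:
                writes.append((key, state))
                # Simplification: a real worker should record the digest
                # only after the doc write has actually succeeded.
                self._last_written[key] = digest
        self._pending.clear()
        return writes
```

A worker would call `drain()` on a timer and perform one doc update per returned pair, so a burst of a hundred identical alerts results in a single write.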

What is the difference between automated incident docs and manual postmortems, and how should teams use both?

Automated incident docs win at speed and factual capture, while manual postmortems are best for analysis, accountability via action items, and long-term learning, so teams should use automation to create the narrative skeleton and use humans to finalize the learning artifact.

Thus, the correct workflow is “automation first, reflection second.”

- Use automated incident docs to capture initial signal and context immediately, maintain a live timeline, and reduce handoff friction.

- Use manual postmortems to establish root causes thoughtfully, agree on preventive actions and owners, and improve playbooks and alerts.

Done well, the automation doesn’t replace your postmortem culture—it supports it by ensuring you never start from zero and you never argue about basic facts.

As supporting evidence, a 2024 study from the University of Minho’s Department of Informatics reported that case studies evaluating an improved incident-management process showed increased efficiency through reduced incident response time. di.uminho.pt