Exporting Google Docs to GitLab is the most practical way to turn living documents into versioned content: you convert a Doc into Markdown/HTML, commit it to a repository, and use GitLab’s merge requests to review, approve, and publish changes with a clear audit trail.

Next, the key decision is whether you should automate that pipeline or keep it manual: automation saves time, reduces copy-paste errors, and standardizes formatting—but it also introduces setup, maintenance, and “edge-case” debugging you must plan for.

Then, the “best” approach depends on your team: solo writers often prefer a clean manual export + MR routine, while documentation teams typically benefit from automation through a connector or script that outputs consistent Markdown and enforces checks in CI.

Once you treat documentation like code (branching, reviewing, and validating), you can build a technical-writer-friendly GitLab workflow that scales without breaking images, links, or formatting.

What does “export Google Docs to GitLab” mean in practical terms?

“Export Google Docs to GitLab” means converting a Google Doc into a Git-friendly format (usually Markdown or HTML), committing it into a GitLab repository, and managing edits via branches and merge requests so changes are trackable, reviewable, and reversible.

To better understand what “export” really implies, it helps to separate the document source (Google Docs) from the publishing system (GitLab repo + pipeline).

Does “export” mean a one-time copy or a repeatable sync?

It means either, but teams succeed more often with a repeatable sync because documentation changes continuously and must stay aligned with releases, product updates, and support realities.

More specifically, a one-time copy is fine for a static document (like a one-off announcement), but it breaks down when you need ongoing updates, approvals, and a consistent publishing cadence.

- One-time copy works when:

  - The Doc is “final” and unlikely to change.

  - You just need a snapshot stored in GitLab for reference.

- Repeatable sync works when:

  - The Doc evolves weekly or per sprint.

  - You need consistent formatting and a standard directory structure.

  - You want reviewers to comment on diffs inside merge requests.

Which GitLab destination is most common for exported Docs?

The most common destination is a GitLab repository, typically in a /docs folder (or a dedicated docs repo), so you can version changes and publish from the repo using a site generator or GitLab Pages.

In addition, some teams use GitLab Wiki, but repositories are usually better for automation, CI checks, and consistent build outputs.

Common repo patterns that stay maintainable:

- `/docs/product/feature-name.md`

- `/docs/help-center/article-slug.md`

- `/handbook/policies/...`

- `/release-notes/YYYY/MM/...`

What file formats should you expect when exporting?

You should expect Markdown or HTML as the “commit-ready” formats, because they render well, diff cleanly, and work with most docs publishing toolchains.

Meanwhile, Google Docs exports like .docx or .pdf are useful for distribution, but they are usually poor fits for a GitLab-based docs workflow because diffs aren’t meaningful and automation is limited.

Is automating Google Docs → GitLab publishing worth it?

Yes—automating Google Docs to GitLab publishing is worth it when you need consistent formatting, fewer manual errors, and faster review cycles, because automation standardizes conversion, enforces checks, and keeps docs aligned with product changes.

However, the best results come when you automate only the repeatable parts and keep human review where it matters.

Do you need automation if you publish docs weekly?

Yes, you usually need automation if you publish weekly, because repeated manual exports create formatting drift, broken links, and inconsistent asset handling over time.

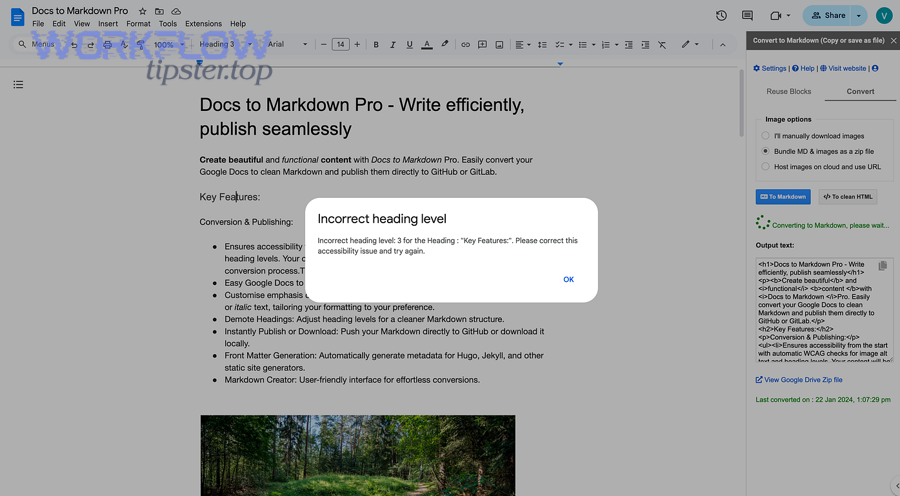

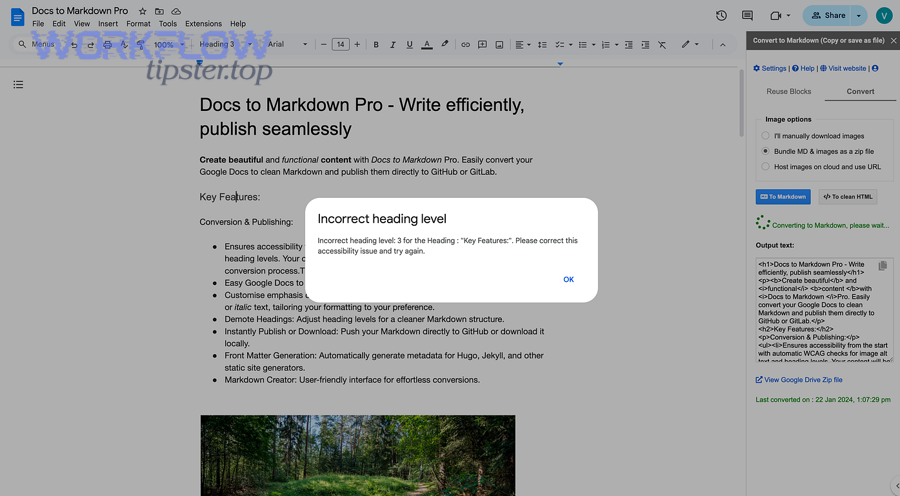

Specifically, weekly publishing tends to amplify small inconsistencies—like heading levels, list spacing, and image naming—until your docs repo becomes noisy and hard to maintain.

Automation helps weekly cadence by:

- Enforcing a consistent output format (Markdown flavor, front matter, heading rules)

- Standardizing asset handling (images, diagrams, attachments)

- Reducing “last-mile” copy/paste steps that create invisible errors

What are the biggest wins you get from treating docs like code in GitLab?

The biggest wins are traceability, review quality, and safe rollbacks, because GitLab gives you diffs, merge request discussions, approvals, and a single source of truth tied to releases.

Moreover, this is exactly where “Automation Integrations” make a measurable difference: you remove repetitive work and keep the team focused on clarity, accuracy, and user impact rather than formatting cleanup.

Concrete wins:

- A clear timeline of who changed what and why

- Easier collaboration between writers, PMs, engineers, and support

- Faster updates during incidents or urgent release changes

- The ability to revert a change in seconds if it causes confusion

What are the tradeoffs and risks of automation?

Automation introduces setup cost, maintenance, and edge-case handling, because Docs content is rich and conversion is never perfect across tables, complex layouts, and embedded objects.

When teams skip a “content contract” (an agreed set of authoring and output rules), automation can produce inconsistent Markdown and require constant patching.

Typical risks:

- Tables that convert poorly

- Images that export with unstable names

- Links that change shape (Docs links vs site links)

- People bypassing the workflow and pasting directly into GitLab

Evidence: According to a 2017 dissertation from the Department of Electrical Engineering and Computer Sciences at the University of California, Berkeley, a quantification model used in large-scale software quality analysis estimated the fraction of security issues with only 4% error, which suggests that automated analysis can be highly accurate when the pipeline and data constraints are well-defined. (www2.eecs.berkeley.edu)

What are the best ways to export Google Docs content into GitLab?

There are 4 main ways to export Google Docs content into GitLab—manual export, Docs-to-Markdown converters, no-code automation, and custom scripts—based on how much control, repeatability, and maintenance you want.

Below, each method is “best” for a different kind of team and publishing requirement.

What is the simplest manual workflow (export → commit → merge request)?

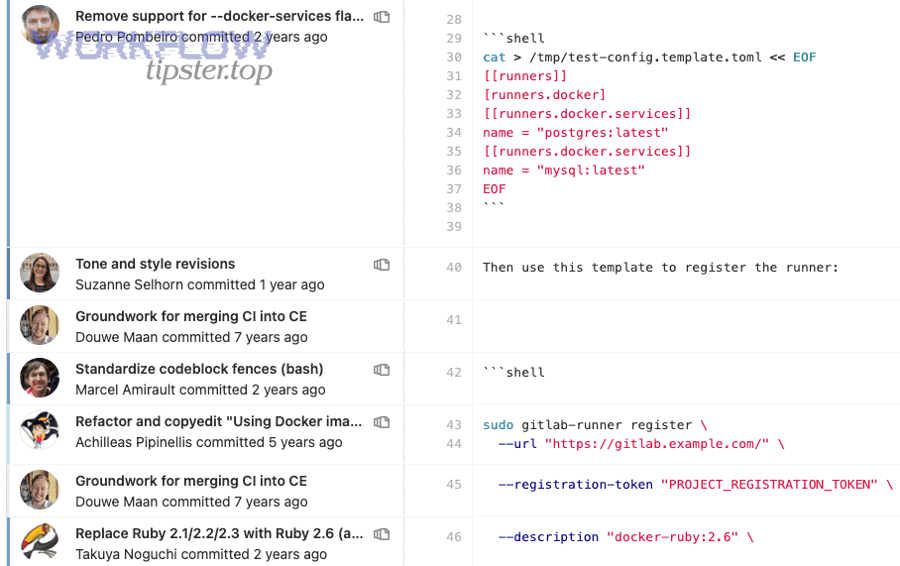

The simplest workflow is to export a Doc to Markdown/HTML, commit it to a branch, and open a merge request, so reviewers can comment on diffs and approve changes before merge.

Then, you keep it stable by using naming conventions and a consistent folder structure.

A reliable manual flow:

- Export the Doc (preferably to .html or .docx then convert to Markdown consistently)

- Normalize headings, lists, and links (quick cleanup pass)

- Place images into a predictable `/assets/` path

- Commit on a feature branch: `docs/update-feature-x`

- Open a merge request and request review
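The commit and merge request steps of the manual flow above can be sketched as a small command plan. This is a minimal sketch, not a definitive implementation: the function name `plan_publish_commands` and the default target branch `main` are assumptions, and the last command relies on GitLab push options (`merge_request.create`, `merge_request.target`), which should be checked against your GitLab version.

```python
from typing import List


def plan_publish_commands(branch: str, files: List[str], message: str,
                          target: str = "main") -> List[List[str]]:
    """Return the git commands for: new branch -> stage -> commit -> push.

    Nothing is executed here; the caller decides whether to run the plan.
    """
    if not files:
        raise ValueError("nothing to commit: pass at least one exported file")
    return [
        ["git", "checkout", "-b", branch],
        ["git", "add", "--", *files],
        ["git", "commit", "-m", message],
        # Assumption: the remote is a GitLab server that honors push options
        # and opens a merge request against `target` when the branch is pushed.
        ["git", "push",
         "-o", "merge_request.create",
         "-o", f"merge_request.target={target}",
         "-u", "origin", branch],
    ]
```

Each command in the plan can be printed for a dry run or passed to `subprocess.run(command, check=True)`. Returning a plan instead of running git directly keeps the routine easy to review before anything touches the repository.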

Where it fits:

- Solo writers

- Small teams

- Low-frequency publishing

Which converters work best for Docs → Markdown?

Docs → Markdown conversion works best when you prioritize consistent structure over “pixel-perfect” fidelity, because Markdown is content-first and Google Docs is layout-first.

More specifically, the best converters are the ones you can configure and test against your most common doc patterns (headings, callouts, tables, code blocks).

What “good conversion” looks like:

- Headings map cleanly (H1/H2/H3)

- Lists remain stable (no random renumbering)

- Links convert into clean Markdown

- Images export with deterministic filenames (or at least deterministic paths)
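One way to test a converter against your common doc patterns is to keep a hand-checked “golden” Markdown file per pattern and compare each new conversion against it. The sketch below uses Python's standard `difflib`; the function name `conversion_drift` and the file labels are illustrative assumptions, not part of any converter.

```python
import difflib
from typing import List


def conversion_drift(golden: str, converted: str) -> List[str]:
    """Return the changed lines between a golden file and a fresh conversion.

    Removed lines are prefixed with "-", added lines with "+".
    An empty list means the converter output matches the golden file.
    """
    diff = list(difflib.unified_diff(
        golden.splitlines(),
        converted.splitlines(),
        fromfile="golden.md",
        tofile="converted.md",
        lineterm="",
        n=0,  # no context lines, only the changes themselves
    ))
    # The first two entries are the "---"/"+++" file headers; "@@" lines
    # are hunk markers. Everything else is an actual changed line.
    return [line for line in diff[2:] if not line.startswith("@@")]
```

For example, if a converter renumbers a list, the drift report shows the removed `2. Two` line and the added `1. Two` line, which makes “random renumbering” visible before it reaches a merge request.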

When should you use a no-code automation platform?

You should use a no-code platform when you need repeatability with minimal engineering, because you can trigger exports on a schedule or on a “publish” action and push results into GitLab automatically.

Meanwhile, you must still decide how you will handle images and formatting normalization, because no-code connectors often treat content as text blobs unless you design around that.

No-code is best for:

- Non-technical documentation teams

- “Good enough” Markdown output

- Straightforward docs (few complex tables, limited embedded objects)

When is a custom script the best option?

A custom script is best when you need full control over conversion rules, assets, and CI checks, because you can enforce a strict output contract and scale without surprises.

In addition, scripts are the easiest path to deterministic asset naming, stable link rewriting, and consistent front matter—especially when your docs are published via a generator like Hugo, Docusaurus, MkDocs, or GitLab Pages.

Scripts are best for:

- Large docs sites

- Strict formatting requirements

- Frequent publishing

- Multi-repo or multi-language docs
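As an illustration of the consistent front matter a script can enforce, here is a minimal sketch that emits the same fields in the same order for every page. The field names (`title`, `slug`, `source_doc_id`) are assumptions for this example; use whatever your site generator expects.

```python
import json


def front_matter(title: str, slug: str, source_doc_id: str) -> str:
    """Build a YAML front matter block with a fixed, sorted field order."""
    fields = {"title": title, "slug": slug, "source_doc_id": source_doc_id}
    lines = ["---"]
    for key in sorted(fields):
        # json.dumps yields a double-quoted string with escapes, which is
        # also a valid YAML double-quoted scalar for typical titles.
        lines.append(f"{key}: {json.dumps(fields[key])}")
    lines.append("---")
    return "\n".join(lines) + "\n"
```

Sorting the keys and always quoting the values means two exports of the same Doc produce byte-identical front matter, so diffs only show real content changes.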

What does a “technical-writer-friendly” GitLab publishing workflow look like end-to-end?

A technical-writer-friendly GitLab publishing workflow is a 7-step pipeline—source Doc → convert → normalize → branch → merge request → CI validation → publish—so writers can focus on content while GitLab handles review, validation, and release.

Below is the end-to-end model that scales without turning writers into build engineers.

What does the ideal repo structure look like for docs in GitLab?

The ideal structure is content separated from assets and config, because it keeps diffs clean and makes broken images easy to detect.

Next, keep structure predictable so contributors don’t “invent folders” that fragment your information architecture.

A strong baseline:

/docs/for Markdown content/docs/assets/(or/static/) for images/docs/_includes/for reusable snippetsREADME.mdexplaining how to preview and submit changesCONTRIBUTING.mdwith a Docs Authoring Contract

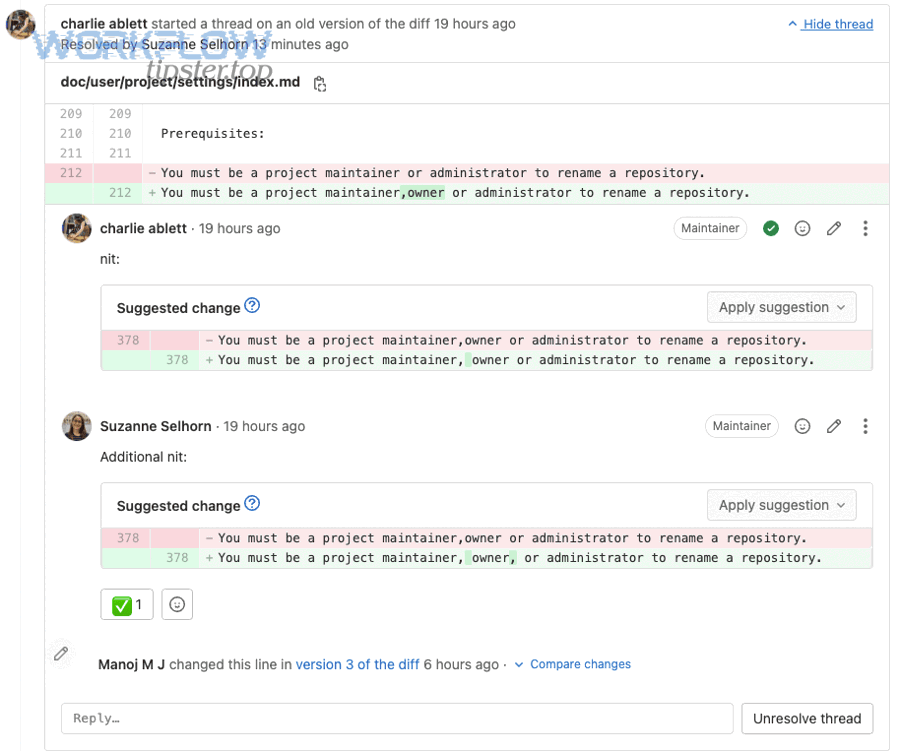

How should writers work with branches and merge requests?

Writers should work in short-lived branches and submit one-topic merge requests, because it reduces review complexity and makes it easier to roll back if needed.

To illustrate, a merge request titled “Update OAuth setup instructions” is easy to review, while “Update 14 docs pages” is hard to validate and tends to ship errors.

A writer-friendly MR routine:

- Branch name: `docs/fix-setup-steps`

- MR title: action + scope (“Clarify GitLab runner setup steps”)

- Checklist: links tested, images render, headings correct

- Reviewer: product + engineering + support (as needed)
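Branch naming is easy to keep consistent if the branch is derived from the merge request title. The helper below is a hypothetical sketch; the `docs/` prefix matches the example above, while the function name and the 50-character limit are assumptions.

```python
import re


def branch_name(mr_title: str, prefix: str = "docs/", max_len: int = 50) -> str:
    """Turn an MR title into a lowercase, hyphenated branch name."""
    # Replace every run of non-alphanumeric characters with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", mr_title.lower()).strip("-")
    if not slug:
        raise ValueError("MR title has no letters or digits to build a branch name")
    # Trim long titles and avoid ending on a dangling hyphen.
    return prefix + slug[:max_len].rstrip("-")
```

With this convention, the title “Clarify GitLab runner setup steps” maps to `docs/clarify-gitlab-runner-setup-steps`, so reviewers can tell the scope of a branch from its name alone.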

What CI checks should run before merging docs changes?

CI should run checks that catch failures early (broken links, invalid Markdown, missing assets, and build errors) so the main branch always stays publishable.

Especially for teams publishing frequently, this prevents silent regressions and keeps the docs site stable.

Useful CI checks:

- Markdown linting (style + heading rules)

- Link checking (internal and external)

- Image existence validation (referenced assets must exist)

- Build preview (generate site artifact for reviewers)
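The image existence check in the list above can be sketched with the standard library alone. This is a minimal sketch under stated assumptions: images use the inline `![alt](path)` syntax, relative paths resolve from the Markdown file's folder, and a leading `/` is treated as relative to the scanned root. The function name `missing_images` is illustrative.

```python
import re
from pathlib import Path
from typing import List, Tuple

# Captures the target of inline Markdown images: ![alt](target "optional title")
IMAGE_REF = re.compile(r"!\[[^\]]*\]\(([^)\s]+)")
# Matches targets with a URL scheme such as https: or data:
REMOTE = re.compile(r"^[a-z][a-z0-9+.-]*:", re.IGNORECASE)


def missing_images(docs_root: Path) -> List[Tuple[str, str]]:
    """Return (markdown file, image target) pairs whose file does not exist."""
    problems = []
    for md_file in sorted(docs_root.rglob("*.md")):
        text = md_file.read_text(encoding="utf-8")
        for target in IMAGE_REF.findall(text):
            if REMOTE.match(target) or target.startswith("//"):
                continue  # remote images are a job for the link checker
            path = target.split("#")[0].split("?")[0]
            if path.startswith("/"):
                resolved = docs_root / path.lstrip("/")  # assumption, see lead-in
            else:
                resolved = md_file.parent / path
            if not resolved.is_file():
                problems.append((md_file.relative_to(docs_root).as_posix(), target))
    return problems
```

In a CI job, a wrapper script would call `missing_images(Path("docs"))`, print each pair, and exit with a non-zero status when the list is not empty so the pipeline fails before merge.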

Which automation approach should you use: no-code tools vs low-code workflows vs scripts?

No-code wins in speed, low-code is best for flexibility, and scripts are optimal for control and scale, because each approach trades simplicity for determinism and maintainability.

However, the “right” choice is the one that matches your publishing frequency, content complexity, and who owns the workflow.

Here’s a quick table (so you can compare the options by decision criteria like control, maintenance, and formatting stability):

| Approach | Best for | Control over formatting/assets | Maintenance | Typical risk |
|---|---|---|---|---|
| No-code | Small teams, fast setup | Low–Medium | Low | Conversion drift |
| Low-code | Teams needing customization | Medium–High | Medium | Hidden complexity |
| Scripts | Large docs, strict standards | High | Medium–High | Engineering ownership |

When should you choose no-code?

Choose no-code when your priority is launching quickly and your Docs are mostly simple (headings, paragraphs, basic lists).

Moreover, no-code is ideal if writers are the owners of the workflow and you want minimal dependency on engineering.

For example, if your organization already sends Google Docs to Slack notifications for content approvals, the same pattern applies here: trigger a “publish” action, push the content into GitLab, and let merge requests handle review.

When should you choose low-code?

Choose low-code when you need custom steps—like rewriting links, generating front matter, or enforcing naming rules—without building a full engineering project.

For example, if you already run multi-step automations such as Google Docs to Calendly in your ops workflows, low-code fits naturally: you orchestrate a sequence (convert → clean → commit → MR) with reusable components.

When should you choose scripts?

Choose scripts when you need deterministic outputs and predictable scaling, because scripts can enforce the content contract exactly and run identically on every machine/CI runner.

In addition, scripts are the best fit when docs publishing is tied to releases and you must guarantee that a merge to main always produces a valid site.

Can you keep formatting, images, and links stable when moving from Docs to GitLab?

Yes—you can keep formatting, images, and links stable if you standardize authoring rules, use a consistent conversion target (Markdown/HTML), and enforce validation in CI, because stability comes from constraints and automation checks, not from “perfect conversion.”

Then, you design your workflow so the most failure-prone elements (tables, images, internal links) are handled in a controlled way.

How do you preserve headings, lists, and basic formatting?

You preserve them by enforcing a Docs Authoring Contract that maps cleanly to Markdown, because converters behave best when the source document uses consistent semantic structure.

Specifically, require:

- One H1 (title) and logical H2/H3 structure

- Real numbered/bulleted lists (no manual numbering)

- Code in monospaced style (or fenced code blocks after conversion)

- No layout hacks (such as multi-column tables used only for alignment)
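The heading rule in the contract above (one H1, no skipped levels) is simple to check after conversion. The sketch below assumes ATX-style headings (`#`, `##`, ...) and ignores lines inside fenced code blocks; the function name `heading_problems` is an assumption for illustration.

```python
import re
from typing import List

HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def heading_problems(markdown: str) -> List[str]:
    """Report skipped heading levels and a missing or duplicated H1."""
    problems = []
    levels = []
    in_fence = False
    for number, line in enumerate(markdown.splitlines(), start=1):
        if line.lstrip().startswith("```"):
            in_fence = not in_fence  # toggle on opening and closing fences
            continue
        if in_fence:
            continue  # "# comment" inside code is not a heading
        match = HEADING.match(line)
        if not match:
            continue
        level = len(match.group(1))
        if levels and level > levels[-1] + 1:
            problems.append(
                f"line {number}: H{level} follows H{levels[-1]} (skipped a level)")
        levels.append(level)
    h1_count = levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one H1, found {h1_count}")
    return problems
```

An empty result means the document satisfies the heading part of the contract; the messages are written so they can be pasted into a merge request comment as-is.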

How do you keep images from breaking?

You keep images stable by controlling where images live and how they are referenced, because most broken docs happen when image filenames or paths change between exports.

Practical rules that work:

- Store all exported images under a single path like `/docs/assets/<article-slug>/`

- Rename images deterministically (e.g., `step-01-login.png`, `diagram-auth-flow.svg`)

- Reference images with relative paths (stable across environments)

- Add a CI check that fails if an image is referenced but missing
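Descriptive names like the ones above need a human to choose them. Where that is not practical, one alternative (a swapped-in technique, not something the rules above require) is to name each image after a hash of its content, so the same picture always gets the same filename no matter what the export called it. The function name and the `img-` prefix below are assumptions.

```python
import hashlib
from pathlib import PurePosixPath


def stable_image_path(article_slug: str, original_name: str, data: bytes) -> str:
    """Return a repo-relative path that depends only on slug and image bytes."""
    # Twelve hex characters keep names short; collisions are very unlikely
    # within a single article's folder.
    digest = hashlib.sha256(data).hexdigest()[:12]
    extension = PurePosixPath(original_name).suffix.lower() or ".bin"
    return f"docs/assets/{article_slug}/img-{digest}{extension}"
```

Because the name ignores the exporter's `image1.png`, `image2.png` numbering, inserting a new screenshot in the middle of a Doc does not rename every image after it, which keeps diffs small.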

How do you prevent link rot and messy URL rewrites?

You prevent it by separating Docs links from site links, because Google Docs links are not the same thing as published docs URLs.

More importantly, establish link policies:

- Use relative links for internal docs pages (so they work in preview builds)

- Use a consistent slug system for filenames and headings

- Keep a redirect map if your published site supports it

- Add link checking in CI so broken links fail fast
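Separating Docs links from site links usually means rewriting any Google Docs URL left in the converted Markdown. The sketch below assumes you maintain a mapping from Doc ID to the page's relative path (the `doc_to_page` dictionary is hypothetical) and that Doc URLs follow the `https://docs.google.com/document/d/<id>/...` shape.

```python
import re
from typing import Dict, List, Tuple

# Matches the "(url)" part of a Markdown link that points at a Google Doc.
DOC_LINK = re.compile(
    r"\]\((https://docs\.google\.com/document/d/([\w-]+)[^)\s]*)\)")


def rewrite_doc_links(markdown: str,
                      doc_to_page: Dict[str, str]) -> Tuple[str, List[str]]:
    """Swap known Docs URLs for relative paths; collect unknown Doc IDs."""
    unmapped: List[str] = []

    def replace(match: "re.Match[str]") -> str:
        doc_id = match.group(2)
        page = doc_to_page.get(doc_id)
        if page is None:
            unmapped.append(doc_id)  # leave the link untouched, but report it
            return match.group(0)
        return f"]({page})"

    return DOC_LINK.sub(replace, markdown), unmapped
```

Returning the unmapped IDs instead of failing outright lets the CI job decide the policy: warn while the mapping is still being built, then fail once every published Doc has a page.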

If your support team already uses a cross-tool workflow such as Freshdesk to Smartsheet to track documentation issues, treat link checking the same way: a failing link is a trackable “ticket” that must be resolved before merge.

What advanced edge cases and optimizations make Docs → GitLab publishing scale smoothly?

There are 6 common advanced edge-case categories—tables, callouts, embedded objects, multi-language, review throughput, and CI performance—based on what typically breaks at scale and what slows publishing down.

Below are the optimizations that keep the pipeline stable when the volume grows.

How should you handle complex tables and layouts?

Handle complex tables by converting them into simpler Markdown tables, HTML tables, or structured lists, because Docs tables are often used for layout, not data, and conversion tools struggle with that ambiguity.

Especially when a table is used for alignment, convert it to a semantic structure:

- Use lists for steps

- Use headings for sections

- Use “definition lists” (or simple patterns) for term → meaning
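For the term → meaning case, a two-column Markdown table can be rewritten as a simple list. This is a rough sketch that assumes a pipe table with a header row and no escaped `|` characters inside cells; the function name and the bold-term output pattern are illustrative choices.

```python
from typing import List


def table_to_term_list(table: str) -> str:
    """Convert a two-column pipe table into "- **term**: meaning" lines."""
    rows: List[List[str]] = []
    for line in table.strip().splitlines():
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        # Skip the separator row, whose cells contain only -, : and spaces.
        if all(cell and set(cell) <= set("-: ") for cell in cells):
            continue
        rows.append(cells)
    if any(len(cells) != 2 for cells in rows):
        raise ValueError("expected a two-column table")
    body = rows[1:]  # the first remaining row is the header
    return "\n".join(f"- **{term}**: {meaning}" for term, meaning in body)
```

Raising on anything other than two columns is deliberate: wider tables usually carry real data and are better kept as tables or reviewed by hand.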

How do you scale approvals without blocking releases?

Scale approvals by defining review tiers and using merge request templates, because not every change needs the same level of scrutiny.

A tier model that works:

- Tier 1 (typos, clarifications): 1 reviewer

- Tier 2 (procedural changes): writer + SME

- Tier 3 (security/compliance): writer + legal/security approval gates

What performance optimizations matter in CI for docs?

CI performance matters most when your pipeline checks every page every time, because link checking and full-site builds can become slow at scale.

Optimize by:

- Running incremental checks (only changed files)

- Caching dependencies (site generator caches)

- Splitting jobs (lint vs build vs link check)

- Producing preview artifacts only for merge requests
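Incremental checks start with knowing which files changed. The sketch below asks git for the files that differ from a base ref and keeps only Markdown under the docs folder. The base ref `origin/main`, the `docs/` prefix, and both function names are assumptions to adapt to your repository.

```python
import subprocess
from typing import Iterable, List


def changed_markdown(paths: Iterable[str], docs_prefix: str = "docs/") -> List[str]:
    """Keep only Markdown files under the docs folder, sorted and de-duplicated."""
    return sorted({
        path for path in paths
        if path.startswith(docs_prefix) and path.lower().endswith(".md")
    })


def changed_files(base_ref: str = "origin/main") -> List[str]:
    """List files added, copied, modified, or renamed since the merge base."""
    result = subprocess.run(
        ["git", "diff", "--name-only", "--diff-filter=ACMR", f"{base_ref}...HEAD"],
        check=True, capture_output=True, text=True,
    )
    return [line for line in result.stdout.splitlines() if line]
```

A lint or link-check job can then run only on `changed_markdown(changed_files())`. A full-site check on a schedule is still worth keeping, because a change to one page can break links on pages that were not touched.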

How do you keep the workflow resilient when conversion isn’t perfect?

Keep it resilient by embracing a “convert → normalize → validate” loop, because conversion alone will never cover every Docs feature perfectly.

A resilient loop includes:

- A normalization step (rewrite headings, clean whitespace, standardize front matter)

- A validation step (lint + link + build)

- A human review step (merge request diff inspection)
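The normalize and validate parts of this loop can be sketched as two small functions. This is a simplified illustration with hypothetical names: the normalizer only cleans whitespace, and it would also strip Markdown's two-space hard line breaks and collapse blank lines inside code blocks, so a real pipeline may need to be more careful.

```python
import re
from typing import Callable, List, Tuple

# A check takes normalized Markdown and returns a list of problem messages.
Check = Callable[[str], List[str]]


def normalize(markdown: str) -> str:
    """Unify line endings, trim trailing whitespace, collapse blank-line runs."""
    lines = [line.rstrip() for line in markdown.replace("\r\n", "\n").split("\n")]
    text = "\n".join(lines)
    text = re.sub(r"\n{3,}", "\n\n", text)  # at most one blank line in a row
    return text.strip("\n") + "\n"          # exactly one trailing newline


def process(converted: str, checks: List[Check]) -> Tuple[str, List[str]]:
    """Normalize converter output, then run every check on the result."""
    normalized = normalize(converted)
    problems = [message for check in checks for message in check(normalized)]
    return normalized, problems
```

Because `normalize` is idempotent, running the pipeline twice on the same Doc produces no new diff, which leaves the human review step with only real content changes to inspect.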