Modern engineering teams don’t struggle because they lack alerts—they struggle because alerts arrive in the wrong place, without ownership, and with too much noise. This guide shows you how to set up a GitHub → Basecamp → Slack workflow that turns CI/CD events into clear, actionable DevOps alerts that your team can actually use.

You’ll start by understanding what this workflow means at a system level, then you’ll decide which GitHub events qualify as “alerts,” and how Basecamp should capture them as durable project work instead of ephemeral chatter. From there, you’ll build the pipeline step by step using the method that matches your constraints (native integration, GitHub Actions, no-code automation, or middleware).

Finally, you’ll solve the two things that decide whether alerting works in real life: signal-to-noise and reliability. Once you have the basics working, you can optimize alert governance (severity, enrichment, deduplication, and compliance) so the workflow scales with your engineering org.

What does “GitHub → Basecamp → Slack DevOps alerts” mean in a CI/CD notification workflow?

GitHub → Basecamp → Slack DevOps alerts is a CI/CD notification workflow that routes selected GitHub events into Basecamp as actionable project updates and then broadcasts a concise, high-signal summary into Slack for fast team awareness.

To better understand why this pattern works, it helps to separate where alerts originate, where work gets tracked, and where attention gets captured.

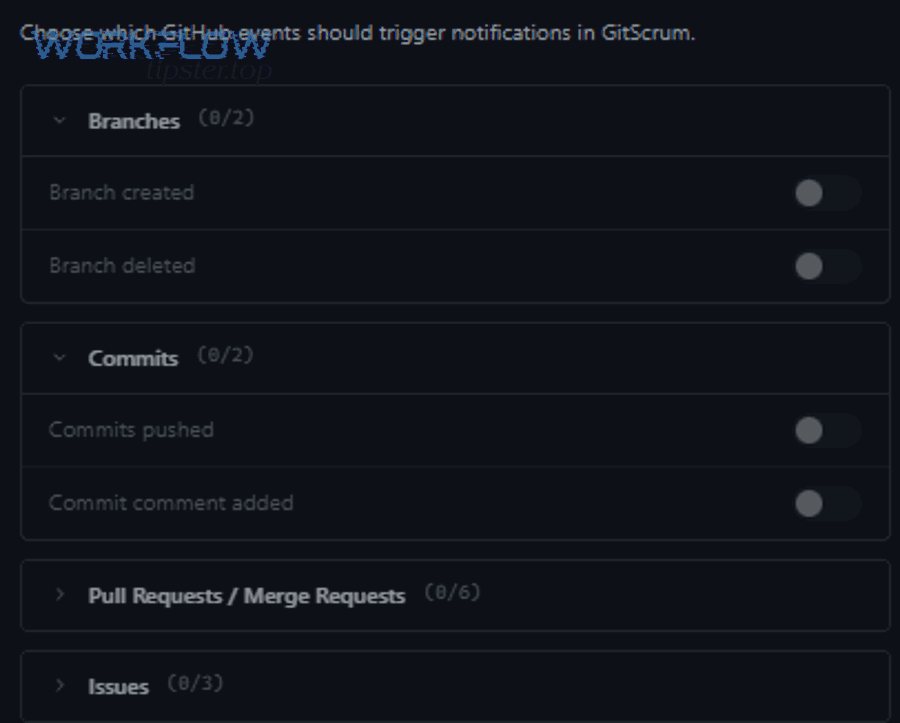

What GitHub events should you treat as DevOps alerts (and why)?

There are 5 main types of GitHub events you should treat as DevOps alerts—Code, CI, Release, Deploy, and Ops—based on a single criterion: actionability (someone can take a clear next step within minutes).

Next, the practical question becomes: which of these types matters enough to justify interrupting a human?

1) Code events (PRs and merges)

- Alert-worthy when: a PR merges to a protected branch, or a hotfix PR merges.

- Not alert-worthy when: every PR opens, every review comment appears, or every commit pushes to feature branches.

- Why it matters: merges often trigger CI/CD, change risk, and downstream work.

2) CI events (workflow failures/success)

- Alert-worthy when: a workflow fails on main/release, or a critical job fails (tests, build, lint gates).

- Not alert-worthy when: routine successes for every branch are posted to a team channel.

- Why it matters: failures block deployment and create expensive context switching.

3) Release events (tags and releases)

- Alert-worthy when: a release is cut, a version is promoted, or release notes are published.

- Not alert-worthy when: lightweight tags are created during internal experimentation.

- Why it matters: releases define what changed and when it should be communicated.

4) Deploy events (environments, promotions, rollbacks)

- Alert-worthy when: production deploy succeeds/fails, rollback occurs, canary is promoted.

- Not alert-worthy when: dev environment deploys happen continuously without any decision needed.

- Why it matters: deploy outcomes affect customers and on-call readiness.

5) Ops/security events (dependency alerts, secrets scanning, policy failures)

- Alert-worthy when: an urgent vulnerability is detected in runtime dependencies, or secrets are exposed.

- Not alert-worthy when: low-severity or informational scans are posted unfiltered to broad channels.

- Why it matters: these events can represent real risk, but they require strong filtering and ownership.

A simple rule you can use immediately: if the alert doesn’t clearly identify an owner and a next action, it’s not an alert—it’s noise.
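The rule above can be expressed as a small gate that runs before anything is posted. This is a minimal sketch, not a prescribed implementation; the `CandidateEvent` type and its field names are hypothetical and only illustrate the "owner plus next action" test.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CandidateEvent:
    """Hypothetical shape for an event that might become an alert."""
    kind: str                    # "code", "ci", "release", "deploy", or "ops"
    owner: Optional[str]         # person or team expected to respond
    next_action: Optional[str]   # one-sentence instruction


def is_alert(event: CandidateEvent) -> bool:
    """Return True only when the event names both an owner and a next action."""
    has_owner = bool(event.owner and event.owner.strip())
    has_action = bool(event.next_action and event.next_action.strip())
    return has_owner and has_action
```

Anything that fails this check can still be logged, but it should not interrupt a person.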

How should Basecamp represent GitHub activity so it stays actionable?

Basecamp should represent GitHub activity as durable work objects—to-dos, threads, or Campfire updates—where each alert becomes a decision, an assignment, or a documented next step, not just a message.

Then, instead of treating Basecamp like another “notification sink,” you treat it like the system of record that turns signal into execution.

Use Basecamp to store:

- Who owns the response (assignee)

- What action is required (fix, rollback, triage, inform)

- Where to investigate (deep links to run logs, PR, commit, release)

- What the outcome was (resolved, shipped, rolled back)

Choose Basecamp objects by intent:

- To-dos: best for CI failures, rollback tasks, urgent fixes, and ownership.

- Message board: best for release announcements, postmortem summaries, and decision logs.

- Campfire: best for “heads up” updates with a link to a durable record (like a to-do or message thread).

- Docs & Files: best for runbooks, playbooks, and release checklists that alerts can reference.

When Basecamp captures the “why” and “who,” Slack can stay short—Slack becomes the attention surface, while Basecamp becomes the action surface.

How do you set up GitHub → Basecamp → Slack alerts step by step?

The most reliable way to set up GitHub → Basecamp → Slack DevOps alerts is to build it in 6 steps—events, policy, Basecamp structure, delivery method, message mapping, and testing—so GitHub signals become assigned Basecamp work and summarized Slack notifications.

Below, we’ll move from fundamentals to implementation without skipping the parts that usually break.

Step 1: Define your “alert contract”

- Start with 8 fields that every alert must have:

- Event type

- Repo/service

- Environment

- Status (success/fail)

- Severity

- Owner/team

- Primary link (run/PR/release)

- Next action (one sentence)
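One way to make the contract enforceable is to model the eight fields as a typed record. The sketch below assumes Python and uses hypothetical field names derived from the list above; the `missing_fields` helper is an illustration, not part of any GitHub, Basecamp, or Slack API.

```python
from dataclasses import dataclass, asdict
from typing import List


@dataclass(frozen=True)
class Alert:
    """The eight-field alert contract (field names are assumptions)."""
    event_type: str
    repo: str
    environment: str
    status: str        # "success" or "fail"
    severity: str
    owner: str
    primary_link: str  # run, PR, or release URL
    next_action: str   # one sentence

    def missing_fields(self) -> List[str]:
        """List the contract fields that are empty or whitespace-only."""
        return [name for name, value in asdict(self).items()
                if not str(value).strip()]
```

An alert whose `missing_fields()` result is non-empty can be rejected or sent to a triage list instead of a team channel.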

Step 2: Decide your routing policy

- Which Slack channel?

- Which Basecamp project?

- Which Basecamp object type (to-do vs message)?

Step 3: Build a Basecamp “Ops lane”

- Add a dedicated to-do list like:

- “CI Failures (Main)”

- “Production Deployments”

- “Hotfix Queue”

- “Security Follow-ups”

Step 4: Choose integration method

- Native GitHub→Slack plus separate GitHub→Basecamp method, or one unified approach via automation/middleware.

Step 5: Implement message mapping

- Convert GitHub event payloads into your alert contract fields.

Step 6: Test end-to-end

- Simulate a PR merge, a workflow failure, and a production deployment.

Which integration methods can connect GitHub to Basecamp and Slack?

There are 4 main integration methods to connect GitHub to Basecamp and Slack—native integration, Actions/webhooks, no-code automation, and middleware—based on the criterion of control vs speed.

Then, you can choose the simplest method that still gives you the filtering and ownership you need.

1) Native GitHub → Slack integration

- Best for: quick visibility into repos and basic notifications.

- Limitations: weak cross-tool orchestration and limited Basecamp action modeling.

- Typical use: PR and issue visibility, lightweight channel updates.

2) GitHub Actions → Slack (webhook-based)

- Best for: CI/CD alerts tied directly to workflows.

- Strength: can format messages, include build context, and update messages to reduce noise.

- Limitations: still needs a plan for Basecamp object creation.

3) No-code automation (GitHub ↔ Basecamp, then Slack)

- Best for: teams that want “good enough” quickly without running servers.

- Strength: templates for common triggers (issue created, PR merged, release published).

- Limitations: can become brittle at scale and may require careful rate-limit handling.

4) Middleware/custom webhook service

- Best for: teams needing enterprise-grade control (dedupe, enrichment, audit logs, complex routing).

- Strength: full governance across GitHub, Basecamp, and Slack.

- Limitations: you own uptime, security, and maintenance.

If your team is actively building automation workflows for engineering operations, start with the simplest approach that can still enforce alert contract + routing policy + dedupe. You can always evolve.

How do you map fields from GitHub into Basecamp updates and then into Slack messages?

Mapping GitHub events into Basecamp and Slack means translating a raw payload into a consistent alert schema that creates an actionable Basecamp record first, then posts a short Slack summary pointing back to that record.

Specifically, the mapping works best when you treat Basecamp as the “full context” destination and Slack as the “headline” destination.

To make the mapping concrete, the table below shows a practical event-to-output plan (GitHub → Basecamp object → Slack message). Use it as a default you can refine.

Table context: This table lists common DevOps-related GitHub events and shows how to represent each event as a Basecamp work item and a Slack summary, so alerts stay actionable and consistent.

| GitHub event | Basecamp representation | Slack summary format | Owner rule |
|---|---|---|---|
| Workflow failure on main | To-do: “Fix CI failure: <workflow>” with run link | “CI failed on main: <repo> / <workflow> → Basecamp to-do link” | Code owner or on-call |
| Production deploy failed | To-do: “Investigate deploy failure: <service>” + rollback checklist | “🚨 Deploy failed: <service> (prod) → Basecamp incident to-do” | On-call |
| Release published | Message board post with notes + links | “Release <vX.Y> shipped → Basecamp release post” | Release captain |
| Hotfix PR merged | To-do + short thread on why | “Hotfix merged: <PR> → Basecamp hotfix record” | PR author + reviewer |
| Security alert high severity | To-do + assignment + remediation link | “Security alert: <CVE> → Basecamp remediation to-do” | Security owner |

Slack stays short because it points to Basecamp. Basecamp stays valuable because it holds the who/what/why, not just a link dump.
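To illustrate the first table row, here is a sketch that turns a GitHub `workflow_run` webhook payload into a Basecamp to-do title and a Slack summary. The payload keys used (`workflow_run.name`, `head_branch`, `conclusion`, `html_url`, and `repository.full_name`) come from GitHub's webhook payload; the function name, the returned dictionary keys, and the `basecamp_url` argument (the link you get back after creating the to-do) are assumptions for this example.

```python
from typing import Any, Dict


def map_workflow_failure(payload: Dict[str, Any], owner: str,
                         basecamp_url: str) -> Dict[str, str]:
    """Map a failed workflow_run payload to Basecamp and Slack text."""
    run = payload["workflow_run"]
    if run.get("conclusion") != "failure":
        raise ValueError("only failed workflow runs are mapped here")
    repo = payload["repository"]["full_name"]
    workflow = run["name"]
    branch = run["head_branch"]
    return {
        # Basecamp holds the full context: title, run link, owner.
        "basecamp_todo_title": f"Fix CI failure: {workflow}",
        "basecamp_todo_notes": f"Run: {run['html_url']}\nOwner: {owner}",
        # Slack holds only the headline and a pointer back to Basecamp.
        "slack_text": f"CI failed on {branch}: {repo} / {workflow} → {basecamp_url}",
    }
```

The Basecamp record is created first so that its link is available when the Slack summary is built.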

Should you send every GitHub notification to Slack and Basecamp?

No. You should not send every GitHub notification to Slack and Basecamp, because doing so creates alert fatigue, hides truly urgent signals, and increases response time. The best systems filter for actionability, route by ownership, and summarize outcomes instead of streaming raw events.

More importantly, the fastest way to break a DevOps alerting setup is to let “visibility” become “noise.”

Here are three reasons this is non-negotiable:

- Reason 1: Humans cannot triage unlimited interruptions

- Every low-value ping competes with deep work.

- The team starts ignoring channels, muting notifications, or building unhealthy “ignore until it’s on fire” habits.

- Reason 2: Unowned alerts become orphaned alerts

- If the alert doesn’t name an owner, everyone assumes someone else has it.

- Basecamp gets filled with tasks nobody wants, Slack gets filled with messages nobody reads.

- Reason 3: Noise breaks trust in the system

- Once people feel spammed, they stop believing urgent messages are urgent.

- Your on-call experience degrades and incident response becomes slower.

An engineering principle you can use as a guardrail: every alert must earn the right to interrupt.

According to Slack Engineering (2025), Slack explicitly designed its notification logic to reduce duplicate notifications and prevent notification fatigue as systems and roles overlap.

What filtering rules reduce noise while keeping coverage?

There are 7 main filtering rules that reduce noise while keeping coverage—severity, branch, environment, ownership, deduplication, time windows, and thresholds—based on the criterion of whether the alert changes what someone should do next.

Then, you can implement these rules in your integration tool, in GitHub Actions logic, or in middleware.

- 1) Severity filter

- Only send to Slack in real time when severity is P2 or more urgent (P0 through P2, or your equivalent).

- Always create a Basecamp record for P0/P1 with an owner.

- 2) Branch filter

- Only alert on protected branches: `main`, `release/*`, `hotfix/*`.

- Keep feature-branch failures local to the author.

- 3) Environment filter

- Slack channel gets production and staging.

- Basecamp can record lower environments if it impacts delivery timelines.

- 4) Ownership filter

- Route CI failures to service owners.

- Route platform failures to platform team.

- 5) Deduplication/cooldown

- One alert per workflow run ID.

- Cooldown windows prevent “fail-loop storms.”

- 6) Time windows

- Quiet hours policy with escalation rules (e.g., page only for P0).

- Post non-urgent summaries the next business morning.

- 7) Thresholds

- “Alert only if failure repeats 3 times in 15 minutes.”

- “Alert only if deploy fails and rollback occurs.”

If you adopt nothing else, adopt these two: dedupe and ownership. Those are the difference between signal and spam.
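The severity, branch, and environment rules above can be combined into one predicate. This is a sketch under stated assumptions: the P0 to P3 scale, the branch patterns, and the environment names mirror the examples in this guide, and the function names are hypothetical.

```python
from fnmatch import fnmatchcase
from typing import Optional

PROTECTED_BRANCH_PATTERNS = ("main", "release/*", "hotfix/*")
SLACK_ENVIRONMENTS = {"production", "staging"}
SEVERITY_RANK = {"P0": 0, "P1": 1, "P2": 2, "P3": 3}  # lower rank = more urgent


def on_protected_branch(branch: str) -> bool:
    """True when the branch matches one of the protected patterns."""
    return any(fnmatchcase(branch, pattern) for pattern in PROTECTED_BRANCH_PATTERNS)


def should_post_to_slack(severity: str, branch: str,
                         environment: Optional[str] = None) -> bool:
    """Apply the severity, branch, and environment filters in order."""
    if SEVERITY_RANK[severity] > SEVERITY_RANK["P2"]:
        return False  # P3 goes to a digest, not a realtime post
    if not on_protected_branch(branch):
        return False  # feature-branch failures stay with the author
    # Events without an environment (for example CI runs) skip this filter.
    return environment is None or environment in SLACK_ENVIRONMENTS


def must_create_basecamp_record(severity: str) -> bool:
    """P0 and P1 always get a durable Basecamp record."""
    return severity in ("P0", "P1")
```

Ownership, deduplication, time windows, and thresholds need state or a registry, so they are left out of this stateless check.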

How do you route alerts to the right Slack channels and Basecamp threads?

Routing by team is best for accountability, routing by system is best for maintainability, and routing by environment is optimal for incident readiness—so the best setup usually combines all three with a primary route and a fallback route.

However, to keep the system understandable, you should avoid creating dozens of channels without clear ownership.

Routing model A: By team (ownership-first)

- Example channels: `#team-payments-alerts`, `#team-platform-alerts`

- Pros: clear accountability, faster response

- Cons: cross-service incidents can fragment context

Routing model B: By system/service (service-first)

- Example channels: `#svc-api-alerts`, `#svc-web-alerts`

- Pros: consistent, scales with microservices

- Cons: team boundaries shift over time

Routing model C: By environment (risk-first)

- Example channels: `#prod-deployments`, `#prod-incidents`

- Pros: keeps production attention clean

- Cons: needs discipline to avoid dumping everything into prod channels

A practical hybrid:

- Slack: `#prod-deployments` for deploy outcomes + `#ci-main-failures` for main branch failures

- Basecamp: one “Ops lane” in each project + one central “Incident/Release log” project

If you want Slack to stay readable, make Basecamp the place where the full discussion and task management happens.
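A primary-plus-fallback route can be sketched as a short lookup. The channel names reuse the examples above; the fallback channel `#eng-alerts-unrouted`, the team keys, and the function name are hypothetical.

```python
from typing import Optional

ENVIRONMENT_CHANNELS = {"production": "#prod-deployments"}
TEAM_CHANNELS = {
    "payments": "#team-payments-alerts",
    "platform": "#team-platform-alerts",
}
FALLBACK_CHANNEL = "#eng-alerts-unrouted"  # hypothetical catch-all


def route_channel(event_type: str, environment: Optional[str],
                  team: Optional[str]) -> str:
    """Pick a Slack channel: environment first, then team, then fallback."""
    if event_type == "deploy" and environment in ENVIRONMENT_CHANNELS:
        return ENVIRONMENT_CHANNELS[environment]
    if team in TEAM_CHANNELS:
        return TEAM_CHANNELS[team]
    return FALLBACK_CHANNEL
```

Messages that land in the fallback channel are a useful signal that the routing table or ownership map needs an update.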

Which setup is best for your team: native GitHub→Slack vs Actions/webhooks vs no-code vs custom?

Native GitHub→Slack wins in speed, Actions/webhooks is best for CI/CD context and formatting, and a custom middleware approach is optimal for governance and scale—so the “best” setup depends on how much control you need over routing, noise reduction, and compliance.

Meanwhile, you can choose based on a few criteria instead of personal preference.

To make that decision easier, compare the options on five criteria:

- 1) Time to launch

- Native: fastest

- No-code: fast

- Actions/webhooks: moderate

- Custom: slowest

- 2) Message quality

- Native: limited formatting and transformation

- Actions: strong (rich context, conditional logic)

- No-code: moderate

- Custom: best possible (if built well)

- 3) Noise control

- Native: limited granularity

- Actions: good with logic + message updates

- No-code: depends on platform

- Custom: best (dedupe, aggregation, throttling)

- 4) Reliability and auditability

- Native/no-code: depends on vendor reliability and logs

- Actions: strong workflow logs

- Custom: depends on your ops maturity

- 5) Security/compliance

- Native: simpler but less customizable

- Actions/no-code: requires secrets management

- Custom: best governance but highest responsibility

According to the DORA (DevOps Research and Assessment) program, in 2026, delivery performance measures such as change failure rate and recovery time help teams evaluate whether alerting improves response and reduces rework.

When is native GitHub → Slack enough, and when is it not?

Native GitHub → Slack is enough when you need lightweight repo visibility and basic notifications, but it is not enough when you need strict filtering, ownership routing, Basecamp task creation, or deduplication across many workflows and services.

In addition, native setups tend to drift into noise unless you consciously prune subscriptions.

Native is enough if:

- You have a small repo count

- You only need basic PR/issue visibility

- Your CI/CD alerting volume is low

- You don’t need Basecamp to store decisions and tasks

Native is not enough if:

- Your team relies on CI/CD and needs reliable failure ownership

- You want production deploy alerts to become Basecamp tasks automatically

- You need “signal vs noise” policies that evolve over time

- You have multiple teams and want role-based routing

A good compromise: keep native GitHub→Slack for collaboration, but use Actions/no-code/middleware for CI/CD and incident-grade alerts.

Is a middleware/custom service worth it for GitHub → Basecamp → Slack alerting?

Yes, a middleware/custom service is worth it when you need advanced routing, enrichment, deduplication, and audit logs across multiple repos and teams, but no, it is not worth it when your alert volume is low and a simpler method can meet your ownership and filtering needs.

Besides, the biggest hidden cost of custom alerting is not development—it’s operational responsibility.

It’s worth it when you need:

- Unified routing rules for many repos

- Cross-repo dedupe (one incident, many signals)

- Enrichment (service ownership, runbooks, recent deploy history)

- Audit logs for compliance

- Flexible escalation policies

It’s not worth it when:

- You have 1–3 repos and a small team

- You can tolerate occasional manual Basecamp task creation

- Your on-call model is informal

- You’re not prepared to operate another production service

A practical “middle path” many teams use:

- GitHub Actions for CI/CD notifications to Slack

- No-code automation for Basecamp task creation

- Lightweight conventions for ownership and escalation

If you’re already building complex automation workflows in other areas (for example, scheduling automations that chain Calendly to Google Meet and ClickUp, or Calendly to Zoom and Basecamp), you’ll recognize the same pattern here: stability comes from consistent contracts, not from piling on triggers.

How do you troubleshoot failed or missing DevOps alerts in this workflow?

There are 6 main troubleshooting stages for GitHub → Basecamp → Slack DevOps alerts—event emission, workflow execution, authentication, API delivery, mapping/formatting, and routing/deduplication—based on the criterion of where the signal can break in the chain.

Then, you can isolate failures quickly instead of guessing.

A reliable debugging mindset: treat each hop as a checkpoint with proof.

- Checkpoint 1: Did GitHub emit the event?

- Confirm the event is real (PR merged, workflow failed, release published).

- Check whether the event matches your filter rules (branch/env/severity).

- Checkpoint 2: Did your automation run?

- For GitHub Actions: check the workflow run logs and job status.

- For no-code: check run history and trigger logs.

- For middleware: check server logs and webhook delivery.

- Checkpoint 3: Did auth succeed?

- Slack: incoming webhook exists, channel permissions, token scopes if using an app.

- Basecamp: token validity, project access, user permissions.

- Checkpoint 4: Did the API call succeed?

- Look for rate limits, payload errors, invalid IDs, or formatting constraints.

- Confirm retries and backoff behavior.

- Checkpoint 5: Did mapping produce a valid message/task?

- Validate the required fields (owner, link, next action).

- Ensure formatting doesn’t break Slack blocks or Basecamp content rules.

- Checkpoint 6: Did routing or dedupe suppress the alert?

- Many “missing” alerts are actually suppressed by cooldown or dedupe keys.

- Confirm dedupe IDs and thresholds.

What are the most common failure points across GitHub, Basecamp, and Slack?

There are 8 common failure points across GitHub, Basecamp, and Slack—permissions, token expiry, wrong channel/project IDs, rate limiting, payload changes, formatting errors, network failures, and dedupe misconfiguration—based on the criterion of what changes most often in real systems.

Especially as teams grow, the “people and permissions” layer breaks more than the “code” layer.

- 1) Permissions mismatch

- GitHub workflow can’t read the right context

- Basecamp token can’t create to-dos in the project

- Slack webhook posts to a restricted channel

- 2) Token expiry or rotation

- Scheduled rotations break automations silently if not monitored.

- 3) Wrong destination IDs

- Basecamp project ID mismatch

- Slack channel/webhook mismatch

- 4) Rate limiting

- Burst events (like failing workflows across multiple repos) hit API limits.

- 5) Payload changes

- Actions or webhook payload fields change, breaking mapping.

- 6) Formatting errors

- Slack block formatting fails; Basecamp content rejects malformed inputs.

- 7) Network/transient errors

- Without retries, you lose messages during short outages.

- 8) Dedupe suppressing real incidents

- Over-aggressive cooldown windows hide repeated failures that matter.
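For failure points 4 and 7 (rate limiting and transient errors), a retry wrapper with exponential backoff is a common mitigation. The sketch below is generic: `TransientError` and `with_retries` are hypothetical names, and the caller is assumed to raise `TransientError` for retryable conditions such as a timeout or an HTTP 429 response.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Raised by the caller's send function for retryable failures."""


def with_retries(send: Callable[[], T], attempts: int = 4,
                 base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call send(); on TransientError wait base_delay * 2**n and retry."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return send()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** attempt)
    raise RuntimeError("unreachable")
```

The `sleep` parameter is injectable so the backoff schedule can be tested without waiting.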

According to Datadog’s monitoring research team (2024), excessive or irrelevant alerts reduce the ability to spot critical issues, which is why filtering and ongoing tuning are foundational, not optional.

How do you validate the workflow end-to-end before relying on it for incidents?

Validating GitHub → Basecamp → Slack alerts means running a controlled test suite of core scenarios—PR merge, CI failure, deploy failure, and release publish—then confirming Basecamp creates the correct durable record and Slack posts a clean summary with ownership and links.

Then, you can trust the system under pressure.

Here’s an end-to-end validation checklist you can run in under an hour:

Test Scenario A: PR merge to main

- Expected Basecamp output: to-do or message depending on your policy

- Expected Slack output: short summary + Basecamp link

Test Scenario B: CI failure on main

- Expected Basecamp output: to-do assigned to owner, contains run link and next action

- Expected Slack output: “CI failed on main” + owner cue or channel mention policy

Test Scenario C: Production deploy failure

- Expected Basecamp output: incident to-do, rollback guidance, owner assigned

- Expected Slack output: urgent notification with environment and quick link

Test Scenario D: Release published

- Expected Basecamp output: release log message with notes and links

- Expected Slack output: release summary to an announcements channel

Operational validation

- Confirm retries work (simulate a transient failure)

- Confirm dedupe works (trigger same event twice)

- Confirm quiet hours behavior (if you use it)

One best practice: record these tests in a Basecamp doc called “Alerting Smoke Test” so anyone on the team can validate after changes.

How can you optimize “signal vs noise” for GitHub → Basecamp → Slack DevOps alerts at scale?

Optimizing signal vs noise at scale means adding a severity model, enriching alerts with context, preventing duplicates and alert storms, and applying security governance—so Slack remains readable while Basecamp remains the system of record for decisions and tasks.

Below, we’ll move beyond “it works” into “it keeps working when the org grows.”

How do you add severity levels and escalation paths to GitHub-driven alerts?

There are 4 main severity levels you can add to GitHub-driven alerts—P0, P1, P2, P3—based on the criterion of customer impact and urgency, and each level should define both a Basecamp action and a Slack behavior.

Next, you make escalation deterministic so people don’t debate urgency while production burns.

A practical severity model:

- P0 (Critical): production outage or severe security incident

- Basecamp: incident to-do assigned immediately + incident thread

- Slack: urgent channel + on-call mention policy

- P1 (High): production degradation, failed deploy with rollback needed

- Basecamp: assigned to-do + rollback/runbook link

- Slack: alert channel + owner mention

- P2 (Medium): CI failing on main, release blocked

- Basecamp: to-do assigned to service owner

- Slack: summary message (no broad mentions)

- P3 (Low): informational or trend-level signals

- Basecamp: optional record or digest

- Slack: digest only, not realtime

The key is consistency: severity is not emotion—it’s a contract.
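Treating severity as a contract can be as simple as a lookup table that every integration reads. This sketch encodes the model above; the `SeverityPolicy` type and `policy_for` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeverityPolicy:
    basecamp_action: str
    slack_behavior: str
    realtime: bool  # False means the alert is held for a digest


POLICIES = {
    "P0": SeverityPolicy("incident to-do assigned immediately + incident thread",
                         "urgent channel + on-call mention", True),
    "P1": SeverityPolicy("assigned to-do + rollback/runbook link",
                         "alert channel + owner mention", True),
    "P2": SeverityPolicy("to-do assigned to service owner",
                         "summary message, no broad mentions", True),
    "P3": SeverityPolicy("optional record or digest",
                         "digest only", False),
}


def policy_for(severity: str) -> SeverityPolicy:
    """Look up the policy; unknown severities fail loudly instead of guessing."""
    try:
        return POLICIES[severity]
    except KeyError:
        raise ValueError(f"unknown severity: {severity!r}") from None
```

Rejecting unknown severities keeps a typo in one workflow from silently downgrading an incident.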

What enrichment makes alerts more actionable without increasing noise?

There are 6 main enrichment elements that make alerts more actionable—owner, runbook link, recent changes, environment context, impact hint, and next action—based on the criterion of reducing time-to-triage without increasing message length.

More specifically, you want the alert to answer “what do I do next?” in one glance.

High-value enrichments:

- Service owner/team (from a CODEOWNERS map or internal registry)

- Runbook link (Basecamp doc, wiki, or internal guide)

- Last successful deploy and current version

- Environment and region (if relevant)

- Impact hint (“prod deploy failed; rollback recommended”)

- Next action (one sentence, imperative voice)

A strong pattern:

- Slack message = headline + link + one next action

- Basecamp record = details, logs, stakeholders, decisions
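The pattern above might look like this in practice. Everything here is illustrative: the in-memory `SERVICE_REGISTRY`, its example URL, and the dictionary keys stand in for whatever ownership source (a CODEOWNERS map or an internal registry) your team actually uses.

```python
from typing import Any, Dict

# Hypothetical ownership registry; in practice this could be generated
# from CODEOWNERS or an internal service catalog.
SERVICE_REGISTRY: Dict[str, Dict[str, str]] = {
    "payments-api": {
        "owner": "team-payments",
        "runbook": "https://example.com/runbooks/payments-api",
    },
}


def enrich(alert: Dict[str, Any],
           registry: Dict[str, Dict[str, str]] = SERVICE_REGISTRY) -> Dict[str, Any]:
    """Return a copy of the alert with owner and runbook filled in if absent."""
    info = registry.get(alert["service"], {})
    enriched = dict(alert)
    enriched.setdefault("owner", info.get("owner", "unassigned"))
    enriched.setdefault("runbook", info.get("runbook", ""))
    return enriched


def slack_line(alert: Dict[str, Any]) -> str:
    """Headline + link + one next action, and nothing else."""
    return f"{alert['headline']} | {alert['link']} | Next: {alert['next_action']}"
```

The enriched fields go into the Basecamp record, while `slack_line` deliberately ignores them to keep the Slack message to one line.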

How do you prevent duplicates and alert storms in GitHub Actions and webhook-based pipelines?

Preventing duplicates and alert storms means using idempotency keys, cooldown windows, state-change notification rules, and message updates so repeated failures don’t flood Slack while still creating a reliable Basecamp trail for real incidents.

Then, your alerting system remains trustworthy in high-volume moments.

Use these techniques:

Idempotency keys

- Use workflow run ID, deploy ID, or release tag as a unique key.

- Store it (in middleware) or encode it into a task naming convention.

Cooldown windows

- “Only notify once per service per 10 minutes unless severity increases.”

- Pair cooldown with a Basecamp thread update to preserve history.

Notify on state change

- Failure → notify

- Failure repeats → update Basecamp record, don’t spam Slack

- Failure resolves → optional “resolved” summary

Message updates

- Instead of posting a new Slack message each time, update the existing one for the same run ID.

This is where “signal vs noise” becomes a concrete implementation choice.
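The techniques above can be combined into a single decision function. This is an in-memory sketch, assuming a middleware process that keeps state between events; the `AlertGate` class, its return values, and the numeric severity rank (lower means more urgent) are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass
class _ServiceState:
    state: str            # "failure" or "success"
    severity_rank: int    # lower = more urgent
    notified_at: float    # timestamp of the last Slack notification


class AlertGate:
    """Decide whether to notify Slack, update Basecamp only, resolve, or skip."""

    def __init__(self, cooldown_seconds: float = 600.0) -> None:
        self.cooldown_seconds = cooldown_seconds
        self._seen_keys: Set[str] = set()
        self._services: Dict[str, _ServiceState] = {}

    def decide(self, key: str, service: str, state: str,
               severity_rank: int, now: float) -> str:
        if key in self._seen_keys:
            return "skip"  # idempotency: same run/deploy ID delivered twice
        self._seen_keys.add(key)
        prev = self._services.get(service)
        if state == "success":
            was_failing = prev is not None and prev.state == "failure"
            self._services[service] = _ServiceState("success", severity_rank, now)
            return "resolve" if was_failing else "skip"
        if prev is None or prev.state != "failure":
            self._services[service] = _ServiceState("failure", severity_rank, now)
            return "notify"  # state change: healthy -> failing
        escalated = severity_rank < prev.severity_rank
        cooled_down = now - prev.notified_at >= self.cooldown_seconds
        if escalated or cooled_down:
            rank = min(severity_rank, prev.severity_rank)
            self._services[service] = _ServiceState("failure", rank, now)
            return "notify"
        return "update"  # repeat failure: update the Basecamp record only
```

A production version would persist the seen keys and per-service state, since an in-memory set is lost on restart.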

What security and compliance practices matter for GitHub → Basecamp → Slack alert pipelines?

OAuth-based apps are best for managed permissions, webhook secrets are best for controlled event ingestion, and scoped tokens are optimal for narrowly-defined automation—so the best security approach depends on how much governance you need and how many systems you connect.

Meanwhile, compliance demands auditability, least privilege, and secret rotation.

Compare the security options:

Option A: OAuth apps (managed scopes)

- Best when you want permissions controlled via app installs and predictable scopes.

- Easier governance in larger orgs.

Option B: Incoming webhooks + scoped tokens

- Best for narrow automation tasks (post to one channel, create to-dos in one project).

- Requires disciplined secret management and rotation.

Option C: Middleware with audit logs

- Best for enterprises needing traceability: who triggered what, what was sent, where it went.

- Highest responsibility, but highest control.

Minimum practices that keep you safe:

- Store secrets in a secret manager, not in code

- Rotate secrets on a schedule and monitor failures

- Use least privilege (only the projects/channels needed)

- Keep an audit trail (workflow logs or middleware logs)
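If you ingest GitHub webhooks in middleware, verifying the webhook secret is the first control to implement. GitHub signs each delivery with HMAC-SHA256 and sends the result in the `X-Hub-Signature-256` header as `sha256=<hex digest>`. The sketch below checks that header; the function name is an assumption, and loading the secret from a secret manager is left to the caller.

```python
import hashlib
import hmac


def verify_github_signature(secret: bytes, body: bytes,
                            signature_header: str) -> bool:
    """Check the X-Hub-Signature-256 header against the raw request body."""
    if not signature_header or not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(expected.encode(), signature_header.encode())
```

The check must run against the raw request bytes, before any JSON parsing or re-serialization, or the digest will not match.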

When compliance is a requirement, don’t treat alerts as “just messages.” Treat them as operational records.