Automating an Airtable → Confluence → Box → PandaDoc document flow means you can move from “request” to “signed and stored” with one controlled pipeline—so Ops and Legal stop chasing files, copy-pasting fields, and wondering which version is final.

Then, once you understand the end-to-end workflow, you can decide whether you actually need an orchestration tool (like Zapier or an equivalent) and how to structure your automations so approvals and signatures happen in the right order, with the right permissions.

In addition, the workflow only stays reliable if your Airtable data model is designed for signing (not just tracking), because templates, signers, and status gates all depend on clean fields that map consistently across every step.

After the core “happy path” is clear, you’ll see how to strengthen compliance, prevent duplicates, and handle edge cases so your signing pipeline stays fast, even when real-world exceptions show up.

What is an Airtable → Confluence → Box → PandaDoc eSignature workflow, and how does it work end-to-end?

An Airtable → Confluence → Box → PandaDoc eSignature workflow is a cross-app automation system that turns a structured request into an approved, signed document and stores it with traceable links—using Airtable for data, Confluence for review, Box for storage, and PandaDoc for signing.

Next, to keep the workflow “no manual copy-paste,” you need a clear lifecycle that every team can follow.

Think of this as a single process with four “homes,” each with a job:

- Airtable (System of Record): one record = one document journey (request, parties, values, status, links).

- Confluence (Approval Hub): reviewers comment, approve, request changes, and leave a durable decision trail.

- Box (Source of Truth Storage): the signed PDF (and any certificate or attachment) lands in the right folder with consistent naming.

- PandaDoc (Signature Engine): creates the document from a template, routes signatures, captures audit metadata, and marks completion.

A practical lifecycle for Ops & Legal usually looks like this:

- Request captured (Airtable): Ops enters or receives intake data (customer/project, pricing/terms, signer contacts, due date).

- Draft prepared (PandaDoc): a doc is generated from a template using Airtable fields.

- Review packet created (Confluence): a page is created/updated with context, risk notes, and a link to the draft.

- Approval gate (Confluence → Airtable): Legal approves or requests revisions; Airtable status changes accordingly.

- Send for signature (PandaDoc): only when “Approved” is true.

- Store final (Box): signed doc lands in the correct folder; links and metadata are written back.

- Close the loop (Airtable): status becomes Signed/Archived, plus timestamps and document IDs for auditability.

What data should live in Airtable vs Confluence vs Box vs PandaDoc?

There are 4 main “data homes” in this workflow—Airtable, Confluence, Box, and PandaDoc—based on whether the information is structured, review-oriented, file-based, or signature-specific.

To illustrate the separation cleanly, start by mapping each object to its best home.

Airtable: structured fields that drive automation (the “truth” for logic).

Store what needs to be filtered, validated, and mapped:

- Record ID (unique key)

- Document type / template name

- Parties (company/person), signer email/role, signing order

- Terms (pricing, start date, term length, renewal)

- Status fields (Draft, In Review, Approved, Sent, Signed, Archived)

- Links/IDs (Confluence page URL, Box folder URL, PandaDoc document ID)

Confluence: review narrative and decision trail (the “why”).

Store what reviewers need to read and comment on:

- Summary of request and context

- Risk notes, redline guidance, exceptions approved

- Approval decision and conditions

- Links to source record and draft document

Box: the signed file system and retention controls (the “final artifact”).

Store what must be retrievable and governed:

- Final signed PDF

- Attachments and exhibits

- Completion certificate (if applicable)

- Folder-level permissions, retention policies, and naming conventions

PandaDoc: signature execution and audit metadata (the “signature truth”).

Store what belongs to signing:

- Document template, variables, and fields

- Signer routing and reminders

- Completion status, signing timestamps, audit log events

- Final exported PDF and supporting certificate

A mapping table makes this concrete. Below is a practical example that shows where each artifact belongs and what you should link back to Airtable so your pipeline remains traceable.

| Artifact | Where it lives | What you store there | What you link back to Airtable |
|---|---|---|---|
| Intake + terms | Airtable | Structured fields for mapping + logic | Record URL / Record ID |
| Review notes | Confluence | Comments, approvals, conditions | Confluence page URL |
| Draft doc | PandaDoc | Template + variables + routing | PandaDoc document ID + doc link |
| Final signed PDF | Box | File + retention + permissions | Box file link + folder link |

What does “No Manual Copy-Paste” mean in this workflow?

“No Manual Copy-Paste” means your document signing pipeline eliminates human handoffs for creating drafts, moving files, and updating status—so the system transfers data through controlled mappings instead of people retyping or emailing attachments.

More specifically, the workflow replaces the manual version of the process (copy, paste, attach, rename, chase approvals) with automation workflows that enforce the same steps every time.

In real operations, “no manual copy-paste” usually includes these automations:

- Generate document from record fields (Airtable → PandaDoc)

- Create an approval page automatically (Airtable → Confluence)

- Lock and gate sending until approval is captured (Confluence/Airtable → PandaDoc)

- Auto-store final files in the right Box folder (PandaDoc → Box)

- Sync status back to Airtable (PandaDoc → Airtable)

The payoff is not just convenience—it’s governance. When the workflow itself enforces the order of operations, you stop leaking risk through invisible “side emails” and ad-hoc attachments.

Evidence: According to a 2003 study from the Département de Radiologie at the Centre Hospitalier de l’Université de Montréal, the median time from transcription to final signature decreased from 11 days to 3 days after electronic signatures were introduced (and from 10 days to 5 days for chest radiographs). (pmc.ncbi.nlm.nih.gov)

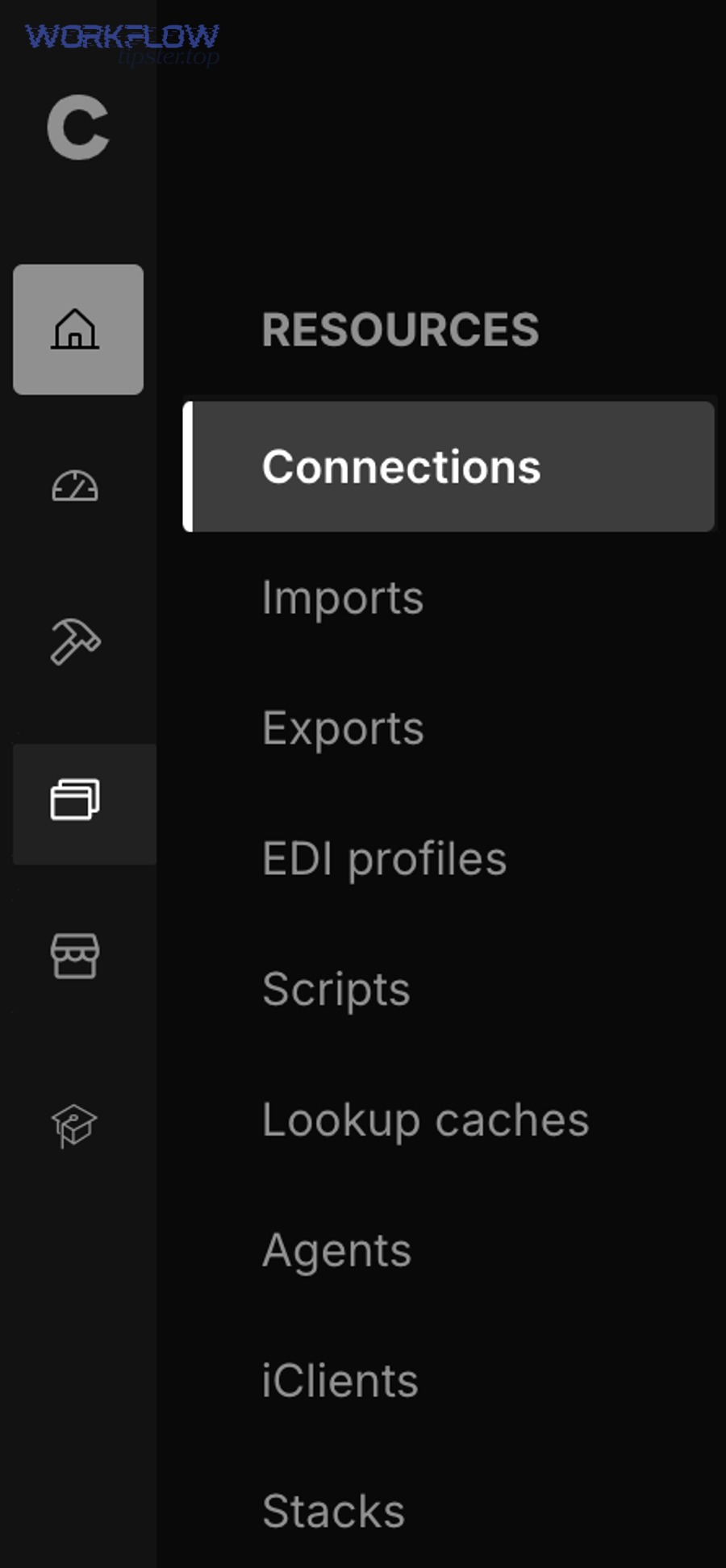

Do you need Zapier (or a similar integration tool) to connect Airtable, Confluence, Box, and PandaDoc?

Yes—most teams need Zapier or a similar integration tool to connect Airtable, Confluence, Box, and PandaDoc because (1) the workflow spans multiple apps, (2) approval gates require conditional logic, and (3) status syncing needs reliable triggers and error handling.

Then, once you accept that orchestration is the glue, you can choose the safest automation architecture.

In practice, native integrations help with single connections (like attaching Box files inside Airtable), but your full pipeline is a multi-step orchestration problem:

- Airtable triggers when a record moves to “Ready for Draft”

- PandaDoc generates a doc from a template

- Confluence creates/updates the approval page

- Legal approves (or requests revision) and that decision must update Airtable

- PandaDoc sends only when “Approved = Yes”

- Box stores final documents automatically

- Airtable updates status, stores doc IDs, and notifies owners

That’s exactly the kind of chain a dedicated automation platform is designed to run reliably.

Which integration patterns work best: single “master automation” or modular Zaps/flows?

A single “master automation” wins in simplicity, modular flows are best for maintenance, and a hybrid is optimal for compliance-driven Ops & Legal workflows.

However, to reduce failures and speed debugging, most teams should start modular and only centralize after the logic is stable.

Here’s the comparison that matters:

- Master automation (monolithic):

  - Best for: simple workflows with few exceptions

  - Wins on: fewer moving parts, fewer trigger points

  - Loses on: painful debugging, high blast radius when one step changes

- Modular automations (stage-based):

  - Best for: signing pipelines with approvals, exceptions, and compliance gates

  - Wins on: easy troubleshooting, clearer ownership, safer deployments

  - Loses on: you must manage state carefully (statuses + IDs)

- Hybrid (recommended):

  - Use modular stage automations (Draft → Review → Approved → Sent → Signed)

  - Keep one “control record” in Airtable that stores the canonical IDs and lock states

A good “Ops & Legal” hybrid rule: One automation per lifecycle stage, plus one watchdog automation that detects stuck states and alerts owners.
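The watchdog half of that rule can be sketched in a few lines. This is a minimal illustration, not a feature of any of the four tools: the field names (`status`, `status_changed_at`) and the per-stage time limits are assumptions you would replace with your own.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-stage time limits; tune these to your own SLAs.
MAX_AGE = {
    "Legal Review": timedelta(days=3),
    "Sent": timedelta(days=7),
}

def find_stuck(records, now):
    """Return the IDs of records that have sat in a stage longer than allowed."""
    stuck = []
    for rec in records:
        limit = MAX_AGE.get(rec["status"])  # stages without a limit are ignored
        if limit is not None and now - rec["status_changed_at"] > limit:
            stuck.append(rec["id"])
    return stuck
```

Run on a schedule, a check like this only reads state and raises alerts; it never advances a record, so it cannot collide with the stage automations.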

What are the minimum triggers and actions you must support to automate the full flow?

There are 7 minimum trigger/action groups you must support: intake trigger, doc creation, approval page creation, approval gate, send action, storage action, and status sync—based on the lifecycle stage being advanced safely.

Moreover, you should define these “minimums” before you choose tooling so you don’t get trapped by missing connectors.

A clean minimum set looks like this:

- Trigger: Airtable record created or updated to “Ready for Draft”

- Action: Create PandaDoc document from a template + Airtable fields

- Action: Create/Update Confluence page (review packet) with draft link

- Gate: Only advance when Legal approval is captured (field or page marker)

- Action: Send PandaDoc document to signers (with ordering if needed)

- Action: Store final signed PDF in Box (folder + file naming)

- Action: Update Airtable record (Signed, timestamps, doc IDs, links) and notify owner

If your chosen tool cannot handle conditional gates and idempotent updates (update existing doc instead of creating a new one), it will create duplicates and confuse stakeholders.
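One way to reason about the minimum set is as a pure function that looks at a record and names the single next action. The sketch below assumes hypothetical field names (`pandadoc_document_id`, `confluence_page_url`, `approved`, `sent_at`, `signed_at`, `box_file_url`) and hypothetical action names; it does not call any real API.

```python
def next_step(record):
    """Return the name of the next action to run, or None when the record must wait."""
    if not record.get("pandadoc_document_id"):
        ready = record.get("status") == "Ready for Draft"
        return "create_document" if ready else None
    if not record.get("confluence_page_url"):
        return "create_review_page"
    if not record.get("approved"):
        return None  # gate: wait for Legal's decision
    if not record.get("sent_at"):
        return "send_for_signature"
    if not record.get("signed_at"):
        return None  # out for signature: wait for completion
    if not record.get("box_file_url"):
        return "store_in_box"
    if record.get("status") != "Signed":
        return "update_airtable"
    return None
```

Because the decision depends only on what is already stored on the record, re-running it after a duplicate trigger returns the same answer instead of creating a second document.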

Evidence: Airtable’s Box integration explicitly supports adding Box attachments to Airtable records and notes that you can integrate with third-party automation to extend workflows across apps. (airtable.com)

How do you design the Airtable data model so it can drive approvals and document signing?

Designing the Airtable data model for document signing means building a schema that treats each record as a state machine—so template selection, signer routing, approvals, sending, and storage are all driven by validated fields rather than free-text notes.

Specifically, the core problem is “structured data must reliably become structured documents,” and the data model is what makes that possible.

A signing-ready Airtable base usually includes at least these tables:

- Requests (primary): one row per document journey (the canonical workflow state)

- Parties: organizations or counterparties (linked to Requests)

- Contacts/Signers: people, roles, and signer order rules

- Documents: doc type, template name, PandaDoc document ID, versions

- Approvals: reviewer, decision, timestamp, notes, conditions approved

If you prefer fewer tables, you can keep it in one table at first—but you must still enforce the same concepts as fields.

What fields are required to generate a PandaDoc document from an Airtable record?

There are 5 required field groups to generate a PandaDoc document: template selection, party identity, signer routing, document variables, and send controls—based on what the document engine needs to create a valid signing packet.

Next, you should add validation rules so the automation refuses to run if critical inputs are missing.

1) Template selection fields

- Document type (e.g., MSA, SOW, NDA)

- Template ID/name

- Locale/language (if relevant)

2) Party + deal context fields

- Counterparty name and address

- Project name, effective date, start date

- Pricing and payment terms

- Term length, renewal terms

3) Signer routing fields

- Signer 1 name + email + role

- Signer 2 (optional) + signing order

- Internal countersign required? (Yes/No)

4) Variable mapping fields

- Variables that match template placeholders (e.g., {CompanyName}, {Price}, {StartDate})

- Optional clauses flags (e.g., add DPA? add exhibit?)

5) Send controls (safety)

- Approval status (Approved / Not Approved)

- Ready-to-send boolean

- Lock fields after send (prevent edits that trigger duplicate runs)

A simple way to enforce completeness is a preflight view: only records that pass required fields appear in “Ready for Draft” or “Ready to Send.”
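If the preflight runs in a script rather than a filtered view, it might look like the sketch below. The field names are assumptions standing in for your own Airtable columns, and the email pattern is a deliberately loose sanity check, not full address validation.

```python
import re

# Hypothetical field names; rename to match your own Airtable base.
REQUIRED_FIELDS = [
    "template_id",
    "counterparty_name",
    "signer1_name",
    "signer1_email",
    "effective_date",
]

# Loose shape check only: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def preflight(record):
    """Return a list of problems; an empty list means the record may proceed."""
    problems = [
        f"missing: {name}"
        for name in REQUIRED_FIELDS
        if not str(record.get(name) or "").strip()
    ]
    email = record.get("signer1_email") or ""
    if email and not EMAIL_RE.match(email):
        problems.append("invalid: signer1_email")
    return problems
```

Returning the list of problems, rather than a bare true/false, gives you something concrete to write into a “Last error message” field.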

How do you prevent duplicate documents and repeated sends when records change?

Updating an existing document wins for stability, always-creating a new document is safer for version history, and idempotent “create once then update” is optimal for preventing duplicates while preserving traceability.

However, to stop runaway automation, your system must store a unique document ID and refuse to create a second document for the same record unless you explicitly request a new version.

Use these practical safeguards:

- Store the PandaDoc document ID in Airtable the moment the doc is created.

- Use idempotency logic:

  - If PandaDoc document ID is empty → create doc

  - If PandaDoc document ID exists → update doc (or do nothing)

- Gate sending with a separate boolean: “Send Approved Doc = Yes”

- Lock key fields after sending (or move record to a “Sent” state that blocks edits)

- Use a “Version” field if you want controlled re-issuance (v1, v2, v3)

A common Ops & Legal pattern is “two-stage creation”:

- Create draft doc (Draft Doc ID stored)

- Only send once “Approved = Yes” and “Send = Yes” are both true

This prevents accidental sends when a teammate edits a price field.
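The safeguards above reduce to two small checks. In this sketch, `create_document` is a hypothetical callable standing in for whatever integration step actually creates the draft, and the field names (`pandadoc_document_id`, `approved`, `send_approved_doc`, `status`) are assumptions.

```python
def ensure_draft(record, create_document):
    """Create a draft only if the record has no document ID yet."""
    if record.get("pandadoc_document_id"):
        return record["pandadoc_document_id"]  # already created: do nothing
    doc_id = create_document(record)
    record["pandadoc_document_id"] = doc_id  # store the ID immediately
    return doc_id

def may_send(record):
    """Both gates must be true, a draft must exist, and it must not already be out."""
    return (
        record.get("approved") is True
        and record.get("send_approved_doc") is True
        and bool(record.get("pandadoc_document_id"))
        and record.get("status") not in {"Sent", "Signed", "Archived"}
    )
```

With this shape, editing a price field can re-trigger the automation as often as it likes: `ensure_draft` returns the existing ID, and `may_send` stays false until both flags are set.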

How do you build the Confluence approval step so Legal can review before the document is sent for signature?

Building the Confluence approval step means using a standardized review page as the single approval surface—so Legal reviews context, confirms exceptions, and makes a decision that your automation can read before triggering the “send for signature” action.

Besides making review easier, this creates a consistent audit narrative across every deal.

The key is: Confluence isn’t just a note pad. It’s an approval interface when you structure it like one.

A high-performing Confluence approval page template often includes:

- Request summary (who/what/why)

- Deal terms snapshot (pulled from Airtable)

- Risk checklist (e.g., liability cap changes, non-standard clauses)

- Links: Airtable record, PandaDoc draft, Box folder (if created early)

- Decision block: Approved / Revisions Needed + notes

- Timestamp + approver identity

Your automation then watches one of these signals:

- A field in Airtable updated by Legal (preferred for reliability), or

- A Confluence marker you can parse (less reliable), or

- A structured form/macro that writes the decision back to Airtable

Which approval states should you use to move from draft to “send for signature”?

There are 7 main approval states—Draft, Legal Review, Revisions Needed, Approved, Sent, Signed, Archived—based on who owns the next action and what the system should allow at each stage.

Then, once your statuses are stable, your automations can be “state-driven” (act on the state a record is in) instead of “event-driven” (react to every edit), so a stray field change cannot advance or send a document.

A strong state machine includes:

- Draft: created, editable; not reviewable yet

- Legal Review: legal owns; edits allowed; sending blocked

- Revisions Needed: Ops owns; must update fields/doc; sending blocked

- Approved: send allowed; edits restricted (or versioned)

- Sent: doc out for signatures; prevent re-send

- Signed: completed; store final PDF; notify stakeholders

- Archived: end state; retention policies apply

Your workflow should enforce two policies:

- Only Legal can move Legal Review → Approved

- Only automation can move Sent → Signed (based on PandaDoc completion)
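A transition table makes those policies checkable. Only the two rules just stated (Legal Review → Approved by Legal, Sent → Signed by automation) come from the policy list; the remaining rows and the role names are assumptions added to complete the sketch.

```python
# (from_state, to_state) -> roles permitted to make the move.
# Role names are hypothetical; rows other than the two stated policies are assumptions.
TRANSITIONS = {
    ("Draft", "Legal Review"): {"ops"},
    ("Legal Review", "Approved"): {"legal"},
    ("Legal Review", "Revisions Needed"): {"legal"},
    ("Revisions Needed", "Legal Review"): {"ops"},
    ("Approved", "Sent"): {"automation"},
    ("Sent", "Signed"): {"automation"},
    ("Signed", "Archived"): {"automation", "legal"},
}

def can_transition(current, target, role):
    """True only if this role is allowed to move a record from current to target."""
    return role in TRANSITIONS.get((current, target), set())
```

Any pair that is not in the table is rejected by default, which is what stops a record from jumping straight from Draft to Sent.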

How does Confluence approval compare to approving directly inside PandaDoc?

Confluence wins in cross-team context and long-form decision rationale, PandaDoc is best for in-document collaboration, and a combined approach is optimal when Legal needs a durable approval narrative plus a controlled signing packet.

Meanwhile, the better choice depends on how your organization treats “approval” versus “signature.”

Compare on the criteria that matter:

- Governance: Confluence pages can capture decision context and exceptions in one place; PandaDoc focuses on the document itself.

- Collaboration style: Confluence is best for commentary and policy notes; PandaDoc is best for changes tied to the doc.

- Audit readiness: Confluence creates a structured “why we approved” record; PandaDoc creates “how it was signed” evidence.

A practical compromise:

- Use Confluence to approve the decision (approve, reject, conditions)

- Use PandaDoc to execute the signature (signers, routing, completion)

That way, approval is a gate—not a vague feeling.

How do you store, organize, and secure signed documents in Box without breaking the audit trail?

Storing signed documents in Box without breaking the audit trail means you use consistent folder rules, stable naming, controlled permissions, and bidirectional linking back to Airtable—so anyone can prove what was signed, when, and where the final file lives.

More importantly, storage must be predictable or the workflow becomes “signed but lost.”

Audit-friendly storage is less about the storage tool and more about repeatable policy. In Box, the policy is usually:

- Every document journey creates (or uses) a single folder in the correct location.

- The final signed document has a stable name tied to the Airtable record ID.

- Permissions are set at the folder level based on business rules (client team, Legal, Finance).

- The Airtable record stores the Box folder link and the final file link.

What’s the best Box folder structure for contracts by client/project/type?

There are 3 main Box folder structures—by client, by project, or by document type—based on how your organization searches for contracts and how permissions should inherit.

To better understand which structure fits, decide what “retrieval” means in your company: customer-first, delivery-first, or legal-first.

Option A: Client-first (common for Sales/CS)

- /Contracts/{ClientName}/

  - /MSA/

  - /SOW/

  - /Renewals/

Option B: Project-first (common for Ops/Delivery)

- /Projects/{ProjectCode}/Contracts/

  - /SOW/

  - /ChangeOrders/

Option C: Document-type-first (common for Legal centralization)

- /Legal/Contracts/{DocType}/

  - /{ClientName}/

No matter the structure, enforce a naming rule that supports traceability:

- {RecordID} – {ClientName} – {DocType} – {EffectiveDate}.pdf

This makes it hard to lose the file, and easy to confirm you’re reading the correct version.
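Building the name and path from record fields, rather than typing them, keeps them deterministic. The helper names below are hypothetical, the set of characters replaced is an assumption about what is awkward in file names, and the folder helper covers only the client-first layout (Option A).

```python
import re

def safe(part):
    """Replace characters that are awkward in file or folder names."""
    return re.sub(r'[\\/:*?"<>|]+', "-", str(part)).strip()

def signed_file_name(record_id, client, doc_type, effective_date):
    """Apply the naming rule: RecordID – ClientName – DocType – EffectiveDate.pdf"""
    parts = [safe(p) for p in (record_id, client, doc_type, effective_date)]
    return " – ".join(parts) + ".pdf"

def client_first_folder(client, doc_type):
    """Folder path for the client-first layout."""
    return f"/Contracts/{safe(client)}/{safe(doc_type)}/"
```

For example, `signed_file_name("rec123", "Acme/West", "MSA", "2024-01-01")` gives `rec123 – Acme-West – MSA – 2024-01-01.pdf`, so the same record always produces the same name.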

Should Box be the “final system of record,” or should the signed doc also live elsewhere?

Box wins as the final system of record for storage governance, PandaDoc is best as the signing system of record for audit events, and Airtable is optimal as the operational system of record for status and routing.

On the other hand, trying to make one tool do all three jobs usually creates gaps.

The best practice is: One “final file home,” many “links.”

- Box: final signed PDF + retention/permissions

- PandaDoc: signing audit log and completion metadata

- Airtable: the workflow timeline, IDs, and links that tie everything together

- Confluence: approval narrative and exceptions

This “linked system of record” approach is what makes your audit trail resilient.

How do you confirm the workflow works and troubleshoot when steps fail?

Confirming the workflow works means you test each stage with controlled data, log every automation run, and implement safeguards (validation, retries, and alerts) so failures become visible and fixable instead of silently breaking your signing pipeline.

Then, when something fails, you debug by stage—not by guessing.

A reliable testing plan looks like this:

- Use a sandbox template and test signers (internal emails)

- Run one “happy path” record all the way to Signed

- Run one “missing field” record to confirm validation blocks it

- Run one “approval denied” record to confirm send is blocked

- Run one “retry scenario” (simulate a transient failure such as a timeout or a rate-limit response; a permission error is not transient and will not clear on retry)

- Document expected outputs at each stage (IDs, links, statuses)

To keep troubleshooting fast, track these canonical identifiers inside Airtable:

- PandaDoc document ID

- Confluence page URL

- Box folder link + final file link

- Last automation run timestamp

- Last error message (if any)

What are the most common failure points across Airtable, Confluence, Box, and PandaDoc—and how do you fix them?

There are 6 common failure points—field mapping, missing permissions, approval gating, duplicate triggers, file storage conflicts, and auth/token issues—based on where cross-app workflows typically break.

Next, fix them in the same order your data travels: input → creation → approval → send → storage → sync.

1) Field mapping errors (Airtable → PandaDoc)

Symptoms: blank variables, wrong signer emails, wrong price fields.

Fix:

- enforce required fields before doc creation

- use consistent variable naming conventions

- log the mapped payload for one test record

2) Permissions errors (Confluence/Box)

Symptoms: page created but not visible, file upload fails, folder cannot be created.

Fix:

- pre-create parent folders with correct inheritance

- ensure service account has access to target spaces/folders

- grant the service account the least privilege that still works, and test with exactly that access

3) Approval gate not enforced

Symptoms: doc sent before Legal approval.

Fix:

- separate “Approved” from “Ready to Send”

- allow only Legal role to set Approved = Yes

- block send unless both fields are true

4) Duplicate triggers and duplicate documents

Symptoms: multiple PandaDoc docs created for one record.

Fix:

- idempotency using stored document ID

- trigger only from a “Ready” view or a single controlled field change

- lock record after send

5) Storage conflicts (PandaDoc → Box)

Symptoms: file overwrite, wrong folder, wrong naming.

Fix:

- generate folder path from deterministic fields (client ID/project ID)

- include record ID in file name

- store final file link back to Airtable

6) Auth or token problems

Symptoms: intermittent failures after “it worked yesterday.”

Fix:

- reconnect accounts and reauthorize

- monitor for auth failures in logs

- implement a fallback alert to Ops

To keep support playbooks consistent across workflows, you can log failures in a dedicated “Automation Errors” table in Airtable and route alerts to a single channel—especially if your team runs many automation workflows across tools.

How do you compare “manual checks” vs “automated safeguards” for reliability?

Manual checks win for rare exceptions, automated safeguards are best for daily reliability, and a layered approach is optimal—automated validation for every run plus manual review only when a rule flags risk.

However, if your goal is “No Manual Copy-Paste,” manual checks should be the exception, not the default.

Here’s the contrast that keeps workflows stable:

- Manual checks (human spot-checking):

  - Good for: unusual edge cases (custom clauses, high-risk terms)

  - Bad for: scale, consistency, speed

- Automated safeguards (system-enforced rules):

  - Good for: preventing missing fields, duplicate sends, permission mismatches

  - Bad for: capturing nuanced legal judgment unless you encode rules carefully

A recommended safeguard stack:

- Preflight validation (required fields)

- Approval gate (Approved + Ready to Send)

- Idempotency (create once, update thereafter)

- Retries with backoff (for transient failures)

- Alerts (for stuck states or repeated errors)
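The “retries with backoff” layer is the easiest to get subtly wrong, so here is a minimal sketch. `TransientError` is a hypothetical exception class you would raise for timeouts or rate-limit responses; the attempt count and delays are arbitrary defaults.

```python
import time

class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""

def with_retries(action, attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call action(); on TransientError wait 1x, 2x, 4x... base_delay and try again."""
    for attempt in range(attempts):
        try:
            return action()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error so an alert can fire
            sleep(base_delay * 2 ** attempt)
```

Only `TransientError` is retried. Anything else, such as a permission or validation failure, propagates on the first attempt, because repeating it would not change the outcome.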

Evidence: According to a 2020 report from the process efficiency initiative of the Centre for Clinical and Administrative Transformation (published via the California State University system), electronic signatures reduced average turnaround time by 73% across six selected cases versus manual processing. (calstate.edu)

What advanced options improve compliance, governance, and edge-case handling in this signing workflow?

Advanced options improve compliance and edge-case handling by adding audit-grade artifacts, stronger identity and permission controls, multi-signer routing rules, and anti-duplication logic—so the workflow stays defensible under legal review and stable under scale.

In addition, these upgrades help you extend the same design to other document stacks without rebuilding everything.

This is the point where the question itself changes: you’re no longer asking “how do I automate signing,” you’re asking “how do I automate signing safely.”

You’ll also notice that teams often run parallel stacks depending on tool constraints. For example, an adjacent pattern you may already be maintaining is an Airtable → Microsoft Excel → Dropbox → Dropbox Sign document signing flow, for organizations that standardized on Dropbox Sign and spreadsheet-driven data entry. Likewise, some teams prototype an Airtable → Microsoft Excel → Dropbox → PandaDoc flow when Excel is still the finance-native source for pricing tables but PandaDoc handles templating and routing.

If you manage multiple stacks like that, the goal is not to “pick one forever”—it’s to reuse the same control principles: validation, approval gates, idempotency, and storage governance.

Which compliance and audit-trail artifacts should you capture for legal defensibility?

There are 6 core audit artifacts you should capture (signed PDF, completion certificate, timestamps, signer identity evidence, approval decision record, and storage chain), based on what auditors and Legal typically need to reconstruct intent and execution.

Specifically, you’re building a “proof bundle” that answers: who approved, who signed, what was signed, and where it lives.

Capture these artifacts consistently:

- Signed PDF (Box final file)

- Completion certificate / audit summary (stored with the signed PDF)

- Signing timestamps (written back to Airtable)

- Signer identity evidence (as supported by the signing tool)

- Approval decision narrative (Confluence page + approver identity + date)

- Chain of custody links (Airtable links to Confluence, PandaDoc ID, Box file URL)

A practical rule: if someone can’t reconstruct the full story from the Airtable record alone, your “system of record” isn’t complete yet.
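That rule can be turned into a completeness check that runs before a record is archived. The field names below are assumptions; map each artifact to whichever Airtable fields actually hold its link or value in your base.

```python
# Hypothetical Airtable field names grouped by the artifact they prove.
PROOF_BUNDLE = {
    "signed PDF": ["box_file_url"],
    "completion certificate": ["certificate_url"],
    "signing timestamps": ["signed_at"],
    "signer identity evidence": ["signer_evidence"],
    "approval decision record": ["confluence_page_url", "approved_by", "approved_at"],
    "storage chain": ["pandadoc_document_id", "box_folder_url"],
}

def missing_artifacts(record):
    """List the artifacts whose fields are empty on this record."""
    return [
        name
        for name, fields in PROOF_BUNDLE.items()
        if not all(record.get(field) for field in fields)
    ]
```

An empty result means the record alone is enough to locate every piece of the proof bundle; a non-empty one tells you exactly which link is still missing.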

How do multi-signer workflows (ordered signing, CCs, internal countersign) change your automation design?

Single-signer flows win in speed, ordered multi-signer flows are best for governance, and internal countersign is optimal for high-risk agreements—based on how much control you need over sequencing and internal accountability.

However, multi-signer routing changes your data model first, before it changes your automation.

What must change in Airtable:

- A Signers table (or repeated signer fields) with:

  - signer role (customer, legal, finance, internal approver)

  - email, name, order

  - conditional rules (e.g., countersign only if contract value > threshold)

What must change in automation:

- The send step becomes “build routing,” not just “send”

- Status transitions may add intermediate stages (e.g., “Customer Signed → Waiting Internal Countersign”)

- Reminders and escalations must be role-aware (don’t chase the wrong signer)
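The “build routing” step might look like the sketch below. The signer dictionaries, the `internal_countersigner` role name, and the output keys are all assumptions; the result is a plain ordered list that you would then map onto your signing tool's recipient format.

```python
def build_routing(signers, contract_value, countersign_threshold):
    """Return the ordered recipient list for one document."""
    # External and other internal signers are always included.
    routing = [s for s in signers if s["role"] != "internal_countersigner"]
    # The countersigner joins only when the contract value exceeds the threshold.
    if contract_value > countersign_threshold:
        routing += [s for s in signers if s["role"] == "internal_countersigner"]
    routing = sorted(routing, key=lambda s: s["order"])
    # Renumber so the signing order has no gaps when someone is left out.
    return [
        {"email": s["email"], "role": s["role"], "signing_order": i}
        for i, s in enumerate(routing, start=1)
    ]
```

Keeping the conditional rule in one function means the threshold lives in a single place, and the send step only ever receives a finished list.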

What’s the best way to handle rate limits, retries, and duplicate events without creating duplicate contracts?

The best method is an idempotent execution model with 3 controls—event deduplication, document ID locking, and staged retries—so your workflow can retry safely without generating extra documents or sending multiple signature requests.

Then, when a run fails, you re-run the stage, not the entire pipeline.

Use this safety blueprint:

- Deduplicate triggers: only allow a stage to run if the record is in the correct state and a “stage completed” flag is false.

- Lock using IDs: once the PandaDoc document ID exists, never create a second doc unless you intentionally increment a version field.

- Retry only the failing action: if Box upload fails, retry Box upload; do not regenerate the PandaDoc doc.

A helpful operational pattern is a Dead-Letter Queue table (in Airtable):

- Record ID

- Failed stage

- Error message

- Retry count

- Owner

- Resolution notes

That turns firefighting into a process.
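Put together, the three controls and the dead-letter table fit in one small stage runner. This is a sketch under assumptions: `completed_stages` and `retry_counts` are hypothetical fields kept on the record, `action` is whatever callable performs the stage, and `dead_letters` is an in-memory list standing in for the Airtable table.

```python
def run_stage(record, stage, action, dead_letters, max_retries=3):
    """Run one stage at most once; park it in the dead-letter list on repeated failure."""
    done = record.setdefault("completed_stages", set())
    if stage in done:
        return "skipped"  # duplicate event: this stage already succeeded
    retries = record.setdefault("retry_counts", {})
    try:
        action(record)
    except Exception as exc:
        retries[stage] = retries.get(stage, 0) + 1
        if retries[stage] >= max_retries:
            dead_letters.append({
                "record_id": record["id"],
                "failed_stage": stage,
                "error_message": str(exc),
                "retry_count": retries[stage],
            })
            return "dead-lettered"
        return "will-retry"
    done.add(stage)  # mark complete only after the action succeeded
    return "done"
```

A failed Box upload therefore retries only the Box upload: the earlier stages are already in `completed_stages`, so re-running the pipeline skips them rather than regenerating the document.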

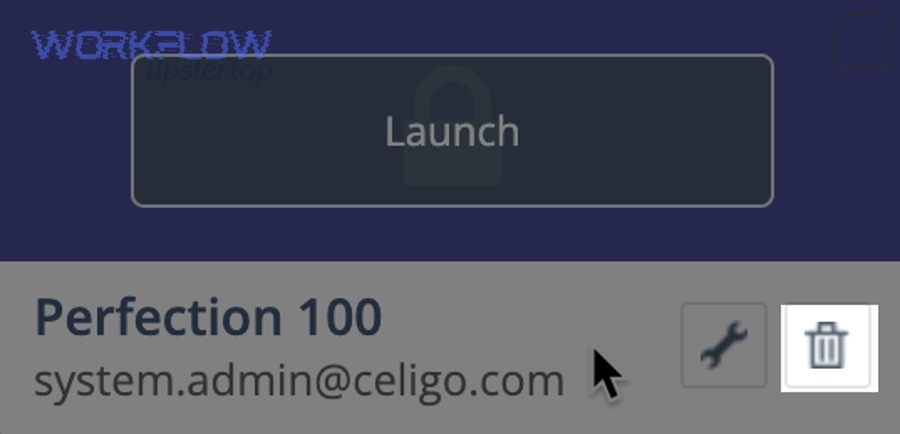

When should you replace parts of the workflow with native integrations instead of an all-in-one automation tool?

Native integrations win for stable, single-purpose links, orchestration tools are best for multi-step conditional pipelines, and a hybrid architecture is optimal for teams that need both governance and maintainability.

More importantly, you should replace only the “low-risk plumbing,” not the workflow brain.

A realistic hybrid for this article’s workflow:

- Keep Airtable ↔ Box attachments native where possible (simple, stable, low logic) (airtable.com)

- Keep approval gating and signing orchestration in your automation tool (this is where conditions, retries, and state management live)

- Keep audit artifacts stored and linked in Airtable/Box/Confluence regardless of which connector moves the data

If you document these decisions and standardize your stage model, you can scale your automation workflows across departments without losing control. And if you publish your internal playbooks, you can even centralize them under a knowledge hub like WorkflowTipster.top so teams reuse patterns instead of reinventing them.