Slack timeouts and slow runs are fixable when you treat them as an engineering and operations problem: measure the slow path, acknowledge requests quickly, and push long work into the right execution model—so users stop seeing timeouts and your runs finish consistently.

Next, you’ll learn what “timeout” and “latency” mean in real Slack workflows, because the fix depends on where the clock is ticking: the acknowledgement window, the downstream API calls, or the infrastructure your app depends on.

Then, you’ll follow a diagnostic checklist that separates app logic issues from Slack constraints like rate limits and transient incidents, so you can stop guessing and start collecting the evidence that makes the fix obvious.

Finally, once the fundamentals are stable, we’ll expand into the edge cases that keep teams stuck in endless Slack troubleshooting, even after the “basic” fixes are in place.

What are Slack “timeouts” and “latency” in workflow runs?

Slack “timeouts” and “latency” in workflow runs are execution delays that either exceed a required response window (timeout) or degrade user experience without failing (latency), typically caused by slow handlers, slow dependencies, or constrained API throughput.

To better understand why slow runs turn into visible failures, you need to map each symptom to the exact place time is being measured.

A timeout is a deadline problem: Slack expects a timely acknowledgement for certain interactions, and when your app misses that deadline, Slack shows a timeout message even if your code keeps running. A latency problem is a speed problem: the run eventually completes, but it completes too slowly to feel reliable—especially when users are waiting in-channel.

In practice, the user experience differs sharply:

- Timeout experience: the user sees an error or no response, assumes the command failed, and often tries again (which can create duplicate actions).

- Latency experience: the user sees a delayed message, delayed step completion, or a workflow that feels “stuck,” and confidence drops even if nothing errors.

That distinction matters because the solution isn’t “make everything faster” first—it’s “respond on time” first, then “make the rest fast enough.”

What does a “timeout” mean in the request lifecycle?

A “timeout” is a forced failure state where the platform does not receive a required acknowledgement within a fixed time window, even if your handler continues processing in the background.

Specifically, when your handler misses the acknowledgement window, the user-facing interaction fails, so the same slow code path starts producing retries, re-clicks, and repeated requests.

Most Slack timeout pain starts from a simple mismatch: users trigger an interaction expecting an immediate reply, but the handler does heavyweight work (database queries, exports, enrichment calls) before acknowledging.

To prevent that mismatch, treat the request lifecycle like a three-phase contract:

- Phase 1: Acknowledge immediately (tell Slack “I got it”).

- Phase 2: Do the heavy work (compute, call external services, transform data).

- Phase 3: Respond or update (post a message, update a view, complete a step).

When you follow that contract, timeouts stop being mysterious: they become a clear “you did too much before the ack” signal.

Evidence: According to the Slack Developer Docs, your app must acknowledge slash command requests within 3 seconds, otherwise the user will see a timeout error.

What does “latency” mean, and when is it a problem vs normal?

Latency is the total time from user action to the moment the user sees the intended outcome, including your compute time, network time, and any dependency waiting.

More specifically, latency becomes a problem when it pushes users into “repeat behavior” (re-trying commands, clicking again, or abandoning the flow), because the system no longer feels dependable.

A useful way to think about latency is to split it into three layers:

- Handler latency: time inside your code before it hands off work or produces an output

- Dependency latency: time waiting on databases, third-party APIs, queues, and file storage

- Delivery latency: time to deliver the result back to the user (posting, updating, or completing workflow steps)

This layering helps you avoid the most common trap: optimizing the wrong layer. For example, shaving 200ms off your code doesn’t help if a dependency adds 3 seconds of delay, and your users still experience “slow runs.”

From a workflow reliability perspective, prioritize in this order:

- Don’t miss acknowledgement deadlines (prevent timeouts).

- Reduce long-tail latency (your slowest 5% or 1% of runs).

- Prevent perceived slowness (use progress messages, step updates, and clear completion signals).
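To make “long-tail latency” concrete, here is a minimal sketch that computes the median, p95, and p99 of a set of run durations. The function name `tail_latency` and the sample numbers are illustrative assumptions, not data from a real workspace.

```python
import statistics


def tail_latency(samples_ms):
    """Return median, p95 and p99 for a list of run durations in milliseconds."""
    # quantiles(n=100) returns 99 cut points; index 94 is p95 and index 98 is p99.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {
        "p50": statistics.median(samples_ms),
        "p95": cuts[94],
        "p99": cuts[98],
    }


# Hypothetical sample: mostly fast runs with a slow tail.
runs = [120] * 90 + [900] * 8 + [4000] * 2
print(tail_latency(runs))
```

In a sample like this the median looks healthy while the p99 is more than thirty times slower, which is why averages tend to hide the runs users actually complain about.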

Evidence: According to a study by the University of Pittsburgh (with co-authors from Clemson University and the College of William & Mary) published in 2004, user attitudes and behavioral intentions changed measurably as delay increased across 0–12 seconds in an experiment with 196 participants.

Are your Slack slow runs caused by app logic rather than Slack itself?

Yes—Slack slow runs are very often caused by your app logic rather than Slack itself for three common reasons: your handler does too much before acknowledging, your dependencies block the critical path, and your code retries or loops in ways that amplify delay.

Next, you’ll narrow it down with a set of “yes/no” checks that make the root cause visible in minutes rather than days.

Even when the root cause is inside your app, it usually shows up in Slack first—because Slack is the user interface layer. That’s why teams blame Slack when the problem is really “a slow database call” or “a blocking request” or “a retry storm.”

Here’s the fastest way to avoid misdiagnosis: identify what’s slow before you try to fix it.

Is your handler doing long work before acknowledging the request?

Yes—if your handler performs long work before it acknowledges the request, it will frequently produce timeouts, duplicate triggers, and user re-tries.

To begin, focus on the simplest measurable truth: how much time passes from “request received” to “ack sent.”

If that time is even close to the acknowledgement window, you’re operating on a knife edge. The fix is rarely complicated—it’s architectural discipline:

- Acknowledge first: respond immediately and defer heavy work

- Offload heavy tasks: move slow steps into background jobs or asynchronous workflows

- Return progress: tell users what’s happening so they don’t re-run the command

A concrete example: if you parse a large payload, normalize fields, call two APIs, and then write to a database before acknowledging, your handler might work perfectly in staging but fail in production under real load.

This is also where duplicate records usually begin: users see no response, run the command again, and your system performs the same create operation twice because it isn’t idempotent.
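One way to measure “request received” to “ack sent” is a small timer object created at the top of the handler. This is a sketch under assumptions: `AckTimer`, the “knife-edge” label, and the 50% warning threshold are hypothetical choices; the 3-second budget is the slash command window cited earlier.

```python
import time

ACK_BUDGET_SECONDS = 3.0  # slash command acknowledgement window cited above
WARN_FRACTION = 0.5       # assumed threshold for "too close to the deadline"


class AckTimer:
    """Tracks the time between receiving a request and acknowledging it."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self.received_at = clock()
        self.acked_at = None

    def mark_acked(self):
        self.acked_at = self._clock()

    @property
    def ack_seconds(self):
        if self.acked_at is None:
            raise RuntimeError("request has not been acknowledged yet")
        return self.acked_at - self.received_at

    def verdict(self):
        elapsed = self.ack_seconds
        if elapsed >= ACK_BUDGET_SECONDS:
            return "acked-late"
        if elapsed >= ACK_BUDGET_SECONDS * WARN_FRACTION:
            return "knife-edge"
        return "acked-on-time"
```

Create the timer as the first statement of the handler, call `mark_acked()` right after the acknowledgement is sent, and log `verdict()` with the request so late acks can be counted rather than guessed at.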

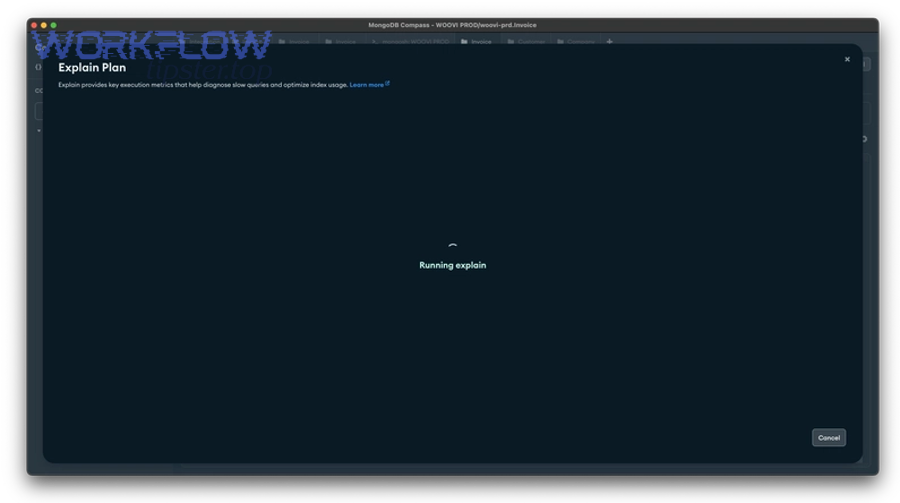

Is an external dependency the bottleneck (DB/API/queue)?

Yes—external dependencies are often the true bottleneck because they introduce variable latency, timeouts, and backpressure that your handler inherits.

Then, isolate dependency latency by measuring each dependency call separately, instead of measuring only “total handler time.”

Dependencies tend to cause slow runs in recognizable ways:

- Database queries: slow joins, missing indexes, connection pool exhaustion

- Third-party APIs: throttling, inconsistent response times, transient failures

- Queues: backlog growth, worker starvation, retry pileups

- File operations: large uploads/downloads, slow encryption, large parsing workloads

When dependencies are the bottleneck, “speeding up your code” is the wrong fix. Your best levers are:

- Caching: avoid repeated calls for the same data

- Batching: combine many small calls into fewer calls

- Timeout discipline: set client timeouts so one slow call doesn’t block everything

- Bulkheads: limit concurrency per dependency to prevent cascading failure

A simple operational rule helps: if dependency latency varies widely (fast most of the time, slow sometimes), your reliability work should target the slow tail—because that’s what users experience as random “slow runs.”
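Timeout discipline and bulkheads can be combined in a small wrapper. The sketch below assumes a thread-based app; `Bulkhead`, `slow_lookup`, and the fallback value are hypothetical names used only for illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout


class Bulkhead:
    """Caps concurrent calls to one dependency and bounds how long callers wait."""

    def __init__(self, max_concurrent, timeout_seconds):
        # The pool size is the bulkhead: extra calls queue instead of piling onto the dependency.
        self._pool = ThreadPoolExecutor(max_workers=max_concurrent)
        self._timeout = timeout_seconds

    def call(self, fn, *args, fallback=None):
        future = self._pool.submit(fn, *args)
        try:
            # The timeout covers queue wait plus execution time.
            return future.result(timeout=self._timeout)
        except FutureTimeout:
            # cancel() only helps if the call is still queued; a running call
            # keeps its worker busy until fn returns on its own.
            future.cancel()
            return fallback


def slow_lookup():
    """Hypothetical dependency call that is slower than the caller can tolerate."""
    time.sleep(0.3)
    return "fresh data"


profile_api = Bulkhead(max_concurrent=2, timeout_seconds=0.05)
print(profile_api.call(slow_lookup, fallback="cached data"))
```

Because the caller only stops waiting, the dependency client should still carry its own timeout so the worker thread is eventually released.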

What are the most common causes of Slack timeouts and slow workflow runs?

There are five main types of causes of Slack timeouts and slow workflow runs: request-handling bottlenecks, API constraints and rate limits, infrastructure performance limits, network/enterprise routing issues, and platform incidents or degraded service, grouped by where the delay originates.

Below, you’ll classify your issue into one of these buckets so every fix you apply is tied to a real cause.

This grouping matters because teams often treat everything as a “timeout bug,” when the real issue is “rate limiting,” “dependency slowness,” or “network constraints.”

Which problems come from request handling (synchronous work, deadlocks, concurrency)?

There are four common request-handling problems that trigger timeouts and slow runs: synchronous heavy work, concurrency contention, deadlocks/await mistakes, and cold-start or resource pressure, grouped by how they block your critical path.

More importantly, these problems are fully within your control—so they’re usually the highest ROI fixes.

- Synchronous heavy work
  - large payload transformations
  - file generation (CSV/PDF)
  - expensive templating or formatting

- Concurrency contention
  - connection pools exhausted
  - locks around shared resources
  - single-threaded bottlenecks

- Deadlocks / async misuse
  - awaiting long tasks on the request thread
  - blocking calls in async code
  - missing cancellation paths

- Cold starts / resource pressure
  - serverless cold starts
  - memory pressure causing GC pauses
  - CPU saturation under bursts

This is also where data formatting errors can sneak in: when you rush to respond, you might format blocks incorrectly or serialize invalid JSON under load. The best practice is to validate formatting in a fast path and push heavy enrichment into the async path.

Which problems come from Slack API limits and backpressure (rate limits, retries)?

There are three main Slack API backpressure problems: rate limiting (HTTP 429), bursty request patterns, and retry amplification, grouped by how Slack protects its APIs and how apps respond.

However, you can turn these problems into predictable behavior when you implement backoff and batching correctly.

- Rate limiting (429 + Retry-After): Slack tells you when to retry

- Bursty request patterns: many requests at once (e.g., fan-out to multiple channels/users)

- Retry amplification: your system retries aggressively, creating a self-made storm

If you ignore backpressure, you’ll see slow runs that look like timeouts—but the real problem is throughput. Your handler waits, retries, or stalls behind a rate limit wall.

Evidence: According to the Slack Developer Docs, when you exceed rate limits Slack returns HTTP 429 Too Many Requests and includes a Retry-After header telling you how long to wait before retrying.
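Honoring `Retry-After` can be sketched without committing to a specific HTTP client. Here `send` is a hypothetical callable that performs one API request and returns `(status_code, headers, body)`; the attempt cap and the one-second default are assumptions.

```python
import time


def call_with_retry_after(send, max_attempts=3, sleep=time.sleep):
    """Retry a rate-limited call, waiting as long as the server asks.

    `send` is a hypothetical callable returning (status_code, headers, body).
    """
    for attempt in range(1, max_attempts + 1):
        status, headers, body = send()
        if status != 429:
            return status, body
        if attempt == max_attempts:
            break  # retry budget spent: stop instead of creating a storm
        # Fall back to one second if the header is missing (an assumption).
        sleep(float(headers.get("Retry-After", "1")))
    return 429, None
```

The cap on attempts matters as much as the wait: without it, a sustained rate limit turns every caller into a loop that keeps the limit saturated.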

Which problems come from network and enterprise environments (proxy, firewall, DNS)?

There are three major network/enterprise causes of slow runs: proxy/TLS inspection delays, firewall allowlisting and routing issues, and DNS latency or resolution failures, grouped by where packets slow down or fail.

In addition, these causes tend to be intermittent, which makes them feel “random” unless you measure them.

- Proxy/TLS inspection: adds handshake overhead and sometimes breaks long-lived connections

- Firewall and allowlists: block or slow outbound calls, especially across regions

- DNS issues: slow resolution or intermittent lookup failures that delay every downstream call

When these issues exist, your app might be fast in a developer environment but slow in production—because production routes through enterprise controls and egress gateways.

How do you troubleshoot Slack timeouts step-by-step without guessing?

You can troubleshoot Slack timeouts step-by-step without guessing by using a 6-step diagnostic workflow—reproduce, instrument, timestamp, isolate, confirm, and verify—so you can pinpoint whether the delay comes from handler time, dependency time, network conditions, or API backpressure.

Let’s explore the workflow in order, because every step produces evidence that makes the next step easier.

Before you change code, change your visibility. Troubleshooting without visibility is where teams get stuck in endless Slack Troubleshooting loops—fixing symptoms, not causes.

What evidence should you collect first (logs, timestamps, correlation IDs)?

There are five core evidence types you should collect first: timestamps, correlation IDs, structured logs, dependency timings, and outcome tags, based on the criterion “does this data let me reconstruct the slow run end-to-end?”

Next, capture them consistently so one incident teaches you something permanent.

- Timestamps at boundaries
  - request received
  - acknowledgement sent
  - dependency start/end
  - final response posted

- Correlation identifiers
  - request ID (generated by your app)
  - user ID / channel ID (careful with privacy)
  - workflow run ID (if available)

- Structured logs
  - JSON logs that can be searched and aggregated
  - include severity and error categories

- Dependency timing metrics
  - DB query time
  - external API time
  - queue wait time

- Outcome tags
  - “acked-on-time” vs “acked-late”
  - “rate-limited” vs “dependency-timeout”
  - “user-retried” indicators

This evidence also prevents secondary problems like “slack duplicate records created,” because you can detect duplicate triggers and correlate them with missed acknowledgements.
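The evidence types above can be captured with one small logging helper. This is a sketch, assuming JSON lines written to stdout; `log_event`, the stage names, and the `/export` command are hypothetical.

```python
import json
import time
import uuid


def log_event(request_id, stage, **fields):
    """Emit one JSON log line carrying a timestamp and a correlation ID."""
    record = {"ts": time.time(), "request_id": request_id, "stage": stage, **fields}
    line = json.dumps(record, sort_keys=True)
    print(line)
    return line


# One request traced across its boundaries with the same correlation ID.
request_id = str(uuid.uuid4())
log_event(request_id, "request_received", command="/export")
log_event(request_id, "ack_sent", outcome="acked-on-time")
log_event(request_id, "dependency_end", dependency="db", duration_ms=42)
log_event(request_id, "final_response_posted", outcome="ok")
```

Searching the logs for one `request_id` then reconstructs the whole run, and two different request IDs from the same user within seconds is a strong hint of a user retry.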

How do you isolate the slow segment (compute vs dependency vs network)?

Suspect compute when handler CPU time dominates, suspect a dependency when a single DB/API call dominates, and investigate the network when multiple services slow down simultaneously or handshake time spikes.

Meanwhile, you’ll isolate the segment by comparing measured timings rather than debating hypotheses.

Use a simple isolation approach:

- If handler time is high before any dependency calls: it’s compute, parsing, formatting, or locking

- If one dependency call dominates total time: it’s dependency performance or availability

- If multiple dependencies slow together and handshake/DNS time rises: it’s network/egress/proxy

A small table helps teams align quickly. The table below maps symptoms to the most probable slow segment and the best first measurement:

| Symptom | Most likely segment | First measurement to confirm |
|---|---|---|
| Ack is late, dependency calls haven’t started | Compute / handler path | “request received → ack sent” time |
| Ack is fast, but result arrives late | Dependency / queue | timing per call + queue wait time |
| Multiple services slow at once | Network / enterprise egress | DNS time, TLS handshake, packet loss |
| Errors appear as 429 then slowdown | API backpressure | count 429s + Retry-After adherence |

To illustrate, if your logs show ack is within 200ms but the final message arrives 15 seconds later, your timeout issue may be solved—but your latency problem remains, likely in dependencies or background processing.
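The isolation rules can also be encoded as a rough first-pass classifier over measured timings. Everything here is an assumption for illustration: the function name, the labels, and especially the thresholds, which you would tune against your own baseline.

```python
def classify_slow_segment(ack_ms, total_ms, dependency_ms, handshake_ms=0.0,
                          rate_limited=0, slow_dep_ms=1000.0,
                          slow_handshake_ms=300.0):
    """Map measured timings for one run to the most likely slow segment.

    dependency_ms maps a dependency name to the time spent waiting on it.
    All thresholds are illustrative defaults, not recommended values.
    """
    if rate_limited > 0:
        return "api-backpressure"
    slow_deps = [name for name, ms in dependency_ms.items() if ms >= slow_dep_ms]
    if len(slow_deps) >= 2 and handshake_ms >= slow_handshake_ms:
        return "network"
    if dependency_ms and max(dependency_ms.values()) >= 0.5 * total_ms:
        return "dependency"
    if ack_ms >= 0.5 * total_ms:
        return "compute"
    return "unclear"


# The run described above: ack in 200 ms, final message about 15 seconds later.
print(classify_slow_segment(
    ack_ms=200, total_ms=15000,
    dependency_ms={"db": 300, "export_api": 12000},
))
```

A classifier like this does not replace reading the traces; it just sorts incidents into buckets so the first measurement you take is the right one.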

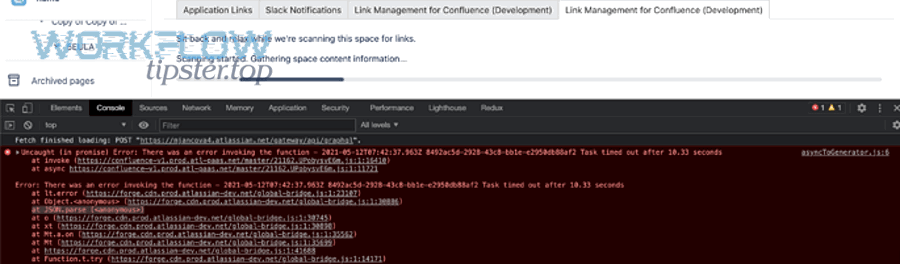

How do you confirm it’s not a platform incident or degraded region?

You can confirm it’s not a platform incident by checking three signals: Slack status for active incidents, your error-rate correlation across time, and whether delays affect multiple unrelated code paths at once.

Besides, this step protects you from wasting hours “fixing” an incident that resolves on its own.

Confirm with these checks:

- Status signal: is there an acknowledged incident for messaging, workflows, API, or connectivity?

- Correlation signal: do spikes align with a time window, then disappear without deploys?

- Breadth signal: do multiple features slow at once (commands, events, message posting), even those with different dependencies?

If these signals are positive, shift your posture: communicate impact, reduce load, and avoid risky deploys. If they’re negative, you’re back to app-side troubleshooting with confidence.

What fixes reliably reduce timeouts and latency in Slack workflows?

There are four main fix types that reliably reduce timeouts and latency in Slack workflows: fast acknowledgement patterns, asynchronous execution, safe retries with idempotency, and performance tuning (batching/caching/timeouts), grouped by what they improve—deadline reliability vs throughput vs speed.

More specifically, each fix maps to a cause bucket, so you can apply the right fix without overengineering.

This is the section where teams usually move from reactive to durable: you stop chasing individual timeouts and instead build a system that stays fast under real load.

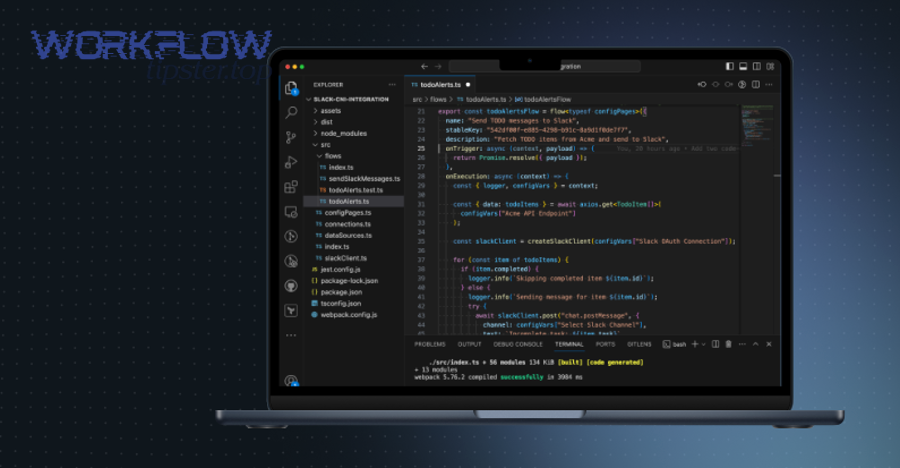

How do you “ack fast” and move the heavy work async?

“Ack fast + async work” is a two-phase execution pattern where you immediately acknowledge the user action, then complete long processing in a background worker or workflow step, and finally post results back to Slack, designed to eliminate timeout errors while preserving user feedback.

Next, implement it as a repeatable template, not a one-off trick.

A reliable template looks like this:

- Receive interaction (command/button/event)

- Validate minimal inputs (fast checks only)

- Acknowledge immediately (avoid deadline failure)

- Enqueue background job (include correlation ID + user context)

- Post progress (optional: “Working on it…”)

- Post result (final output or link)

- Handle failure (explicit error message, not silence)

This pattern directly reduces two common problems:

- Timeouts: because you meet acknowledgement deadlines

- Duplicate runs: because users get a signal that work started, so they don’t retry

It also reduces error chains like “slack duplicate records created,” because you can treat the job as idempotent and deduplicate by request ID.
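The template above can be sketched with an in-process queue and a worker thread. This is a simplified stand-in, not a production design: `handle_command`, `run_heavy_work`, and `post_to_slack` are hypothetical, the returned dict represents the immediate acknowledgement your framework would send, and a real deployment would likely use a durable queue.

```python
import queue
import threading
import uuid

jobs = queue.Queue()
posted = []  # stands in for messages sent back to Slack


def post_to_slack(channel, text):
    """Hypothetical delivery function; a real app would call the Slack API here."""
    posted.append((channel, text))


def run_heavy_work(job):
    """Hypothetical slow step (export, enrichment, large transformation)."""
    return f"Report ready: {job['text']}"


def handle_command(payload):
    """Fast path: validate, enqueue, and return the acknowledgement right away."""
    if not payload.get("text"):
        return {"ack": True, "text": "Usage: /export <report name>"}
    request_id = str(uuid.uuid4())
    jobs.put({"request_id": request_id, "channel": payload["channel"],
              "text": payload["text"]})
    return {"ack": True, "text": f"Working on it (request {request_id[:8]})"}


def worker():
    """Slow path: runs outside the acknowledgement window."""
    while True:
        job = jobs.get()
        try:
            if job is None:  # sentinel used to stop the worker
                return
            post_to_slack(job["channel"], run_heavy_work(job))
        except Exception as exc:
            # Explicit failure message instead of silence.
            post_to_slack(job["channel"],
                          f"Request {job['request_id'][:8]} failed: {exc}")
        finally:
            jobs.task_done()
```

Start `worker` in a background thread at application startup; the handler then never waits on `run_heavy_work`, so its duration no longer counts against the acknowledgement window.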

How do you implement safe retries and idempotency to prevent duplicate work?

Safe retries and idempotency are reliability techniques that allow your system to repeat operations without changing the outcome, typically by using an idempotency key, deduplication storage, and controlled backoff—so retried requests don’t create duplicate records or repeated side effects.

In addition, these techniques turn inevitable retries into predictable behavior.

Implement them in three layers:

- Idempotency key layer
  - generate a key from (workspace + user + command + timestamp bucket)
  - store “operation started/completed” state keyed by that id

- Deduplication layer
  - if the key already exists, return the prior result or status
  - avoid running the same write operation twice

- Backoff layer
  - exponential backoff with jitter
  - retry budgets (cap retries so failures don’t become storms)

This is the cleanest fix for “slack duplicate records created,” because duplicates stop being a mystery and become a prevented condition.
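The three layers can be sketched in a few lines each. The names (`idempotency_key`, `DedupStore`, `backoff_delays`), the 60-second bucket, and the in-memory dictionary are all assumptions; a multi-instance app would need a shared store with an atomic set-if-absent operation instead.

```python
import hashlib
import random


def idempotency_key(workspace, user, command, timestamp, bucket_seconds=60):
    """Derive a key from workspace + user + command + timestamp bucket."""
    bucket = int(timestamp // bucket_seconds)
    raw = f"{workspace}:{user}:{command}:{bucket}"
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


class DedupStore:
    """In-memory, single-threaded sketch of started/completed state per key."""

    def __init__(self):
        self._state = {}

    def run_once(self, key, operation):
        if key in self._state:
            # Duplicate trigger: return the prior status instead of re-running.
            return self._state[key]
        self._state[key] = {"status": "started", "result": None}
        result = operation()
        self._state[key] = {"status": "completed", "result": result}
        return self._state[key]


def backoff_delays(max_retries, base=0.5, cap=30.0, rng=random.random):
    """Exponential backoff with full jitter; max_retries is the retry budget."""
    return [rng() * min(cap, base * 2 ** attempt) for attempt in range(max_retries)]
```

One caveat with time buckets: two triggers a few seconds apart can straddle a bucket boundary and produce different keys, so where the platform supplies a stable identifier for the interaction, that is usually a better key component.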

Which performance optimizations matter most for slow runs (caching, batching, timeouts)?

There are three performance optimization categories that matter most for slow runs: caching to avoid repeated work, batching to reduce API overhead, and timeout/concurrency discipline to prevent tail-latency blowups, based on the criterion “does this reduce total work or reduce waiting?”

Especially under load, these optimizations reduce long-tail latency more than micro-optimizations.

- Caching (reduce repeated work)
  - cache expensive lookups (user profiles, channel metadata)
  - cache rendered templates when safe
  - cache normalization results for repetitive payloads

- Batching (reduce overhead)
  - combine Slack API calls when possible
  - aggregate writes/updates rather than sending many small ones
  - avoid fan-out patterns that trigger rate limiting

- Timeout + concurrency discipline (reduce tail latency)
  - set per-dependency timeouts
  - cap concurrency per dependency
  - fail fast and report clearly when a dependency is down

This is also where you reduce “slack data formatting errors”: validate payload structure in a fast stage, keep formatting templates tested, and avoid dynamic block construction without guards.
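For the caching category, a time-bounded cache is often enough for lookups such as user profiles or channel metadata. This is a minimal sketch: `TTLCache`, the loader callable, and the five-minute TTL in the usage comment are assumptions, and it does not bound memory or handle concurrent access.

```python
import time


class TTLCache:
    """Caches loader results per key for a fixed number of seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: skip the expensive call
        value = loader(key)
        self._entries[key] = (now + self._ttl, value)
        return value


# Usage idea: profiles = TTLCache(ttl_seconds=300)
# profile = profiles.get_or_load(user_id, fetch_profile)  # fetch_profile is hypothetical
```

The TTL is the trade-off knob: a longer value removes more repeated calls but lets stale data live longer, so choose it per data type rather than globally.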

Which approach is better for your case: synchronous responses vs asynchronous workflows?

Synchronous responses win in simplicity for short tasks, asynchronous jobs are best for long or dependency-heavy work, and workflow-style step execution is optimal for multi-step processes that require visibility, retries, and auditability.

However, the right choice depends on user expectation, time limits, and the cost of failure.

This decision is the difference between “it works in staging” and “it works in production.” If your interaction is long-running, no amount of tuning will permanently save a synchronous design from timeout pressure.

When is synchronous acceptable, and when will it keep timing out?

Synchronous is best when outcomes are fast and deterministic, asynchronous wins when outcomes are slow or variable, and hybrid (ack fast + async) is optimal when users need immediate feedback but work takes time.

On the other hand, trying to keep a long task synchronous usually creates a cycle of timeouts, retries, and confusion.

Use these decision rules:

- Choose synchronous when:
  - work completes quickly under realistic load
  - dependencies are stable and fast
  - user experience benefits from immediate output

- Choose async when:
  - work is slow (exports, large transformations, multi-API enrichment)
  - dependencies are variable or rate limited
  - you need retries and deduplication

- Choose hybrid when:
  - users need an immediate acknowledgement
  - final output can arrive later
  - you want to prevent re-tries and duplicates

If you’re experiencing “slack duplicate records created,” that’s a strong signal that users are re-trying due to insufficient feedback—so hybrid or async is usually the safer architecture.

How do workflow steps compare to event-driven handling for long jobs?

Workflow steps win for visibility and structured retries, event-driven handling is best for flexibility and integration breadth, and queued job systems are optimal for heavy processing with controlled throughput.

More importantly, the best long-term design often combines them.

Compare them by three criteria:

- Visibility: can you tell where the run is stuck?

- Reliability: can you retry safely without duplicates?

- Throughput: can you handle bursts without rate-limit collapse?

A practical hybrid looks like this:

- Use events or commands to capture user intent

- Use queues to execute heavy work with controlled concurrency

- Use workflow steps when you need step-by-step status, approvals, or auditing

- Post results in a consistent message format so users know what “done” looks like

That consistency is the hidden performance win: clear, predictable responses reduce user retries, reduce duplicate actions, and reduce the perception of slowness.

What edge cases still cause Slack slowdowns even after you fix the basics?

There are four edge-case categories that still cause Slack slowdowns after you fix the basics: connection mode differences (WebSocket vs HTTP), enterprise network controls, client-side performance limits, and cross-workspace variability, grouped by whether slowness originates outside your core handler logic.

In addition, these edge cases are where mature Slack Troubleshooting practices make the biggest difference.

These edge cases don’t change the fundamental principles, but they change the failure modes. The contrasts matter here (local vs enterprise, client vs server, online vs constrained, WebSocket vs HTTP) because each one points you to a different diagnostic approach.

How does Socket Mode (WebSocket) compare to HTTP endpoints for reliability and latency?

HTTP endpoints win for predictable request lifecycles, WebSocket-based Socket Mode is best for environments where inbound HTTP is difficult, and hybrid routing is optimal when you need resilience across network constraints.

Specifically, the latency trade-off depends on connection stability and the behavior of enterprise proxies.

Socket Mode can be slower or more failure-prone when:

- WebSocket connections are interrupted or aggressively inspected

- reconnect storms happen during network blips

- long-lived connections are throttled

HTTP can be slower when:

- cold starts dominate request handling

- TLS handshake overhead is high per request

- you lack keep-alive optimizations

The fix isn’t ideological—it’s evidence-driven. Measure reconnect rates, message delivery times, and failure modes, then choose the mode that stays stable in your real environment.

Can enterprise proxies/TLS inspection make Slack feel fast locally but slow in production?

Yes—enterprise proxies and TLS inspection can make Slack feel fast locally but slow in production for three reasons: they add handshake overhead, they alter routing paths, and they sometimes throttle or break long-lived connections.

Moreover, this is one of the most common “it works on my machine” causes of slow runs.

If you suspect this, collect the right evidence:

- compare DNS resolution time in prod vs local

- measure TLS handshake duration

- check whether traffic routes through inspection gateways

- confirm allowlists for required endpoints

The key is to test in the same network conditions your users experience. Otherwise, you’ll keep optimizing app code while the real bottleneck sits in the network layer.
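DNS resolution time and TLS handshake duration can be measured with the standard library alone. This is a rough sketch: the function names are hypothetical, the host you probe is up to you, and a single sample says little, so run it several times from both the local machine and the production network before comparing.

```python
import socket
import ssl
import time


def dns_lookup_ms(host, port=443):
    """Time one address lookup, in milliseconds."""
    start = time.perf_counter()
    socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    return (time.perf_counter() - start) * 1000


def tls_handshake_ms(host, port=443, timeout=5.0):
    """Time the TLS handshake only, after the TCP connection is established."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as raw:
        start = time.perf_counter()
        # wrap_socket performs the handshake before returning.
        with context.wrap_socket(raw, server_hostname=host):
            return (time.perf_counter() - start) * 1000


# Usage idea (requires outbound network access):
# print(dns_lookup_ms("slack.com"), tls_handshake_ms("slack.com"))
```

If the handshake is consistently slower from production, or it fails certificate verification there but not locally, that points toward an inspection gateway on the production path rather than your app code.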

Why can the Slack desktop app be slow even when the backend is fast?

The Slack desktop app can be slow even when the backend is fast because perceived speed depends on the client as well: backend optimization improves API responsiveness, but hardware acceleration problems or resource contention on the user’s machine can still cause UI lag.

Meanwhile, desktop slowness often looks like “Slack is slow,” even when your workflow and APIs are healthy.

Client-side slowness often comes from:

- CPU contention (many apps competing for resources)

- memory pressure

- corrupted caches

- graphics/hardware acceleration issues

- oversized message histories or heavy channels

If users report slowness while your telemetry looks healthy, treat it as a client performance track: reproduce on similar hardware, test hardware acceleration toggles, and verify whether the problem is universal or tied to specific machines.

How do multi-workspace or cross-org setups change performance expectations?

Multi-workspace or cross-org setups are distributed collaboration contexts where requests may traverse different permission boundaries, routing paths, and operational conditions, which can introduce variability in latency and perceived reliability across organizations.

Besides, this variability changes how you should set expectations and define “normal.”

In these setups:

- the same action can behave differently across workspaces

- permissions and scopes may require extra calls or fallback behavior

- messaging and workflow execution can vary based on org policies and network conditions

The best practice is to define performance targets per workspace tier and measure them separately. That approach turns “random slow runs” into a segmented performance model you can actually improve.

Evidence recap (kept intentionally minimal by domain):

- Slack acknowledgement timing and rate limiting behaviors are documented in Slack Developer Docs.

- User tolerance to delays and the measurable impact of response time on attitudes/intentions is supported by the 2004 experimental study by University of Pittsburgh authors (and co-authors from Clemson University and the College of William & Mary).